## Why

This is a small precursor to the larger permissions-migration work. Both the comparison stack in [#22401](https://github.com/openai/codex/pull/22401) / [#22402](https://github.com/openai/codex/pull/22402) and the alternate stack in [#22610](https://github.com/openai/codex/pull/22610) / [#22611](https://github.com/openai/codex/pull/22611) / [#22612](https://github.com/openai/codex/pull/22612) are easier to review if the terminology is already settled underneath them.

Because `:project_roots` and `:danger-no-sandbox` have not shipped as stable user-facing surface area, carrying them forward as aliases would just add more migration logic to the later stacks. This PR removes that ambiguity now so the follow-on work can rely on one spelling for each built-in concept.

## What Changed

- renamed the config-facing special filesystem key from `:project_roots` to `:workspace_roots`
- dropped unpublished `:project_roots` parsing support in `core/src/config/permissions.rs`, so new config only recognizes `:workspace_roots`
- renamed the built-in full-access permission profile id from `:danger-no-sandbox` to `:danger-full-access`
- dropped unpublished `:danger-no-sandbox` support entirely, including the old active-profile canonicalization path, and added explicit rejection coverage for the legacy id
- introduced shared built-in permission-profile id constants in `codex-rs/protocol/src/models.rs`
- updated `core`, `app-server`, and `tui` call sites that special-case built-in profiles to use the shared constants and canonical ids
- updated tests and the Linux sandbox README to use `:workspace_roots` / `:danger-full-access`
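The sketch below shows one way shared built-in id constants and explicit legacy rejection could fit together. The constant names, `parse_builtin_profile_id`, `parse_filesystem_key`, and the error enum are hypothetical. They are not the definitions in `codex-rs/protocol/src/models.rs` or `core/src/config/permissions.rs`. The sketch shows that the unpublished spellings are matched only to produce an error and are never canonicalized to the new ids.

```rust
/// Hypothetical shared constants for built-in ids (illustrative names).
pub const FULL_ACCESS_PROFILE_ID: &str = ":danger-full-access";
pub const WORKSPACE_ROOTS_KEY: &str = ":workspace_roots";

#[derive(Debug, PartialEq, Eq)]
pub enum BuiltinIdError {
    /// An unpublished spelling that is rejected rather than aliased.
    Legacy { found: String, use_instead: &'static str },
    Unknown(String),
}

#[derive(Debug, PartialEq, Eq)]
pub enum BuiltinProfile {
    FullAccess,
}

#[derive(Debug, PartialEq, Eq)]
pub enum FilesystemKey {
    WorkspaceRoots,
    Path(String),
}

pub fn parse_builtin_profile_id(id: &str) -> Result<BuiltinProfile, BuiltinIdError> {
    match id {
        FULL_ACCESS_PROFILE_ID => Ok(BuiltinProfile::FullAccess),
        // No canonicalization: the legacy id only produces an error.
        ":danger-no-sandbox" => Err(BuiltinIdError::Legacy {
            found: id.to_string(),
            use_instead: FULL_ACCESS_PROFILE_ID,
        }),
        other => Err(BuiltinIdError::Unknown(other.to_string())),
    }
}

pub fn parse_filesystem_key(key: &str) -> Result<FilesystemKey, BuiltinIdError> {
    match key {
        WORKSPACE_ROOTS_KEY => Ok(FilesystemKey::WorkspaceRoots),
        ":project_roots" => Err(BuiltinIdError::Legacy {
            found: key.to_string(),
            use_instead: WORKSPACE_ROOTS_KEY,
        }),
        // Assumption: any other ':'-prefixed key is an unknown special key.
        special if special.starts_with(':') => {
            Err(BuiltinIdError::Unknown(special.to_string()))
        }
        path => Ok(FilesystemKey::Path(path.to_string())),
    }
}
```

If the legacy ids were aliased instead, every later stack would need to carry that mapping. Rejecting them keeps one spelling per concept.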

## Verification

I focused verification on the three places this rename can regress: config parsing, active-profile identity surfaced back out of `core`, and user/server call sites that special-case built-in profiles.

Targeted checks:

- `config::tests::default_permissions_can_select_builtin_profile_without_permissions_table`
- `config::tests::default_permissions_read_only_applies_additional_writable_roots_as_modifications`
- `config::tests::default_permissions_can_select_builtin_full_access_profile`
- `config::tests::legacy_danger_no_sandbox_is_rejected`
- `workspace_root` filtered `codex-core` tests
- `request_processors::thread_processor::thread_processor_tests::thread_processor_behavior_tests::requested_permissions_trust_project_uses_permission_profile_intent`
- `suite::v2::turn_start::turn_start_rejects_invalid_permission_selection_before_starting_turn`
- `status::tests::status_snapshot_shows_auto_review_permissions`
- `status::tests::status_permissions_full_disk_managed_with_network_is_danger_full_access`
- `app_server_session::tests::embedded_turn_permissions_use_active_profile_selection`

## Summary

- Allow remote installed-plugin cache refresh to start whenever plugins are enabled.
- Allow remote installed-plugin bundle sync to start whenever plugins are enabled.
- Remove the extra local `remote_plugin_enabled` guard from those background sync paths.

## Context

Server-side installed plugin state and optional bundle URL behavior are owned by plugin-service `/public/plugins/installed`, so these local sync paths only need the overall plugin enablement gate.

## Test plan

- `just fmt`

- `cargo test -p codex-core-plugins`

## Why

Reapplies https://github.com/openai/codex/pull/22386, which was previously reverted.

Also introduces `remoteControl/enable` and `remoteControl/disable` app-server APIs to toggle remote control on or off at runtime for a given running app-server instance.

## What Changed

- Adds experimental v2 RPCs:
  - `remoteControl/enable`
  - `remoteControl/disable`
- Adds `RemoteControlRequestProcessor` and routes the new RPCs through it instead of `ConfigRequestProcessor`.
- Adds named `RemoteControlHandle::enable`, `disable`, and `status` methods.
- Makes `remoteControl/enable` return an error when the SQLite state DB is unavailable, while keeping enrollment/websocket failures as async status updates.
- Adds `AppServerRuntimeOptions.remote_control_enabled` and hidden `--remote-control` flags for `codex app-server` and `codex-app-server`.
- Updates managed daemon startup to use `codex app-server --remote-control --listen unix://`.
- Marks `Feature::RemoteControl` as removed and ignores `[features].remote_control`.
- Updates app-server README entries for the new remote-control methods.
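The split between synchronous errors and async status updates could look roughly like the sketch below. It assumes a simplified `RemoteControlHandle` whose `enable`, `disable`, and `status` methods mirror the named methods above. `RemoteControlStatus`, `StateDbUnavailable`, and `report_background_failure` are hypothetical. The real handle drives enrollment and the websocket on background tasks.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum RemoteControlStatus {
    Disabled,
    Connecting,
    Failed,
}

/// Hypothetical error for the one synchronous precondition.
#[derive(Debug, PartialEq, Eq)]
pub struct StateDbUnavailable;

pub struct RemoteControlHandle {
    state_db_available: bool,
    status: RemoteControlStatus,
}

impl RemoteControlHandle {
    pub fn new(state_db_available: bool) -> Self {
        Self { state_db_available, status: RemoteControlStatus::Disabled }
    }

    /// Fails immediately only when the state DB is missing. Enrollment and
    /// websocket failures are reported later through `status`.
    pub fn enable(&mut self) -> Result<RemoteControlStatus, StateDbUnavailable> {
        if !self.state_db_available {
            return Err(StateDbUnavailable);
        }
        // A real implementation would start background enrollment here.
        self.status = RemoteControlStatus::Connecting;
        Ok(self.status)
    }

    pub fn disable(&mut self) -> RemoteControlStatus {
        self.status = RemoteControlStatus::Disabled;
        self.status
    }

    pub fn status(&self) -> RemoteControlStatus {
        self.status
    }

    /// Hypothetical callback from the background task on an async failure.
    pub fn report_background_failure(&mut self) {
        if self.status != RemoteControlStatus::Disabled {
            self.status = RemoteControlStatus::Failed;
        }
    }
}
```

Returning an error only for the state DB precondition keeps `remoteControl/enable` predictable for callers. Failures that depend on the network remain observable through status.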

## Why

Users and support need a single command that captures the local Codex runtime, configuration, auth, terminal, network, and state shape without asking the user to know which diagnostic depth to choose first. `codex doctor` now runs the useful checks by default and makes the detailed human output the default because the command is usually run when someone already needs context.

The command also targets concrete support failure modes we have seen while iterating on the design:

- update-target mismatches like #21956, where the installed package manager target can differ from the running executable
- terminal and multiplexer issues that depend on `TERM`, tmux/zellij state, color handling, and TTY metadata
- provider-specific HTTP/WebSocket connectivity, including ChatGPT WebSocket handshakes and API-key/provider endpoint reachability
- local state/log SQLite integrity problems and large rollout directories
- feedback reports that need an attached, redacted diagnostic snapshot without asking the user to run a second command

## What Changed

- Adds `codex doctor` as a grouped CLI diagnostic report with default detailed output and `--summary` for the compact view.
- Adds stable report sections for Environment, Configuration, Updates, Connectivity, and Background Server, plus a top Notes block that promotes anomalies such as available updates, large rollout directories, optional MCP issues, and mixed auth signals.
- Adds runtime provenance, install consistency, bundled/system search readiness, terminal/multiplexer metadata, `config.toml` parse status, auth mode details, sandbox details, feature flag summaries, update cache/latest-version state, app-server daemon state, SQLite integrity checks, rollout statistics, and provider-aware network diagnostics.
- Adds ChatGPT WebSocket diagnostics that report the negotiated HTTP upgrade as `HTTP 101 Switching Protocols` and include timeout, DNS, auth, and provider context in detailed output.
- Makes reachability provider-aware: API-key OpenAI setups check the API endpoint, ChatGPT auth checks the ChatGPT path, and custom/AWS/local providers check configured HTTP endpoints when available.
- Adds structured, redacted JSON output where `checks` is keyed by check id and `details` is a key/value object for support tooling.
- Integrates doctor with feedback uploads by attaching a best-effort `codex-doctor-report.json` report and adding derived Sentry tags for overall status and failing/warning checks.
- Updates the TUI feedback consent copy so users can see that the doctor report is included when logs/diagnostics are uploaded.
- Updates the CLI bug issue template to ask reporters for `codex doctor --json` and render pasted reports as JSON.
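The sketch below shows the report model. It assumes a `Status` ladder of `ok`/`idle`/`warn`/`fail` and an overall status where any failure wins, followed by any warning. Check ids other than `runtime.provenance`, and the tag names returned by `report_tags`, are hypothetical. The real report also carries redaction and serialization, which are omitted here. Keying checks by id in a `BTreeMap` mirrors the JSON shape, where `checks` is an object keyed by check id.

```rust
use std::collections::BTreeMap;

#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
pub enum Status {
    Ok,
    Idle,
    Warn,
    Fail,
}

impl Status {
    pub fn as_str(self) -> &'static str {
        match self {
            Status::Ok => "ok",
            Status::Idle => "idle",
            Status::Warn => "warn",
            Status::Fail => "fail",
        }
    }
}

pub struct Check {
    pub category: &'static str,
    pub status: Status,
    pub summary: String,
    /// Flat key/value details, as in the JSON output.
    pub details: BTreeMap<String, String>,
}

impl Check {
    pub fn new(category: &'static str, status: Status, summary: &str) -> Self {
        Self { category, status, summary: summary.to_string(), details: BTreeMap::new() }
    }
}

/// Checks keyed by check id.
pub type Checks = BTreeMap<String, Check>;

/// Assumption: any failure makes the report fail, then any warning makes it warn.
/// `Idle` (e.g. an app-server that is not running) does not degrade it.
pub fn overall_status(checks: &Checks) -> Status {
    let any = |s: Status| checks.values().any(|c| c.status == s);
    if any(Status::Fail) {
        Status::Fail
    } else if any(Status::Warn) {
        Status::Warn
    } else {
        Status::Ok
    }
}

pub fn status_counts(checks: &Checks) -> BTreeMap<Status, usize> {
    let mut counts = BTreeMap::new();
    for check in checks.values() {
        *counts.entry(check.status).or_insert(0) += 1;
    }
    counts
}

/// Hypothetical tag names derived for a feedback upload.
pub fn report_tags(checks: &Checks) -> BTreeMap<&'static str, String> {
    let ids_with = |s: Status| -> String {
        checks
            .iter()
            .filter(|(_, c)| c.status == s)
            .map(|(id, _)| id.as_str())
            .collect::<Vec<_>>()
            .join(",")
    };
    let mut tags = BTreeMap::new();
    tags.insert("doctor.overall_status", overall_status(checks).as_str().to_string());
    tags.insert("doctor.failing_checks", ids_with(Status::Fail));
    tags.insert("doctor.warning_checks", ids_with(Status::Warn));
    tags
}
```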

## Example Output

The examples below are sanitized from local smoke runs with `--no-color` so the structure is reviewable in plain text.

### `codex doctor`

```text

Codex Doctor v0.0.0 · macos-aarch64

Notes

↑ updates 0.130.0 available (current 0.0.0, dismissed 0.128.0)

⚠ rollouts 1,526 active files · 2.53 GB on disk

⚠ mcp MCP configuration has optional issues

⚠ auth mixed auth signals: ChatGPT login plus API key env var; HTTP reachability uses API-key mode

─────────────────────────────────────────────────────────────

Environment

✓ runtime local debug build

version 0.0.0

install method other

commit unknown

executable ~/code/codex.fcoury-doct…x-rs/target/debug/codex

✓ install consistent

context other

managed by npm: no · bun: no · package root —

PATH entries (2) ~/.local/share/mise/installs/node/24/bin/codex

~/.local/share/mise/shims/codex

✓ search ripgrep 15.1.0 (system, `rg`)

✓ terminal Ghostty 1.3.2-main-+b0f827665 · tmux 3.6a · TERM=xterm-256color

terminal Ghostty

TERM_PROGRAM ghostty

terminal version 1.3.2-main-+b0f827665

TERM xterm-256color

multiplexer tmux 3.6a

tmux extended-keys on

tmux allow-passthrough on

tmux set-clipboard on

✓ state databases healthy

CODEX_HOME ~/.codex (dir)

state DB ~/.codex/state_5.sqlite (file) · integrity ok

log DB ~/.codex/logs_2.sqlite (file) · integrity ok

active rollouts 1,526 files · 2.53 GB (avg 1.70 MB)

archived rollouts 8 files · 3.84 MB (avg 491.11 KB)

Configuration

✓ config loaded

model gpt-5.5 · openai

cwd ~/code/codex.fcoury-doctor/codex-rs

config.toml ~/.codex/config.toml

config.toml parse ok

MCP servers 1

feature flags 36 enabled · 7 overridden (full list with --all)

overrides code_mode, code_mode_only, memories, chronicle, goals, remote_control, prevent_idle_sleep

✓ auth auth is configured

auth storage mode File

auth file ~/.codex/auth.json

auth env vars present OPENAI_API_KEY

stored auth mode chatgpt

stored API key false

stored ChatGPT tokens true

stored agent identity false

⚠ mcp MCP configuration has optional issues — Set the missing MCP env vars or disable the affected server.

configured servers 1

disabled servers 0

streamable_http servers 1

optional reachability openaiDeveloperDocs: https://developers.openai.com/mcp (HEAD connect failed; GET connect failed)

✓ sandbox restricted fs + restricted network · approval OnRequest

approval policy OnRequest

filesystem sandbox restricted

network sandbox restricted

Connectivity

✓ network network-related environment looks readable

✓ websocket connected (HTTP 101 Switching Protocols) · 15s timeout

model provider openai

provider name OpenAI

wire API responses

supports websockets true

connect timeout 15000 ms

auth mode chatgpt

endpoint wss://chatgpt.com/backend-api/<redacted>

DNS 2 IPv4, 2 IPv6, first IPv6

handshake result HTTP 101 Switching Protocols

✗ reachability one or more required provider endpoints are unreachable over HTTP — Check proxy, VPN, firewall, DNS, and custom CA configuration.

reachability mode API key auth

openai API https://api.openai.com/v1 connect failed (required)

Background Server

○ app-server not running (ephemeral mode)

─────────────────────────────────────────────────────────────

11 ok · 1 idle · 4 notes · 1 warn · 1 fail failed

--summary compact output --all expand truncated lists

--json redacted report

```

### `codex doctor --summary`

```text

Codex Doctor v0.0.0 · macos-aarch64

Notes

↑ updates 0.130.0 available (current 0.0.0, dismissed 0.128.0)

⚠ rollouts 1,526 active files · 2.53 GB on disk

⚠ mcp MCP configuration has optional issues

⚠ auth mixed auth signals: ChatGPT login plus API key env var; HTTP reachability uses API-key mode

─────────────────────────────────────────────────────────────

Environment

✓ runtime local debug build

✓ install consistent

✓ search ripgrep 15.1.0 (system, `rg`)

✓ terminal Ghostty 1.3.2-main-+b0f827665 · tmux 3.6a · TERM=xterm-256color

✓ state databases healthy

Configuration

✓ config loaded

✓ auth auth is configured

⚠ mcp MCP configuration has optional issues — Set the missing MCP env vars or disable the affected server.

✓ sandbox restricted fs + restricted network · approval OnRequest

Updates

✓ updates update configuration is locally consistent

Connectivity

✓ network network-related environment looks readable

✓ websocket connected (HTTP 101 Switching Protocols) · 15s timeout

✗ reachability one or more required provider endpoints are unreachable over HTTP — Check proxy, VPN, firewall, DNS, and custom CA configuration.

Background Server

○ app-server not running (ephemeral mode)

─────────────────────────────────────────────────────────────

11 ok · 1 idle · 4 notes · 1 warn · 1 fail failed

Run codex doctor without --summary for detailed diagnostics.

--all expand truncated lists --json redacted report

```

### `codex doctor --json` shape

```json
{
  "schema_version": 1,
  "overall_status": "fail",
  "checks": {
    "runtime.provenance": {
      "id": "runtime.provenance",
      "category": "Environment",
      "status": "ok",
      "summary": "local debug build",
      "details": {
        "version": "0.0.0",
        "install method": "other",
        "commit": "unknown"
      }
    },
    "sandbox.helpers": {
      "id": "sandbox.helpers",
      "category": "Configuration",
      "status": "ok",
      "summary": "restricted fs + restricted network · approval OnRequest",
      "details": {
        "approval policy": "OnRequest",
        "filesystem sandbox": "restricted",
        "network sandbox": "restricted"
      }
    }
  }
}
```

### `/feedback` new Sentry attachment

<img width="938" height="798" alt="CleanShot 2026-05-13 at 15 36 14" src="https://github.com/user-attachments/assets/715e62e0-d7b4-4fea-a35a-fd5d5d33c4c0" />

### New section in CLI issue template

<img width="1164" height="435" alt="CleanShot 2026-05-13 at 15 47 24" src="https://github.com/user-attachments/assets/9081dc25-a28c-4afa-8ba1-e299c2b4031d" />

## How to Test

1. Run `cargo run --bin codex -- doctor --no-color`.
2. Confirm the detailed report is the default and includes promoted Notes, grouped sections, terminal details, state DB integrity, rollout stats, provider reachability, WebSocket diagnostics, and app-server status.
3. Run `cargo run --bin codex -- doctor --summary --no-color`.
4. Confirm the compact view keeps the same sections and summary counts but omits detailed key/value rows.
5. Run `cargo run --bin codex -- doctor --json`.
6. Confirm the output is redacted JSON, `checks` is an object keyed by check id, and each check's `details` is a key/value object.
7. Preview the CLI bug issue template and confirm the `Codex doctor report` field appears after the terminal field, asks for `codex doctor --json`, and renders pasted output as JSON.
8. Start a feedback flow that includes logs.
9. Confirm the upload consent copy lists `codex-doctor-report.json` alongside the log attachments.

Targeted tests:

- `cargo test -p codex-cli doctor`
- `cargo test -p codex-app-server doctor_report_tags_summarize_status_counts`
- `cargo test -p codex-feedback`
- `cargo test -p codex-tui feedback_view`
- `just argument-comment-lint`
- `git diff --check`

## Why

Remote control starts by letting `codex-backend` initialize against the app-server as an infrastructure health/proxy client before the real remote client connects. App-server initialization also sets the process-wide `originator` from `client_info.name`, so `codex-backend` could become the sticky originator for later model/API requests even after the real client initialized.

## What changed

- Treat `codex-backend` as a non-originating initialize client, alongside the existing `codex_app_server_daemon` probe client.
- Preserve normal per-connection initialize behavior, including session metadata and initialize analytics.
- Add regression coverage that verifies `codex-backend` initialize does not replace the default originator.
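The sketch below shows the originator rule. It assumes the originator is set once by the first originating client and otherwise falls back to a default. The `Originator` type and its methods are hypothetical. The sketch models only the process-wide originator. It does not cover the per-connection session metadata or the analytics, which still run for every client.

```rust
/// Clients that initialize for infrastructure reasons and must not claim the originator.
const NON_ORIGINATING_CLIENTS: &[&str] = &["codex_app_server_daemon", "codex-backend"];

pub struct Originator {
    value: Option<String>,
    default: String,
}

impl Originator {
    pub fn new(default: &str) -> Self {
        Self { value: None, default: default.to_string() }
    }

    /// Returns true if this initialize set the originator.
    pub fn on_initialize(&mut self, client_name: &str) -> bool {
        if NON_ORIGINATING_CLIENTS.iter().any(|c| *c == client_name) {
            // Skipped only for originator purposes; the connection is still initialized.
            return false;
        }
        if self.value.is_none() {
            self.value = Some(client_name.to_string());
            return true;
        }
        false
    }

    pub fn current(&self) -> &str {
        self.value.as_deref().unwrap_or(&self.default)
    }
}
```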

## Testing

- `cargo test -p codex-app-server --test all initialize_codex_backend_does_not_override_originator`

## Why

Codex intentionally ignores unknown `config.toml` fields by default so older and newer config files keep working across versions. That leniency also makes typo detection hard because misspelled or misplaced keys disappear silently.

This change adds an opt-in strict config mode so users and tooling can fail fast on unrecognized config fields without changing the default permissive behavior.

This feature is possible because `serde_ignored` exposes the exact signal Codex needs: it lets Codex run ordinary Serde deserialization while recording fields Serde would otherwise ignore. That avoids requiring `#[serde(deny_unknown_fields)]` across every config type and keeps strict validation opt-in around the existing config model.

## What Changed

### Added strict config validation

- Added `serde_ignored`-based validation for `ConfigToml` in `codex-rs/config/src/strict_config.rs`.
- Combined `serde_ignored` with `serde_path_to_error` so strict mode preserves typed config error paths while also collecting fields Serde would otherwise ignore.
- Added strict-mode validation for unknown `[features]` keys, including keys that would otherwise be accepted by `FeaturesToml`'s flattened boolean map.
- Kept typed config errors ahead of ignored-field reporting, so malformed known fields are reported before unknown-field diagnostics.
- Added source-range diagnostics for top-level and nested unknown config fields, including non-file managed preference source names.
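The core idea is to collect fields the typed model would ignore while letting type errors win, and it can be modeled without Serde. This is a hand-rolled, std-only sketch, not the `serde_ignored` / `serde_path_to_error` code. `Value` stands in for a parsed `TomlValue`. `Schema` is a hypothetical description of known fields. `KnownKeys` models a `[features]` map whose keys must come from a known list.

```rust
use std::collections::BTreeMap;

/// Simplified stand-in for a parsed TOML value.
#[derive(Debug, Clone)]
pub enum Value {
    Str(String),
    Bool(bool),
    Table(BTreeMap<String, Value>),
}

/// Hypothetical description of what the typed config model accepts.
pub enum Schema {
    Str,
    Bool,
    /// A struct-like table with a fixed set of known fields.
    Table(BTreeMap<&'static str, Schema>),
    /// A map like `[features]`, whose keys must come from a known list.
    KnownKeys(Vec<&'static str>, Box<Schema>),
}

#[derive(Debug, PartialEq, Eq)]
pub enum StrictError {
    /// A known field with the wrong type; takes precedence over unknown fields.
    Typed { path: String, expected: &'static str },
    UnknownFields(Vec<String>),
}

fn join(prefix: &str, key: &str) -> String {
    if prefix.is_empty() { key.to_string() } else { format!("{prefix}.{key}") }
}

fn walk(v: &Value, s: &Schema, path: &str, unknown: &mut Vec<String>) -> Result<(), StrictError> {
    match (s, v) {
        (Schema::Str, Value::Str(_)) | (Schema::Bool, Value::Bool(_)) => Ok(()),
        (Schema::Table(fields), Value::Table(t)) => {
            for (k, child) in t {
                match fields.get(k.as_str()) {
                    Some(child_schema) => walk(child, child_schema, &join(path, k), unknown)?,
                    None => unknown.push(join(path, k)),
                }
            }
            Ok(())
        }
        (Schema::KnownKeys(keys, value_schema), Value::Table(t)) => {
            for (k, child) in t {
                if keys.iter().any(|known| *known == k.as_str()) {
                    walk(child, value_schema, &join(path, k), unknown)?;
                } else {
                    unknown.push(join(path, k));
                }
            }
            Ok(())
        }
        (Schema::Str, _) => Err(StrictError::Typed { path: path.to_string(), expected: "string" }),
        (Schema::Bool, _) => Err(StrictError::Typed { path: path.to_string(), expected: "boolean" }),
        (Schema::Table(_) | Schema::KnownKeys(..), _) => {
            Err(StrictError::Typed { path: path.to_string(), expected: "table" })
        }
    }
}

pub fn validate_strict(root: &Value, schema: &Schema) -> Result<(), StrictError> {
    let mut unknown = Vec::new();
    walk(root, schema, "", &mut unknown)?; // a typed error returns early
    if unknown.is_empty() { Ok(()) } else { Err(StrictError::UnknownFields(unknown)) }
}
```

Because `walk` returns early on a type mismatch, a malformed known field is reported even when unknown fields were also collected.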

### Kept parsing single-pass per source

- Reworked file and managed-config loading so strict validation reuses the already parsed `TomlValue` for that source.
- For actual config files and managed config strings, the loader now reads once, parses once, and validates that same parsed value instead of deserializing multiple times.
- Validated `-c` / `--config` override layers with the same base-directory context used for normal relative-path resolution, so unknown override keys are still reported when another override contains a relative path.

### Scoped `--strict-config` to config-heavy entry points

- Added support for `--strict-config` on the main config-loading entry points where it is most useful:
  - `codex`
  - `codex resume`
  - `codex fork`
  - `codex exec`
  - `codex review`
  - `codex mcp-server`
  - `codex app-server` when running the server itself
  - the standalone `codex-app-server` binary
  - the standalone `codex-exec` binary
- Commands outside that set now reject `--strict-config` early with targeted errors instead of accepting it everywhere through shared CLI plumbing.
- `codex app-server` subcommands such as `proxy`, `daemon`, and `generate-*` are intentionally excluded from the first rollout.
- When app-server strict mode sees invalid config, app-server exits with the config error instead of logging a warning and continuing with defaults.
- Introduced a dedicated `ReviewCommand` wrapper in `codex-rs/cli` instead of extending shared `ReviewArgs`, so `--strict-config` stays on the outer config-loading command surface and does not become part of the reusable review payload used by `codex exec review`.
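Gating the flag per command could be sketched as an allowlist check that runs before config loading. The `Command` enum and `check_strict_config` are hypothetical, because the real CLI is built from its own subcommand types. The error text follows the `codex cloud` example in this description.

```rust
/// Hypothetical subset of CLI entry points, for illustration only.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Command {
    Interactive,
    Resume,
    Fork,
    Exec,
    Review,
    McpServer,
    AppServer,
    AppServerProxy,
    AppServerDaemon,
    Cloud,
    Mcp,
    RemoteControl,
}

impl Command {
    fn display_name(self) -> &'static str {
        match self {
            Command::Interactive => "codex",
            Command::Resume => "codex resume",
            Command::Fork => "codex fork",
            Command::Exec => "codex exec",
            Command::Review => "codex review",
            Command::McpServer => "codex mcp-server",
            Command::AppServer => "codex app-server",
            Command::AppServerProxy => "codex app-server proxy",
            Command::AppServerDaemon => "codex app-server daemon",
            Command::Cloud => "codex cloud",
            Command::Mcp => "codex mcp",
            Command::RemoteControl => "codex remote-control",
        }
    }

    /// Allowlist of config-heavy entry points.
    fn supports_strict_config(self) -> bool {
        matches!(
            self,
            Command::Interactive
                | Command::Resume
                | Command::Fork
                | Command::Exec
                | Command::Review
                | Command::McpServer
                | Command::AppServer
        )
    }
}

/// Rejects the flag early instead of silently accepting it everywhere.
pub fn check_strict_config(cmd: Command, strict_config: bool) -> Result<(), String> {
    if strict_config && !cmd.supports_strict_config() {
        return Err(format!(
            "`--strict-config` is not supported for `{}`",
            cmd.display_name()
        ));
    }
    Ok(())
}
```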

### Coverage

- Added tests for top-level and nested unknown config fields, unknown `[features]` keys, typed-error precedence, source-location reporting, and non-file managed preference source names.
- Added CLI coverage showing invalid `--enable`, invalid `--disable`, and unknown `-c` overrides still error when `--strict-config` is present, including compound-looking feature names such as `multi_agent_v2.subagent_usage_hint_text`.
- Added integration coverage showing both `codex app-server --strict-config` and standalone `codex-app-server --strict-config` exit with an error for unknown config fields instead of starting with fallback defaults.
- Added coverage showing unsupported command surfaces reject `--strict-config` with explicit errors.

## Example Usage

Run Codex with strict config validation enabled:

```shell

codex --strict-config

```

Strict config mode is also available on the supported config-heavy subcommands:

```shell

codex --strict-config exec "explain this repository"

codex review --strict-config --uncommitted

codex mcp-server --strict-config

codex app-server --strict-config --listen off

codex-app-server --strict-config --listen off

```

For example, if `~/.codex/config.toml` contains a typo in a key name:

```toml

model = "gpt-5"

approval_polic = "on-request"

```

then `codex --strict-config` reports the misspelled key instead of silently ignoring it. The path is shortened to `~` here for readability:

```text
$ codex --strict-config
Error loading config.toml:
~/.codex/config.toml:2:1: unknown configuration field `approval_polic`
  |
2 | approval_polic = "on-request"
  | ^^^^^^^^^^^^^^
```

Without `--strict-config`, Codex keeps the existing permissive behavior and ignores the unknown key.

Strict config mode also validates ad-hoc `-c` / `--config` overrides:

```text

$ codex --strict-config -c foo=bar

Error: unknown configuration field `foo` in -c/--config override

$ codex --strict-config -c features.foo=true

Error: unknown configuration field `features.foo` in -c/--config override

```

Invalid feature toggles are rejected too, including values that look like nested config paths:

```text

$ codex --strict-config --enable does_not_exist

Error: Unknown feature flag: does_not_exist

$ codex --strict-config --disable does_not_exist

Error: Unknown feature flag: does_not_exist

$ codex --strict-config --enable multi_agent_v2.subagent_usage_hint_text

Error: Unknown feature flag: multi_agent_v2.subagent_usage_hint_text

```

Unsupported commands reject the flag explicitly:

```text

$ codex --strict-config cloud list

Error: `--strict-config` is not supported for `codex cloud`

```

## Verification

The `codex-cli` `strict_config` tests cover invalid `--enable`, invalid `--disable`, the compound `multi_agent_v2.subagent_usage_hint_text` case, unknown `-c` overrides, app-server strict startup failure through `codex app-server`, and rejection for unsupported commands such as `codex cloud`, `codex mcp`, `codex remote-control`, and `codex app-server proxy`.

The config and config-loader tests cover unknown top-level fields, unknown nested fields, unknown `[features]` keys, source-location reporting, non-file managed config sources, and `-c` validation for keys such as `features.foo`.

The app-server test suite covers standalone `codex-app-server --strict-config` startup failure for an unknown config field.

## Documentation

The Codex CLI docs on developers.openai.com/codex should mention `--strict-config` as an opt-in validation mode for supported config-heavy entry points once this ships.

Adds `plugin/share/checkout` to turn a shared remote plugin into a local working copy under `~/plugins/<name>`.

Registers the copy in the managed personal marketplace and records the remote-to-local mapping for later share/save flows.

---------

Co-authored-by: Codex <noreply@openai.com>

# Why

Hook trust is granted through the TUI in `/hooks`, which can block non-interactive use cases. This flag lets users running Codex headlessly bypass hook trust when they want to.

# What

This adds one invocation-scoped escape hatch.

- the CLI flag sets a runtime-only `bypass_hook_trust` override; there is no durable `config.toml` setting
- hook discovery still respects normal enablement, so explicitly disabled hooks remain disabled
- we show a `--dangerously-bypass-hook-trust is enabled. Enabled hooks may run without review for this invocation.` message on startup so accidental use is visible in both interactive and exec flows

This keeps “enabled” and “trusted” as separate concepts in the normal path, while giving CI/E2E callers a stable way to opt into the exceptional path when they already control the hook set.
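The resulting decision can be sketched as a small function in which enablement is checked first and the bypass replaces only the trust check. `Hook`, `HookDecision`, `decide`, and `startup_warning` are hypothetical names. `NeedsReview` stands in for whatever the normal path does with an untrusted hook.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum HookDecision {
    Run,
    NeedsReview,
    Skip,
}

pub struct Hook {
    pub enabled: bool,
    pub trusted: bool,
}

/// `bypass_hook_trust` is a runtime-only value from the CLI flag.
pub fn decide(hook: &Hook, bypass_hook_trust: bool) -> HookDecision {
    // Enablement is always respected, even with the bypass.
    if !hook.enabled {
        return HookDecision::Skip;
    }
    if hook.trusted || bypass_hook_trust {
        HookDecision::Run
    } else {
        HookDecision::NeedsReview
    }
}

/// The startup notice that makes accidental use visible.
pub fn startup_warning(bypass_hook_trust: bool) -> Option<&'static str> {
    bypass_hook_trust.then_some(
        "--dangerously-bypass-hook-trust is enabled. Enabled hooks may run without review for this invocation.",
    )
}
```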

## Why

Enterprise-managed hook policy needs a narrow way to require Codex to ignore user-controlled lifecycle hooks without adopting the broader trust-precedence model from earlier hook work. This keeps the policy anchored in `requirements.toml`, so admins can opt into managed hooks only while normal `config.toml` files cannot enable the restriction themselves.

## What changed

- Added `allow_managed_hooks_only` to the requirements data flow and preserved explicit `false` values.
- Also added it to `/debug-config`.
- Marked MDM, system, and legacy managed config layers as managed for hook discovery.
- Updated hook discovery so `allow_managed_hooks_only = true`:
  - keeps managed requirements hooks and managed config-layer hooks,
  - skips user/project/session `hooks.json` and `[hooks]` entries with concise startup warnings,
  - skips current unmanaged plugin hooks,
  - ignores any `allow_managed_hooks_only` key placed in ordinary `config.toml` layers.
- Adds `localVersion` to plugin summaries and `remoteVersion` to share context, including generated API schemas.
- Hydrates local and remote plugin versions from manifests and remote release metadata.
- Adds a default-on `plugin_sharing` gate for shared-with-me listing and `plugin/share/save`, with disabled-path errors and focused coverage.
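The sketch below shows the `allow_managed_hooks_only` filtering. It assumes each discovered hook is tagged with a source. `HookSource`, `HookEntry`, `filter_hooks`, and the warning text are hypothetical. The flag is a plain argument because it is read from requirements and never from ordinary `config.toml` layers.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum HookSource {
    ManagedRequirements,
    ManagedConfigLayer,
    User,
    Project,
    Session,
    Plugin,
}

impl HookSource {
    fn is_managed(self) -> bool {
        matches!(self, HookSource::ManagedRequirements | HookSource::ManagedConfigLayer)
    }
}

#[derive(Debug, Clone, PartialEq, Eq)]
pub struct HookEntry {
    pub name: String,
    pub source: HookSource,
}

/// Returns the hooks to keep plus startup warnings for skipped user-controlled hooks.
pub fn filter_hooks(hooks: Vec<HookEntry>, allow_managed_hooks_only: bool) -> (Vec<HookEntry>, Vec<String>) {
    if !allow_managed_hooks_only {
        return (hooks, Vec::new());
    }
    let mut kept = Vec::new();
    let mut warnings = Vec::new();
    for hook in hooks {
        let source = hook.source;
        match source {
            s if s.is_managed() => kept.push(hook),
            // Unmanaged plugin hooks are skipped without a warning in this sketch.
            HookSource::Plugin => {}
            _ => warnings.push(format!(
                "skipping unmanaged hook `{}`: allow_managed_hooks_only is set",
                hook.name
            )),
        }
    }
    (kept, warnings)
}
```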

## Why

`remote_control` can appear in `config.toml`, CLI feature overrides, and the app-server config APIs. Before this PR, app-server startup treated `config.features.enabled(Feature::RemoteControl)` as the signal to start remote control ([base code](5e3ee5eddf/codex-rs/app-server/src/lib.rs (L678-L680))).

That meant a user with:

```toml

[features]

remote_control = true

```

would accidentally opt every app-server process into remote control. Remote-control startup should instead be a per-process launch decision made by CLI flags.

## What Changed

- Marks `Feature::RemoteControl` as `Stage::Removed`, keeping `remote_control` as a known compatibility key while making it config-inert.
- Adds a hidden `--remote-control` process flag to `codex app-server` and standalone `codex-app-server`.
- Plumbs that flag through `AppServerRuntimeOptions.remote_control_enabled` and makes app-server startup use only that runtime option to decide whether to start remote control.
- Removes the app-server config mutation hook that reloaded config and toggled remote control at runtime.
- Updates managed daemon spawning to use `codex app-server --remote-control --listen unix://` instead of `--enable remote_control`.

Config APIs can still list, read, write, and set `remote_control`; those operations just no longer affect remote-control process enrollment.

- make `ThreadStore::update_thread_metadata` accept a broad range of metadata patches
- keep `ThreadStore::append_items` as raw canonical history append (no metadata side effects)
- in the local store, write these metadata updates to a combination of SQLite and rollout JSONL files for backwards compatibility. It special-cases which fields need to go into JSONL vs SQLite vs whatever, confining the awkwardness to just this implementation
- in remote stores we can simply persist the metadata directly to a database, no special casing required.
- move the "implicit metadata updates triggered by appending rollout items" from the `RolloutRecorder` (which is local-threadstore-specific) to the `LiveThread` layer above the `ThreadStore`, inside a private helper utility called `ThreadMetadataSync`. `LiveThread` calls `ThreadStore` `append_items` and `update_metadata` separately.
- add a generic update metadata method to `ThreadManager` that works on both live threads and "cold" threads
- call that `ThreadManager` method from app server code, so app server doesn't need to worry about whether the thread is live or not
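The append/metadata split can be sketched with a trait and an in-memory store. `MetadataPatch` and its fields, `InMemoryStore`, and the `derive_metadata` rule are hypothetical. Items are plain strings here to keep the example small. The sketch shows that `append_items` has no side effects and that the live-thread layer issues the metadata update as a separate call.

```rust
use std::collections::HashMap;

/// Hypothetical patch: `None` fields are left unchanged.
#[derive(Debug, Default, Clone, PartialEq)]
pub struct MetadataPatch {
    pub title: Option<String>,
    pub last_model: Option<String>,
}

pub trait ThreadStore {
    /// Raw canonical history append with no metadata side effects.
    fn append_items(&mut self, thread_id: &str, items: &[String]);
    fn update_thread_metadata(&mut self, thread_id: &str, patch: &MetadataPatch);
}

#[derive(Default)]
pub struct InMemoryStore {
    pub history: HashMap<String, Vec<String>>,
    pub metadata: HashMap<String, MetadataPatch>,
}

impl ThreadStore for InMemoryStore {
    fn append_items(&mut self, thread_id: &str, items: &[String]) {
        self.history.entry(thread_id.to_string()).or_default().extend_from_slice(items);
    }

    fn update_thread_metadata(&mut self, thread_id: &str, patch: &MetadataPatch) {
        let current = self.metadata.entry(thread_id.to_string()).or_default();
        if let Some(title) = &patch.title {
            current.title = Some(title.clone());
        }
        if let Some(model) = &patch.last_model {
            current.last_model = Some(model.clone());
        }
    }
}

/// Hypothetical rule for implicit metadata derived from appended items.
fn derive_metadata(items: &[String]) -> Option<MetadataPatch> {
    items
        .iter()
        .find_map(|item| item.strip_prefix("model:"))
        .map(|model| MetadataPatch { last_model: Some(model.to_string()), ..Default::default() })
}

/// The layer above the store that owns implicit metadata updates.
pub struct LiveThread<S: ThreadStore> {
    pub id: String,
    pub store: S,
}

impl<S: ThreadStore> LiveThread<S> {
    pub fn record(&mut self, items: &[String]) {
        self.store.append_items(&self.id, items);
        if let Some(patch) = derive_metadata(items) {
            self.store.update_thread_metadata(&self.id, &patch);
        }
    }
}
```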

Makes plugin summaries use config-style `plugin@marketplace` IDs while exposing backend remote IDs separately as `remotePluginId`.

Also fixes the consistency issue with `REMOTE_SHARED_WITH_ME_MARKETPLACE_NAME`.

## Why

While investigating `codex exec hi` startup latency, the useful questions were not "is startup slow?" but "which durable bucket is slow in production?"

The path we observed has a few distinct stages:

1. `thread/start` creates the session
2. startup prewarm builds the turn context, tools, and prompt
3. startup prewarm warms the websocket
4. the first real turn resolves the prewarm
5. the model produces the first token

Before this PR, production telemetry already had some of the raw measurements:

- aggregate startup-prewarm duration / age-at-first-turn metrics
- TTFT as a metric
- websocket request telemetry

But there was no coherent production event stream for the startup breakdown itself, and TTFT was metric-only. That made it hard to answer the same latency questions from OpenTelemetry-backed logs without adding one-off local instrumentation.

## What changed

Add durable production telemetry on the existing `SessionTelemetry` path:

- new `codex.startup_phase` OTel log/trace events plus `codex.startup.phase.duration_ms`
- new `codex.turn_ttft` OTel log/trace events while preserving the existing TTFT metric

The startup phase event is emitted for the coarse buckets we actually observed while running `exec hi`:

- `thread_start_create_thread`

- `startup_prewarm_total`

- `startup_prewarm_create_turn_context`

- `startup_prewarm_build_tools`

- `startup_prewarm_build_prompt`

- `startup_prewarm_websocket_warmup`

- `startup_prewarm_resolve`

These phases are intentionally low-cardinality so they remain safe as production telemetry tags.
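The sketch below times a phase under a fixed, low-cardinality name. The `StartupPhase` enum mirrors the phase names listed above, but `timed` and `PhaseEvent` are hypothetical. The real code emits `codex.startup_phase` events through `SessionTelemetry` rather than collecting them in a `Vec`.

```rust
use std::time::Instant;

/// A closed set of phase names keeps the tag cardinality fixed.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum StartupPhase {
    ThreadStartCreateThread,
    StartupPrewarmTotal,
    StartupPrewarmCreateTurnContext,
    StartupPrewarmBuildTools,
    StartupPrewarmBuildPrompt,
    StartupPrewarmWebsocketWarmup,
    StartupPrewarmResolve,
}

impl StartupPhase {
    pub fn as_str(self) -> &'static str {
        match self {
            StartupPhase::ThreadStartCreateThread => "thread_start_create_thread",
            StartupPhase::StartupPrewarmTotal => "startup_prewarm_total",
            StartupPhase::StartupPrewarmCreateTurnContext => "startup_prewarm_create_turn_context",
            StartupPhase::StartupPrewarmBuildTools => "startup_prewarm_build_tools",
            StartupPhase::StartupPrewarmBuildPrompt => "startup_prewarm_build_prompt",
            StartupPhase::StartupPrewarmWebsocketWarmup => "startup_prewarm_websocket_warmup",
            StartupPhase::StartupPrewarmResolve => "startup_prewarm_resolve",
        }
    }
}

#[derive(Debug)]
pub struct PhaseEvent {
    pub phase: &'static str,
    pub duration_ms: u128,
}

/// Runs `f`, records its wall-clock duration under `phase`, and returns its result.
/// Control flow is unchanged: the closure runs exactly once either way.
pub fn timed<T>(phase: StartupPhase, events: &mut Vec<PhaseEvent>, f: impl FnOnce() -> T) -> T {
    let start = Instant::now();
    let out = f();
    events.push(PhaseEvent { phase: phase.as_str(), duration_ms: start.elapsed().as_millis() });
    out
}
```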

## Why this shape

This keeps the instrumentation on the same production path as the rest of the session telemetry instead of adding a local debug-only trace mode. It also avoids changing startup behavior:

- prewarm still runs

- no control flow changes

- no extra remote calls

- no user-visible behavior changes

One boundary is intentional: very early process bootstrap that happens before a session exists is not included here, because this PR uses session-scoped production telemetry. The expensive buckets we were trying to understand after `thread/start` are now covered durably.

## Verification

- `cargo test -p codex-otel`
- `cargo test -p codex-core turn_timing`
- `cargo test -p codex-core regular_turn_emits_turn_started_without_waiting_for_startup_prewarm`
- `cargo test -p codex-core interrupting_regular_turn_waiting_on_startup_prewarm_emits_turn_aborted`
- `cargo test -p codex-app-server thread_start`
- `just fix -p codex-otel -p codex-core -p codex-app-server`

I also ran `cargo test -p codex-core`; it built successfully and then hit an existing, unrelated stack overflow in `tools::handlers::multi_agents::tests::tool_handlers_cascade_close_and_resume_and_keep_explicitly_closed_subtrees_closed`.

# Why

Managed hook configs need a shared cross-platform shape without making the existing `command` field polymorphic. The common case is still one command string, with Windows needing a different entrypoint only when the runtime is actually Windows.

Keeping `command` as the portable/default path and adding an optional Windows override keeps the config easier to read, preserves the existing scalar shape for non-Windows users, and avoids forcing every caller into a `{ unix, windows }` object when only one platform needs special handling.

# What

- Add optional `command_windows` / `commandWindows` alongside the existing hook `command` field.
- Resolve `command_windows` only on Windows during hook discovery; other platforms continue to use `command` unchanged.
- Keep trust hashing aligned to the effective command selected for the current runtime.
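The sketch below resolves the effective command and hashes it for trust. It assumes a hook with `command` plus an optional `command_windows`. `CommandHook`, `effective_command`, and `trust_hash` are hypothetical. The platform is a parameter for testability; real discovery would pass `cfg!(windows)`. `DefaultHasher` is used only for illustration, since its output is not guaranteed stable across Rust releases and a persisted trust hash needs a stable algorithm.

```rust
use std::collections::hash_map::DefaultHasher;
use std::hash::{Hash, Hasher};

pub struct CommandHook {
    /// Portable/default command used everywhere unless overridden.
    pub command: String,
    /// Optional override consulted only on Windows.
    pub command_windows: Option<String>,
}

impl CommandHook {
    pub fn effective_command(&self, is_windows: bool) -> &str {
        match (&self.command_windows, is_windows) {
            (Some(windows_command), true) => windows_command.as_str(),
            _ => self.command.as_str(),
        }
    }

    /// Trust is keyed on the command that will actually run on this platform.
    pub fn trust_hash(&self, is_windows: bool) -> u64 {
        let mut hasher = DefaultHasher::new();
        self.effective_command(is_windows).hash(&mut hasher);
        hasher.finish()
    }
}
```

Hashing the effective command means editing only `command_windows` changes trust on Windows and leaves it untouched elsewhere.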

# Docs

The Codex hooks/config reference should document `command_windows` as the Windows-only override for command hooks.

## Summary

Remote clients can still receive large `thread/resume` histories when prior turns include MCP tool call payloads or image-generation results. This adds a temporary response-only redaction path for the known remote client names.

Longer term we will move towards fully paginated APIs backed by SQLite.

## Changes

- Redact MCP tool call payload-bearing fields in `thread/resume` responses for `codex_chatgpt_android_remote` and `codex_chatgpt_ios_remote`.
- Drop `imageGeneration` items from those `thread/resume` responses.
- Keep redaction out of persisted rollout files, `thread/read`, `thread/turns/list`, live notifications, and token usage replay.
- Cover the behavior with app-server helper tests and a v2 resume integration test that checks both remote clients plus a non-target control client.
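The response-only redaction can be sketched as a pure function over a copy of the items, which leaves persisted rollouts and the other APIs untouched. The `Item` variants and field names are hypothetical simplifications of the app-server types. Only the two client names come from this description.

```rust
const REDACTED_CLIENTS: &[&str] = &["codex_chatgpt_android_remote", "codex_chatgpt_ios_remote"];

/// Hypothetical, simplified thread items.
#[derive(Debug, Clone, PartialEq)]
pub enum Item {
    Message(String),
    McpToolCall {
        server: String,
        tool: String,
        arguments: Option<String>,
        result: Option<String>,
    },
    ImageGeneration { result: String },
}

/// Applied only when building a `thread/resume` response for the target clients.
pub fn redact_for_client(client_name: &str, items: Vec<Item>) -> Vec<Item> {
    if !REDACTED_CLIENTS.iter().any(|c| *c == client_name) {
        return items;
    }
    items
        .into_iter()
        .filter_map(|item| match item {
            // Image-generation items are dropped entirely.
            Item::ImageGeneration { .. } => None,
            // Keep the call's identity but strip payload-bearing fields.
            Item::McpToolCall { server, tool, .. } => Some(Item::McpToolCall {
                server,
                tool,
                arguments: None,
                result: None,
            }),
            other => Some(other),
        })
        .collect()
}
```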

## Testing

- `cargo test -p codex-app-server thread_resume_redaction`
- `cargo test -p codex-app-server thread_resume_redacts_payloads_for_chatgpt_remote_clients`

This PR replaces the TUI’s file-only `@mention` popup with a unified

mentions experience. Typing `@...` now searches across filesystem

matches, installed plugins, and skills in one popup, with result types

clearly labeled and selectable from the same flow.

- Adds a unified `@mentions` popup that returns:
  - plugins
  - skills
  - files
  - directories
- Adds search modes so users can narrow the popup without changing their query:
  - All Results _(default/same as Codex App)_
  - Filesystem Only
  - Plugins _(...and skills)_
- Preserves existing insertion behavior:
  - selected file paths are inserted into the prompt
  - paths with spaces are quoted
  - image file selections still attach as images when possible
  - selecting a plugin or skill inserts the corresponding `$name`
  - the composer records the canonical mention binding, such as `plugin://...` or the skill path
- Expanded `@mentions` rendering:
  - type tags for Plugin, Skill, File, and Dir
  - distinct plugin/filesystem colors
  - stable fixed-height layout (8 rows)
  - truncation behavior for narrow terminals
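A rough sketch of how the three search modes and the insertion rules could fit together. `MentionKind`, `SearchMode`, and `insertion_text` are hypothetical names, and wrapping paths in double quotes is an assumption about the exact quoting style:

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum MentionKind { Plugin, Skill, File, Dir }

#[derive(Debug, Clone, Copy, PartialEq, Eq, Default)]
pub enum SearchMode {
    #[default]
    AllResults,
    FilesystemOnly,
    Plugins,
}

/// Whether a result of `kind` is shown in `mode`; the query itself is unchanged.
pub fn mode_includes(mode: SearchMode, kind: MentionKind) -> bool {
    match mode {
        SearchMode::AllResults => true,
        SearchMode::FilesystemOnly => matches!(kind, MentionKind::File | MentionKind::Dir),
        // The "Plugins" mode also surfaces skills.
        SearchMode::Plugins => matches!(kind, MentionKind::Plugin | MentionKind::Skill),
    }
}

/// Text inserted into the composer when a result is selected.
pub fn insertion_text(kind: MentionKind, name_or_path: &str) -> String {
    match kind {
        MentionKind::Plugin | MentionKind::Skill => format!("${name_or_path}"),
        MentionKind::File | MentionKind::Dir if name_or_path.contains(' ') => {
            format!("\"{name_or_path}\"")
        }
        MentionKind::File | MentionKind::Dir => name_or_path.to_string(),
    }
}
```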

Note:

- The unified mentions popup does not display app connectors under

`@mention` results for Codex App parity. Connector mentions remain

available through the existing `$mention` path.

https://github.com/user-attachments/assets/f93781ed-57d3-4cb5-9972-675bc5f3ef3f

## Summary

- add SQLite init, backfill-gate, and fallback telemetry without

introducing a cross-cutting state-db access wrapper

- install one process-scoped telemetry sink after OTEL startup and let

low-level state/rollout paths emit through it directly

- add process-start metrics for the process owners that initialize

SQLite

---------

Co-authored-by: Owen Lin <owen@openai.com>

## Why

Users have requested the ability to edit a goal's objective after a goal

has been created. This PR exposes a new `/goal edit` command in the TUI

to address this request.

In the process of implementing this, I also noticed an existing bug in

the goal runtime. When a goal's objective is updated through the

`thread/goal/set` app server API, the goal runtime didn't emit a new

steering prompt to tell the agent about the new objective. This PR also

fixes this hole.

## What Changed

- Adds `/goal edit` in the TUI, opening an edit box prefilled with the

current goal objective.

- Keeps active and paused goals in their current state, resets completed

goals to active, keeps budget-limited goals budget-limited, and

preserves the existing token budget.

- Changes the existing `thread/goal/set` behavior so editing an

objective preserves goal accounting instead of resetting it. The older

reset-on-new-objective behavior was left over from before

`thread/goal/clear`; clients that need to reset accounting can now clear

the existing goal and create a new one.

- Reuses the existing goal set API path; this does not add or change

app-server protocol surface area.

- Adds a dedicated goal runtime steering prompt when an externally

persisted goal mutation changes the objective, so active turns receive

the updated objective.
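The status rules above can be written as a small function. `GoalStatus`, `Goal`, and `edit_goal` are hypothetical names. The returned flag only records that the objective changed, which is the condition this PR uses to emit a steering prompt. This is a sketch, not the goal runtime's code:

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum GoalStatus { Active, Paused, BudgetLimited, Complete }

#[derive(Debug, Clone, PartialEq)]
pub struct Goal {
    pub objective: String,
    pub status: GoalStatus,
    pub token_budget: Option<u64>,
    pub tokens_used: u64,
}

/// Returns the edited goal plus whether the objective actually changed.
pub fn edit_goal(goal: &Goal, new_objective: &str) -> (Goal, bool) {
    let status = match goal.status {
        // Completed goals become active again; every other state is kept.
        GoalStatus::Complete => GoalStatus::Active,
        other => other,
    };
    let objective_changed = goal.objective != new_objective;
    let edited = Goal {
        objective: new_objective.to_string(),
        status,
        // Budget and accounting carry over; clients that want a reset
        // clear the goal and create a new one instead.
        token_budget: goal.token_budget,
        tokens_used: goal.tokens_used,
    };
    (edited, objective_changed)
}
```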

## Validation

- Make sure `/goal edit` returns an error if no goal currently exists

- Make sure `/goal edit` displays an edit box that can be optionally

canceled with no side effects

- Make sure that an edited goal results in a steer so the agent starts

pursuing the new objective

- Make sure the new objective is reflected in the goal if you use

`/goal` to display the goal summary

- Make sure that `/goal edit` doesn't reset the token budget or the time/token accounting on the updated goal

Fixes #20792

## Why

`/goal`-first threads are valid resumable threads, but they can be

missing from `codex resume` and app recents because discovery depends on

metadata derived from a normal first user message.

PR #21489 attempted to fix this by using the goal objective as

`first_user_message`. Review feedback pointed out that

`first_user_message` does more than provide visible text today: it gates

listing, supplies preview text, and participates in deciding whether a

later title should surface as a distinct thread name. Reusing it for the

goal objective could leave a `/goal`-first thread with

`first_user_message=<goal>` and `title=<later prompt>`, even though the

goal should only provide the initial visible preview.

This PR follows that feedback: it keeps `first_user_message` as is and introduces a new `preview` field to separate concerns. The `preview` field is populated from the first user message or the goal objective, and can be extended to other sources in the future.

## What Changed

- Added internal thread `preview` metadata in `codex-state`, including a

SQLite migration that backfills from `first_user_message` and from

existing `thread_goals` objectives when needed.

- Treated `ThreadGoalUpdated` as preview-bearing metadata so goal-first

threads can be listed and searched without mutating

`first_user_message`.

- Updated rollout listing, state queries, thread-store conversion, and

app-server mapping to use preview metadata while continuing to expose

the existing public `preview` field.

- Preserved title/name distinctness behavior around literal

`first_user_message`, so a later normal prompt after `/goal` does not

surface as a separate name just because the goal supplied the initial

preview.

- Preserved compatibility for older/internal metadata writes by deriving

preview from `first_user_message` when explicit preview metadata is

absent.
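The rule described above can be sketched as "first writer wins" for the preview, plus a read-time fallback. This assumes simplified metadata events. `MetadataEvent`, `ThreadMetadata`, and `effective_preview` are hypothetical names, not the `codex-state` API:

```rust
#[derive(Debug, Clone, PartialEq)]
pub enum MetadataEvent {
    UserMessage(String),
    GoalUpdated { objective: String },
}

#[derive(Debug, Default, Clone, PartialEq)]
pub struct ThreadMetadata {
    pub first_user_message: Option<String>,
    pub preview: Option<String>,
}

impl ThreadMetadata {
    pub fn apply(&mut self, event: &MetadataEvent) {
        match event {
            MetadataEvent::UserMessage(text) => {
                if self.first_user_message.is_none() {
                    self.first_user_message = Some(text.clone());
                }
                if self.preview.is_none() {
                    self.preview = Some(text.clone());
                }
            }
            // A goal may seed the preview but never touches `first_user_message`.
            MetadataEvent::GoalUpdated { objective } => {
                if self.preview.is_none() {
                    self.preview = Some(objective.clone());
                }
            }
        }
    }

    /// Older writes without an explicit preview fall back to `first_user_message`.
    pub fn effective_preview(&self) -> Option<&str> {
        self.preview.as_deref().or(self.first_user_message.as_deref())
    }
}
```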

## Verification

- Manually verified that a thread that starts with a `/goal <objective>`

shows up in the resume picker.

Expose discoverability and full share principals in share context, carry

roles through save/updateTargets, hydrate local shared plugin reads, and

keep share URLs only under plugin.shareContext.

## Why

PR #21460 reverted the earlier move of skills change watching from

`codex-core` into app-server. This reapplies that boundary change so

app-server owns client-facing `skills/changed` notifications and core no

longer carries the watcher.

## What

- Restore the app-server `SkillsWatcher` and register it from thread

listener setup.

- Remove the core-owned skills watcher and its core live-reload

integration surface.

- Restore app-server coverage for `skills/changed` notifications after a

watched skill file changes.

## Validation

- `cargo test -p codex-app-server --test all suite::v2::skills_list::skills_changed_notification_is_emitted_after_skill_change -- --exact --nocapture`

- `cargo test -p codex-core --lib --no-run`

## Summary

Support registry-backed remote executors end to end so downstream

services can resolve an executor id into an exec-server URL and make

that environment available to Codex without relying on the legacy cloud

environments flow.

## What changed

- switch remote executor registration to the executor registry bootstrap

contract

- allow named remote environments to be inserted into

`EnvironmentManager` at runtime

- add the experimental app-server RPC `environment/add` so initialized

experimental clients can register those remote environments for later

`thread/start` and `turn/start` selection

## Validation

Ran focused validation locally:

- `cargo test -p codex-exec-server environment_manager_`

- `cargo test -p codex-exec-server register_executor_posts_with_bearer_token_header`

- `cargo test -p codex-app-server-protocol`

## Why

The app-server daemon work needs two app-server behaviors to be safe

when lifecycle management is driven by a helper process:

- a readiness probe must not become the process-wide client identity

just because it connects first

- a graceful reload signal needs to keep draining active turns even if

it is delivered more than once

## What changed

- Treat `codex_app_server_daemon` initialization as a probe-only client

for process-global originator and user-agent suffix state.

- Distinguish forceable shutdown signals from graceful-only ones, and

treat Unix `SIGHUP` as graceful-only while leaving `SIGTERM` and Ctrl-C

forceable.

- Add regression coverage for daemon probe initialization and repeated

`SIGHUP` delivery while a turn is still running.
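The forceable vs graceful-only distinction can be sketched as a minimal state machine. The names are hypothetical. The rule that a second forceable signal during a drain forces exit is an assumption of this sketch, not a documented guarantee:

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ShutdownSignal { Hangup, Terminate, CtrlC }

impl ShutdownSignal {
    /// `SIGHUP` is graceful-only; `SIGTERM` and Ctrl-C stay forceable.
    pub fn is_forceable(self) -> bool {
        !matches!(self, ShutdownSignal::Hangup)
    }
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ShutdownAction { StartDrain, KeepDraining, ForceExit }

#[derive(Debug, Default)]
pub struct ShutdownState {
    draining: bool,
}

impl ShutdownState {
    pub fn on_signal(&mut self, signal: ShutdownSignal) -> ShutdownAction {
        if !self.draining {
            // The first signal of any kind begins a graceful drain of active turns.
            self.draining = true;
            return ShutdownAction::StartDrain;
        }
        // A repeated graceful-only signal must not cut the drain short.
        if signal.is_forceable() {
            ShutdownAction::ForceExit
        } else {
            ShutdownAction::KeepDraining
        }
    }
}
```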

## Testing

- `cargo test -p codex-app-server`
  - The new daemon-probe and repeated-`SIGHUP` coverage passed.
  - The run still failed in the existing `suite::conversation_summary::get_conversation_summary_by_relative_rollout_path_resolves_from_codex_home` and `suite::conversation_summary::get_conversation_summary_by_thread_id_reads_rollout` tests because their initialize handshake timed out.
- `cargo test -p codex-app-server --test all suite::conversation_summary::`
  - Reproduced the same two existing initialize-timeout failures in isolation.

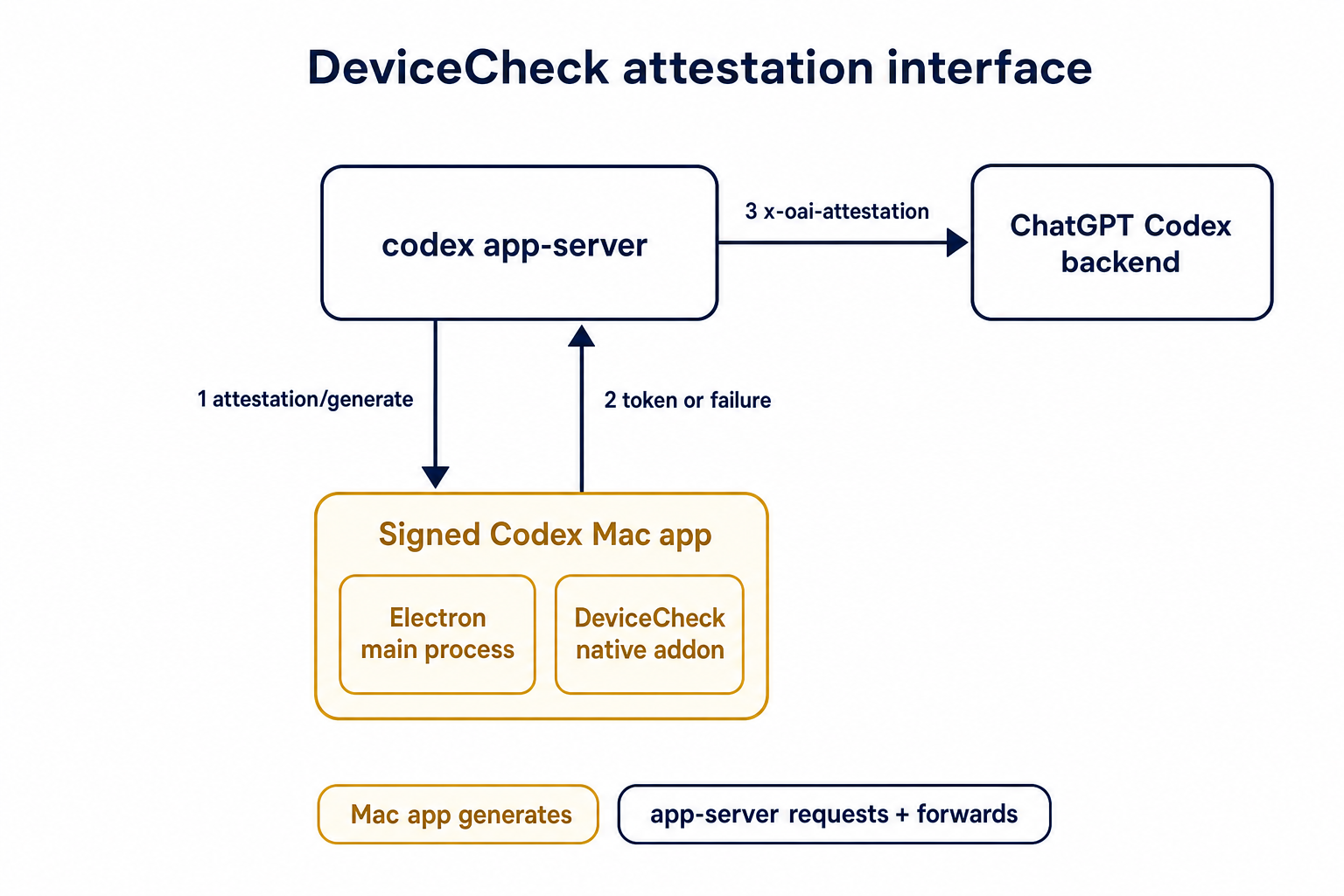

## Summary

TL;DR: teaches `codex-rs` / app-server to request a desktop-provided

attestation token and attach it as `x-oai-attestation` on the scoped

ChatGPT Codex request paths.

## Details

This PR teaches the Codex app-server runtime how to request and attach

an attestation token. It does not generate DeviceCheck tokens directly;

instead, it relies on the connected desktop app to advertise that it can

generate attestation and then asks that app for a fresh header value

when needed.

The flow is:

1. The Codex desktop app connects to app-server.

2. During `initialize`, the app can advertise that it supports

`requestAttestation`.

3. Before app-server calls selected ChatGPT Codex endpoints, it sends

the internal server request `attestation/generate` to the app.

4. app-server receives a pre-encoded header value back.

5. app-server forwards that value as `x-oai-attestation` on the scoped

outbound requests.
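Steps 3 to 5 can be sketched as a helper that produces the header only when the client advertised support and the path is in scope. `AttestationProvider`, `attestation_header`, and the single-entry `SCOPED_PATHS` list are hypothetical. The real scope also covers compaction and realtime setup, and dropping the header when generation fails is an assumption:

```rust
/// Stand-in for the desktop app answering `attestation/generate`.
pub trait AttestationProvider {
    fn generate(&self) -> Result<String, String>;
}

/// Illustrative subset of the scoped ChatGPT Codex request paths.
const SCOPED_PATHS: [&str; 1] = ["/backend-api/codex/responses"];

pub fn attestation_header<P: AttestationProvider>(
    client_supports_attestation: bool,
    provider: &P,
    path: &str,
) -> Option<(&'static str, String)> {
    if !client_supports_attestation || !SCOPED_PATHS.iter().any(|p| *p == path) {
        return None;
    }
    // The value comes back pre-encoded and is forwarded verbatim.
    provider.generate().ok().map(|value| ("x-oai-attestation", value))
}
```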

The code in this repo is mostly protocol and runtime plumbing: it adds

the app-server request/response shape, introduces an attestation

provider in core, wires that provider into Responses / compaction /

realtime setup paths, and covers the intended scoping with tests. The

signed macOS DeviceCheck generation remains owned by the desktop app PR.

## Related PR

- Codex desktop app implementation:

https://github.com/openai/openai/pull/878649

## Validation

<details>

<summary>Tests run</summary>

```sh
cargo test -p codex-app-server-protocol
cargo test -p codex-core attestation --lib
cargo test -p codex-app-server --lib attestation
```

Also ran:

```sh
just fix -p codex-core
just fix -p codex-app-server
just fix -p codex-app-server-protocol
just fmt
just write-app-server-schema
```

</details>

<details>

<summary>E2E DeviceCheck validation</summary>

First validated the signed desktop app boundary directly: launched a

packaged signed `Codex.app`, sent `attestation/generate`, decoded the

returned `v1.` attestation header, and validated the extracted

DeviceCheck token with `personal/jm/verify_devicecheck_token.py` using

bundle ID `com.openai.codex`. Apple returned `status_code: 200` and

`is_ok: true`.

Then ran the fuller app + app-server flow. The packaged `Codex.app`

launched a current-branch app-server via `CODEX_CLI_PATH`, and a local

MITM proxy intercepted outbound `chatgpt.com` traffic. The app-server

requested `attestation/generate` from the real Electron app process, and

the intercepted `/backend-api/codex/responses` traffic included

`x-oai-attestation` on both routes:

```text
GET /backend-api/codex/responses Upgrade: websocket x-oai-attestation: present
POST /backend-api/codex/responses Upgrade: none x-oai-attestation: present
```

The captured header decoded to a DeviceCheck token that also validated

with Apple for `com.openai.codex` (`status_code: 200`, `is_ok: true`,

team `2DC432GLL2`).

</details>

---------

Co-authored-by: Codex <noreply@openai.com>

Requires discoverability on plugin/share/updateTargets so the server can

manage workspace link access consistently, including auto-adding the

workspace principal for UNLISTED.

Also rejects LISTED on share creation and blocks client-supplied

workspace principals while preserving response parsing for LISTED.
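The server-side rules can be sketched as one validation function. `Discoverability::Private`, `Principal`, and `build_share_targets` are hypothetical names. Only the UNLISTED and LISTED behavior comes from the text above:

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Discoverability { Private, Unlisted, Listed }

#[derive(Debug, Clone, PartialEq, Eq)]
pub enum Principal {
    User(String),
    Workspace(String),
}

pub fn build_share_targets(
    is_creation: bool,
    discoverability: Discoverability,
    client_principals: &[Principal],
    workspace_id: &str,
) -> Result<Vec<Principal>, String> {
    if is_creation && discoverability == Discoverability::Listed {
        return Err("LISTED is not allowed when creating a share".to_string());
    }
    // Workspace principals are server-managed; clients may not supply them.
    if client_principals.iter().any(|p| matches!(p, Principal::Workspace(_))) {
        return Err("workspace principals are managed by the server".to_string());
    }
    let mut targets = client_principals.to_vec();
    if discoverability == Discoverability::Unlisted {
        targets.push(Principal::Workspace(workspace_id.to_string()));
    }
    Ok(targets)
}
```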

## Summary

- Remove `perCwdExtraUserRoots` / `SkillsListExtraRootsForCwd` from the

`skills/list` app-server API.

- Drop Rust app-server and `codex-core-skills` extra-root plumbing so

skill scans are keyed by the normal cwd/user/plugin roots only.

- Regenerate app-server schemas and update docs/tests that only existed

for the removed extra-roots behavior.

## Validation

- `just write-app-server-schema`

- `just fmt`

- `cargo test -p codex-app-server-protocol`

- `cargo test -p codex-core-skills`

- `just fix -p codex-app-server-protocol`

- `just fix -p codex-core-skills`

- `just fix -p codex-app-server`

- `just fix -p codex-tui`

## Notes

- `cargo test -p codex-app-server --test all skills_list` ran the edited skills-list cases, but the full filtered run ended with the existing `skills_changed_notification_is_emitted_after_skill_change` timeout after a websocket `401`.

- `cargo test -p codex-tui --lib` compiled the changed TUI callers, then

failed two unrelated status permission tests because local

`/etc/codex/requirements.toml` forbids `DangerFullAccess`.

- Source-truth check found the OpenAI monorepo still has

generated/app-server-kit mirror references to the removed field; those

should be cleaned up when generated app-server types are synced or in a

companion OpenAI cleanup.

## Why

The goal of this PR is to align on app-server and `ThreadStore` API

updates for paginating through large threads.

#### app-server

##### `thread/turns/list`

- Updates `thread/turns/list` to support `itemsView?: "notLoaded" | "summary" | "full" | null`, defaulting to `summary`.

- Implements the current `thread/turns/list` behavior over the existing persisted rollout-history fallback:
  - `notLoaded` returns turn envelopes with empty `items`.
  - `summary` returns the first user message and final assistant message when available.
  - `full` preserves the existing full item behavior.
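The sketch below applies the three `itemsView` modes to an already-loaded turn. `ItemsView`, `TurnItem`, and `project_items` are hypothetical, and a `null` view is treated the same as an omitted one:

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq, Default)]
pub enum ItemsView {
    NotLoaded,
    #[default]
    Summary,
    Full,
}

#[derive(Debug, Clone, PartialEq)]
pub enum TurnItem {
    UserMessage(String),
    ToolCall(String),
    AgentMessage(String),
}

pub fn project_items(view: Option<ItemsView>, items: &[TurnItem]) -> Vec<TurnItem> {
    match view.unwrap_or_default() {
        ItemsView::NotLoaded => Vec::new(),
        ItemsView::Full => items.to_vec(),
        ItemsView::Summary => {
            // First user message and final assistant message, when present.
            let first_user = items.iter().find(|i| matches!(i, TurnItem::UserMessage(_)));
            let last_agent = items.iter().rev().find(|i| matches!(i, TurnItem::AgentMessage(_)));
            first_user.into_iter().chain(last_agent).cloned().collect()
        }
    }
}
```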

Note that this method still uses the naive approach of loading the

entire rollout file, and returns just the filtered slice of the data.

Real pagination will come later by leveraging SQLite.

##### `thread/turns/items/list`

- Adds the experimental `thread/turns/items/list` protocol, schema,

dispatcher, and processor stub. The app-server currently returns

JSON-RPC `-32601` with `thread/turns/items/list is not supported yet`.

#### ThreadStore

- Adds `ThreadStore` contract types and stubbed methods for listing

thread turns and listing items within a turn.

- Adds a typed `StoredTurnStatus` and `StoredTurnError` to avoid baking

app-server API enums or lossy string status values into the store-facing

turn contract.

This also sketches the storage abstraction we expect to need once turns

are indexed/stored. In particular, `notLoaded` is useful only if

ThreadStore can eventually list turn metadata without loading every

persisted item for each turn.

## Validation

- Added/updated protocol serialization coverage for the new request and

response shapes.

- Added app-server integration coverage for `thread/turns/list` default

summary behavior and all three `itemsView` modes.

- Added app-server integration coverage that `thread/turns/items/list`

returns the expected unsupported JSON-RPC error when experimental APIs

are enabled.

- Added thread-store coverage that the default trait methods return

`ThreadStoreError::Unsupported`.

No developers.openai.com documentation update is needed for this

internal experimental app-server API surface.

## Summary

Fix `getConversationSummary` so thread-id summaries work for stored

threads that do not have a local rollout path, such as remote thread

stores.

The root cause was that `summary_from_stored_thread` returned `None`

when `StoredThread.rollout_path` was absent, and

`get_thread_summary_response_inner` treated that as an internal error.

This made conversation-id lookups depend on a local-only field even

though the thread store can address the thread by id.

- Route live thread renames through `ThreadStore` metadata updates.

- Read resumed thread names from store metadata with legacy local

fallback preserved in the store.

## Why

App-server config writes were leaving existing threads partially stale.

After a config mutation, the app-server told each live thread to run

`Op::ReloadUserConfig`, but that path only re-read the user

`config.toml` layer. Settings that came from the app-server's

materialized config snapshot did not propagate to existing threads until

restart.

This change prevents filesystem access from `core` for CCA.

## What changed

- add `CodexThread::refresh_runtime_config()` and

`Session::refresh_runtime_config()` so the app-server can push a freshly

rebuilt config snapshot into a live thread

- rebuild the latest config with each thread's `cwd` after config

mutations, then refresh the thread from that snapshot instead of asking

it to reload only `config.toml`

- keep session-static settings unchanged during refresh, while updating

runtime-refreshable state such as the config layer stack,

`tool_suggest`, and derived hook/plugin/skill state

- keep `reload_user_config_layer()` as the file-backed fallback for

legacy local reload flows, but route the shared refresh logic through

the new runtime refresh path

## Testing

- add a session test that verifies `refresh_runtime_config()` rebuilds

hooks from refreshed config

- add a session test that verifies runtime-refreshable fields update

while session-static settings like `model` and `notify` stay unchanged

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

Feedback uploads already carry auth-derived context like

`chatgpt_user_id`, but they do not include the authenticated

workspace/account id. Adding `account_id` makes feedback triage easier

when a user can operate across multiple ChatGPT workspaces.

## What changed

- emit auth-derived `account_id` into feedback tags in `app-server`

before the feedback snapshot is uploaded

- preserve that tag through `codex-feedback` upload tag assembly

alongside the existing merge behavior for other tags

- extend `codex-feedback` coverage to assert that snapshot-derived

`account_id` is present in uploaded tags

## Verification

- `cargo test -p codex-feedback upload_tags_include_client_tags_and_preserve_reserved_fields`

- `cargo test -p codex-app-server --lib feedback_processor`

Supersedes the abandoned #19859, rebuilt on latest `main`.

# Why

PR #19705 adds discovery for hooks bundled with plugins, but `/plugins`

still only shows skills, apps, and MCP servers. This follow-up makes

bundled hooks visible in the same plugin detail view so users can

inspect the full plugin surface in one place.

We also need `PluginHookSummary` to populate Plugin Hooks in the app;

`hooks/list` is not enough there because plugin detail needs to show

hooks for disabled plugins too.

# What

- extend `plugin/read` with `PluginHookSummary` entries for bundled

hooks

- summarize plugin hooks while loading plugin details

- render a `Hooks` row in the `/plugins` detail popup

<img width="3456" height="848" alt="CleanShot 2026-04-27 at 11 45 34@2x" src="https://github.com/user-attachments/assets/fe3a38d6-a260-4351-8513-fb04c93d725b" />

## Why

Reverts #20689 to restore the previous optional state DB plumbing. The

conflict resolution keeps the newer installation ID and session/thread

identity changes that landed after #20689, while removing the mandatory

state DB and agent graph store dependency from ThreadManager

construction.

## What changed

- Restored `Option<StateDbHandle>` through app-server, MCP server,

prompt debug, and test entry points.

- Removed the `codex-core` dependency on `codex-agent-graph-store` and

reverted descendant lookup back to the existing state DB path when

available.

- Kept newer `installation_id` forwarding by passing it beside the

optional DB handle.

- Kept local thread-name updates working when the optional state DB

handle is absent.

## Validation

- `git diff --check`

- `cargo test -p codex-thread-store`

- `cargo test -p codex-state -p codex-rollout -p codex-app-server-protocol`

- Attempted `env CARGO_INCREMENTAL=0 cargo test -p codex-core -p codex-app-server -p codex-app-server-client -p codex-mcp-server -p codex-thread-manager-sample -p codex-tui`; blocked locally by a rustc ICE while compiling `v8 v146.4.0` with `rustc 1.93.0 (254b59607 2026-01-19)` on `aarch64-apple-darwin`.

## Summary

- process `skills/list` cwd entries with bounded concurrency of 5

- preserve the caller's requested cwd order in the response

- add coverage that verifies response ordering remains stable

## Why

Cold-start desktop traces showed that `skills/list` can dominate the

shared config queue when it scans many workspace roots serially. The

expensive work is largely independent per cwd, so the request was paying

the sum of all cwd costs instead of the cost of the slowest bounded

batch.
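A std-only sketch of bounded, order-preserving fan-out, assuming a synchronous `scan` closure. The real handler is async, and `scan_in_batches` is a hypothetical name. Fixed batches match the "slowest bounded batch" framing above, although a sliding window would keep all five slots busier:

```rust
use std::thread;

/// Runs `scan` for each cwd with at most `limit` scans in flight,
/// returning results in the caller's input order.
pub fn scan_in_batches<T, F>(cwds: &[String], limit: usize, scan: F) -> Vec<T>
where
    T: Send,
    F: Fn(&str) -> T + Sync,
{
    let limit = limit.max(1);
    let scan = &scan;
    let mut results = Vec::with_capacity(cwds.len());
    for batch in cwds.chunks(limit) {
        // Scoped threads may borrow `scan` and `batch` without 'static bounds.
        let batch_results: Vec<T> = thread::scope(|s| {
            let handles: Vec<_> = batch
                .iter()
                .map(|cwd| s.spawn(move || scan(cwd.as_str())))
                .collect();
            // Joining in spawn order preserves the requested cwd order.
            handles
                .into_iter()
                .map(|handle| handle.join().expect("skill scan panicked"))
                .collect()
        });
        results.extend(batch_results);
    }
    results
}
```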

## Impact

This keeps current request semantics intact while reducing the

wall-clock time of large multi-root `skills/list` calls. That should

also reduce how long later config-family requests, such as

`plugin/list`, wait behind `skills/list` during startup.

## Validation

- `just fmt`

- `cargo test -p codex-app-server`

- `cargo test -p codex-app-server skills_list_preserves_requested_cwd_order`

Based on work from Vincent K -

https://github.com/openai/codex/pull/19060

<img width="1836" height="642" alt="CleanShot 2026-04-29 at 20 47 40@2x" src="https://github.com/user-attachments/assets/b647bb89-65fe-40c8-80b0-7a6b7c984634" />

## Why

Compaction rewrites the conversation context that future model turns

receive, but hooks currently have no deterministic lifecycle point

around that rewrite. This adds compact lifecycle hooks so users can

audit manual and automatic compaction, surface hook messages in the UI,

and run post-compaction follow-up without overloading tool or prompt

hooks.

## What Changed

- Added `PreCompact` and `PostCompact` hook events across hook config,

discovery, dispatch, generated schemas, app-server notifications,

analytics, and TUI hook rendering.

- Added trigger matching for compact hooks with the documented `manual`

and `auto` matcher values.

- Wired `PreCompact` before both local and remote compaction, and

`PostCompact` after successful local or remote compaction.

- Kept compact hook command input to lifecycle metadata: session id,

Codex turn id, transcript path, cwd, hook event name, model, and

trigger.

- Made compact stdout handling consistent with other hooks: plain stdout

is ignored as debug output, while malformed JSON-looking stdout is

reported as failed hook output.

- Added integration coverage for compact hook dispatch, trigger

matching, post-compact execution, and the audited behavior that

`decision:"block"` does not block compaction.
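Trigger matching for compact hooks is sketched below. It assumes that a missing or empty matcher matches every trigger, and that any other value must equal `manual` or `auto` exactly. The names are hypothetical:

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum CompactTrigger { Manual, Auto }

impl CompactTrigger {
    pub fn as_str(self) -> &'static str {
        match self {
            CompactTrigger::Manual => "manual",
            CompactTrigger::Auto => "auto",
        }
    }
}

/// Decides whether a `PreCompact`/`PostCompact` hook runs for this trigger.
pub fn compact_matcher_matches(matcher: Option<&str>, trigger: CompactTrigger) -> bool {
    match matcher {
        None | Some("") => true,
        Some(m) => m == trigger.as_str(),
    }
}
```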

## Out of Scope

- Hook-specific compaction blocking is not implemented;

`decision:"block"` and exit-code-2 blocking semantics are intentionally

unsupported for `PreCompact`.

- Custom compaction instructions are not exposed to compact hooks in

this PR.

- Compact summaries, summary character counts, and summary previews are

not exposed to compact hooks in this PR.

## Verification

- `cargo test -p codex-hooks`

- `cargo test -p codex-core manual_pre_compact_block_decision_does_not_block_compaction`

- `cargo test -p codex-app-server hooks_list`

- `cargo test -p codex-core config_schema_matches_fixture`

- `cargo test -p codex-tui hooks_browser`

## Docs

The developer documentation for Codex hooks should be updated alongside

this feature to document `PreCompact` and `PostCompact`, the

`manual`/`auto` matcher values, and the compact hook payload fields.

---------

Co-authored-by: Vincent Koc <vincentkoc@ieee.org>

Adds marketplaceKinds to plugin/list for local, workspace-directory, and

shared-with-me; omitted params keep default local plus gated global

behavior, while explicit kinds are exact.
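The defaulting rule for `marketplaceKinds` is sketched below. `MarketplaceKind`, `ListingPlan`, and `plan_plugin_list` are illustrative names. Modeling the gated global listing as a separate flag is an assumption:

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum MarketplaceKind { Local, WorkspaceDirectory, SharedWithMe }

#[derive(Debug, Clone, PartialEq, Eq)]
pub struct ListingPlan {
    pub kinds: Vec<MarketplaceKind>,
    pub include_global: bool,
}

pub fn plan_plugin_list(requested: Option<&[MarketplaceKind]>, global_gate_enabled: bool) -> ListingPlan {
    match requested {
        // Explicit kinds are exact: nothing is added implicitly.
        Some(kinds) => ListingPlan { kinds: kinds.to_vec(), include_global: false },
        // Omitted params keep the old default: local plus gated global.
        None => ListingPlan { kinds: vec![MarketplaceKind::Local], include_global: global_gate_enabled },
    }
}
```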

Exposes shareContext on plugin summaries from local share mappings and

remote workspace/shared responses, including remotePluginId and nullable

creator metadata.

Adds shared-with-me listing through /ps/plugins/workspace/shared,

renames the workspace remote namespace to workspace-directory, and keeps

direct remote read/share/install/update/delete paths gated by plugins

rather than remote_plugin.

## Why

Skills update notifications are app-server API behavior, but the watcher

lived in `codex-core` and surfaced through

`EventMsg::SkillsUpdateAvailable`. Moving the watcher out keeps core

focused on thread execution and lets app-server own both cache

invalidation and the `skills/changed` notification.

## What changed

- Added an app-server-owned skills watcher that watches local skill

roots, clears the shared skills cache, and emits `skills/changed`

directly.

- Registers skill watches from the common app-server thread listener

attach path, including direct starts, resumes, and app-server-observed

child or forked threads.

- Stores the `WatchRegistration` on `ThreadState`, so listener

replacement, thread teardown, idle unload, and app-server shutdown

deregister by dropping the RAII guard.

- Removed `EventMsg::SkillsUpdateAvailable`, the core watcher, and the

old core live-reload test.

- Extended the app-server skills change test to verify a cached skills

list is refreshed after a filesystem change without forcing reload.
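The RAII deregistration pattern described above is reduced here to a single-threaded sketch using `Rc`/`RefCell` and reference-counted roots. `SkillsWatcher`, `register`, and `is_watching` are simplified, hypothetical stand-ins. Only the `WatchRegistration` guard idea comes from the PR:

```rust
use std::cell::RefCell;
use std::collections::HashMap;
use std::rc::Rc;

#[derive(Debug, Default)]
pub struct SkillsWatcher {
    // Root path -> number of live registrations watching it.
    watches: RefCell<HashMap<String, usize>>,
}

impl SkillsWatcher {
    pub fn is_watching(&self, root: &str) -> bool {
        self.watches.borrow().contains_key(root)
    }
}

/// Guard owned by per-thread state; dropping it removes the thread's watches.
pub struct WatchRegistration {
    watcher: Rc<SkillsWatcher>,
    roots: Vec<String>,
}

pub fn register(watcher: &Rc<SkillsWatcher>, roots: Vec<String>) -> WatchRegistration {
    {
        let mut watches = watcher.watches.borrow_mut();
        for root in &roots {
            *watches.entry(root.clone()).or_insert(0) += 1;
        }
    }
    WatchRegistration { watcher: Rc::clone(watcher), roots }
}

impl Drop for WatchRegistration {
    fn drop(&mut self) {
        let mut watches = self.watcher.watches.borrow_mut();
        for root in &self.roots {
            if let Some(count) = watches.get_mut(root) {
                *count -= 1;
                if *count == 0 {
                    watches.remove(root);
                }
            }
        }
    }
}
```

Listener replacement, teardown, idle unload, and shutdown all reduce to dropping the guard, so no code path needs an explicit unregister call.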

## Validation

- `cargo check -p codex-core -p codex-app-server -p codex-mcp-server -p codex-rollout -p codex-rollout-trace`

- `cargo test -p codex-app-server skills_changed_notification_is_emitted_after_skill_change`

## Why

After the tool-item schemas are in place, analytics needs to emit them

from the app-server item lifecycle rather than requiring bespoke

tracking at each callsite. The reducer should also reuse the shared

thread analytics context introduced below it in the stack so later event

families do not repeat the same reducer joins or missing-state ladder.

## What changed

- Tracks tool-item completion notifications and emits the matching tool

analytics event when a terminal item arrives.

- Derives event-specific payload details for command execution, file

changes, MCP calls, dynamic tools, collaboration tools, web search, and

image generation.

- Denormalizes thread, app-server client, runtime, and subagent

provenance metadata through the shared thread analytics context.

- Adds reducer coverage for item lifecycle emission and subagent

metadata inheritance.

## Duration semantics

`duration_ms` is computed from the app-server item lifecycle timestamps:

`completed_at_ms - started_at_ms`. That makes it the duration of the

lifecycle Codex observed locally, not necessarily the upstream

provider's full execution time.

- Web search usually has a meaningful observed lifecycle because

Responses can send `response.output_item.added` before

`response.output_item.done`; in that case `started_at_ms` comes from the

added event and `completed_at_ms` comes from the done event.

- Image generation can be much less precise. In the current observed

stream, image generation often arrives only as a completed

`response.output_item.done`; when there is no earlier added event, Codex

synthesizes the started item immediately before completion, so

`duration_ms` can be `0` even though upstream image generation took

longer.

- Standalone web search and standalone image generation work is expected

to land after this stack. Those paths may introduce more direct

lifecycle events or timing points, so the current

web-search/image-generation duration semantics should be treated as the

best available item-lifecycle approximation, not the final latency

contract for those tool families.

- `execution_duration_ms` is populated only where the completed item

already carries a native execution duration; otherwise it remains `null`

while `duration_ms` still reflects the local lifecycle interval.
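The duration arithmetic above is shown as a small sketch. `ItemTiming`, `DurationFields`, and `duration_fields` are hypothetical names. Using `saturating_sub` to guard against clock skew is an assumption:

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct ItemTiming {
    pub started_at_ms: u64,
    pub completed_at_ms: u64,
    /// Present only when the completed item carries a native execution duration.
    pub native_execution_ms: Option<u64>,
}

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub struct DurationFields {
    pub duration_ms: u64,
    pub execution_duration_ms: Option<u64>,
}

pub fn duration_fields(t: ItemTiming) -> DurationFields {
    DurationFields {
        // Locally observed lifecycle interval; 0 when the start was synthesized
        // immediately before completion.
        duration_ms: t.completed_at_ms.saturating_sub(t.started_at_ms),
        execution_duration_ms: t.native_execution_ms,
    }
}
```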

## Currently placeholder / partial fields

Some fields are included in the schema for the intended steady-state

contract, but this PR does not yet populate them from real

approval/review state:

- `review_count`, `guardian_review_count`, and `user_review_count`

currently default to `0`.

- `final_approval_outcome` currently defaults to `unknown`.

- `requested_additional_permissions` and `requested_network_access`

currently default to `false`.

## Verification

- `cargo test -p codex-analytics`

---

[//]: # (BEGIN SAPLING FOOTER)

Stack created with [Sapling](https://sapling-scm.com). Best reviewed

with [ReviewStack](https://reviewstack.dev/openai/codex/pull/17090).

* #18748

* #18747

* __->__ #17090

* #17089

* #20514

## Why

`session_id` and `thread_id` are separate identities after #20437, but

app-server only surfaced `sessionId` on the `thread/start`,

`thread/resume`, and `thread/fork` response envelopes. Other

thread-bearing surfaces such as `thread/list`, `thread/read`,

`thread/started`, `thread/rollback`, `thread/metadata/update`, and

`thread/unarchive` either lacked the grouping key or forced clients to

special-case those three responses.

Making `sessionId` part of the reusable `Thread` payload gives every v2

API surface one place to expose session-tree identity.

## Mental model

1. `thread.sessionId` lives on `Thread`.

2. It is a view/runtime identity for the current live session tree, not durable stored lineage metadata.

3. When app-server has a live loaded thread, it copies the real value from core’s `session_configured.session_id`.

4. When it only has stored/unloaded data, it falls back to `thread.sessionId = thread.id`.
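Rules 3 and 4 reduce to a one-line fallback. `ThreadView` and `thread_view` are hypothetical, and the ids in the usage below are made up:

```rust
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct ThreadView {
    pub id: String,
    pub session_id: String,
}

/// Live threads report the session tree root; stored/unloaded threads fall back to their own id.
pub fn thread_view(thread_id: &str, live_session_id: Option<&str>) -> ThreadView {
    ThreadView {
        id: thread_id.to_string(),
        session_id: live_session_id.unwrap_or(thread_id).to_string(),
    }
}
```

For example, a live root thread and its sub-agent both built with `Some("sess_1")` share `session_id == "sess_1"`, while `thread_view("thr_old", None)` reports `"thr_old"`.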

## What changed

- Added `sessionId` to the v2

[`Thread`](8fc9e9b4cf/codex-rs/app-server-protocol/src/protocol/v2/thread_data.rs (L105-L109)).

- Removed the duplicate top-level `sessionId` fields from

`thread/start`, `thread/resume`, and `thread/fork`; clients should now

read `response.thread.sessionId`.

- Populated `thread.sessionId` when building live thread responses,

replaying loaded threads, and returning stored-thread summaries so the

field is present across start, resume, fork, list, read, rollback,

metadata-update, unarchive, and `thread/started` paths. See

[`load_thread_from_resume_source_or_send_internal`](8fc9e9b4cf/codex-rs/app-server/src/request_processors/thread_processor.rs (L2824-L2918))

and

[`thread_from_stored_thread`](8fc9e9b4cf/codex-rs/app-server/src/request_processors/thread_processor.rs (L3671-L3719)).

- Preserved the stored-thread fallback: if a thread has not been loaded

into a live session tree yet, `thread.sessionId` falls back to

`thread.id`; once the thread is live again, the field reports the active

session tree root.

- Regenerated the JSON/TypeScript schemas and updated the app-server

README examples to show

[`thread.sessionId`](8fc9e9b4cf/codex-rs/app-server/README.md (L306-L310))

on the thread object.

## Why

`thread/start` and `thread/resume` already return `sessionId`, but

`thread/fork` only returned the new thread. That left clients to infer

the forked thread's session identity from `thread.id`, which kept the

new `session_id` / `thread_id` split implicit at one lifecycle boundary.

Follow-up to #20437.

## What changed

- Add `sessionId` to `ThreadForkResponse`.

- Populate it from the forked session configuration.

- Regenerate the v2 JSON/TypeScript schema fixtures and update the

app-server docs/example.

- Extend the fork integration test to assert the returned `sessionId`.

## Verification

- Added coverage in `thread_fork_creates_new_thread_and_emits_started` for the new response field.

## Summary

Related to https://openai.slack.com/archives/C095U48JNL9/p1777537279707449

TLDR:

We are updating the meaning of session ids and thread ids:

* `thread_id` stays as it is now.
* `session_id` becomes an id shared by every thread under a `/root` thread (i.e. every sub-agent shares the same session id).

This PR introduces an explicit `SessionId` and threads it through the protocol/client boundary so `session_id` and `thread_id` can diverge when they need to, while preserving compatibility for older serialized `session_configured` events.
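A minimal sketch of the new id semantics, under stated assumptions: the `ThreadId`/`SessionId` newtypes, `ThreadHandle`, `start_root`, `spawn_subagent`, and the `SessionConfiguredEvent` shape are hypothetical, and it assumes a root thread's session id equals its own thread id. Sub-agents get fresh thread ids but inherit the session id, and a legacy event with no session id falls back to its thread id.

```rust
// Hypothetical id newtypes; the real codex-rs types differ.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
struct ThreadId(u64);

#[derive(Clone, Copy, Debug, PartialEq, Eq)]
struct SessionId(u64);

impl From<ThreadId> for SessionId {
    fn from(t: ThreadId) -> Self {
        SessionId(t.0)
    }
}

struct ThreadHandle {
    thread_id: ThreadId,
    session_id: SessionId,
}

/// Assumption: a root thread opens a session whose id matches its thread id.
fn start_root(thread_id: ThreadId) -> ThreadHandle {
    ThreadHandle { thread_id, session_id: thread_id.into() }
}

/// A sub-agent gets its own thread id but shares the parent's session id.
fn spawn_subagent(parent: &ThreadHandle, thread_id: ThreadId) -> ThreadHandle {
    ThreadHandle { thread_id, session_id: parent.session_id }
}

/// Hypothetical event shape: older serialized events carry no session id.
struct SessionConfiguredEvent {
    thread_id: ThreadId,
    session_id: Option<SessionId>,
}

/// Compatibility path: treat a missing session id as the thread id.
fn effective_session_id(ev: &SessionConfiguredEvent) -> SessionId {
    ev.session_id.unwrap_or_else(|| ev.thread_id.into())
}
```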

---------

Co-authored-by: Codex <noreply@openai.com>

# Overview

MCP refreshes were rebuilding active threads from fresh disk-backed config only, which dropped thread-start session overlays such as app-injected MCP servers. This keeps refreshes current with disk config while preserving the thread-local config that only the active thread knows about.
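The layer handling described here can be sketched as follows, assuming a hypothetical `ConfigLayer` tagged with a `LayerSource` and stored lowest to highest precedence (the real codex-rs config layering types differ). The rebuilt stack takes every reloadable layer from a fresh disk load, which the caller would perform for the thread's own `cwd`, and carries over only the thread's `SessionFlags` layer, so app-injected MCP servers survive the refresh.

```rust
use std::collections::BTreeMap;

/// Hypothetical layer sources.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum LayerSource {
    User,
    Project,
    SessionFlags,
}

#[derive(Clone, Debug)]
struct ConfigLayer {
    source: LayerSource,
    mcp_servers: BTreeMap<String, String>, // server name -> command
}

/// Replace reloadable layers with freshly loaded ones, keep the thread's SessionFlags layer.
/// `fresh_disk_layers` is assumed to be loaded for this thread's cwd, lowest precedence first.
fn rebuild_layers(thread_layers: &[ConfigLayer], fresh_disk_layers: Vec<ConfigLayer>) -> Vec<ConfigLayer> {
    let mut layers: Vec<ConfigLayer> = fresh_disk_layers
        .into_iter()
        .filter(|l| l.source != LayerSource::SessionFlags)
        .collect();
    // Session overlays go last so they keep the highest precedence.
    layers.extend(
        thread_layers
            .iter()
            .filter(|l| l.source == LayerSource::SessionFlags)
            .cloned(),
    );
    layers
}

/// Derive the MCP refresh payload: later (higher-precedence) layers win.
fn effective_mcp_servers(layers: &[ConfigLayer]) -> BTreeMap<String, String> {
    let mut merged = BTreeMap::new();
    for layer in layers {
        for (name, cmd) in &layer.mcp_servers {
            merged.insert(name.clone(), cmd.clone());
        }
    }
    merged
}
```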

# Changes

- Rebuild refreshed config per active thread using that thread's current `cwd`, rather than fanning out one app-server config to every thread.

- Preserve each thread's `SessionFlags` layer while replacing reloadable config layers with freshly loaded config, then derive the MCP refresh payload from the rebuilt result.

- Move MCP refresh orchestration into app-server so manual refreshes fail loudly while background refreshes remain best-effort, and route plugin-triggered refreshes through the same per-thread reload path.

- Add regression coverage for session overlays, fresh project config, plugin-derived MCP config, current requirements, and strict vs best-effort refresh behavior.
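The strict vs best-effort split can be sketched as one loop parameterized by mode. `RefreshMode`, `refresh_threads`, and the `reload` callback are hypothetical names for illustration, not the app-server's actual API; `reload` stands in for the per-thread rebuild.

```rust
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum RefreshMode {
    /// Manual refresh: surface the first failure to the caller.
    Strict,
    /// Background refresh: log failures and keep going.
    BestEffort,
}

/// Reload each thread; returns how many threads refreshed successfully.
fn refresh_threads<F>(thread_ids: &[&str], mode: RefreshMode, mut reload: F) -> Result<usize, String>
where
    F: FnMut(&str) -> Result<(), String>,
{
    let mut refreshed = 0;
    for &id in thread_ids {
        match reload(id) {
            Ok(()) => refreshed += 1,
            Err(err) => match mode {
                RefreshMode::Strict => {
                    return Err(format!("refresh failed for thread {id}: {err}"));
                }
                RefreshMode::BestEffort => eprintln!("skipping thread {id}: {err}"),
            },
        }
    }
    Ok(refreshed)
}
```

Sharing one loop keeps manual, background, and plugin-triggered refreshes on the same per-thread path; only the error policy differs.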

# Verification

- Focused Rust tests for the thread-config rebuild behavior and the deferred MCP refresh flow pass, as does `cargo test -p codex-app-server --lib`.

- Verified end to end in the Codex dev app against the locally built CLI: registered an MCP server via thread config, confirmed it could be used successfully before refresh, manually triggered an MCP refresh, and confirmed it was still available afterward.