## Summary

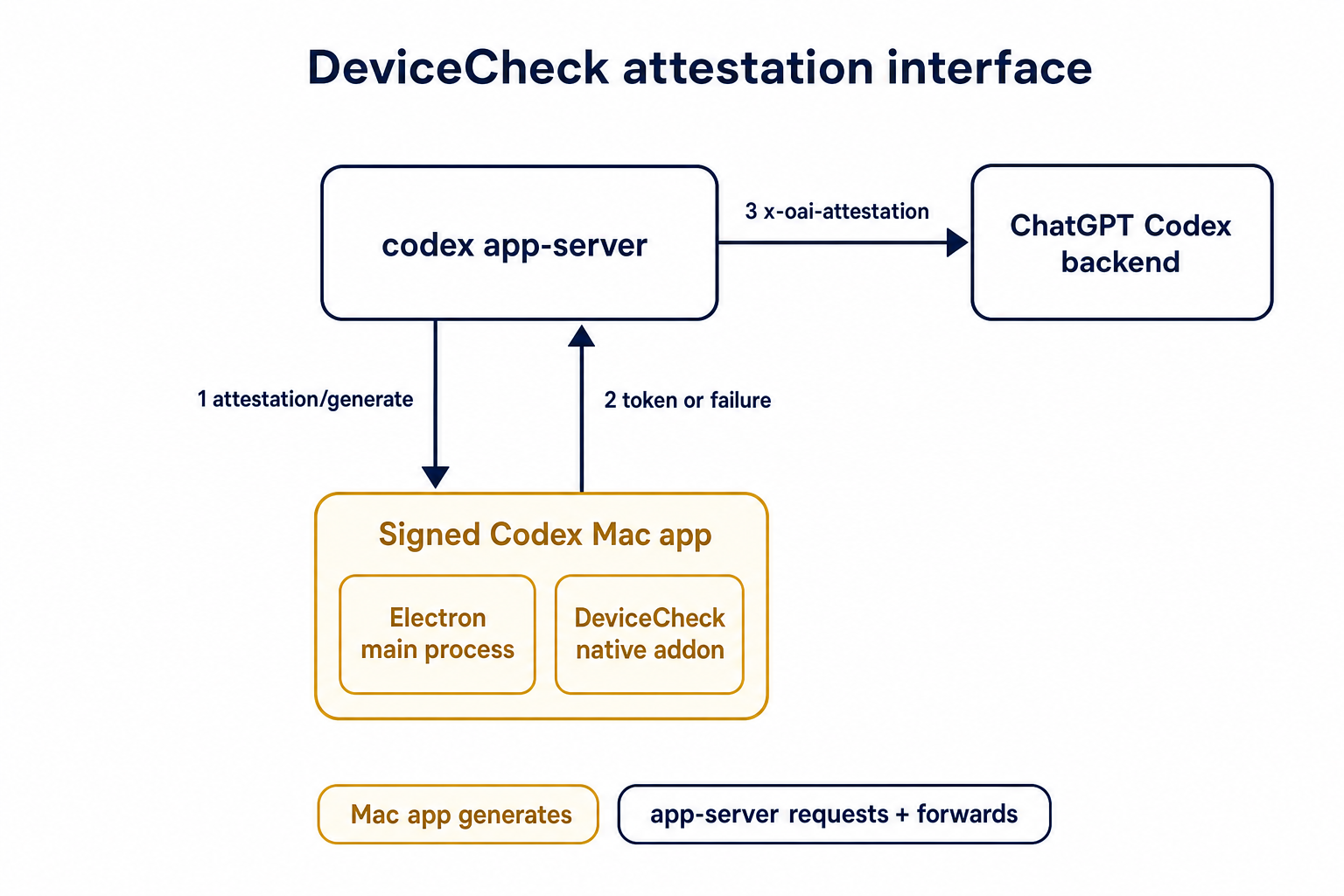

TL;DR: teaches `codex-rs` / app-server to request a desktop-provided attestation token and attach it as `x-oai-attestation` on the scoped ChatGPT Codex request paths.

## Details

This PR teaches the Codex app-server runtime how to request and attach an attestation token. It does not generate DeviceCheck tokens directly; instead, it relies on the connected desktop app to advertise that it can generate attestation and then asks that app for a fresh header value when needed.

The flow is:

1. The Codex desktop app connects to app-server.
2. During `initialize`, the app can advertise that it supports `requestAttestation`.
3. Before app-server calls selected ChatGPT Codex endpoints, it sends the internal server request `attestation/generate` to the app.
4. app-server receives a pre-encoded header value back.
5. app-server forwards that value as `x-oai-attestation` on the scoped outbound requests.
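
A minimal Rust sketch of the scoping decision in the flow above. It assumes a hypothetical `AttestationClient` trait standing in for the desktop app's `attestation/generate` handler and a hypothetical `ATTESTED_PATHS` list. The real provider in core, its types, and its exact set of scoped paths may differ. The sketch attaches `x-oai-attestation` only when the client advertised `requestAttestation` and the path is in scope, and it asks for a fresh value on every request.

```rust
use std::collections::BTreeMap;

/// Hypothetical stand-in for the desktop client that answers `attestation/generate`.
pub trait AttestationClient {
    /// Returns a pre-encoded header value, or `None` if generation failed.
    fn generate_attestation(&self) -> Option<String>;
}

/// Hypothetical list of outbound paths that should carry the header.
const ATTESTED_PATHS: &[&str] = &["/backend-api/codex/responses"];

pub struct AttestationProvider<C: AttestationClient> {
    client: C,
    /// Set from what the client advertised during `initialize`.
    supports_request_attestation: bool,
}

impl<C: AttestationClient> AttestationProvider<C> {
    pub fn new(client: C, supports_request_attestation: bool) -> Self {
        Self { client, supports_request_attestation }
    }

    /// Adds `x-oai-attestation` only for scoped paths, and only when the client opted in.
    pub fn attach(&self, path: &str, headers: &mut BTreeMap<String, String>) {
        if !self.supports_request_attestation || !ATTESTED_PATHS.contains(&path) {
            return;
        }
        // Ask for a fresh value per request instead of caching one.
        if let Some(value) = self.client.generate_attestation() {
            headers.insert("x-oai-attestation".to_string(), value);
        }
    }
}
```

When generation fails, this sketch sends the request without the header. A real implementation has to choose that failure policy explicitly.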

The code in this repo is mostly protocol and runtime plumbing: it adds the app-server request/response shape, introduces an attestation provider in core, wires that provider into Responses / compaction / realtime setup paths, and covers the intended scoping with tests. The signed macOS DeviceCheck generation remains owned by the desktop app PR.

## Related PR

- Codex desktop app implementation: https://github.com/openai/openai/pull/878649

## Validation

<details>

<summary>Tests run</summary>

```sh
cargo test -p codex-app-server-protocol
cargo test -p codex-core attestation --lib
cargo test -p codex-app-server --lib attestation
```

Also ran:

```sh
just fix -p codex-core
just fix -p codex-app-server
just fix -p codex-app-server-protocol
just fmt
just write-app-server-schema
```

</details>

<details>

<summary>E2E DeviceCheck validation</summary>

First validated the signed desktop app boundary directly: launched a packaged signed `Codex.app`, sent `attestation/generate`, decoded the returned `v1.` attestation header, and validated the extracted DeviceCheck token with `personal/jm/verify_devicecheck_token.py` using bundle ID `com.openai.codex`. Apple returned `status_code: 200` and `is_ok: true`.

Then ran the fuller app + app-server flow. The packaged `Codex.app` launched a current-branch app-server via `CODEX_CLI_PATH`, and a local MITM proxy intercepted outbound `chatgpt.com` traffic. The app-server requested `attestation/generate` from the real Electron app process, and the intercepted `/backend-api/codex/responses` traffic included `x-oai-attestation` on both routes:

```text
GET  /backend-api/codex/responses  Upgrade: websocket  x-oai-attestation: present
POST /backend-api/codex/responses  Upgrade: none       x-oai-attestation: present
```

The captured header decoded to a DeviceCheck token that also validated with Apple for `com.openai.codex` (`status_code: 200`, `is_ok: true`, team `2DC432GLL2`).

</details>

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

`ToolName::display()` made it too easy to flatten tool identity and accidentally compare rendered strings. Tool identity should stay structural until a legacy string boundary actually requires the flattened spelling.

## What

- Removes `ToolName::display()` and relies on the existing `Display` impl for messages and errors.
- Adds structural ordering for `ToolName` and uses it for sorting/deduping deferred tools.
- Carries `ToolName` through tool/sandbox plumbing, flattening only at legacy boundaries such as hook payloads, telemetry tags, and Responses tool names.
- Updates MCP normalization tests to assert `ToolName` structure instead of rendered strings.
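
The following sketch shows why structural identity matters. It uses a hypothetical `ToolName` made of an optional namespace and a name, and a hypothetical `__` separator for the flattened spelling; the real fields and separator may differ. Two different tools can render to the same string. Deriving `Ord`/`Eq` on the structure keeps them distinct when sorting and deduping.

```rust
use std::fmt;

/// Hypothetical shape: an optional namespace plus a tool name.
/// Derived `Ord` compares `namespace` first, then `name`.
#[derive(Debug, Clone, PartialEq, Eq, PartialOrd, Ord, Hash)]
pub struct ToolName {
    pub namespace: Option<String>,
    pub name: String,
}

impl ToolName {
    pub fn plain(name: &str) -> Self {
        Self { namespace: None, name: name.to_string() }
    }

    pub fn namespaced(namespace: &str, name: &str) -> Self {
        Self { namespace: Some(namespace.to_string()), name: name.to_string() }
    }
}

impl fmt::Display for ToolName {
    // Flattened spelling, meant only for messages and legacy string boundaries.
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        match &self.namespace {
            Some(ns) => write!(f, "{}__{}", ns, self.name),
            None => write!(f, "{}", self.name),
        }
    }
}

/// Sorts and dedupes deferred tools by structure, never by rendered string.
pub fn sort_dedup(mut tools: Vec<ToolName>) -> Vec<ToolName> {
    tools.sort();
    tools.dedup();
    tools
}
```

For example, `ToolName::namespaced("a", "b")` and `ToolName::plain("a__b")` both display as `a__b`, but they remain two entries after `sort_dedup`.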

## Testing

- `cargo test -p codex-mcp test_normalize_tools`

- `cargo test -p codex-core unavailable_tool`

- `just fix -p codex-protocol`

- `just fix -p codex-mcp`

- `just fix -p codex-core`

## Why

The earlier PRs add stdio transport support and the config-backed environment provider, but the feature remains inert until normal Codex entrypoints construct `EnvironmentManager` with enough context to discover `CODEX_HOME/environments.toml`. This final stack PR activates the provider while preserving the legacy `CODEX_EXEC_SERVER_URL` fallback when no environments file exists.

**Stack position:** this is PR 5 of 5. It is the product wiring PR that activates the configured environment provider added in PR 4.

## What Changed

- Thread `codex_home` into `EnvironmentManagerArgs`.
- Change `EnvironmentManager::new(...)` to load the provider from `CODEX_HOME`.
- Preserve legacy behavior by falling back to `DefaultEnvironmentProvider::from_env()` when `environments.toml` is absent.
- Make `environments.toml`-backed managers start new threads with all configured environments, default first, while keeping the legacy env-var path single-default.
- Update the app-server, TUI, exec, MCP server, connector, prompt-debug, and thread-manager-sample callsites to pass `codex_home` and handle provider-loading errors.
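
This std-only sketch shows the selection rule using a hypothetical `ProviderSource` enum and a hypothetical `select_provider` function. It leaves out the TOML parsing, validation, and error handling that `EnvironmentManager::new(...)` performs. It also takes the `CODEX_EXEC_SERVER_URL` value as a parameter rather than reading the environment, which keeps it testable.

```rust
use std::path::{Path, PathBuf};

/// Hypothetical summary of which provider gets loaded.
#[derive(Debug, PartialEq)]
pub enum ProviderSource {
    /// `CODEX_HOME/environments.toml` exists: new threads start with all
    /// configured environments, default first.
    TomlFile(PathBuf),
    /// Legacy path: single default, optionally a remote from `CODEX_EXEC_SERVER_URL`.
    LegacyEnv { exec_server_url: Option<String> },
}

pub fn select_provider(codex_home: &Path, exec_server_url: Option<String>) -> ProviderSource {
    let path = codex_home.join("environments.toml");
    if path.is_file() {
        ProviderSource::TomlFile(path)
    } else {
        // No environments file: preserve the legacy env-var behavior.
        ProviderSource::LegacyEnv { exec_server_url }
    }
}
```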

## Self-Review Notes

- The multi-environment startup path is intentionally tied to the `environments.toml` provider. Using `>1` configured environment as the only signal would also expand the legacy `CODEX_EXEC_SERVER_URL` provider, because it keeps `local` addressable alongside `remote`.
- The startup environment list is still derived inside `EnvironmentManager`; the provider only says whether its snapshot should start new threads with all configured environments.
- The thread-manager sample was updated to pass the current `ThreadManager::new(...)` installation id argument so the stack compiles under Bazel.

## Stack

- 1. https://github.com/openai/codex/pull/20663 - Add stdio exec-server listener
- 2. https://github.com/openai/codex/pull/20664 - Add stdio exec-server client transport
- 3. https://github.com/openai/codex/pull/20665 - Make environment providers own default selection
- 4. https://github.com/openai/codex/pull/20666 - Add CODEX_HOME environments TOML provider
- **5. This PR:** https://github.com/openai/codex/pull/20667 - Load configured environments from CODEX_HOME

Split from original draft: https://github.com/openai/codex/pull/20508

## Validation

- `just fmt`
- `git diff --check`
- `bazel build --config=remote --strategy=remote --remote_download_toplevel //codex-rs/thread-manager-sample:codex-thread-manager-sample`
- `bazel test --config=remote --strategy=remote --remote_download_toplevel //codex-rs/exec-server:exec-server-unit-tests`
- `bazel test --config=remote --strategy=remote --remote_download_toplevel --test_sharding_strategy=disabled --test_arg=default_thread_environment_selections_use_manager_default_id //codex-rs/core:core-unit-tests`
- `bazel test --config=remote --strategy=remote --remote_download_toplevel --test_sharding_strategy=disabled --test_arg=start_thread_uses_all_default_environments_from_codex_home //codex-rs/core:core-unit-tests`

## Documentation

This activates `CODEX_HOME/environments.toml`; user-facing documentation should be added before this stack is treated as a documented public workflow.

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

`/fast` was wired as a one-off slash command even though model metadata now exposes service tiers as catalog data. That meant adding another tier, such as a slower/cheaper tier, would require more hardcoded TUI plumbing instead of letting the model catalog drive the available commands.

This change makes service-tier commands data-driven: each advertised `service_tiers` entry becomes a `/name` command using the catalog description, while the request path sends the tier `id` only when the selected model supports it.

## What Changed

- Removed the hardcoded `/fast` slash-command variant and introduced dynamic service-tier command items in the composer and command popup.
- Added toggle behavior for service-tier commands: invoking `/name` selects that tier, and invoking it again clears the selection.
- Preserved the existing Fast-mode keybinding/status affordances by resolving the current model tier whose name is `fast`, while still sending the tier request value such as `priority`.
- Persisted service-tier selections as raw request strings so non-fast tiers can round-trip through config.
- Updated the Bedrock catalog entry to advertise fast support through `service_tiers` with `id: "priority"` and `name: "fast"`.
- Added defensive filtering in core so unsupported selected service tiers are omitted from `/responses` requests.
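
This sketch covers the data-driven behavior: command generation, toggling, and the core-side omission of unsupported tiers. It assumes a hypothetical `ServiceTier` struct that mirrors one `service_tiers` catalog entry (`id`, `name`, `description`). The function names are illustrative and are not the TUI's actual API.

```rust
/// Hypothetical mirror of one `service_tiers` catalog entry.
#[derive(Debug, Clone)]
pub struct ServiceTier {
    pub id: String,          // request value, e.g. "priority"
    pub name: String,        // command name, e.g. "fast"
    pub description: String, // shown in the command popup
}

/// One `/name` command per advertised tier, using the catalog description.
pub fn tier_commands(tiers: &[ServiceTier]) -> Vec<(String, String)> {
    tiers
        .iter()
        .map(|t| (format!("/{}", t.name), t.description.clone()))
        .collect()
}

/// Invoking `/name` selects that tier's id; invoking it again clears the selection.
pub fn toggle_tier(current: Option<String>, tier: &ServiceTier) -> Option<String> {
    if current.as_deref() == Some(tier.id.as_str()) {
        None
    } else {
        Some(tier.id.clone())
    }
}

/// Core-side filter: send the selected tier only if the selected model advertises it.
pub fn request_tier(selected: Option<&str>, model_tiers: &[ServiceTier]) -> Option<String> {
    let id = selected?;
    model_tiers.iter().any(|t| t.id == id).then(|| id.to_string())
}
```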

## Validation

- Added/updated coverage for dynamic service-tier slash command lookup, popup descriptions, composer dispatch, TUI fast toggling, and unsupported-tier omission in core request construction.
- Local tests were not run per request.

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

Some consumers expect conventional hyphenated HTTP headers. Codex already sends the session and thread IDs on outbound Responses requests, but today it only uses the underscore spellings, which makes those IDs harder to consume in systems that normalize or reject underscore header names.

Full context here: https://openai.slack.com/archives/C08KCGLSPSQ/p1778248578422369

## What changed

- `build_session_headers` now emits both `session_id` and `session-id` when a session ID is present.
- It does the same for `thread_id` and `thread-id`.
- Added regression coverage in `codex-api/tests/clients.rs` and `core/tests/suite/client.rs` so both the lower-level client tests and the end-to-end request tests assert the two header spellings are present.
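
Here is a simplified sketch of the header rule. It returns a plain `Vec` of name/value pairs rather than whatever header map type `build_session_headers` actually uses, so the signature shown is hypothetical.

```rust
/// Hypothetical simplified `build_session_headers`: emit each ID under both the
/// legacy underscore name and the conventional hyphenated name.
pub fn build_session_headers(
    session_id: Option<&str>,
    thread_id: Option<&str>,
) -> Vec<(String, String)> {
    let mut headers = Vec::new();
    for (base, value) in [("session", session_id), ("thread", thread_id)] {
        if let Some(v) = value {
            headers.push((format!("{}_id", base), v.to_string())); // e.g. session_id
            headers.push((format!("{}-id", base), v.to_string())); // e.g. session-id
        }
    }
    headers
}
```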

## Test plan

- Added header assertions in `codex-api/tests/clients.rs`.
- Added request-header assertions in `core/tests/suite/client.rs` for both the `/v1/responses` and `/api/codex/responses` request paths.

## Summary

- enable `apply_patch_freeform` by default in the feature registry

## Why

- make the freeform `apply_patch` tool available by default when model metadata does not explicitly opt into another mode

## Validation

- `just fmt`

- did not run tests

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

`service_tier` in `config.toml` and profile config was still modeled as an enum, which blocked newer or experimental service tier IDs even though the runtime paths already carry string IDs.

This change makes the TOML-facing config accept string service tier IDs directly while keeping the legacy `fast` alias behavior by normalizing it to the request value `priority`.

## What Changed

- change the TOML-facing `service_tier` fields in global and profile config to `Option<String>`
- keep config-load normalization so legacy `fast` still resolves to `priority`
- persist resolved service tier strings directly in config locks so arbitrary IDs round-trip cleanly
- regenerate the config schema and add config coverage for arbitrary string IDs plus legacy `fast` normalization
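
The normalization rule fits in a single function, shown here under a hypothetical name. The sketch assumes an exact, case-sensitive match on `fast`, which the description does not specify. Under it, `service_tier = "fast"` in `config.toml` resolves to `priority` and every other string passes through unchanged.

```rust
/// Hypothetical config-load normalization: keep arbitrary tier IDs as strings,
/// but map the legacy `fast` alias to the request value `priority`.
pub fn normalize_service_tier(raw: Option<String>) -> Option<String> {
    raw.map(|tier| if tier == "fast" { "priority".to_string() } else { tier })
}
```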

## Verification

- added config tests for arbitrary string service tiers and legacy `fast` normalization
- ran `just write-config-schema`
- CI

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

API-key-auth remote compaction requests should not inherit `service_tier` from normal `/responses` turns. This path needs to match API auth expectations, while ChatGPT-auth remote compaction should keep reusing the shared request fields that still apply there.

This change keeps the decision inline in `codex-rs/core/src/compact_remote.rs` only. Under API key auth, the classic remote `/responses/compact` path now omits `service_tier`; under ChatGPT auth, it keeps reusing the configured tier.

`codex-rs/core/src/compact_remote_v2.rs` is unchanged. The remote compaction parity coverage and snapshots were updated to assert the API-key omission and preserve the ChatGPT-auth behavior.
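
The inline decision in `compact_remote.rs` reduces to the following rule. The `AuthMode` enum and the function name are hypothetical.

```rust
/// Hypothetical auth modes relevant to remote compaction.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum AuthMode {
    ApiKey,
    ChatGpt,
}

/// Tier sent on the classic remote `/responses/compact` request.
pub fn compact_service_tier(auth: AuthMode, configured: Option<&str>) -> Option<String> {
    match auth {
        // API-key auth: never inherit the normal turn's service tier.
        AuthMode::ApiKey => None,
        // ChatGPT auth: keep reusing the configured tier.
        AuthMode::ChatGpt => configured.map(str::to_string),
    }
}
```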

## Testing

- Updated remote compaction parity coverage in `codex-rs/core/tests/suite/compact_remote.rs` and the corresponding snapshots.

## Why

We want terminal tool review analytics, but the reducer should not stamp review timing from its own wall clock.

This PR plumbs review timing through the real protocol and app-server seams so downstream analytics can consume the emitter's timestamps directly. Guardian reviews keep their enriched `started_at` / `completed_at` analytics fields by deriving those legacy second-based values from the same protocol-native millisecond lifecycle timestamps, rather than sampling a separate analytics clock.

## What changed

- add `started_at_ms` to user approval request payloads
- add `started_at_ms` / `completed_at_ms` to guardian review notifications
- preserve Guardian review `started_at` / `completed_at` enrichment from the protocol-native timing source
- stamp typed `ServerResponse` analytics facts with app-server-observed `completed_at_ms`
- thread the new timing fields through core, protocol, app-server, TUI, and analytics fixtures
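
This sketch derives the legacy second-based fields from the millisecond timestamps, using hypothetical struct names. It truncates with integer division. The description does not say whether the real code truncates or rounds, so that choice is an assumption.

```rust
/// Hypothetical protocol-native lifecycle timestamps (unix epoch milliseconds).
pub struct ReviewTiming {
    pub started_at_ms: u64,
    pub completed_at_ms: Option<u64>,
}

/// Hypothetical legacy analytics fields (unix epoch seconds).
pub struct LegacyReviewTiming {
    pub started_at: u64,
    pub completed_at: Option<u64>,
}

/// Derives seconds from the same millisecond source; no second clock is sampled.
pub fn to_legacy(timing: &ReviewTiming) -> LegacyReviewTiming {
    LegacyReviewTiming {
        // Integer division truncates the sub-second remainder.
        started_at: timing.started_at_ms / 1000,
        completed_at: timing.completed_at_ms.map(|ms| ms / 1000),
    }
}
```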

## Verification

- `cargo test -p codex-app-server outgoing_message --manifest-path codex-rs/Cargo.toml`
- `cargo test -p codex-app-server-protocol guardian --manifest-path codex-rs/Cargo.toml`
- `cargo test -p codex-tui guardian --manifest-path codex-rs/Cargo.toml`
- `cargo test -p codex-analytics analytics_client_tests --manifest-path codex-rs/Cargo.toml`

---

[//]: # (BEGIN SAPLING FOOTER)

Stack created with [Sapling](https://sapling-scm.com). Best reviewed with [ReviewStack](https://reviewstack.dev/openai/codex/pull/21434).

* #18748

* __->__ #21434

* #18747

* #17090

* #17089

* #20514

## Why

Remote compaction v2 consumes a normal Responses stream, but that compaction-specific stream consumer dropped the `response.completed` id. As a result, the `responses_websocket_response_processed` lifecycle notification was emitted for normal turn sampling but not after a v2 remote compaction response was fully processed.

## What changed

- Return the completed response id alongside the v2 `context_compaction` output item.
- After v2 compacted history is installed, send `response.processed` through the same websocket session when the feature is enabled.
- Add websocket regression coverage for a remote compaction v2 request followed by `response.processed`.

## Verification

- `cargo test -p codex-core --test all responses_websocket_sends_response_processed_after_remote_compaction_v2 -- --nocapture`
- `cargo test -p codex-core collect_context_compaction_output_accepts_additional_output_items -- --nocapture`

## Why

After stdio transports and provider-owned defaults exist, Codex needs a config-backed provider that can describe more than the single legacy `CODEX_EXEC_SERVER_URL` remote. This PR adds that provider without activating it in product entrypoints yet, keeping parser/validation review separate from runtime wiring.

**Stack position:** this is PR 4 of 5. It builds on PR 3's provider/default model and adds the `environments.toml` provider used by PR 5.

## What Changed

- Add `environment_toml.rs` as the TOML-specific home for parsing, validation, and provider construction.
- Keep the TOML schema/provider structs private; the public constructor added here is `EnvironmentManager::from_codex_home(...)`.
- Add `TomlEnvironmentProvider`, including validation for:
  - reserved ids such as `local` and `none`
  - duplicate ids
  - unknown explicit defaults
  - empty programs or URLs
  - exactly one of `url` or `program` per configured environment
- Support websocket environments with `url = "ws://..."` / `wss://...`.
- Support stdio-command environments with `program = "..."`.
- Add helpers to load `environments.toml` from `CODEX_HOME`, but do not wire entrypoints to call them yet.
- Add the `toml` dependency for parsing.
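
This std-only sketch applies the validation rules listed above to a hypothetical, already-parsed `EnvironmentEntry`. The real TOML structs are private and may look different. Two behaviors here are assumptions: whitespace-only values count as empty, and only configured ids are accepted as the explicit default.

```rust
use std::collections::HashSet;

/// Hypothetical, already-parsed form of one `environments.toml` entry.
pub struct EnvironmentEntry {
    pub id: String,
    pub url: Option<String>,     // websocket: "ws://..." or "wss://..."
    pub program: Option<String>, // stdio command
}

const RESERVED_IDS: &[&str] = &["local", "none"];

/// Returns the first violated rule as an error message.
pub fn validate_environments(entries: &[EnvironmentEntry], default_id: Option<&str>) -> Result<(), String> {
    let mut seen: HashSet<&str> = HashSet::new();
    for entry in entries {
        let id = entry.id.as_str();
        if RESERVED_IDS.contains(&id) {
            return Err(format!("environment id `{}` is reserved", id));
        }
        if !seen.insert(id) {
            return Err(format!("duplicate environment id `{}`", id));
        }
        // Exactly one of `url` or `program`, and it must not be empty
        // (treating whitespace-only as empty is an assumption).
        match (&entry.url, &entry.program) {
            (Some(value), None) | (None, Some(value)) => {
                if value.trim().is_empty() {
                    return Err(format!("environment `{}` has an empty url or program", id));
                }
            }
            _ => return Err(format!("environment `{}` must set exactly one of `url` or `program`", id)),
        }
    }
    if let Some(default_id) = default_id {
        // Assumption: only configured ids are accepted as an explicit default.
        if !seen.contains(default_id) {
            return Err(format!("unknown default environment `{}`", default_id));
        }
    }
    Ok(())
}
```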

## Stack

- 1. https://github.com/openai/codex/pull/20663 - Add stdio exec-server listener
- 2. https://github.com/openai/codex/pull/20664 - Add stdio exec-server client transport
- 3. https://github.com/openai/codex/pull/20665 - Make environment providers own default selection
- **4. This PR:** https://github.com/openai/codex/pull/20666 - Add CODEX_HOME environments TOML provider
- 5. https://github.com/openai/codex/pull/20667 - Load configured environments from CODEX_HOME

Split from original draft: https://github.com/openai/codex/pull/20508

## Validation

Not run locally; this was split out of the original draft stack.

## Documentation

This introduces the config shape for `environments.toml`; user-facing documentation should be added before this stack is treated as a documented public workflow.

---------

Co-authored-by: Codex <noreply@openai.com>

Route `view_image` through selected environments so image reads use the selected turn environment and cwd, with schema exposure limited to multi-environment toolsets.

Co-authored-by: Codex <noreply@openai.com>

Remove the remote thread-store backend and checked-in protobuf artifacts. We've moved these into another crate that links against this one.

Also remove the config settings for thread store backend selection, since we'll instead pass an instantiated thread store into the core-api crate's main entrypoint.

Add Codex config for static trace span attributes and structured W3C tracestate field upserts. The config flows through OtelSettings so callers can attach trace metadata without touching every span call site.

Apply span attributes with an SDK span processor so every exported trace span carries the configured metadata. Model tracestate as nested member fields so configured keys can be upserted while unrelated propagated state in the same member is preserved.

Validate configured tracestate before installing provider-global state, including header-unsafe values the SDK does not reject by itself. This keeps Codex from propagating malformed trace context from config.

Update the config schema, public docs, and OTLP loopback coverage for config parsing, span export, propagation, and invalid-header rejection.
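
This sketch illustrates the upsert and validation steps. The value check follows the W3C Trace Context `tracestate` rules for a list-member value: printable ASCII 0x20 to 0x7E excluding `,` and `=`, at most 256 characters, and no trailing space. As that spec requires, an updated member moves to the front of the list. The nested `field:value;field:value` encoding inside a member is hypothetical, as are the function names; the description does not say how Codex encodes nested member fields. Key validation is omitted.

```rust
/// W3C tracestate list-member value check.
pub fn is_valid_member_value(v: &str) -> bool {
    let ok_char = |b: u8| (0x20..=0x7e).contains(&b) && b != b',' && b != b'=';
    !v.is_empty() && v.len() <= 256 && v.bytes().all(ok_char) && !v.ends_with(' ')
}

/// Upserts `fields` into member `key`, preserving unrelated fields in that member
/// and all other members. Hypothetical nested encoding: `field:value;field:value`.
pub fn upsert_member_fields(tracestate: &str, key: &str, fields: &[(&str, &str)]) -> Result<String, String> {
    let mut members: Vec<(String, String)> = tracestate
        .split(',')
        .map(str::trim)
        .filter(|m| !m.is_empty())
        .filter_map(|m| m.split_once('=').map(|(k, v)| (k.to_string(), v.to_string())))
        .collect();
    // Pull out the existing member (if any) so it can be rebuilt and moved to the front.
    let existing = match members.iter().position(|m| m.0 == key) {
        Some(i) => members.remove(i).1,
        None => String::new(),
    };
    let mut nested: Vec<(String, String)> = existing
        .split(';')
        .filter(|f| !f.is_empty())
        .filter_map(|f| f.split_once(':').map(|(k, v)| (k.to_string(), v.to_string())))
        .collect();
    for &(field, value) in fields {
        match nested.iter_mut().find(|entry| entry.0 == field) {
            Some(entry) => entry.1 = value.to_string(),
            None => nested.push((field.to_string(), value.to_string())),
        }
    }
    let value = nested.iter().map(|(k, v)| format!("{}:{}", k, v)).collect::<Vec<_>>().join(";");
    // Reject header-unsafe values before they can be propagated.
    if !is_valid_member_value(&value) {
        return Err(format!("invalid tracestate value for member `{}`", key));
    }
    members.insert(0, (key.to_string(), value));
    Ok(members.iter().map(|(k, v)| format!("{}={}", k, v)).collect::<Vec<_>>().join(","))
}
```

For example, upserting `env` and `run` into `vendor=abc,codex=env:dev;team:x` yields `codex=env:prod;team:x;run:7,vendor=abc`. The `team` field and the `vendor` member survive unchanged.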

## Summary

`cargo test` runs both standard Rust tests and doctests. It turns out that doctest discovery is fairly slow, and it's a cost you pay even for crates that don't include any doctests.

This PR disables doctests with `doctest = false` for crates that lack any doctests.

For the collection of crates below, this speeds up test execution by more than 4x.

E.g., before this PR:

```
Benchmark 1: cargo test -p codex-utils-absolute-path -p codex-utils-cache -p codex-utils-cli -p codex-utils-home-dir -p codex-utils-output-truncation -p codex-utils-path -p codex-utils-string -p codex-utils-template -p codex-utils-elapsed -p codex-utils-json-to-toml
  Time (mean ± σ):      1.849 s ±  4.455 s    [User: 0.752 s, System: 1.367 s]
  Range (min … max):    0.418 s … 14.529 s    10 runs
```

And after:

```
Benchmark 1: cargo test -p codex-utils-absolute-path -p codex-utils-cache -p codex-utils-cli -p codex-utils-home-dir -p codex-utils-output-truncation -p codex-utils-path -p codex-utils-string -p codex-utils-template -p codex-utils-elapsed -p codex-utils-json-to-toml
  Time (mean ± σ):     428.6 ms ±   6.9 ms    [User: 187.7 ms, System: 219.7 ms]
  Range (min … max):   418.0 ms … 436.8 ms    10 runs
```

For a single crate, the speedup is more than 2x. Before:

```
Benchmark 1: cargo test -p codex-utils-string
  Time (mean ± σ):     491.1 ms ±   9.0 ms    [User: 229.8 ms, System: 234.9 ms]
  Range (min … max):   480.9 ms … 512.0 ms    10 runs
```

And after:

```
Benchmark 1: cargo test -p codex-utils-string
  Time (mean ± σ):     213.9 ms ±   4.3 ms    [User: 112.8 ms, System: 84.0 ms]
  Range (min … max):   206.8 ms … 221.0 ms    13 runs
```
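
Working from the reported means, the two speedups are:

```math
\text{speedup}_{\text{10 crates}} = \frac{1849\ \text{ms}}{428.6\ \text{ms}} \approx 4.31
\qquad
\text{speedup}_{\texttt{codex-utils-string}} = \frac{491.1\ \text{ms}}{213.9\ \text{ms}} \approx 2.30
```

The multi-crate "before" mean has a large spread (σ = 4.455 s, range 0.418 s to 14.529 s). Its ratio is therefore more sensitive to run-to-run variance than the single-crate one.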

Co-authored-by: Codex <noreply@openai.com>

- Route ThreadManager rollout-path resume/fork through ThreadStore history reads.
- Add in-memory store coverage proving path-addressed reads are used.

This isn't strictly necessary for the ThreadStore migration, since these ThreadManager methods _only_ work for path-based lookups, but I'm trying to migrate all the rollout recorder callsites to use the ThreadStore where possible for consistency.

- Route live thread renames through `ThreadStore` metadata updates.
- Read resumed thread names from store metadata, with the legacy local fallback preserved in the store.

## Why

This is the next stacked step after deleting the tool-handler kind indirection. Specs should come from the registered handlers themselves so registry construction has a single source of truth for handler behavior and exposed tool definitions.

## What changed

- Added `ToolHandler::spec()` plus handler-provided parallel/code-mode metadata, and made `ToolRegistryBuilder::register_handler` automatically collect specs from registered handlers.
- Moved builtin tool spec construction into the corresponding handlers and their adjacent `_spec` modules, including shell, unified exec, apply patch, view image, request plugin install, tool search, MCP resource, goals, planning, permissions, agent jobs, and multi-agent tools.
- Reworked configurable handlers to receive their tool-building options through constructors, with non-optional handler options where the handler is always spec-backed. Shell fallback handlers keep an explicit no-spec mode because they are also registered as hidden dispatch aliases.
- Kept `CodeModeExecuteHandler` on the explicit configured wrapper so the code-mode exec spec can still be built from the nested registry.
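
A minimal sketch of handler-owned specs with hypothetical `ToolSpec`, `ToolHandler`, and `ToolRegistryBuilder` shapes. The real trait also carries code-mode metadata, and the real builder API may differ. Registering a handler collects its spec in the same step. A handler that returns no spec models the hidden shell dispatch aliases described above.

```rust
/// Hypothetical minimal spec type.
#[derive(Debug, Clone, PartialEq)]
pub struct ToolSpec {
    pub name: String,
    pub description: String,
}

/// Each handler reports its own spec; `None` models a hidden dispatch alias.
pub trait ToolHandler {
    fn name(&self) -> &str;
    fn spec(&self) -> Option<ToolSpec>;
    fn supports_parallel(&self) -> bool {
        false
    }
}

#[derive(Default)]
pub struct ToolRegistryBuilder {
    handlers: Vec<Box<dyn ToolHandler>>,
    specs: Vec<ToolSpec>,
}

impl ToolRegistryBuilder {
    /// Registering a handler also collects its spec: one source of truth.
    pub fn register_handler(&mut self, handler: Box<dyn ToolHandler>) {
        if let Some(spec) = handler.spec() {
            self.specs.push(spec);
        }
        self.handlers.push(handler);
    }

    pub fn specs(&self) -> &[ToolSpec] {
        &self.specs
    }

    pub fn handler_names(&self) -> Vec<&str> {
        self.handlers.iter().map(|h| h.name()).collect()
    }
}

/// Hypothetical handler that receives its options through its constructor.
pub struct ShellHandler {
    expose_spec: bool,
}

impl ShellHandler {
    pub fn new(expose_spec: bool) -> Self {
        Self { expose_spec }
    }
}

impl ToolHandler for ShellHandler {
    fn name(&self) -> &str {
        "shell"
    }

    fn spec(&self) -> Option<ToolSpec> {
        self.expose_spec.then(|| ToolSpec {
            name: "shell".into(),
            description: "Run a shell command".into(),
        })
    }
}
```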

## Verification

- `cargo check -p codex-core`

- `cargo test -p codex-core tools::spec_plan::tests`

- `cargo test -p codex-core tools::spec::tests`

- `cargo test -p codex-core tools::handlers::multi_agents_spec::tests`

- `RUST_MIN_STACK=16777216 cargo test -p codex-core tools::handlers::multi_agents::tests`

- `cargo test -p codex-core tools::handlers::apply_patch::tests`

- `cargo test -p codex-core tools::handlers::unified_exec::tests`

- `just fix -p codex-core`

- `git diff --check`

## Why

App-server config writes were leaving existing threads partially stale. After a config mutation, the app-server told each live thread to run `Op::ReloadUserConfig`, but that path only re-read the user `config.toml` layer. Settings that came from the app-server's materialized config snapshot did not propagate to existing threads until restart.

This change also prevents filesystem access from `core` for CCA.

## What changed

- add `CodexThread::refresh_runtime_config()` and `Session::refresh_runtime_config()` so the app-server can push a freshly rebuilt config snapshot into a live thread
- rebuild the latest config with each thread's `cwd` after config mutations, then refresh the thread from that snapshot instead of asking it to reload only `config.toml`
- keep session-static settings unchanged during refresh, while updating runtime-refreshable state such as the config layer stack, `tool_suggest`, and derived hook/plugin/skill state
- keep `reload_user_config_layer()` as the file-backed fallback for legacy local reload flows, but route the shared refresh logic through the new runtime refresh path
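
This sketch shows the refresh semantics. The hypothetical `ConfigSnapshot` holds two session-static fields (`model`, `notify`) and two runtime-refreshable ones (`tool_suggest`, plus `hooks` as a stand-in for derived hook/plugin/skill state). The session only applies a snapshot it is handed and never reads files itself.

```rust
/// Hypothetical split between session-static and runtime-refreshable settings.
#[derive(Debug, Clone, PartialEq)]
pub struct ConfigSnapshot {
    pub model: String,       // session-static
    pub notify: Vec<String>, // session-static
    pub tool_suggest: bool,  // runtime-refreshable
    pub hooks: Vec<String>,  // runtime-refreshable (derived state stand-in)
}

pub struct Session {
    config: ConfigSnapshot,
}

impl Session {
    pub fn new(config: ConfigSnapshot) -> Self {
        Self { config }
    }

    /// Applies a freshly rebuilt snapshot pushed by the app-server.
    pub fn refresh_runtime_config(&mut self, fresh: &ConfigSnapshot) {
        // Runtime-refreshable state is replaced...
        self.config.tool_suggest = fresh.tool_suggest;
        self.config.hooks = fresh.hooks.clone();
        // ...while session-static settings such as `model` and `notify` are kept.
    }

    pub fn config(&self) -> &ConfigSnapshot {
        &self.config
    }
}
```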

## Testing

- add a session test that verifies `refresh_runtime_config()` rebuilds hooks from refreshed config
- add a session test that verifies runtime-refreshable fields update while session-static settings like `model` and `notify` stay unchanged

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

This PR removes the synthetic `HashMap<String, ToolInfo>` keys from MCP tool discovery. `McpConnectionManager::list_all_tools()` now returns normalized `Vec<ToolInfo>`, and downstream code derives identity from `ToolInfo::canonical_tool_name()`.

The motivation is to keep model-visible tool identity on `ToolName`/`ToolInfo` instead of parallel string map keys, so future namespace changes do not have to preserve otherwise-unused lookup keys.

## Changes

- Rename the MCP normalization path from `qualify_tools` to `normalize_tools_for_model` and return tool values directly.
- Flow MCP tool lists through connectors, plugin injection, router/spec building, code mode, and tool search as vectors/slices.
- Keep direct/deferred subtraction local to `mcp_tool_exposure`, using `ToolName` values.
- Update tests to compare `ToolName` instances where MCP identity matters.
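
This sketch performs the direct/deferred subtraction on `ToolName` values instead of string map keys. `ToolInfo` here is a hypothetical two-field stand-in. Deriving the canonical name from the server namespace plus the tool name is an assumption.

```rust
use std::collections::HashSet;

/// Hypothetical structural tool identity.
#[derive(Debug, Clone, PartialEq, Eq, Hash)]
pub struct ToolName {
    pub namespace: Option<String>,
    pub name: String,
}

/// Hypothetical two-field stand-in for a normalized MCP tool.
#[derive(Debug, Clone)]
pub struct ToolInfo {
    pub server: String,
    pub tool: String,
}

impl ToolInfo {
    /// Assumption: the canonical name is the server namespace plus the tool name.
    pub fn canonical_tool_name(&self) -> ToolName {
        ToolName { namespace: Some(self.server.clone()), name: self.tool.clone() }
    }
}

/// Tools not exposed directly are deferred; the comparison uses `ToolName` values.
pub fn deferred_tools(all: &[ToolInfo], direct: &[ToolName]) -> Vec<ToolInfo> {
    let direct: HashSet<&ToolName> = direct.iter().collect();
    all.iter()
        .filter(|tool| !direct.contains(&tool.canonical_tool_name()))
        .cloned()
        .collect()
}
```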

## Validation

- `cargo test -p codex-mcp test_normalize_tools`

- `cargo test -p codex-core mcp_tool_exposure`

- `cargo test -p codex-core direct_mcp_tools_register_namespaced_handlers`
- `cargo test -p codex-core search_tool_registers_namespaced_mcp_tool_aliases`

- `just fix -p codex-mcp`

- `just fix -p codex-core`

## Why

Follow-up to #21180: turn diffs are operation-backed now, but a failed `apply_patch` can still leave exact filesystem mutations behind. For example, a move can write the destination file before failing to remove the source. Treating the whole call as unknowable then drops a change that Codex actually knows happened, so the emitted turn diff can drift from the workspace.

## What changed

- [`apply-patch`](f55724e027/codex-rs/apply-patch/src/lib.rs (L248-L345)) now returns `ApplyPatchFailure` with the exact committed prefix accumulated before an error. If a write failure may already have mutated the target, the delta is marked inexact instead of being reused blindly.
- Move handling now records the destination write before attempting source removal, so a partially failed move can still report the destination file that definitely landed ([code](f55724e027/codex-rs/apply-patch/src/lib.rs (L463-L521))).
- [`ApplyPatchRuntime`](f55724e027/codex-rs/core/src/tools/runtimes/apply_patch.rs (L49-L67)) now accumulates committed deltas across attempts and forwards them even when the visible tool result is failed or sandbox-denied ([runtime path](f55724e027/codex-rs/core/src/tools/runtimes/apply_patch.rs (L223-L250)), [event path](f55724e027/codex-rs/core/src/tools/events.rs (L215-L225))).
- `TurnDiffTracker` now consumes committed exact deltas rather than only fully successful patches; exact-empty failures leave the aggregate unchanged, while inexact deltas still invalidate it.
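
This sketch models the aggregation rules described above. A hypothetical `CommittedDelta` enum records final contents per path (`None` meaning deleted) instead of rendered diffs. The `TurnDiffTracker` here is an illustrative stand-in, not the real type.

```rust
use std::collections::BTreeMap;

/// Hypothetical committed delta reported by a possibly failed patch.
pub enum CommittedDelta {
    /// Changes known to have landed: path -> new contents (`None` = deleted).
    Exact(Vec<(String, Option<String>)>),
    /// A write may have partially mutated a file; the result is unknown.
    Inexact,
}

pub struct TurnDiffTracker {
    /// `None` once an inexact delta has invalidated the aggregate.
    files: Option<BTreeMap<String, Option<String>>>,
}

impl TurnDiffTracker {
    pub fn new() -> Self {
        Self { files: Some(BTreeMap::new()) }
    }

    pub fn on_delta(&mut self, delta: CommittedDelta) {
        match delta {
            CommittedDelta::Exact(changes) => {
                if let Some(files) = self.files.as_mut() {
                    // An exact-empty delta from a failed call leaves the aggregate unchanged.
                    for (path, contents) in changes {
                        files.insert(path, contents);
                    }
                }
            }
            // Never publish a guessed diff.
            CommittedDelta::Inexact => self.files = None,
        }
    }

    pub fn aggregate(&self) -> Option<&BTreeMap<String, Option<String>>> {
        self.files.as_ref()
    }
}
```

A failed move that already wrote its destination would report `Exact(vec![("dst.txt".into(), Some(..))])`. The destination stays in the aggregate even though the tool call as a whole failed.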

## Verification

- Added a regression test covering a failed move that still emits the committed destination diff: [`apply_patch_failed_move_preserves_committed_destination_diff`](f55724e027/codex-rs/core/tests/suite/apply_patch_cli.rs (L1517-L1586)).
- Kept explicit coverage that an inexact delta clears the aggregate instead of publishing a guessed diff: [`apply_patch_clears_aggregated_diff_after_inexact_delta`](f55724e027/codex-rs/core/tests/suite/apply_patch_cli.rs (L1589-L1655)).

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

- replace filesystem-based turn diff tracking with an operation-backed accumulator
- preserve enough verified apply_patch state to render move-overwrite cases correctly
- keep the turn/diff/updated contract intact while removing remote-only turn-diff test skips

This assumes that no third-party services rely on the output format of `apply_patch`.

## Why

For the CCA file system isolation push.

---------

Co-authored-by: Codex <noreply@openai.com>

## DISCLAIMER

This is experimental, and no production service should rely on it.

## Why

Built-in MCPs are product-owned runtime capabilities, but they were previously flattened into the same config-backed stdio path as user-configured servers. That made them depend on a hidden `codex builtin-mcp` re-exec path, exposed them through config-oriented CLI flows, and erased distinctions the runtime needs to preserve, most notably whether an MCP call should count as external context for memory-mode pollution.

## What changed

- Model product-owned built-ins separately from config-backed MCP servers via `BuiltinMcpServer` and `EffectiveMcpServer`.
- Launch built-ins in process through a reusable async transport instead of the hidden `builtin-mcp` stdio subcommand.
- Keep config-oriented CLI operations such as `codex mcp list/get/login/logout` scoped to configured servers, while merging built-ins only into the effective runtime server set.
- Retain server metadata after launch so parallel-tool support and context classification come from the live server set; built-in `memories` is now classified as local Codex state rather than external context.
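
This sketch shows the split between configured and built-in servers. The enum shapes are hypothetical even though they reuse the PR's type names. Treating every configured server as external context is an assumption made for illustration.

```rust
/// Hypothetical product-owned built-in servers.
#[derive(Debug, Clone, Copy, PartialEq)]
pub enum BuiltinMcpServer {
    Memories,
}

/// Hypothetical effective runtime server: configured or built-in.
#[derive(Debug, Clone)]
pub enum EffectiveMcpServer {
    /// User-configured server from config.
    Configured { name: String },
    /// Product-owned server launched in process.
    Builtin(BuiltinMcpServer),
}

impl EffectiveMcpServer {
    pub fn name(&self) -> &str {
        match self {
            EffectiveMcpServer::Configured { name } => name.as_str(),
            EffectiveMcpServer::Builtin(BuiltinMcpServer::Memories) => "memories",
        }
    }

    /// Whether a call should mark the thread's memory mode as polluted.
    pub fn counts_as_external_context(&self) -> bool {
        match self {
            // Assumption: configured servers are treated as external context.
            EffectiveMcpServer::Configured { .. } => true,
            // Built-in `memories` is local Codex state.
            EffectiveMcpServer::Builtin(BuiltinMcpServer::Memories) => false,
        }
    }
}

/// CLI config commands would see only `configured`; the runtime sees both.
pub fn effective_servers(configured: &[String], builtins: &[BuiltinMcpServer]) -> Vec<EffectiveMcpServer> {
    configured
        .iter()
        .map(|name| EffectiveMcpServer::Configured { name: name.clone() })
        .chain(builtins.iter().map(|b| EffectiveMcpServer::Builtin(*b)))
        .collect()
}
```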

## Test plan

- `cargo test -p codex-mcp`

- `cargo test -p codex-core --test suite builtin_memories_mcp_call_does_not_mark_thread_memory_mode_polluted_when_configured`

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

Reverts #20689 to restore the previous optional state DB plumbing. The conflict resolution keeps the newer installation ID and session/thread identity changes that landed after #20689, while removing the mandatory state DB and agent graph store dependency from ThreadManager construction.

## What changed

- Restored `Option<StateDbHandle>` through app-server, MCP server, prompt debug, and test entry points.
- Removed the `codex-core` dependency on `codex-agent-graph-store` and reverted descendant lookup back to the existing state DB path when available.
- Kept newer `installation_id` forwarding by passing it beside the optional DB handle.
- Kept local thread-name updates working when the optional state DB handle is absent.

## Validation

- `git diff --check`

- `cargo test -p codex-thread-store`

- `cargo test -p codex-state -p codex-rollout -p codex-app-server-protocol`
- Attempted `env CARGO_INCREMENTAL=0 cargo test -p codex-core -p codex-app-server -p codex-app-server-client -p codex-mcp-server -p codex-thread-manager-sample -p codex-tui`; blocked locally by a rustc ICE while compiling `v8 v146.4.0` with `rustc 1.93.0 (254b59607 2026-01-19)` on `aarch64-apple-darwin`.

## Summary

Add `openai-developers@openai-curated` to `TOOL_SUGGEST_DISCOVERABLE_PLUGIN_ALLOWLIST` so the OpenAI Developers plugin can be surfaced through tool suggestions once it is available in the Built by OpenAI marketplace.

Update the discoverable plugin test fixture to assert the plugin is returned from the curated marketplace allowlist path.

## Validation

- `cargo fmt --check` passed; rustfmt emitted the existing stable-channel warnings about `imports_granularity`.
- `cargo test -p codex-core list_tool_suggest_discoverable_plugins_returns_uninstalled_curated_plugins` passed.

## Why

The spec split in the parent PR still left an intermediate registry plan that recorded `ToolHandlerKind` values and translated them into concrete handlers later. That kept tool registration dependent on static enum bookkeeping instead of registering handlers from the same code that assembles their specs.

## What Changed

- Make `build_tool_registry_builder` register concrete handlers directly while adding specs.
- Add small `ToolRegistryBuilder` helpers for spec augmentation and nested code-mode inspection.
- Remove `ToolHandlerKind`, `ToolHandlerSpec`, and `ToolRegistryPlan`.
- Update spec-plan tests to assert against the built `ToolRegistry` instead of static handler descriptors.

## Validation

- `cargo check -p codex-core`

- `cargo test -p codex-core tools::spec_plan::tests`

- `cargo test -p codex-core tools::spec::tests`

- `just fix -p codex-core`

Based on work from Vincent K - https://github.com/openai/codex/pull/19060

<img width="1836" height="642" alt="CleanShot 2026-04-29 at 20 47 40@2x" src="https://github.com/user-attachments/assets/b647bb89-65fe-40c8-80b0-7a6b7c984634" />

## Why

Compaction rewrites the conversation context that future model turns receive, but hooks currently have no deterministic lifecycle point around that rewrite. This adds compact lifecycle hooks so users can audit manual and automatic compaction, surface hook messages in the UI, and run post-compaction follow-up without overloading tool or prompt hooks.

## What Changed

- Added `PreCompact` and `PostCompact` hook events across hook config, discovery, dispatch, generated schemas, app-server notifications, analytics, and TUI hook rendering.

- Added trigger matching for compact hooks with the documented `manual` and `auto` matcher values.

- Wired `PreCompact` before both local and remote compaction, and `PostCompact` after successful local or remote compaction.

- Kept compact hook command input to lifecycle metadata: session id, Codex turn id, transcript path, cwd, hook event name, model, and trigger.

- Made compact stdout handling consistent with other hooks: plain stdout is ignored as debug output, while malformed JSON-looking stdout is reported as failed hook output.

- Added integration coverage for compact hook dispatch, trigger matching, post-compact execution, and the audited behavior that `decision:"block"` does not block compaction.
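A sketch of the trigger matching and stdout rules listed above, under stated assumptions: `CompactTrigger`, `matcher_matches`, `StdoutOutcome`, and `classify_stdout` are hypothetical names; treating an absent, empty, or `*` matcher as match-all is an assumption; "JSON-looking" is approximated as trimmed output starting with `{`; and the `parses_as_json` callback stands in for a real JSON parser.

```rust
/// What started the compaction; mirrors the `manual` / `auto` matcher values.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum CompactTrigger {
    Manual,
    Auto,
}

impl CompactTrigger {
    pub fn as_str(self) -> &'static str {
        match self {
            CompactTrigger::Manual => "manual",
            CompactTrigger::Auto => "auto",
        }
    }
}

/// Returns true if a configured matcher selects this trigger.
/// Assumption: an absent, empty, or `*` matcher matches every trigger.
pub fn matcher_matches(matcher: Option<&str>, trigger: CompactTrigger) -> bool {
    match matcher.map(str::trim) {
        None | Some("") | Some("*") => true,
        Some(value) => value == trigger.as_str(),
    }
}

#[derive(Debug, PartialEq)]
pub enum StdoutOutcome {
    /// Plain text: treated as debug output and otherwise ignored.
    Ignored,
    /// Well-formed JSON hook output.
    Structured(String),
    /// Looked like JSON but failed to parse: reported as failed hook output.
    Failed,
}

/// Classifies hook stdout; `parses_as_json` stands in for a real JSON parser.
pub fn classify_stdout(stdout: &str, parses_as_json: impl Fn(&str) -> bool) -> StdoutOutcome {
    let trimmed = stdout.trim();
    if !trimmed.starts_with('{') {
        return StdoutOutcome::Ignored;
    }
    if parses_as_json(trimmed) {
        StdoutOutcome::Structured(trimmed.to_string())
    } else {
        StdoutOutcome::Failed
    }
}
```

Note that nothing in this sketch lets hook output stop compaction, which matches the audited behavior that `decision:"block"` is not honored for `PreCompact`.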

## Out of Scope

- Hook-specific compaction blocking is not implemented; `decision:"block"` and exit-code-2 blocking semantics are intentionally unsupported for `PreCompact`.

- Custom compaction instructions are not exposed to compact hooks in this PR.

- Compact summaries, summary character counts, and summary previews are not exposed to compact hooks in this PR.

## Verification

- `cargo test -p codex-hooks`

- `cargo test -p codex-core manual_pre_compact_block_decision_does_not_block_compaction`

- `cargo test -p codex-app-server hooks_list`

- `cargo test -p codex-core config_schema_matches_fixture`

- `cargo test -p codex-tui hooks_browser`

## Docs

The developer documentation for Codex hooks should be updated alongside this feature to document `PreCompact` and `PostCompact`, the `manual`/`auto` matcher values, and the compact hook payload fields.

---------

Co-authored-by: Vincent Koc <vincentkoc@ieee.org>

## Why

This is the first mechanical slice of moving tool spec ownership toward the handlers. `codex-tools` should keep shared primitives and conversion helpers, while builtin tool specs and registration planning live in `codex-core` with the handlers that own those tools.

Keeping this PR to relocation and import updates isolates the copy/move review from the later logic change that wires specs through registered handlers.

## What changed

- Moved builtin tool spec constructors from `codex-rs/tools/src` into `codex-rs/core/src/tools/handlers/*_spec.rs` or nearby core tool modules.

- Moved the registry planning code into `codex-rs/core/src/tools/spec_plan.rs` and its associated types/tests into core.

- Kept shared primitives in `codex-tools`, including `ToolSpec`, schema/types, discovery/config primitives, dynamic/MCP conversion helpers, and code-mode collection helpers.

- Updated handlers that referenced moved argument types or tool-name constants to use the core spec modules.

- Moved spec tests next to the moved spec modules.

## Verification

- `cargo check -p codex-tools`

- `cargo check -p codex-core`

- `cargo test -p codex-tools`

- `cargo test -p codex-core _spec::tests`

- `cargo test -p codex-core tools::spec_plan::tests`

- `just fix -p codex-tools`

- `just fix -p codex-core`

Note: I also tried the broader `cargo test -p codex-core tools::`; it reached the moved spec-plan/spec tests successfully, then aborted with a stack overflow in `tools::handlers::multi_agents::tests::tool_handlers_cascade_close_and_resume_and_keep_explicitly_closed_subtrees_closed`, which is outside the scope of this spec relocation.

## Why

Skills update notifications are app-server API behavior, but the watcher lived in `codex-core` and surfaced through `EventMsg::SkillsUpdateAvailable`. Moving the watcher out keeps core focused on thread execution and lets app-server own both cache invalidation and the `skills/changed` notification.

## What changed

- Added an app-server-owned skills watcher that watches local skill roots, clears the shared skills cache, and emits `skills/changed` directly.

- Registered skill watches from the common app-server thread listener attach path, including direct starts, resumes, and app-server-observed child or forked threads.

- Stored the `WatchRegistration` on `ThreadState`, so listener replacement, thread teardown, idle unload, and app-server shutdown deregister the watch by dropping the RAII guard.

- Removed `EventMsg::SkillsUpdateAvailable`, the core watcher, and the old core live-reload test.

- Extended the app-server skills change test to verify that a cached skills list is refreshed after a filesystem change without forcing a reload.
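A minimal sketch of the RAII deregistration pattern described above. `SkillsWatcher`, `register`, `is_watching`, and the `skills_watch` field are hypothetical names, and reference-counting roots (so several threads can share one skill root) is an assumption; actual filesystem watching, cache clearing, and `skills/changed` emission are omitted.

```rust
use std::collections::HashMap;
use std::path::{Path, PathBuf};
use std::sync::{Arc, Mutex};

/// Hypothetical watcher state: a reference count per watched skill root.
#[derive(Clone, Default)]
pub struct SkillsWatcher {
    roots: Arc<Mutex<HashMap<PathBuf, usize>>>,
}

impl SkillsWatcher {
    /// Starts (or shares) a watch on `root` and returns a guard that owns it.
    pub fn register(&self, root: &Path) -> WatchRegistration {
        let mut roots = self.roots.lock().unwrap();
        *roots.entry(root.to_path_buf()).or_insert(0) += 1;
        WatchRegistration {
            roots: Arc::clone(&self.roots),
            root: root.to_path_buf(),
        }
    }

    pub fn is_watching(&self, root: &Path) -> bool {
        self.roots.lock().unwrap().contains_key(root)
    }
}

/// RAII guard: dropping it deregisters the watch, so listener replacement,
/// teardown, idle unload, and shutdown need no explicit cleanup call.
pub struct WatchRegistration {
    roots: Arc<Mutex<HashMap<PathBuf, usize>>>,
    root: PathBuf,
}

impl Drop for WatchRegistration {
    fn drop(&mut self) {
        let mut roots = self.roots.lock().unwrap();
        let now_unused = match roots.get_mut(&self.root) {
            Some(count) => {
                *count -= 1;
                *count == 0
            }
            None => false,
        };
        if now_unused {
            roots.remove(&self.root);
        }
    }
}

/// Hypothetical per-thread state that owns its registration.
pub struct ThreadState {
    pub skills_watch: Option<WatchRegistration>,
}
```

Replacing or clearing `skills_watch` is enough to release the watch; the last guard for a root removes it.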

## Validation

- `cargo check -p codex-core -p codex-app-server -p codex-mcp-server -p codex-rollout -p codex-rollout-trace`

- `cargo test -p codex-app-server skills_changed_notification_is_emitted_after_skill_change`

## Summary

- document that commit attribution for generated git commit messages is gated by the `codex_git_commit` feature flag

- add an example `config.toml` snippet showing `commit_attribution` with `[features].codex_git_commit = true`

- update the config schema description so the reference docs explain that `commit_attribution` only takes effect when the feature is enabled

Fixes #19799.

## Validation

- `cargo run -p codex-core --bin codex-write-config-schema`

- `cargo test -p codex-config`

- `cargo test -p codex-features`

- `cargo fmt --check`

- `git diff --check`

## Notes

- `cargo test -p codex-core config_schema_matches_fixture` currently fails before reaching the schema test because `core_test_support` imports `similar` without a linked crate in this checkout. The narrower package checks above avoid that unrelated test-support build failure.

## Why

Several tool handler modules still bundled multiple `ToolHandler` implementations in one file. That made the handler directory harder to navigate and made otherwise local handler edits land in large shared modules.

## What

- Split grouped tool handlers into one handler file each for agent jobs, goals, MCP resources, shell tools, and unified exec.

- Kept shared parsing, payload, and runtime helpers in the existing parent modules, with re-exports preserving the existing handler import paths.

- Updated the shell handler tests to construct `ShellCommandHandler` through the existing `ShellCommandBackendConfig` conversion now that the backend detail lives with the shell-command handler.
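A sketch of how a per-handler split can keep import paths stable through re-exports. The module layout, `parse_command`, and `argv` are hypothetical, and inline `mod` blocks stand in for the separate files the real change uses so the example stays self-contained.

```rust
pub mod handlers {
    pub mod shell {
        /// Shared parsing helper that stays in the parent module.
        pub fn parse_command(raw: &str) -> Vec<String> {
            raw.split_whitespace().map(str::to_string).collect()
        }

        // In the real tree this would be its own file (e.g. `shell/shell_command.rs`).
        mod shell_command {
            use super::parse_command;

            pub struct ShellCommandHandler;

            impl ShellCommandHandler {
                /// Uses the shared parent-module helper.
                pub fn argv(&self, raw: &str) -> Vec<String> {
                    parse_command(raw)
                }
            }
        }

        // The re-export keeps `handlers::shell::ShellCommandHandler` valid after the split.
        pub use shell_command::ShellCommandHandler;
    }
}
```

Callers keep writing `use handlers::shell::ShellCommandHandler;` exactly as before the split, even though the type now lives in a private child module.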

## Validation

- `cargo check -p codex-core`

- `cargo clippy -p codex-core --lib -- -D warnings`

- `git diff --check -- codex-rs/core/src/tools/handlers`

Targeted `codex-core` handler tests did not run locally because `core_test_support` currently fails to compile before reaching these tests due to an unresolved `similar` import.

## Summary

- make resolved turn environment shell metadata optional instead of hard-coding bash

- render environment context shells from explicit environment metadata when present, falling back to the existing session shell

- update environment context tests for inherited PowerShell-style fallback and explicit per-environment shell override
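A minimal sketch of the fallback rule, assuming simplified hypothetical types (`Shell`, `ResolvedEnvironment`, `shell_for_context`): the environment's shell is now an `Option`, and rendering prefers it when set, otherwise inheriting the session shell.

```rust
/// Hypothetical shell description.
#[derive(Debug, Clone, PartialEq)]
pub struct Shell {
    pub name: String,
}

/// Hypothetical per-environment metadata; `shell` is optional instead of hard-coded bash.
pub struct ResolvedEnvironment {
    pub shell: Option<Shell>,
}

/// Explicit environment metadata wins; otherwise the existing session shell is inherited.
pub fn shell_for_context<'a>(env: &'a ResolvedEnvironment, session_shell: &'a Shell) -> &'a Shell {
    env.shell.as_ref().unwrap_or(session_shell)
}
```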

## Testing

- Not run (not requested; formatted with `just fmt`).

Co-authored-by: Codex <noreply@openai.com>

`codex exec` should not print OpenTelemetry exporter self-diagnostics to stderr by default. Suppress the SDK and OTLP exporter targets unless callers explicitly opt in with `RUST_LOG`.

Also stop defaulting the trace exporter to the log exporter, since OTLP HTTP endpoints are signal-specific and a logs endpoint is not valid for spans.
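A sketch of both changes under stated assumptions: the target names `opentelemetry_sdk` and `opentelemetry_otlp`, the `log_directives` helper, and the rule that any `RUST_LOG` value counts as an explicit opt-in are illustrative, not taken from the Codex code. The `/v1/traces`, `/v1/metrics`, and `/v1/logs` paths are the OTLP/HTTP defaults from the OTLP specification, which is why a logs endpoint cannot receive spans.

```rust
/// Targets assumed (for this sketch) to emit OpenTelemetry SDK/exporter self-diagnostics.
const OTEL_DIAGNOSTIC_TARGETS: [&str; 2] = ["opentelemetry_sdk", "opentelemetry_otlp"];

/// Builds an `EnvFilter`-style directive string from an optional `RUST_LOG` value.
pub fn log_directives(rust_log: Option<&str>, default_level: &str) -> String {
    // An explicit RUST_LOG is treated as the caller's opt-in and used verbatim.
    if let Some(explicit) = rust_log {
        return explicit.to_string();
    }
    // Otherwise keep the default level but silence the OTel diagnostic targets.
    let mut directives = vec![default_level.to_string()];
    for target in OTEL_DIAGNOSTIC_TARGETS.iter() {
        directives.push(format!("{target}=off"));
    }
    directives.join(",")
}

/// Default OTLP/HTTP request path for each signal, per the OTLP specification.
/// The paths differ per signal, so one signal's endpoint is not valid for another.
pub fn otlp_http_path(signal: &str) -> Option<&'static str> {
    match signal {
        "traces" => Some("/v1/traces"),
        "metrics" => Some("/v1/metrics"),
        "logs" => Some("/v1/logs"),
        _ => None,
    }
}
```

A caller would pass `std::env::var("RUST_LOG").ok().as_deref()` as the first argument and hand the resulting string to a filter such as `tracing_subscriber::EnvFilter::new`.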

Co-authored-by: Codex <noreply@openai.com>


# Motivation

Browser Use origin-access prompts are MCP elicitations, not direct tool-call approval prompts, so they were bypassing the Guardian approval path. We need a generic opt-in that lets eligible MCP elicitations use Guardian when the current turn already routes approvals there.

# Description

Add a generic elicitation reviewer hook in `codex-mcp` and wire `codex-core` to pass a Guardian reviewer callback when creating the MCP connection manager. The reviewer validates explicit `mcp_tool_call` opt-in metadata, builds a Guardian MCP tool-call review request from server/tool/connector metadata and tool params, and maps Guardian approval, denial, timeout, and cancellation decisions back to MCP elicitation responses.

The new option that triggers this path is the following key in the elicitation's `_meta` object (other fields omitted):

```json
{
  "_meta": {
    "codex_request_type": "approval_request"
  }
}
```
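A sketch of the opt-in check and the decision mapping, under stated assumptions: `_meta` is simplified to string pairs, all names (`GuardianDecision`, `ElicitationAction`, `wants_guardian_review`, `to_elicitation_action`) are hypothetical, and mapping a Guardian timeout to `decline` (rather than `cancel`) is an assumption. The `accept` / `decline` / `cancel` actions are the elicitation result actions defined by the MCP specification.

```rust
use std::collections::HashMap;

/// Guardian review outcomes named in the description above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum GuardianDecision {
    Approved,
    Denied,
    TimedOut,
    Cancelled,
}

/// MCP elicitation result actions (`accept` / `decline` / `cancel`).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ElicitationAction {
    Accept,
    Decline,
    Cancel,
}

/// Only elicitations that explicitly opt in via `_meta` go to Guardian, and only
/// when the current turn already routes approvals there.
pub fn wants_guardian_review(meta: &HashMap<String, String>, guardian_routing_active: bool) -> bool {
    guardian_routing_active
        && meta.get("codex_request_type").map(String::as_str) == Some("approval_request")
}

/// Maps a Guardian decision back to an elicitation response action.
/// Assumption: a timeout is treated as a decline.
pub fn to_elicitation_action(decision: GuardianDecision) -> ElicitationAction {
    match decision {
        GuardianDecision::Approved => ElicitationAction::Accept,
        GuardianDecision::Denied | GuardianDecision::TimedOut => ElicitationAction::Decline,
        GuardianDecision::Cancelled => ElicitationAction::Cancel,
    }
}
```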

# Testing

- `RUST_MIN_STACK=8388608 NEXTEST_STATUS_LEVEL=leak cargo nextest run --no-fail-fast --cargo-profile ci-test --test-threads 2`

- `cargo clippy --tests -- -D warnings`

- `cargo fmt -- --config imports_granularity=Item --check`

- `cargo shear`

- `pnpm run format`

- `python3 .github/scripts/verify_cargo_workspace_manifests.py`

- `python3 .github/scripts/verify_tui_core_boundary.py`

- `python3 .github/scripts/verify_bazel_clippy_lints.py`

- `git diff --check`

## Why

The core `Op::ListMcpTools` request path is no longer needed. Keeping it around left a dead request/response surface alongside the app-server MCP inventory APIs that own current server status listing.

## What Changed

- Removed `Op::ListMcpTools`, `EventMsg::McpListToolsResponse`, and the core handler that built the MCP snapshot response.

- Removed the now-unused `codex-mcp` snapshot wrapper/export and passive event handling arms in rollout and MCP-server consumers.

- Updated tests that used the old op as a synchronization hook to wait on existing startup/skills events, and deleted the plugin test that only exercised the removed listing op.

## Validation

- `cargo test -p codex-protocol`

- `cargo test -p codex-mcp`

- `cargo test -p codex-rollout -p codex-rollout-trace -p codex-mcp-server`

- `cargo test -p codex-core --test all pending_input::queued_inter_agent_mail`

- `cargo test -p codex-core --test all rmcp_client::stdio_mcp_tool_call_includes_sandbox_state_meta`

- `cargo test -p codex-core --test all rmcp_client::stdio_image_responses`

- `just fix -p codex-core -p codex-protocol -p codex-mcp -p codex-rollout -p codex-rollout-trace -p codex-mcp-server`

## Summary

- Add a `response.processed` websocket request payload and sender for Responses API websockets.

- Send `response.processed` from `try_run_sampling_request` after a response completes, local turn processing succeeds, and the session-owned feature flag is enabled.

- Add websocket coverage for both enabled and disabled feature-flag behavior.
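A sketch of the send condition described above, assuming hypothetical names (`SamplingOutcome`, `response_processed_message`). The JSON shape (a `type` plus a `response_id`) is an assumption, not the confirmed wire format, and the ID is assumed to need no JSON escaping.

```rust
/// Hypothetical summary of one sampling request's outcome.
pub struct SamplingOutcome {
    pub response_id: String,
    pub response_completed: bool,
    pub local_processing_ok: bool,
}

/// Returns the `response.processed` message to send, if all three conditions hold:
/// the response completed, local turn processing succeeded, and the flag is on.
pub fn response_processed_message(outcome: &SamplingOutcome, feature_enabled: bool) -> Option<String> {
    if feature_enabled && outcome.response_completed && outcome.local_processing_ok {
        // Assumed payload shape; `response_id` is interpolated without escaping.
        Some(format!(
            r#"{{"type":"response.processed","response_id":"{}"}}"#,
            outcome.response_id
        ))
    } else {
        None
    }
}
```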

## Validation

- `just fmt`

- `cargo test -p codex-core response_processed`

- `cargo test -p codex-api responses_websocket`

- `cargo test -p codex-features responses_websocket_response_processed_is_under_development`

- `git diff --check`

- `just fix -p codex-api -p codex-core -p codex-features`

- `git diff --check origin/main...HEAD`

## Why

Message history was implemented inside `codex-core` and surfaced through core protocol ops and `SessionConfiguredEvent` fields even though the current consumer is TUI-local prompt recall. That made core own UI history persistence and exposed `history_log_id` / `history_entry_count` through surfaces that app-server and other clients do not need.

This change moves message history persistence out of core and keeps the recall plumbing local to the TUI.

## What changed

- Added a new `codex-message-history` crate for appending, looking up, trimming, and reading metadata from `history.jsonl`.

- Removed core protocol history ops/events: `AddToHistory`, `GetHistoryEntryRequest`, and `GetHistoryEntryResponse`.

- Removed `history_log_id` and `history_entry_count` from `SessionConfiguredEvent` and updated exec/MCP/test fixtures accordingly.

- Updated the TUI to dispatch local app events for message-history append/lookup and keep its persistent-history metadata in TUI session state.
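A minimal sketch of the four operations on a JSONL history file, assuming hypothetical function names and a hypothetical entry shape (`session_id`, `text`); the real `codex-message-history` API is not shown here. It reads the whole file for simplicity and hand-escapes JSON strings to stay dependency-free.

```rust
use std::fs::{self, OpenOptions};
use std::io::{self, Write};
use std::path::Path;

/// Minimal JSON string escaping for this sketch (quotes, backslashes, control chars).
fn escape_json(s: &str) -> String {
    let mut out = String::with_capacity(s.len());
    for c in s.chars() {
        match c {
            '"' => out.push_str("\\\""),
            '\\' => out.push_str("\\\\"),
            '\n' => out.push_str("\\n"),
            c if (c as u32) < 0x20 => out.push_str(&format!("\\u{:04x}", c as u32)),
            c => out.push(c),
        }
    }
    out
}

/// Appends one entry as a single JSON line (field names are assumptions).
pub fn append_entry(path: &Path, session_id: &str, text: &str) -> io::Result<()> {
    let mut file = OpenOptions::new().create(true).append(true).open(path)?;
    writeln!(
        file,
        r#"{{"session_id":"{}","text":"{}"}}"#,
        escape_json(session_id),
        escape_json(text)
    )
}

/// Looks up the raw JSON line at a 0-based offset, if present.
pub fn lookup_entry(path: &Path, offset: usize) -> io::Result<Option<String>> {
    Ok(fs::read_to_string(path)?.lines().nth(offset).map(str::to_string))
}

/// Metadata: number of stored entries (a missing file counts as empty).
pub fn entry_count(path: &Path) -> io::Result<usize> {
    match fs::read_to_string(path) {
        Ok(contents) => Ok(contents.lines().count()),
        Err(e) if e.kind() == io::ErrorKind::NotFound => Ok(0),
        Err(e) => Err(e),
    }
}

/// Trims the file to its newest `max_entries` lines.
pub fn trim_to(path: &Path, max_entries: usize) -> io::Result<()> {
    let contents = fs::read_to_string(path)?;
    let lines: Vec<&str> = contents.lines().collect();
    let keep = &lines[lines.len().saturating_sub(max_entries)..];
    let mut rewritten = keep.join("\n");
    if !rewritten.is_empty() {
        rewritten.push('\n');
    }
    fs::write(path, rewritten)
}
```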

## Validation

- `cargo test -p codex-message-history -p codex-protocol`

- `cargo test -p codex-exec event_processor_with_json_output`

- `cargo test -p codex-mcp-server outgoing_message`

- `cargo test -p codex-tui`

- `just fix -p codex-message-history -p codex-protocol -p codex-core -p codex-tui -p codex-exec -p codex-mcp-server`

## Summary

- resolve or inject the installation ID before core startup and pass it through `ThreadManager`, `CodexSpawnArgs`, and `Session` as a plain `String`

- keep child sessions on the parent installation ID instead of rediscovering it inside core

- propagate installation ID startup failures in `mcp-server` instead of panicking
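A sketch of the boundary split, with hypothetical names (`StartupError`, `Session::spawn_child`, `start_host`): the host resolves the ID once and returns an error with `?` instead of panicking, core only receives a `String`, and child sessions clone the parent's ID rather than reading `codex_home` again.

```rust
use std::fmt;

/// Hypothetical startup error; the real error type is not shown in this PR.
#[derive(Debug)]
pub struct StartupError(pub String);

impl fmt::Display for StartupError {
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        write!(f, "startup failed: {}", self.0)
    }
}

/// Core session: receives the installation ID as a plain `String`.
pub struct Session {
    pub installation_id: String,
}

impl Session {
    pub fn new(installation_id: String) -> Self {
        Session { installation_id }
    }

    /// Child sessions reuse the parent's ID instead of rediscovering it.
    pub fn spawn_child(&self) -> Session {
        Session::new(self.installation_id.clone())
    }
}

/// Host boundary: resolve (or inject) the ID before core starts and
/// propagate failures to the caller rather than panicking.
pub fn start_host(
    resolve_installation_id: impl FnOnce() -> Result<String, StartupError>,
) -> Result<Session, StartupError> {
    let installation_id = resolve_installation_id()?;
    Ok(Session::new(installation_id))
}
```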

## Why

Core was still touching the filesystem on the session startup path to discover `installation_id`. This moves that work to the outer host boundary so core no longer depends on `codex_home` reads during session construction.

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

`/responses/compact` should preserve the request-affinity fields that apply to the active auth mode. ChatGPT-auth compact requests need the effective `service_tier`, and compact requests for every auth mode need the stable `prompt_cache_key`, so compaction does not quietly lose routing or cache behavior that normal sampling already has.

This follows the request-parity direction from #20719, but keeps the net change focused on the compact payload fields needed here.

## What changed

- Add `service_tier` and `prompt_cache_key` to the compact endpoint input payload.

- Build the remote compact payload from the existing responses request builder output so `Fast` still maps to `priority` when compact sends a service tier.

- Pass the turn service tier into remote compaction, but only include it in compact payloads for ChatGPT-backed auth.

- Keep `prompt_cache_key` on compact payloads for all auth modes.

- Add request-body diff snapshot coverage in `core/tests/suite/compact_remote.rs` for:

  - API-key auth reusing `prompt_cache_key` while omitting `service_tier` even when `Fast` is configured.

  - ChatGPT auth reusing both `service_tier` and `prompt_cache_key`.

- Drive the snapshot coverage through five varied turns (plain text, multi-part text, tool-call continuation, image+text input, and local-shell continuation), with reasoning output on the final turn.
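A sketch of the auth-dependent field rules, assuming simplified hypothetical types (`AuthMode`, `ServiceTier` with only `Fast`, `CompactPayload`, `build_compact_payload`). Unlike the real change, which derives the payload from the responses request builder output, this computes the two fields directly to show the rules in isolation.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum AuthMode {
    ApiKey,
    ChatGpt,
}

/// Hypothetical tier enum; only `Fast` is modeled here.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ServiceTier {
    Fast,
}

/// Wire value for a configured tier: `Fast` is sent as `priority`.
fn service_tier_wire(tier: ServiceTier) -> &'static str {
    match tier {
        ServiceTier::Fast => "priority",
    }
}

/// Hypothetical subset of the compact request body.
#[derive(Debug, PartialEq)]
pub struct CompactPayload {
    pub prompt_cache_key: String,
    pub service_tier: Option<&'static str>,
}

pub fn build_compact_payload(
    auth: AuthMode,
    prompt_cache_key: &str,
    turn_tier: Option<ServiceTier>,
) -> CompactPayload {
    CompactPayload {
        // Kept for every auth mode so compaction shares the sampling cache key.
        prompt_cache_key: prompt_cache_key.to_string(),
        // Only ChatGPT-backed auth forwards the turn's service tier.
        service_tier: match auth {
            AuthMode::ChatGpt => turn_tier.map(service_tier_wire),
            AuthMode::ApiKey => None,
        },
    }
}
```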

## Verification

- Added insta snapshots for compact request-body parity against the last normal `/responses` request after five varied turns.

- Not run locally per repo guidance; relying on GitHub CI for test execution.

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

MCP tool calls already include `session_id` in `x-codex-turn-metadata`, but descendant threads intentionally share that value with the root thread. Consumers that need to correlate work at the concrete thread level also need the current `thread_id`.

## What changed

- add `thread_id` to `x-codex-turn-metadata` while preserving `session_id` as the shared session identity

- thread the two identities separately through normal turns and spawned review threads

- add regression coverage for resumed sessions, reserved metadata fields, and deferred MCP tool calls
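A sketch of the two identities side by side in the header payload. `TurnMetadata` and `to_header_value` are hypothetical, encoding the header as a JSON object is an assumption about its format, and only the two fields discussed here are shown (IDs are assumed to need no escaping, e.g. UUIDs).

```rust
/// Hypothetical subset of the `x-codex-turn-metadata` payload.
pub struct TurnMetadata<'a> {
    /// Shared by the root thread and all of its descendants.
    pub session_id: &'a str,
    /// Identifies the concrete thread making the call.
    pub thread_id: &'a str,
}

impl TurnMetadata<'_> {
    /// Serializes both identities; assumes the IDs need no JSON escaping.
    pub fn to_header_value(&self) -> String {
        format!(
            r#"{{"session_id":"{}","thread_id":"{}"}}"#,
            self.session_id, self.thread_id
        )
    }
}
```

For a sub-agent, `session_id` repeats the root's value while `thread_id` differs, which is what lets consumers correlate at either level.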

## Verification

- added focused coverage in `core/src/session/tests.rs`, `core/src/turn_metadata_tests.rs`, and `core/tests/suite/search_tool.rs`

## Summary

Related to https://openai.slack.com/archives/C095U48JNL9/p1777537279707449

TL;DR: we update the meaning of session IDs and thread IDs:

* `thread_id` keeps its current meaning.

* `session_id` becomes an ID shared by every thread under a /root thread (i.e. every sub-agent shares the same session ID).

This PR introduces an explicit `SessionId` and threads it through the protocol/client boundary so `session_id` and `thread_id` can diverge when they need to, while preserving compatibility for older serialized `session_configured` events.
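A sketch of separate identity newtypes plus a backward-compatible read, using hypothetical names (`ThreadId`, `SessionConfigured`, `effective_session_id`, `spawn_subagent`). Falling back to the thread ID when an older event has no session ID is an assumption, based on the two IDs not diverging before this change.

```rust
/// Distinct newtypes so the two identities cannot be mixed up.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct ThreadId(pub String);

#[derive(Debug, Clone, PartialEq, Eq)]
pub struct SessionId(pub String);

/// Hypothetical decoded form of a `session_configured` event.
pub struct SessionConfigured {
    pub thread_id: ThreadId,
    /// Absent in events serialized before this change.
    pub session_id: Option<SessionId>,
}

impl SessionConfigured {
    /// Assumption: older events fall back to the thread ID as the session ID.
    pub fn effective_session_id(&self) -> SessionId {
        self.session_id
            .clone()
            .unwrap_or_else(|| SessionId(self.thread_id.0.clone()))
    }
}

/// A sub-agent gets its own thread ID but inherits the root's session ID.
pub fn spawn_subagent(parent_session: &SessionId, new_thread_id: &str) -> (SessionId, ThreadId) {
    (parent_session.clone(), ThreadId(new_thread_id.to_string()))
}
```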

---------

Co-authored-by: Codex <noreply@openai.com>