This adds end-to-end coverage for `responses-api-proxy` request dumps when Codex spawns a subagent, and validates that the `x-codex-window-id` and `x-openai-subagent` headers are properly set.

## Why

`provider_auth_command_supplies_bearer_token` and

`provider_auth_command_refreshes_after_401` were still flaky under

Windows Bazel because the generated fixture used `powershell.exe`, whose

startup can be slow enough to trip the provider-auth timeout in CI.

## What

Replace the generated Windows auth fixture script in

`codex-rs/core/tests/suite/client.rs` with a small `.cmd` script

executed by `cmd.exe /D /Q /C`, and advance `tokens.txt` one line at a

time so the refresh-after-401 test still gets the second token on the

second invocation.
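The fixture itself is a `.cmd` script. The Rust helper below illustrates the same "consume one line per invocation" logic: each call returns the first line of `tokens.txt` and writes the remaining lines back, so a second call yields the second token. `pop_next_token` is a hypothetical name, not code from the repository.

```rust
use std::fs;
use std::io;
use std::path::Path;

/// Hypothetical helper: return the first line of the token file and rewrite
/// the file with the remaining lines, so each call advances by one token.
fn pop_next_token(path: &Path) -> io::Result<Option<String>> {
    let contents = fs::read_to_string(path)?;
    let mut lines = contents.lines();
    let Some(first) = lines.next() else {
        return Ok(None); // no tokens left
    };
    let token = first.trim().to_string();

    // Persist the remaining lines for the next invocation.
    let rest: Vec<&str> = lines.collect();
    let mut remainder = rest.join("\n");
    if !remainder.is_empty() {
        remainder.push('\n');
    }
    fs::write(path, remainder)?;
    Ok(Some(token))
}
```

In the refresh-after-401 test, the first call would supply the initial bearer token and the second call the refreshed one.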

Also align the fixture timeout with the provider-auth default (`5_000`

ms) to avoid introducing a test-only timing budget that is stricter than

production behavior.

## Testing

Left to CI, specifically the Windows Bazel

`//codex-rs/core:core-all-test` coverage for the two provider-auth

command tests.

Send pending mailbox mail after completed reasoning or commentary items

so follow-up requests can pick it up mid-turn.

---------

Co-authored-by: Codex <noreply@openai.com>

This adds an `include_environment_context` config/profile flag that defaults to enabled. It guards both the initial injection and later environment updates, so injection of `<environment_context>` can be skipped entirely.
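Below is a sketch of how a flag like this could resolve and gate injection. It assumes that a profile value overrides the root value, and that an unset flag means "on". The structs and functions are hypothetical stand-ins, not the real config types.

```rust
/// Hypothetical root config: `None` means the key was not set.
#[derive(Default)]
struct ConfigToml {
    include_environment_context: Option<bool>,
}

/// Hypothetical profile section that can override the root value.
#[derive(Default)]
struct ProfileToml {
    include_environment_context: Option<bool>,
}

/// Effective flag: profile value, then root value, then the default (enabled).
fn resolve_include_environment_context(root: &ConfigToml, profile: Option<&ProfileToml>) -> bool {
    profile
        .and_then(|p| p.include_environment_context)
        .or(root.include_environment_context)
        .unwrap_or(true)
}

/// Both the initial injection and later updates would consult the same flag.
fn maybe_push_environment_context(include: bool, items: &mut Vec<String>, env_context: &str) {
    if include {
        items.push(format!("<environment_context>{env_context}</environment_context>"));
    }
}
```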

This PR adds root and profile config switches to omit the generated

`<permissions instructions>` and `<apps_instructions>` prompt blocks

while keeping both enabled by default, and it gates both the initial

developer-context injection and later permissions diff injection so

turning the permissions block off stays effective across turn-context

overrides.

Also added a prompt debug tool, invoked as `codex debug prompt-input "hello"`, that dumps the constructed items list.

The `OPENAI_BASE_URL` environment variable has been a significant

support issue, so we decided to deprecate it in favor of an

`openai_base_url` config key. We've had the deprecation warning in place

for about a month, so users have had time to migrate to the new

mechanism. This PR removes support for `OPENAI_BASE_URL` entirely.

- Keep only parent system/developer/user messages plus assistant

final-answer messages in forked child history.

- Strip parent tool/reasoning items and remove the unmatched synthetic

spawn output.
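The filtering rule in the two bullets above can be sketched as a single predicate over history items. The enum is a simplified, hypothetical model of rollout items, not the real protocol types. Dropping every tool output also removes the unmatched synthetic spawn output.

```rust
/// Hypothetical, simplified roles and history items.
#[derive(Debug, Clone, PartialEq)]
enum Role {
    System,
    Developer,
    User,
    Assistant,
}

#[derive(Debug, Clone, PartialEq)]
enum HistoryItem {
    Message { role: Role, text: String, is_final_answer: bool },
    ToolCall { call_id: String },
    ToolOutput { call_id: String },
    Reasoning { summary: String },
}

/// Keep system/developer/user messages and assistant final answers;
/// drop tool calls, tool outputs and reasoning items.
fn filter_forked_history(parent: &[HistoryItem]) -> Vec<HistoryItem> {
    parent
        .iter()
        .filter(|item| match item {
            HistoryItem::Message { role: Role::Assistant, is_final_answer, .. } => *is_final_answer,
            HistoryItem::Message { .. } => true,
            _ => false,
        })
        .cloned()
        .collect()
}
```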

## Why

We were seeing failures in the following tests as part of trying to get

all the tests running under Bazel on Windows in CI

(https://github.com/openai/codex/pull/16528):

```
suite::shell_command::unicode_output::with_login
suite::shell_command::unicode_output::without_login
```

Certainly `PATHEXT` should have been included in the extra `CORE_VARS`

list, so we fix that up here, but also take things a step further for

now by forcibly ensuring it is set on Windows in the return value of

`create_env()`. Once we get the Windows Bazel build working reliably

(i.e., after #16528 is merged), we should come back to this and confirm

we can remove the special case in `create_env()`.

## What

- Split core env inheritance into `COMMON_CORE_VARS` plus

platform-specific allowlists for Windows and Unix in

[`exec_env.rs`](1b55c88fbf/codex-rs/core/src/exec_env.rs (L45-L81)).

- Preserve `PATHEXT`, `USERNAME`, and `USERPROFILE` on Windows, and

`HOME` / locale vars on Unix.

- Backfill a default `PATHEXT` in `create_env()` on Windows if the

parent env does not provide one, so child process launch still works in

stripped-down Bazel environments.

- Extend the Windows exec-env test to assert mixed-case `PathExt`

survives case-insensitive core filtering, and document why the

shell-command Unicode test goes through a child process.
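The allowlist split and the `PATHEXT` backfill can be sketched as follows. The variable lists and the fallback `PATHEXT` value are assumptions for illustration; the real lists live in `exec_env.rs` and may differ. On Windows, matching ignores ASCII case, so a parent variable spelled `PathExt` is kept under its original spelling.

```rust
use std::collections::HashMap;

// Hypothetical allowlists; the real ones in exec_env.rs may differ.
const COMMON_CORE_VARS: &[&str] = &["PATH", "SHELL", "TMPDIR", "TEMP", "TMP"];
const WINDOWS_CORE_VARS: &[&str] = &["PATHEXT", "USERNAME", "USERPROFILE"];
const UNIX_CORE_VARS: &[&str] = &["HOME", "LANG", "LC_ALL", "LC_CTYPE"];

// Assumed fallback; the default used by create_env() may differ.
const DEFAULT_PATHEXT: &str = ".COM;.EXE;.BAT;.CMD";

fn filter_core_env(parent: &HashMap<String, String>, windows: bool) -> HashMap<String, String> {
    let platform = if windows { WINDOWS_CORE_VARS } else { UNIX_CORE_VARS };
    // Windows env var names are case-insensitive; Unix names are not.
    let allowed = |name: &str| {
        COMMON_CORE_VARS.iter().chain(platform).any(|v| {
            if windows { v.eq_ignore_ascii_case(name) } else { *v == name }
        })
    };
    let mut env: HashMap<String, String> = parent
        .iter()
        .filter(|(k, _)| allowed(k.as_str()))
        .map(|(k, v)| (k.clone(), v.clone()))
        .collect();
    // Backfill PATHEXT so child process lookup still works in stripped-down envs.
    if windows && !env.keys().any(|k| k.eq_ignore_ascii_case("PATHEXT")) {
        env.insert("PATHEXT".to_string(), DEFAULT_PATHEXT.to_string());
    }
    env
}
```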

## Verification

- `cargo test -p codex-core exec_env::tests`

Stacked on #16508.

This removes the temporary `codex-core` / `codex-login` re-export shims

from the ownership split and rewrites callsites to import directly from

`codex-model-provider-info`, `codex-models-manager`, `codex-api`,

`codex-protocol`, `codex-feedback`, and `codex-response-debug-context`.

No behavior change intended; this is the mechanical import cleanup layer

split out from the ownership move.

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

This is a follow-up to #16665. The Windows `unicode_output` test should

still exercise a child process so it verifies PowerShell's UTF-8 output

configuration, but `$env:COMSPEC` depends on that environment variable

surviving the curated Bazel test environment.

Using `cmd.exe` keeps the child-process coverage while avoiding both

bare `cmd` + `PATHEXT` lookup and `$env:COMSPEC` env passthrough

assumptions.

## What

- Run `cmd.exe /c echo naïve_café` in the Windows branch of

`unicode_output`.

## Verification

- `cargo test -p codex-core unicode_output`

## Why

Windows Bazel shell tests launch PowerShell with a curated environment,

so `PATHEXT` may be absent. The existing `unicode_output` test invokes

bare `cmd`, which can fail before the test exercises UTF-8 child-process

output.

## What

- Use `$env:COMSPEC /c echo naïve_café` in the Windows branch of

`unicode_output`.

- Preserve the external child-process path instead of switching the test

to a PowerShell builtin.

## Verification

- `cargo test -p codex-core unicode_output`

https://github.com/openai/codex/pull/16460 was a large PR created by

Codex to try to get the tests to pass under Bazel on Windows. Indeed, it

successfully ran all of the tests under `//codex-rs/core:` with its

changes to `codex-rs/core/`, though the full set of changes seems to be

too broad.

This PR tries to port the key changes, which are:

- Under Bazel, the `USERNAME` environment variable is not guaranteed to

be set on Windows, so for tests that need a non-empty env var as a

convenient substitute for an env var containing an API key, just use

`PATH`. Note that `PATH` is unlikely to contain characters that are not

allowed in an HTTP header value.

- Specify `"powershell.exe"` instead of just `"powershell"` in case the

`PATHEXT` env var gets lost in the shuffle.

## Summary

- split `models-manager` out of `core` and add `ModelsManagerConfig`

plus `Config::to_models_manager_config()` so model metadata paths stop

depending on `core::Config`

- move login-owned/auth-owned code out of `core` into `codex-login`,

move model provider config into `codex-model-provider-info`, move API

bridge mapping into `codex-api`, move protocol-owned types/impls into

`codex-protocol`, and move response debug helpers into a dedicated

`response-debug-context` crate

- move feedback tag emission into `codex-feedback`, relocate tests to

the crates that now own the code, and keep broad temporary re-exports so

this PR avoids a giant import-only rewrite

## Major moves and decisions

- created `codex-models-manager` as the owner for model

cache/catalog/config/model info logic, including the new

`ModelsManagerConfig` struct

- created `codex-model-provider-info` as the owner for provider config

parsing/defaults and kept temporary `codex-login`/`codex-core`

re-exports for old import paths

- moved `api_bridge` error mapping + `CoreAuthProvider` into

`codex-api`, while `codex-login::api_bridge` temporarily re-exports

those symbols and keeps the `auth_provider_from_auth` wrapper

- moved `auth_env_telemetry` and `provider_auth` ownership to

`codex-login`

- moved `CodexErr` ownership to `codex-protocol::error`, plus

`StreamOutput`, `bytes_to_string_smart`, and network policy helpers to

protocol-owned modules

- created `codex-response-debug-context` for

`extract_response_debug_context`, `telemetry_transport_error_message`,

and related response-debug plumbing instead of leaving that behavior in

`core`

- moved `FeedbackRequestTags`, `emit_feedback_request_tags`, and

`emit_feedback_request_tags_with_auth_env` to `codex-feedback`

- deferred removal of temporary re-exports and the mechanical import

rewrites to a stacked follow-up PR so this PR stays reviewable

## Test moves

- moved auth refresh coverage from `core/tests/suite/auth_refresh.rs` to

`login/tests/suite/auth_refresh.rs`

- moved text encoding coverage from

`core/tests/suite/text_encoding_fix.rs` to

`protocol/src/exec_output_tests.rs`

- moved model info override coverage from

`core/tests/suite/model_info_overrides.rs` to

`models-manager/src/model_info_overrides_tests.rs`

---------

Co-authored-by: Codex <noreply@openai.com>

In https://github.com/openai/codex/pull/16528, I am trying to get tests

running under Bazel on Windows, but currently I see:

```
thread 'suite::user_shell_cmd::user_shell_command_does_not_set_network_sandbox_env_var' (10220) panicked at core/tests\suite\user_shell_cmd.rs:358:5:
assertion failed: `(left == right)`
Diff < left / right > :
<1
>0
```

This PR updates the `assert_eq!()` to provide more information to help

diagnose the failure.

---

[//]: # (BEGIN SAPLING FOOTER)

Stack created with [Sapling](https://sapling-scm.com). Best reviewed

with [ReviewStack](https://reviewstack.dev/openai/codex/pull/16606).

* #16608

* __->__ #16606

## Why

This finishes the config-type move out of `codex-core` by removing the

temporary compatibility shim in `codex_core::config::types`. Callers now

depend on `codex-config` directly, which keeps these config model types

owned by the config crate instead of re-expanding `codex-core` as a

transitive API surface.

## What Changed

- Removed the `codex-rs/core/src/config/types.rs` re-export shim and the

`core::config::ApprovalsReviewer` re-export.

- Updated `codex-core`, `codex-cli`, `codex-tui`, `codex-app-server`,

`codex-mcp-server`, and `codex-linux-sandbox` call sites to import

`codex_config::types` directly.

- Added explicit `codex-config` dependencies to downstream crates that

previously relied on the `codex-core` re-export.

- Regenerated `codex-rs/core/config.schema.json` after updating the

config docs path reference.

## Why

`codex-core` was re-exporting APIs owned by sibling `codex-*` crates,

which made downstream crates depend on `codex-core` as a proxy module

instead of the actual owner crate.

Removing those forwards makes crate boundaries explicit and lets leaf crates drop unnecessary `codex-core` dependencies. In this PR, the following manifests replace their `codex-core` dependency with `codex-login`:

```
codex-rs/backend-client/Cargo.toml
codex-rs/mcp-server/tests/common/Cargo.toml
```

## What

- Remove `codex-rs/core/src/lib.rs` re-exports for symbols owned by

`codex-login`, `codex-mcp`, `codex-rollout`, `codex-analytics`,

`codex-protocol`, `codex-shell-command`, `codex-sandboxing`,

`codex-tools`, and `codex-utils-path`.

- Delete the `default_client` forwarding shim in `codex-rs/core`.

- Update in-crate and downstream callsites to import directly from the

owning `codex-*` crate.

- Add direct Cargo dependencies where callsites now target the owner

crate, and remove `codex-core` from `codex-rs/backend-client`.

## Why

Follow-up to #16288: the new dynamic provider auth token flow currently

defaults `refresh_interval_ms` to a non-zero value and rejects `0`

entirely.

For command-backed bearer auth, `0` should mean "never auto-refresh".

That lets callers keep using the cached token until the backend actually

returns `401 Unauthorized`, at which point Codex can rerun the auth

command as part of the existing retry path.

## What changed

- changed `ModelProviderAuthInfo.refresh_interval_ms` to accept `0` and

documented that value as disabling proactive refresh

- updated the external bearer token refresher to treat

`refresh_interval_ms = 0` as an indefinitely reusable cached token,

while still rerunning the auth command during unauthorized recovery

- regenerated `core/config.schema.json` so the schema minimum is `0` and

the new behavior is described in the field docs

- added coverage for both config deserialization and the no-auto-refresh

plus `401` recovery behavior
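The new `0` semantics can be sketched as a refresh predicate. `CachedToken` and `needs_proactive_refresh` are hypothetical names. A `401` still reruns the command through the separate unauthorized-recovery path, which this sketch does not model.

```rust
use std::time::{Duration, Instant};

/// Hypothetical cached bearer token.
struct CachedToken {
    value: String,
    fetched_at: Instant,
}

/// `refresh_interval_ms == 0` disables proactive refresh: the cached token is
/// reused until a 401 triggers unauthorized recovery.
fn needs_proactive_refresh(cached: Option<&CachedToken>, refresh_interval_ms: u64, now: Instant) -> bool {
    match cached {
        None => true, // nothing cached yet: run the auth command
        Some(_) if refresh_interval_ms == 0 => false,
        Some(token) => now.duration_since(token.fetched_at) >= Duration::from_millis(refresh_interval_ms),
    }
}
```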

## How tested

- `cargo test -p codex-protocol`

- `cargo test -p codex-login`

- `cargo test -p codex-core test_deserialize_provider_auth_config_`

## Why

https://github.com/openai/codex/pull/16287 introduced a change to

`codex-rs/login/src/auth/auth_tests.rs` that uses a PowerShell helper to

read the next token from `tokens.txt` and rewrite the remainder back to

disk. On Windows, `Get-Content` can return a scalar when the file has

only one remaining line, so `$lines[0]` reads the first character

instead of the full token. That breaks the external bearer refresh test

once the token list is nearly exhausted.

https://github.com/openai/codex/pull/16288 introduced similar changes to

`codex-rs/core/src/models_manager/manager_tests.rs` and

`codex-rs/core/tests/suite/client.rs`.

These went unnoticed because the failures showed up when the test was

run via Cargo on Windows, but not in our Bazel harness. Figuring out

that Cargo-vs-Bazel delta will happen in a follow-up PR.

## Verification

On my Windows machine, I verified `cargo test` passes when run in

`codex-rs/login` and `codex-rs/core`. Once this PR is merged, I will

keep an eye on

https://github.com/openai/codex/actions/workflows/rust-ci-full.yml to

verify it goes green.

## What changed

- Wrap `Get-Content -Path tokens.txt` in `@(...)` so the script always

gets array semantics before counting, indexing, and rewriting the

remaining lines.

I noticed that

https://github.com/openai/codex/actions/workflows/rust-ci-full.yml

started failing on my own PR,

https://github.com/openai/codex/pull/16288, even though CI was green

when I merged it.

Apparently, it introduced a lint violation that was [correctly!] caught

by our Cargo-based clippy runner, but not our Bazel-based one.

My next step is to figure out the reason for the delta between the two

setups, but I wanted to get us green again quickly, first.

## Summary

Fixes #15189.

Custom model providers that set `requires_openai_auth = false` could

only use static credentials via `env_key` or

`experimental_bearer_token`. That is not enough for providers that mint

short-lived bearer tokens, because Codex had no way to run a command to

obtain a bearer token, cache it briefly in memory, and retry with a

refreshed token after a `401`.

This PR adds that provider config and wires it through the existing auth

design: request paths still go through `AuthManager.auth()` and

`UnauthorizedRecovery`, with `core` only choosing when to use a

provider-backed bearer-only `AuthManager`.

## Scope

To keep this PR reviewable, `/models` only uses provider auth for the

initial request in this change. It does **not** add a dedicated `401`

retry path for `/models`; that can be follow-up work if we still need it

after landing the main provider-token support.

## Example Usage

```toml
model_provider = "corp-openai"

[model_providers.corp-openai]
name = "Corp OpenAI"
base_url = "https://gateway.example.com/openai"
requires_openai_auth = false

[model_providers.corp-openai.auth]
command = "gcloud"
args = ["auth", "print-access-token"]
timeout_ms = 5000
refresh_interval_ms = 300000
```

The command contract is intentionally small:

- write the bearer token to `stdout`

- exit `0`

- any leading or trailing whitespace is trimmed before the token is used
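The three-part contract above can be sketched with `std::process::Command`. The function names are hypothetical, and timeout handling (`timeout_ms`) is deliberately left out. The contract check is split out so that it can be exercised without spawning a process.

```rust
use std::process::Command;

/// Apply the contract to a finished process: exit status 0 is required and
/// leading/trailing whitespace is trimmed from stdout.
fn token_from_output(success: bool, stdout: &[u8]) -> Result<String, String> {
    if !success {
        return Err("auth command exited with a non-zero status".to_string());
    }
    let text = String::from_utf8(stdout.to_vec()).map_err(|e| format!("stdout is not UTF-8: {e}"))?;
    Ok(text.trim().to_string())
}

/// Run the configured command, e.g. `gcloud auth print-access-token`.
/// This sketch does not enforce `timeout_ms`.
fn run_token_command(command: &str, args: &[&str]) -> Result<String, String> {
    let output = Command::new(command)
        .args(args)
        .output()
        .map_err(|e| format!("failed to spawn {command}: {e}"))?;
    token_from_output(output.status.success(), &output.stdout)
}
```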

## What Changed

- add `model_providers.<id>.auth` to the config model and generated

schema

- validate that command-backed provider auth is mutually exclusive with

`env_key`, `experimental_bearer_token`, and `requires_openai_auth`

- build a bearer-only `AuthManager` for `ModelClient` and

`ModelsManager` when a provider configures `auth`

- let normal Responses requests and realtime websocket connects use the

provider-backed bearer source through the same `AuthManager.auth()` path

- allow `/models` online refresh for command-auth providers and attach

the provider token to the initial `/models` request

- keep `auth.cwd` available as an advanced escape hatch and include it

in the generated config schema

## Testing

- `cargo test -p codex-core provider_auth_command`

- `cargo test -p codex-core refresh_available_models_uses_provider_auth_token`

- `cargo test -p codex-core test_deserialize_provider_auth_config_defaults`

## Docs

- `developers.openai.com/codex` should document the new

`[model_providers.<id>.auth]` block and the token-command contract

## Why

`argument-comment-lint` was green in CI even though the repo still had

many uncommented literal arguments. The main gap was target coverage:

the repo wrapper did not force Cargo to inspect test-only call sites, so

examples like the `latest_session_lookup_params(true, ...)` tests in

`codex-rs/tui_app_server/src/lib.rs` never entered the blocking CI path.

This change cleans up the existing backlog, makes the default repo lint

path cover all Cargo targets, and starts rolling that stricter CI

enforcement out on the platform where it is currently validated.

## What changed

- mechanically fixed existing `argument-comment-lint` violations across

the `codex-rs` workspace, including tests, examples, and benches

- updated `tools/argument-comment-lint/run-prebuilt-linter.sh` and

`tools/argument-comment-lint/run.sh` so non-`--fix` runs default to

`--all-targets` unless the caller explicitly narrows the target set

- fixed both wrappers so forwarded cargo arguments after `--` are

preserved with a single separator

- documented the new default behavior in

`tools/argument-comment-lint/README.md`

- updated `rust-ci` so the macOS lint lane keeps the plain wrapper

invocation and therefore enforces `--all-targets`, while Linux and

Windows temporarily pass `-- --lib --bins`

That temporary CI split keeps the stricter all-targets check where it is

already cleaned up, while leaving room to finish the remaining Linux-

and Windows-specific target-gated cleanup before enabling

`--all-targets` on those runners. The Linux and Windows failures on the

intermediate revision were caused by the wrapper forwarding bug, not by

additional lint findings in those lanes.

## Validation

- `bash -n tools/argument-comment-lint/run.sh`

- `bash -n tools/argument-comment-lint/run-prebuilt-linter.sh`

- shell-level wrapper forwarding check for `-- --lib --bins`

- shell-level wrapper forwarding check for `-- --tests`

- `just argument-comment-lint`

- `cargo test` in `tools/argument-comment-lint`

- `cargo test -p codex-terminal-detection`

## Follow-up

- Clean up remaining Linux-only target-gated callsites, then switch the

Linux lint lane back to the plain wrapper invocation.

- Clean up remaining Windows-only target-gated callsites, then switch

the Windows lint lane back to the plain wrapper invocation.

## Summary

- split the joined `PATH` before running system `bwrap` lookup

- keep the existing workspace-local `bwrap` skip behavior intact

- add regression tests that exercise real multi-entry search paths

## Why

The PATH-based lookup added in #15791 still wrapped the raw `PATH`

environment value as a single `PathBuf` before passing it through

`join_paths()`. On Unix, a normal multi-entry `PATH` contains `:`, so

that wrapper path is invalid as one path element and the lookup returns

`None`.

That made Codex behave as if no system `bwrap` was installed even when

`bwrap` was available on `PATH`, which is what users in #15340 were

still hitting on `0.117.0-alpha.25`.
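The fix amounts to splitting `PATH` into directories before probing each one. The sketch below uses `std::env::split_paths`, which splits on the platform separator (`:` on Unix). How the workspace-local skip is expressed here (ignoring directories under a given root) is an assumption, and `find_system_bwrap` is a hypothetical name.

```rust
use std::env;
use std::ffi::OsStr;
use std::path::{Path, PathBuf};

/// Find `bwrap` on a PATH-style value, probing each directory individually and
/// skipping entries under `workspace` (assumed form of the workspace-local skip).
fn find_system_bwrap(path_var: &OsStr, workspace: &Path) -> Option<PathBuf> {
    env::split_paths(path_var)
        .filter(|dir| !dir.starts_with(workspace))
        .map(|dir| dir.join("bwrap"))
        .find(|candidate| candidate.is_file())
}
```

Treating the whole value as a single `PathBuf`, as the buggy version did, turns `/a:/b` into one nonexistent directory. That is why the old lookup returned `None`.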

## Impact

System `bwrap` discovery now works with normal multi-entry `PATH` values

instead of silently falling back to the vendored binary.

Fixes #15340.

## Validation

- `just fmt`

- `cargo test -p codex-sandboxing`

- `cargo test -p codex-linux-sandbox`

- `just fix -p codex-sandboxing`

- `just argument-comment-lint`

## Why

`PermissionProfile` should only describe the per-command permissions we

still want to grant dynamically. Keeping

`MacOsSeatbeltProfileExtensions` in that surface forced extra macOS-only

approval, protocol, schema, and TUI branches for a capability we no

longer want to expose.

## What changed

- Removed the macOS-specific permission-profile types from

`codex-protocol`, the app-server v2 API, and the generated

schema/TypeScript artifacts.

- Deleted the core and sandboxing plumbing that threaded

`MacOsSeatbeltProfileExtensions` through execution requests and seatbelt

construction.

- Simplified macOS seatbelt generation so it always includes the fixed

read-only preferences allowlist instead of carrying a configurable

profile extension.

- Removed the macOS additional-permissions UI/docs/test coverage and

deleted the obsolete macOS permission modules.

- Tightened `request_permissions` intersection handling so explicitly

empty requested read lists are preserved only when that field was

actually granted, avoiding zero-grant responses being stored as active

permissions.

## Why

`token_data` is owned by `codex-login`, but `codex-core` was still

re-exporting it. That let callers pull auth token types through

`codex-core`, which keeps otherwise unrelated crates coupled to

`codex-core` and makes `codex-core` more of a build-graph bottleneck.

## What changed

- remove the `codex-core` re-export of `codex_login::token_data`

- update the remaining `codex-core` internals that used

`crate::token_data` to import `codex_login::token_data` directly

- update downstream callers in `codex-rs/chatgpt`,

`codex-rs/tui_app_server`, `codex-rs/app-server/tests/common`, and

`codex-rs/core/tests` to import `codex_login::token_data` directly

- add explicit `codex-login` workspace dependencies and refresh lock

metadata for crates that now depend on it directly

## Validation

- `cargo test -p codex-chatgpt --locked`

- `just argument-comment-lint`

- `just bazel-lock-update`

- `just bazel-lock-check`

## Notes

- attempted `cargo test -p codex-core --locked` and `cargo test -p codex-core auth_refresh --locked`, but both ran out of disk space while linking `codex-core` test binaries in the local environment

## Why

`#[large_stack_test]` made the `apply_patch_cli` tests pass by giving

them more stack, but it did not address why those tests needed the extra

stack in the first place.

The real problem is the async state built by the `apply_patch_cli`

harness path. Those tests await three helper boundaries directly:

harness construction, turn submission, and apply-patch output

collection. If those helpers inline their full child futures, the test

future grows to include the whole harness startup and request/response

path.

This change replaces the workaround from #12768 with the same basic

approach used in #13429, but keeps the fix narrower: only the helper

boundaries awaited directly by `apply_patch_cli` stay boxed.
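The effect of boxing a helper boundary can be measured with `std::mem::size_of_val`. In this self-contained sketch, `heavy_helper` keeps a 16 KiB buffer alive across an await. Awaiting it inline embeds that buffer in the caller's future, while `Box::pin` stores only a pointer. `heavy_helper`, `YieldOnce`, and the minimal `block_on` are illustrative names, not code from the repository, and the sketch avoids tokio.

```rust
use std::future::Future;
use std::pin::Pin;
use std::sync::Arc;
use std::task::{Context, Poll, Wake, Waker};

/// Returns Pending once, so locals in the caller must be stored across the await.
struct YieldOnce(bool);

impl Future for YieldOnce {
    type Output = ();
    fn poll(mut self: Pin<&mut Self>, cx: &mut Context<'_>) -> Poll<()> {
        if self.0 {
            Poll::Ready(())
        } else {
            self.0 = true;
            cx.waker().wake_by_ref();
            Poll::Pending
        }
    }
}

/// Stand-in for a heavy helper: a 16 KiB buffer lives across an await.
async fn heavy_helper() -> usize {
    let buf = [1u8; 16 * 1024];
    YieldOnce(false).await;
    buf.iter().map(|&b| b as usize).sum()
}

/// Inline await: the helper's whole state becomes part of this future.
async fn inline_caller() -> usize {
    heavy_helper().await
}

/// Boxed await: this future only holds a pointer to the heap-allocated helper.
async fn boxed_caller() -> usize {
    Box::pin(heavy_helper()).await
}

/// Minimal single-threaded executor so the sketch runs without tokio.
struct ThreadWaker(std::thread::Thread);

impl Wake for ThreadWaker {
    fn wake(self: Arc<Self>) {
        self.0.unpark();
    }
}

fn block_on<F: Future>(fut: F) -> F::Output {
    let mut fut = std::pin::pin!(fut);
    let waker = Waker::from(Arc::new(ThreadWaker(std::thread::current())));
    let mut cx = Context::from_waker(&waker);
    loop {
        match fut.as_mut().poll(&mut cx) {
            Poll::Ready(value) => return value,
            Poll::Pending => std::thread::park(),
        }
    }
}
```

Both callers compute the same result. Only the size of the caller's future, and therefore the stack the test needs, differs.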

## What Changed

- removed `#[large_stack_test]` from

`core/tests/suite/apply_patch_cli.rs`

- restored ordinary `#[tokio::test(flavor = "multi_thread", worker_threads = 2)]` annotations in that suite

- deleted the now-unused `codex-test-macros` crate and removed its

workspace wiring

- boxed only the three helper boundaries that the suite awaits directly:
  - `apply_patch_harness_with(...)`
  - `TestCodexHarness::submit(...)`
  - `TestCodexHarness::apply_patch_output(...)`

- added comments at those boxed boundaries explaining why they remain

boxed

## Testing

- `cargo test -p codex-core --test all suite::apply_patch_cli -- --nocapture`

## References

- #12768

- #13429

Fixes #15283.

## Summary

Older system bubblewrap builds reject `--argv0`, which makes our Linux

sandbox fail before the helper can re-exec. This PR keeps using system

`/usr/bin/bwrap` whenever it exists and only falls back to vendored

bwrap when the system binary is missing. That matters on stricter

AppArmor hosts, where the distro bwrap package also provides the policy

setup needed for user namespaces.

For old system bwrap, we avoid `--argv0` instead of switching binaries:

- pass the sandbox helper a full-path `argv0`,

- keep the existing `current_exe() + --argv0` path when the selected

launcher supports it,

- otherwise omit `--argv0` and re-exec through the helper's own

`argv[0]` path, whose basename still dispatches as

`codex-linux-sandbox`.
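The two launch paths can be sketched as an argument builder plus a basename dispatch check. The argument layout and function names are hypothetical, and the real code also passes the sandbox mount and namespace flags, which are omitted here. The point is that the older-bwrap path never emits `--argv0`, yet the exec'd path still dispatches as `codex-linux-sandbox`.

```rust
use std::ffi::OsStr;
use std::path::Path;

/// Hypothetical builder; `helper_argv0` is the full path whose basename is
/// `codex-linux-sandbox`. Sandbox mount/namespace flags are omitted.
fn bwrap_launch_args(supports_argv0: bool, current_exe: &str, helper_argv0: &str) -> Vec<String> {
    let mut args = Vec::new();
    if supports_argv0 {
        // Newer bwrap: exec the current binary and override argv[0] for dispatch.
        args.push("--argv0".to_string());
        args.push(helper_argv0.to_string());
        args.push("--".to_string());
        args.push(current_exe.to_string());
    } else {
        // Older bwrap (e.g. 0.4.0): no --argv0, so exec the helper path directly.
        args.push("--".to_string());
        args.push(helper_argv0.to_string());
    }
    args
}

/// Multi-call dispatch on the basename of argv[0].
fn dispatches_as_sandbox(argv0: &str) -> bool {
    Path::new(argv0).file_name() == Some(OsStr::new("codex-linux-sandbox"))
}
```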

Also updates the launcher/warning tests and docs so they match the new

behavior: present-but-old system bwrap uses the compatibility path, and

only absent system bwrap falls back to vendored.

### Validation

1. Install Ubuntu 20.04 in a VM.

2. Compile codex and run it without bubblewrap installed; see a warning about falling back to the vendored bwrap.

3. Install bwrap and verify that its version is 0.4.0, which lacks `--argv0` support.

4. Run codex and use the apply_patch tool without errors.

<img width="802" height="631" alt="Screenshot 2026-03-25 at 11 48 36 PM" src="https://github.com/user-attachments/assets/77248a29-aa38-4d7c-9833-496ec6a458b8" />

<img width="807" height="634" alt="Screenshot 2026-03-25 at 11 47 32 PM" src="https://github.com/user-attachments/assets/5af8b850-a466-489b-95a6-455b76b5050f" />

<img width="812" height="635" alt="Screenshot 2026-03-25 at 11 45 45 PM" src="https://github.com/user-attachments/assets/438074f0-8435-4274-a667-332efdd5cb57" />

<img width="801" height="623" alt="Screenshot 2026-03-25 at 11 43 56 PM" src="https://github.com/user-attachments/assets/0dc8d3f5-e8cf-4218-b4b4-a4f7d9bf02e3" />

---------

Co-authored-by: Michael Bolin <mbolin@openai.com>

CHAINED PR: note that the base is `eternal/hooks-pretooluse-bash`, not `main`, so the following PR should land first:

https://github.com/openai/codex/pull/15211

This adds a post-tool hook that matches the pre-tool functionality from that PR. PreToolUse runs for plain shell calls and can block them; this PostToolUse call runs after the command has executed.

Example run:

```

› as a test, run in parallel the following commands:

- echo 'one'

- echo '[block-pre-tool-use]'

- echo '[block-post-tool-use]'

⚠ MCP startup incomplete (failed: notion, linear)

• Cruising through those three commands in parallel now, and I’ll share the exact outputs right after

they land.

• Running PreToolUse hook: checking the observatory runes

• Running PreToolUse hook: checking the observatory runes

• Running PreToolUse hook: checking the observatory runes

PreToolUse hook (blocked)

warning: wizard-tower PreToolUse demo blocked a Bash command on purpose.

feedback: PreToolUse demo blocked the command. Remove [block-pre-tool-use] to continue.

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo 'one'

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo '[block-post-tool-use]'

• Ran echo '[block-post-tool-use]'

└ [block-post-tool-use]

• Ran echo 'one'

└ one

• Running PostToolUse hook: reading the observatory aftermath

• Running PostToolUse hook: reading the observatory aftermath

PostToolUse hook (blocked)

warning: wizard-tower PostToolUse demo blocked the Bash follow-through on purpose.

feedback: PostToolUse demo blocked the result after execution. Remove [block-post-tool-use] to

continue.

PostToolUse hook (completed)

warning: wizard-tower PostToolUse demo inspected Bash: echo 'one'

hook context: PostToolUse demo saw the Bash result. Response preview: one

──────────────────────────────────────────────────────────────────────────────────────────────────────

• Ran them in parallel. Little wave report:

- echo 'one' completed and returned one.

- echo '[block-pre-tool-use]' was blocked before execution by the PreToolUse hook.

- echo '[block-post-tool-use]' ran, but its result was blocked afterward by the PostToolUse hook.

The hook messages were:

- PreToolUse demo blocked the command. Remove [block-pre-tool-use] to continue.

- PostToolUse demo blocked the result after execution. Remove [block-post-tool-use] to continue.

› for the blocked messages (correctly blocked in this test) did the tool output land back into

context?

• Yeah, from this run:

- PreToolUse block: the command itself did not execute, so no command output landed in context. Only

the block message did.

- PostToolUse block: the command did execute, but the actual stdout did not come back into context.

Only the post-tool block message did.

So the blocked notifications landed in context, but the blocked command payloads themselves did not.

Nice clean guardrail, aloha.

```

Add an environment manager, a singleton created early in app-server (before the skill manager and before config loading).

Use an environment variable to point to a running exec server.

Migrate `cwd` and related session/config state to `AbsolutePathBuf` so

downstream consumers consistently see absolute working directories.

Add test-only `.abs()` helpers for `Path`, `PathBuf`, and `TempDir`, and

update branch-local tests to use them instead of

`AbsolutePathBuf::try_from(...)`.
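A test-only `.abs()` helper of this kind is typically an extension trait. The sketch below uses a hypothetical `AbsPathBuf` newtype in place of the real `AbsolutePathBuf`. It implements the trait for `Path` only, because `PathBuf` reaches it through auto-deref. The `TempDir` impl is omitted because `TempDir` comes from an external crate.

```rust
use std::path::{Path, PathBuf};

/// Hypothetical stand-in for `AbsolutePathBuf`: relative paths are rejected.
#[derive(Debug, Clone, PartialEq)]
struct AbsPathBuf(PathBuf);

impl TryFrom<PathBuf> for AbsPathBuf {
    type Error = String;
    fn try_from(path: PathBuf) -> Result<Self, Self::Error> {
        if path.is_absolute() {
            Ok(Self(path))
        } else {
            Err(format!("{} is not absolute", path.display()))
        }
    }
}

/// Test-only extension trait: `.abs()` replaces `AbsPathBuf::try_from(...).unwrap()`.
trait TestAbsExt {
    fn abs(&self) -> AbsPathBuf;
}

impl TestAbsExt for Path {
    fn abs(&self) -> AbsPathBuf {
        AbsPathBuf::try_from(self.to_path_buf()).expect("test paths must be absolute")
    }
}
```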

For the remaining TUI/app-server snapshot coverage that renders absolute

cwd values, keep the snapshots unchanged and skip the Windows-only cases

where the platform-specific absolute path layout differs.


---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

- remove the fork-startup `build_initial_context` injection

- keep the reconstructed `reference_context_item` as the fork baseline

until the first real turn

- update fork-history tests and the request snapshot, and add a

`TODO(ccunningham)` for remaining nondiffable initial-context inputs

## Why

Fork startup was appending current-session initial context immediately

after reconstructing the parent rollout, then the first real turn could

emit context updates again. That duplicated model-visible context in the

child rollout.

## Impact

Forked sessions now behave like resume for context seeding: startup

reconstructs history and preserves the prior baseline, and the first

real turn handles any current-session context emission.

---------

Co-authored-by: Codex <noreply@openai.com>

- create `codex-git-utils` and move the shared git helpers into it with

file moves preserved for diff readability

- move the `GitInfo` helpers out of `core` so stacked rollout work can

depend on the shared crate without carrying its own git info module

---------

Co-authored-by: Ahmed Ibrahim <219906144+aibrahim-oai@users.noreply.github.com>

Co-authored-by: Codex <noreply@openai.com>

## Summary

- trim contiguous developer/contextual-user pre-turn updates when

rollback cuts back to a user turn

- add a focused history regression test for the trim behavior

- update the rollback request-boundary snapshots to show the fixed

non-duplicating context shape

---------

Co-authored-by: Codex <noreply@openai.com>

Built from #14256. PR description from @etraut-openai:

This PR addresses a hole in [PR 11802](https://github.com/openai/codex/pull/11802). The previous PR assumed that app server clients would respond to token refresh failures by presenting the user with an error ("you must log in again") and then not making further attempts to call network endpoints using the expired token. While they do present the user with this error, they don't prevent further attempts to call network endpoints, and they can repeatedly call `getAuthStatus(refreshToken=true)`, resulting in many failed calls to the token refresh endpoint.

There are three solutions I considered here:

1. Change the getAuthStatus app server call to return a null auth if the caller specified "refreshToken" on input and the refresh attempt fails. This will cause clients to immediately log out the user and return them to the login screen. This is a really bad user experience. It's also a breaking change in the app server contract that could break third-party clients.
2. Augment the getAuthStatus app server call to return an additional field that indicates the state of "token could not be refreshed". This is a non-breaking change to the app server API, but it requires non-trivial changes for all clients to handle this new field properly.
3. Change the getAuthStatus implementation to handle the case where a token refresh fails by marking the AuthManager's in-memory access and refresh tokens as "poisoned" so they are no longer used. This is the simplest fix that requires no client changes.

I chose option 3.

Here's Codex's explanation of this change:

When an app-server client asks `getAuthStatus(refreshToken=true)`, we may try to refresh a stale ChatGPT access token. If that refresh fails permanently (for example `refresh_token_reused`, expired, or revoked), the old behavior was bad in two ways:

1. We kept the in-memory auth snapshot alive as if it were still usable.
2. Later auth checks could retry refresh again and again, creating a storm of doomed `/oauth/token` requests and repeatedly surfacing the same failure.

This is especially painful for app-server clients because they poll auth status and can keep driving the refresh path without any real chance of recovery.

This change makes permanent refresh failures terminal for the current managed auth snapshot without changing the app-server API contract.

What changed:

- `AuthManager` now poisons the current managed auth snapshot in memory after a permanent refresh failure, keyed to the unchanged `AuthDotJson`.
- Once poisoned, later refresh attempts for that same snapshot fail fast locally without calling the auth service again.
- The poison is cleared automatically when auth materially changes, such as a new login, logout, or reload of different auth state from storage.
- `getAuthStatus(includeToken=true)` now omits `authToken` after a permanent refresh failure instead of handing out the stale cached bearer token.

This keeps the current auth method visible to clients, avoids forcing an immediate logout flow, and stops repeated refresh attempts for credentials that cannot recover.
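The poisoning rules above can be sketched in a few lines. This is a minimal, synchronous sketch, not the real `AuthManager`:

- The `AuthDotJson` struct here is a hypothetical stand-in with only two token fields.
- `RefreshError`, `refresh`, `set_auth` and `token_for_status` are illustrative names.
- The refresh service is modeled as a closure, so a test can count how often it is called.

The poison is stored as a copy of the snapshot that failed, so any material change to auth automatically stops matching it.

```rust
/// Hypothetical stand-in for the persisted auth state.
#[derive(Debug, Clone, PartialEq)]
pub struct AuthDotJson {
    pub access_token: String,
    pub refresh_token: String,
}

#[derive(Debug, Clone, PartialEq)]
pub enum RefreshError {
    /// e.g. `refresh_token_reused`, expired, or revoked.
    Permanent(String),
    /// e.g. a network error; worth retrying later.
    Transient(String),
    /// Failed fast locally because this snapshot is already poisoned.
    Poisoned,
}

pub struct AuthManager {
    current: Option<AuthDotJson>,
    /// Snapshot that last failed permanently, if any.
    poisoned_for: Option<AuthDotJson>,
}

impl AuthManager {
    pub fn new(auth: Option<AuthDotJson>) -> Self {
        Self { current: auth, poisoned_for: None }
    }

    /// Poisoned only while the current snapshot is unchanged since the failure.
    fn is_poisoned(&self) -> bool {
        self.current.is_some() && self.poisoned_for == self.current
    }

    /// Login, logout, or reload: a material change clears the poison.
    pub fn set_auth(&mut self, auth: Option<AuthDotJson>) {
        if auth != self.current {
            self.poisoned_for = None;
        }
        self.current = auth;
    }

    /// Calls `service` only when the current snapshot is not poisoned.
    pub fn refresh<F>(&mut self, mut service: F) -> Result<(), RefreshError>
    where
        F: FnMut(&AuthDotJson) -> Result<AuthDotJson, RefreshError>,
    {
        if self.is_poisoned() {
            return Err(RefreshError::Poisoned);
        }
        // Nothing to refresh without managed auth.
        let Some(current) = self.current.clone() else {
            return Ok(());
        };
        match service(&current) {
            Ok(updated) => {
                self.current = Some(updated);
                Ok(())
            }
            Err(RefreshError::Permanent(reason)) => {
                // Key the poison to the exact snapshot that failed.
                self.poisoned_for = Some(current);
                Err(RefreshError::Permanent(reason))
            }
            Err(other) => Err(other),
        }
    }

    /// Token returned by a status call; omitted once poisoned.
    pub fn token_for_status(&self) -> Option<&str> {
        if self.is_poisoned() {
            None
        } else {
            self.current.as_ref().map(|a| a.access_token.as_str())
        }
    }
}
```

After one permanent failure, a second `refresh` returns `Poisoned` without invoking the service. Reloading the identical snapshot keeps it poisoned, and only a different `AuthDotJson` makes a token available again.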

---------

Co-authored-by: Eric Traut <etraut@openai.com>

Follow up to #15357 by making proactive ChatGPT auth refresh depend on the access token's JWT expiration instead of treating `last_refresh` age as the primary source of truth.
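An expiration-driven refresh check might look like this sketch. It rests on several assumptions:

- The `exp` claim (seconds since the Unix epoch) has already been decoded from the token payload.
- `REFRESH_SKEW` and `FALLBACK_MAX_AGE` are hypothetical values.
- `last_refresh` age is used only as a fallback when `exp` is unavailable. This is an assumption based on "primary source of truth", not confirmed behavior.

```rust
use std::time::{Duration, SystemTime, UNIX_EPOCH};

/// Hypothetical window: refresh this long before the token expires.
pub const REFRESH_SKEW: Duration = Duration::from_secs(5 * 60);
/// Hypothetical fallback age, used only when no `exp` claim is available.
pub const FALLBACK_MAX_AGE: Duration = Duration::from_secs(8 * 24 * 60 * 60);

/// `exp` is the access token's JWT expiration claim (seconds since the epoch).
pub fn needs_proactive_refresh(exp: Option<u64>, last_refresh: SystemTime, now: SystemTime) -> bool {
    match exp {
        // Primary signal: is the token within REFRESH_SKEW of expiring?
        Some(exp) => {
            let expires_at = UNIX_EPOCH + Duration::from_secs(exp);
            match expires_at.duration_since(now) {
                Ok(remaining) => remaining <= REFRESH_SKEW,
                // `expires_at` is before `now`: already expired.
                Err(_) => true,
            }
        }
        // Fallback: age of the last refresh (false if the clock went backwards).
        None => now
            .duration_since(last_refresh)
            .map(|age| age >= FALLBACK_MAX_AGE)
            .unwrap_or(false),
    }
}
```

With this ordering, a token that is still valid for an hour is not refreshed just because `last_refresh` is old. A token about to expire is refreshed even if it was refreshed recently.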

## Summary

- replace the second-compaction test fixtures with a single ordered `/responses` sequence
- assert against the real recorded request order instead of aggregating per-mock captures
- realign the second-summary assertion to the first post-compaction user turn where the summary actually appears

## Root cause

`compact_resume_after_second_compaction_preserves_history` collected requests from multiple `mount_sse_once_match` recorders. Overlapping matchers could record the same HTTP request more than once, so the test indexed into a duplicated synthetic list rather than the true request stream. That made the summary assertion depend on matcher evaluation order and platform-specific behavior.
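The failure mode can be reproduced with a toy model. The `Recorder`, `replay` and `aggregate` names are hypothetical and are not the real `mount_sse_once_match` helpers or a mock-server API. Each recorder is assumed to capture every request its matcher accepts.

```rust
/// Hypothetical recorder: captures every request body its matcher accepts.
pub struct Recorder {
    matcher: fn(&str) -> bool,
    pub captured: Vec<String>,
}

impl Recorder {
    pub fn new(matcher: fn(&str) -> bool) -> Self {
        Self { matcher, captured: Vec::new() }
    }

    fn observe(&mut self, body: &str) {
        if (self.matcher)(body) {
            self.captured.push(body.to_string());
        }
    }
}

/// Shows each request to every recorder and also appends it to a single
/// log in true arrival order.
pub fn replay(requests: &[&str], recorders: &mut [Recorder]) -> Vec<String> {
    let mut ordered_log = Vec::new();
    for body in requests {
        for recorder in recorders.iter_mut() {
            recorder.observe(body);
        }
        ordered_log.push(body.to_string());
    }
    ordered_log
}

/// Concatenates per-recorder captures. Overlapping matchers duplicate a
/// request, and the order follows recorder order, not arrival order.
pub fn aggregate(recorders: &[Recorder]) -> Vec<String> {
    recorders.iter().flat_map(|r| r.captured.iter().cloned()).collect()
}
```

Consider requests `["turn 1", "compact + summary", "turn 2 with summary"]` with one matcher on `"compact"` and one on `"summary"`. The aggregated list contains `"compact + summary"` twice and drops `"turn 1"`, so positional indexing into it is meaningless. The ordered log matches what was actually sent.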

## Impact

- makes the flaky test deterministic by removing duplicate request capture from the assertion path
- keeps the change scoped to the test only

## Validation

- `just fmt`
- `just argument-comment-lint`
- `env -u CODEX_SANDBOX_NETWORK_DISABLED cargo test -p codex-core compact_resume_after_second_compaction_preserves_history -- --nocapture`
- repeated the same targeted test 10 times

---------

Co-authored-by: Codex <noreply@openai.com>

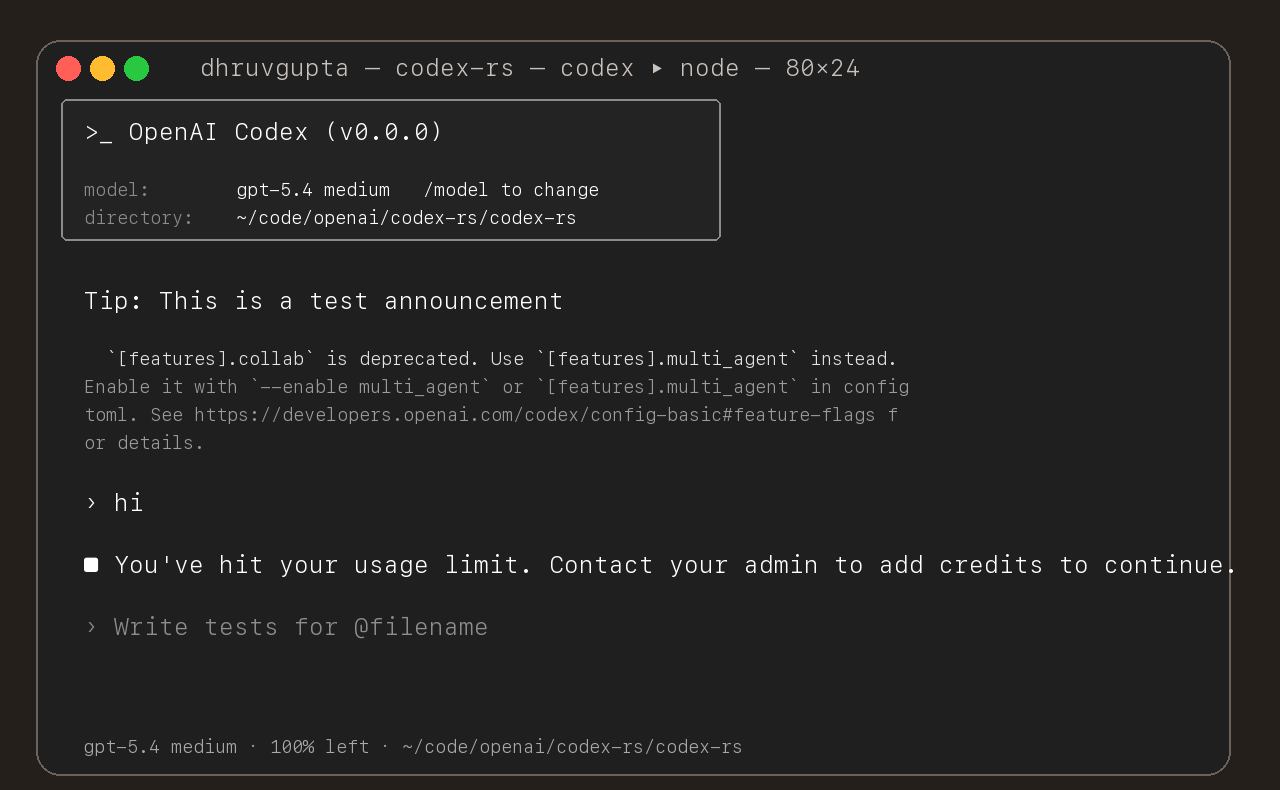

## Summary

- update the self-serve business usage-based limit message to direct users to their admin for additional credits
- add a focused unit test for the `self_serve_business_usage_based` plan branch

Also added:

If you hit a rate limit but still had credits, the Codex CLI would tell you to switch models. It shouldn't do that when you have credits, so this fixes it.
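The branching described above could be expressed like this sketch. `Plan` and `rate_limit_hint` are hypothetical names, and the strings are placeholders rather than the CLI's actual wording. The sketch also assumes that the admin message applies when the self-serve business usage-based plan is out of credits.

```rust
/// Hypothetical plan classification (not the real plan type).
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Plan {
    SelfServeBusinessUsageBased,
    Other,
}

/// Extra hint shown alongside a rate-limit error (placeholder wording).
pub fn rate_limit_hint(plan: Plan, has_credits: bool) -> Option<&'static str> {
    if has_credits {
        // With credits remaining, suggesting a model switch is misleading.
        return None;
    }
    Some(match plan {
        // Self-serve business users get more credits from their admin.
        Plan::SelfServeBusinessUsageBased => "Ask your workspace admin for additional credits.",
        Plan::Other => "Try switching to a different model.",
    })
}
```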

## Test

- launched the source-built CLI and verified the updated message is shown for the self-serve business usage-based plan

## Summary

- add `ForkSnapshotMode` to `ThreadManager::fork_thread` so callers can request either a committed snapshot or an interrupted snapshot
- share the model-visible `<turn_aborted>` history marker between the live interrupt path and interrupted forks
- update the small set of direct fork callsites to pass `ForkSnapshotMode::Committed`

Note: this enables `/btw` to work similarly to Esc to interrupt (hopefully somewhat in distribution).
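The two snapshot modes can be sketched as follows. This sketch assumes an interrupted snapshot means "committed history plus the in-flight turn, closed with the same marker the live interrupt path records". Only `ForkSnapshotMode` and its `Committed` variant appear in the description above. The `Interrupted` variant name, `turn_aborted_marker`, `on_interrupt` and `fork_history` are hypothetical, and the marker text is a placeholder.

```rust
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ForkSnapshotMode {
    /// Only fully committed turns.
    Committed,
    /// Also includes the in-flight turn, closed as if interrupted.
    Interrupted,
}

/// Single source of the model-visible marker (placeholder text), shared by
/// the live interrupt path and interrupted forks.
pub fn turn_aborted_marker() -> String {
    "<turn_aborted>".to_string()
}

/// Live interrupt path: record the marker after the partial turn.
pub fn on_interrupt(history: &mut Vec<String>) {
    history.push(turn_aborted_marker());
}

/// Builds the history a forked thread starts from.
pub fn fork_history(committed: &[String], in_flight: &[String], mode: ForkSnapshotMode) -> Vec<String> {
    let mut history = committed.to_vec();
    if mode == ForkSnapshotMode::Interrupted && !in_flight.is_empty() {
        history.extend_from_slice(in_flight);
        // Reuse the interrupt path so both produce identical history.
        on_interrupt(&mut history);
    }
    history
}
```

Routing interrupted forks through the same function as a real Esc interrupt keeps the forked history in the shape the model already sees after an interrupt. That is the "in distribution" goal mentioned in the note.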

---------

Co-authored-by: Codex <noreply@openai.com>

## What changed

- adds a targeted snapshot test for rollback with contextual diffs in `codex_tests.rs`
- snapshots the exact model-visible request input before the rolled-back turn and on the follow-up request after rollback
- shows the duplicate developer and environment context pair appearing again before the follow-up user message

## Why

Rollback currently rewinds the reference context baseline without rewinding the live session overrides. On the next turn, the same contextual diff is emitted again and duplicated in the request sent to the model.
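A toy model of this mismatch makes the duplication concrete. It assumes context is diffed field by field against a reference baseline and that one line is emitted per changed field. `Context`, `context_diff` and `Session` are hypothetical names, and `rollback` deliberately mirrors the buggy behavior described above rather than a fix.

```rust
/// Hypothetical, simplified model-visible context.
#[derive(Debug, Clone, PartialEq)]
pub struct Context {
    pub cwd: String,
    pub sandbox: String,
}

/// One emitted update per field that differs from the baseline.
pub fn context_diff(baseline: &Context, current: &Context) -> Vec<String> {
    let mut out = Vec::new();
    if baseline.cwd != current.cwd {
        out.push(format!("cwd={}", current.cwd));
    }
    if baseline.sandbox != current.sandbox {
        out.push(format!("sandbox={}", current.sandbox));
    }
    out
}

pub struct Session {
    /// Baseline the next diff is computed against (what the model saw).
    pub reference: Context,
    /// Live session overrides that define the current context.
    pub live: Context,
    /// Reference snapshots taken before each turn, used by rollback.
    snapshots: Vec<Context>,
}

impl Session {
    pub fn new(ctx: Context) -> Self {
        Self { reference: ctx.clone(), live: ctx, snapshots: Vec::new() }
    }

    /// Starts a turn: emits the diff, then advances the baseline.
    pub fn start_turn(&mut self) -> Vec<String> {
        self.snapshots.push(self.reference.clone());
        let diff = context_diff(&self.reference, &self.live);
        self.reference = self.live.clone();
        diff
    }

    /// Rewinds only the baseline; `live` overrides are left untouched,
    /// mirroring the current behavior.
    pub fn rollback(&mut self) {
        if let Some(prev) = self.snapshots.pop() {
            self.reference = prev;
        }
    }
}
```

After a `cwd` override, the first turn emits `cwd=/repo/sub`. Rolling back restores the old baseline while `live` still holds the override, so the next turn emits the identical diff again.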

## Impact

- makes the regression visible in a canonical snapshot test
- keeps the snapshot on the shared `context_snapshot` path without adding new formatting helpers
- gives a direct repro for future fixes to rollback/context reconstruction

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

Adds support for `approvals_reviewer` to `Op::UserTurn` so we can migrate `[CodexMessageProcessor::turn_start]` to use `Op::UserTurn`.

## Testing

- [x] Adds a quick test for the new field

Co-authored-by: Codex <noreply@openai.com>