## Why

`thread/shellCommand` executes the raw command string through the current user shell, which is PowerShell on Windows. The two v2 app-server tests in `app-server/tests/suite/v2/thread_shell_command.rs` used POSIX `printf`, so Bazel CI on Windows failed with `printf` not being recognized as a PowerShell command.

For reference, the user-shell task wraps commands with the active shell before execution:

[`core/src/tasks/user_shell.rs`](7a3eec6fdb/codex-rs/core/src/tasks/user_shell.rs (L120-L126)).

## What Changed

Added a test-local helper that builds a shell-appropriate output command and expected newline sequence from `default_user_shell()`:

- PowerShell: `Write-Output '...'` with `\r\n`
- Cmd: `echo ...` with `\r\n`
- POSIX shells: `printf '%s\n' ...` with `\n`

Both `thread_shell_command_runs_as_standalone_turn_and_persists_history` and `thread_shell_command_uses_existing_active_turn` now use that helper.
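As a rough illustration of the helper described above, here is a minimal sketch. The `ShellKind` enum and the `output_command` function are hypothetical names invented for this example, not the identifiers used in the test file, and the sketch assumes `text` contains no quotes or whitespace that would need escaping.

```rust
/// Hypothetical stand-in for the shell kinds that `default_user_shell()` can report.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
enum ShellKind {
    PowerShell,
    Cmd,
    Posix,
}

/// Returns `(command, expected_output)` for printing `text` on the given shell.
/// Assumes `text` needs no quoting or escaping.
fn output_command(shell: ShellKind, text: &str) -> (String, String) {
    match shell {
        // PowerShell and cmd.exe terminate the line with CRLF.
        ShellKind::PowerShell => (format!("Write-Output '{text}'"), format!("{text}\r\n")),
        ShellKind::Cmd => (format!("echo {text}"), format!("{text}\r\n")),
        // POSIX shells get an explicit `\n` from the printf format string.
        ShellKind::Posix => (format!("printf '%s\\n' {text}"), format!("{text}\n")),
    }
}
```

A test can then send the first element as the command and assert on the second, so the same assertion holds on every platform.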

## Verification

- `cargo test -p codex-app-server thread_shell_command`

- Persist trusted cwd state during thread/start when the resolved

sandbox is elevated.

- Add app-server coverage for trusted root resolution and confirm

turn/start does not mutate trust.

Addresses #16560

Problem: `/status` stopped showing the source thread id in forked TUI

sessions after the app-server migration.

Solution: Carry fork source ids through app-server v2 thread data and

the TUI session adapter, and update TUI fixtures so `/status` matches

the old TUI behavior.

## Why

This finishes the config-type move out of `codex-core` by removing the

temporary compatibility shim in `codex_core::config::types`. Callers now

depend on `codex-config` directly, which keeps these config model types

owned by the config crate instead of re-expanding `codex-core` as a

transitive API surface.

## What Changed

- Removed the `codex-rs/core/src/config/types.rs` re-export shim and the

`core::config::ApprovalsReviewer` re-export.

- Updated `codex-core`, `codex-cli`, `codex-tui`, `codex-app-server`,

`codex-mcp-server`, and `codex-linux-sandbox` call sites to import

`codex_config::types` directly.

- Added explicit `codex-config` dependencies to downstream crates that

previously relied on the `codex-core` re-export.

- Regenerated `codex-rs/core/config.schema.json` after updating the

config docs path reference.

## Why

`codex-core` was re-exporting APIs owned by sibling `codex-*` crates,

which made downstream crates depend on `codex-core` as a proxy module

instead of the actual owner crate.

Removing those forwards makes crate boundaries explicit and lets leaf

crates drop unnecessary `codex-core` dependencies. In this PR, this

reduces the dependency on `codex-core` to `codex-login` in the following

files:

```
codex-rs/backend-client/Cargo.toml
codex-rs/mcp-server/tests/common/Cargo.toml
```

## What

- Remove `codex-rs/core/src/lib.rs` re-exports for symbols owned by

`codex-login`, `codex-mcp`, `codex-rollout`, `codex-analytics`,

`codex-protocol`, `codex-shell-command`, `codex-sandboxing`,

`codex-tools`, and `codex-utils-path`.

- Delete the `default_client` forwarding shim in `codex-rs/core`.

- Update in-crate and downstream callsites to import directly from the

owning `codex-*` crate.

- Add direct Cargo dependencies where callsites now target the owner

crate, and remove `codex-core` from `codex-rs/backend-client`.

## Why

`argument-comment-lint` was green in CI even though the repo still had

many uncommented literal arguments. The main gap was target coverage:

the repo wrapper did not force Cargo to inspect test-only call sites, so

examples like the `latest_session_lookup_params(true, ...)` tests in

`codex-rs/tui_app_server/src/lib.rs` never entered the blocking CI path.

This change cleans up the existing backlog, makes the default repo lint

path cover all Cargo targets, and starts rolling that stricter CI

enforcement out on the platform where it is currently validated.

## What changed

- mechanically fixed existing `argument-comment-lint` violations across the `codex-rs` workspace, including tests, examples, and benches
- updated `tools/argument-comment-lint/run-prebuilt-linter.sh` and `tools/argument-comment-lint/run.sh` so non-`--fix` runs default to `--all-targets` unless the caller explicitly narrows the target set
- fixed both wrappers so forwarded cargo arguments after `--` are preserved with a single separator
- documented the new default behavior in `tools/argument-comment-lint/README.md`
- updated `rust-ci` so the macOS lint lane keeps the plain wrapper invocation and therefore enforces `--all-targets`, while Linux and Windows temporarily pass `-- --lib --bins`

That temporary CI split keeps the stricter all-targets check where it is already cleaned up, while leaving room to finish the remaining Linux- and Windows-specific target-gated cleanup before enabling `--all-targets` on those runners. The Linux and Windows failures on the intermediate revision were caused by the wrapper forwarding bug, not by additional lint findings in those lanes.
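The wrapper behavior described above (default to `--all-targets` on non-`--fix` runs, and keep forwarded cargo arguments behind a single `--`) can be sketched as follows. The real wrappers are shell scripts; this Rust version only models the argument logic. `build_linter_args` and the `TARGET_FLAGS` list are hypothetical, and which flags count as "narrowing the target set" is an assumption.

```rust
/// Cargo target-selection flags treated as an explicit choice by the caller.
/// This list is an assumption for illustration.
const TARGET_FLAGS: &[&str] = &[
    "--all-targets", "--lib", "--bins", "--tests", "--examples", "--benches",
];

/// Models the wrapper's argument handling: everything after the first `--`
/// is forwarded to cargo, and `--all-targets` is added when appropriate.
fn build_linter_args(wrapper_args: &[&str]) -> Vec<String> {
    let empty: &[&str] = &[];
    // Split the wrapper's own arguments from the forwarded cargo arguments.
    let (own, forwarded) = match wrapper_args.iter().position(|arg| *arg == "--") {
        Some(idx) => (&wrapper_args[..idx], &wrapper_args[idx + 1..]),
        None => (wrapper_args, empty),
    };
    let is_fix_run = own.contains(&"--fix");
    let narrows_targets = forwarded.iter().any(|arg| TARGET_FLAGS.contains(arg));

    // Default to all targets unless this is a fix run or the caller chose targets.
    let mut cargo_args: Vec<String> = Vec::new();
    if !is_fix_run && !narrows_targets {
        cargo_args.push("--all-targets".to_string());
    }
    cargo_args.extend(forwarded.iter().map(|arg| arg.to_string()));

    // Emit exactly one `--` separator, and only when there is something to forward.
    let mut out: Vec<String> = own.iter().map(|arg| arg.to_string()).collect();
    if !cargo_args.is_empty() {
        out.push("--".to_string());
        out.extend(cargo_args);
    }
    out
}
```

Under these assumptions, a plain invocation yields `-- --all-targets`, while `-- --lib --bins` passes through unchanged with a single separator.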

## Validation

- `bash -n tools/argument-comment-lint/run.sh`

- `bash -n tools/argument-comment-lint/run-prebuilt-linter.sh`

- shell-level wrapper forwarding check for `-- --lib --bins`

- shell-level wrapper forwarding check for `-- --tests`

- `just argument-comment-lint`

- `cargo test` in `tools/argument-comment-lint`

- `cargo test -p codex-terminal-detection`

## Follow-up

- Clean up remaining Linux-only target-gated callsites, then switch the

Linux lint lane back to the plain wrapper invocation.

- Clean up remaining Windows-only target-gated callsites, then switch

the Windows lint lane back to the plain wrapper invocation.

## Problem

App-server clients could only initiate ChatGPT login through the browser

callback flow, even though the shared login crate already supports

device-code auth. That left VS Code, Codex App, and other app-server

clients without a first-class way to use the existing device-code

backend when browser redirects are brittle or when the client UX wants

to own the login ceremony.

## Mental model

This change adds a second ChatGPT login start path to app-server: clients can now call `account/login/start` with `type: "chatgptDeviceCode"`. App-server immediately returns a `loginId` plus the device-code UX payload (`verificationUrl` and `userCode`), then completes the login asynchronously in the background using the existing `codex_login` polling flow. Successful device-code login still resolves to ordinary `chatgpt` auth, and completion continues to flow through the existing `account/login/completed` and `account/updated` notifications.

## Non-goals

This does not introduce a new auth mode, a new account shape, or a

device-code eligibility discovery API. It also does not add automatic

fallback to browser login in core; clients remain responsible for

choosing when to request device code and whether to retry with a

different UX if the backend/admin policy rejects it.

## Tradeoffs

We intentionally keep `login_chatgpt_common` as a local validation

helper instead of turning it into a capability probe. Device-code

eligibility is checked by actually calling `request_device_code`, which

means policy-disabled cases surface as an immediate request error rather

than an async completion event. We also keep the active-login state

machine minimal: browser and device-code logins share the same public

cancel contract, but device-code cancellation is implemented with a

local cancel token rather than a larger cross-crate refactor.

## Architecture

The protocol grows a new `chatgptDeviceCode` request/response variant in

app-server v2. On the server side, the new handler reuses the existing

ChatGPT login precondition checks, calls `request_device_code`, returns

the device-code payload, and then spawns a background task that waits on

either cancellation or `complete_device_code_login`. On success, it

reuses the existing auth reload and cloud-requirements refresh path

before emitting `account/login/completed` success and `account/updated`.

On failure or cancellation, it emits only `account/login/completed`

failure. The existing `account/login/cancel { loginId }` contract

remains unchanged and now works for both browser and device-code

attempts.

## Tests

Added protocol serialization coverage for the new request/response

variant, plus app-server tests for device-code success, failure, cancel,

and start-time rejection behavior. Existing browser ChatGPT login

coverage remains in place to show that the callback-based flow is

unchanged.

## Summary

This change adds websocket authentication at the app-server transport

boundary and enforces it before JSON-RPC `initialize`, so authenticated

deployments reject unauthenticated clients during the websocket

handshake rather than after a connection has already been admitted.

During rollout, websocket auth is opt-in for non-loopback listeners so

we do not break existing remote clients. If `--ws-auth ...` is

configured, the server enforces auth during websocket upgrade. If auth

is not configured, non-loopback listeners still start, but app-server

logs a warning and the startup banner calls out that auth should be

configured before real remote use.

The server supports two auth modes: a file-backed capability token, and

a standard HMAC-signed JWT/JWS bearer token verified with the

`jsonwebtoken` crate, with optional issuer, audience, and clock-skew

validation. Capability tokens are normalized, hashed, and compared in

constant time. Short shared secrets for signed bearer tokens are

rejected at startup. Requests carrying an `Origin` header are rejected

with `403` by transport middleware, and authenticated clients present

credentials as `Authorization: Bearer <token>` during websocket upgrade.
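To make the capability-token comparison concrete, here is a minimal sketch of extracting a bearer token and comparing it without short-circuiting. All three function names are hypothetical, the trimming rule is an assumed normalization, and the hashing step mentioned above is omitted to keep the example dependency-free. Production code would more likely rely on a vetted crate such as `subtle` than on a hand-rolled loop.

```rust
/// Extracts the token from an `Authorization: Bearer <token>` header value.
fn bearer_token(header_value: &str) -> Option<&str> {
    header_value
        .strip_prefix("Bearer ")
        .map(str::trim)
        .filter(|token| !token.is_empty())
}

/// Assumed normalization: strip surrounding whitespace such as a trailing
/// newline left in a token file.
fn normalize_token(raw: &str) -> &str {
    raw.trim()
}

/// Compares two byte slices by accumulating differences instead of returning
/// at the first mismatch. The length check is not constant time, which is one
/// reason to compare fixed-length digests rather than raw tokens.
fn constant_time_eq(a: &[u8], b: &[u8]) -> bool {
    if a.len() != b.len() {
        return false;
    }
    let mut diff = 0u8;
    for (x, y) in a.iter().zip(b.iter()) {
        diff |= x ^ y;
    }
    diff == 0
}
```

A handler could combine these as `bearer_token(header).map(|t| constant_time_eq(t.as_bytes(), normalize_token(stored).as_bytes()))` and reject the upgrade when the result is not `Some(true)`.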

## Validation

- `cargo test -p codex-app-server transport::auth`

- `cargo test -p codex-cli app_server_`

- `cargo clippy -p codex-app-server --all-targets -- -D warnings`

- `just bazel-lock-check`

Note: in the broad `cargo test -p codex-app-server connection_handling_websocket` run, the touched websocket auth cases passed, but unrelated Unix shutdown tests failed with a timeout in this environment.

---------

Co-authored-by: Eric Traut <etraut@openai.com>

### Summary

Add the v2 app-server filesystem watch RPCs and notifications, wire them

through the message processor, and implement connection-scoped watches

with notify-backed change delivery. This also updates the schema

fixtures, app-server documentation, and the v2 integration coverage for

watch and unwatch behavior.

This allows clients to efficiently watch for filesystem updates, e.g. to react to branch changes.

### Testing

- exercise watch lifecycles for directory changes, atomic file

replacement, missing-file targets, and unwatch cleanup

- create `codex-git-utils` and move the shared git helpers into it with

file moves preserved for diff readability

- move the `GitInfo` helpers out of `core` so stacked rollout work can

depend on the shared crate without carrying its own git info module

---------

Co-authored-by: Ahmed Ibrahim <219906144+aibrahim-oai@users.noreply.github.com>

Co-authored-by: Codex <noreply@openai.com>

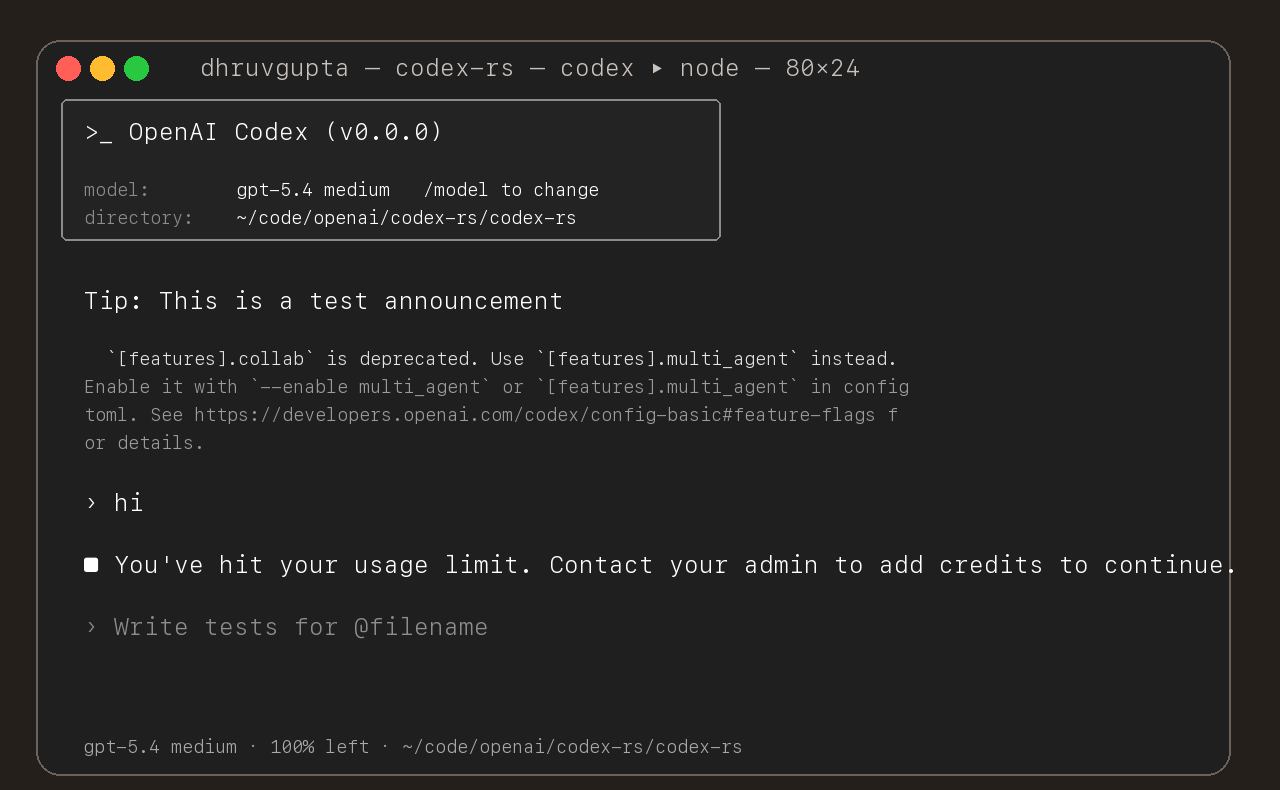

## Summary

- update the self-serve business usage-based limit message to direct

users to their admin for additional credits

- add a focused unit test for the self_serve_business_usage_based plan

branch

Also included:

If you hit a rate limit but still had credits, the Codex CLI would tell you to switch models. It no longer does this when you have credits.

## Test

- launched the source-built CLI and verified the updated message is

shown for the self-serve business usage-based plan

## Summary

- add `ForkSnapshotMode` to `ThreadManager::fork_thread` so callers can

request either a committed snapshot or an interrupted snapshot

- share the model-visible `<turn_aborted>` history marker between the

live interrupt path and interrupted forks

- update the small set of direct fork callsites to pass

`ForkSnapshotMode::Committed`

Note: this enables `/btw` to work similarly to pressing Esc to interrupt (hopefully keeping it somewhat in distribution).

---------

Co-authored-by: Codex <noreply@openai.com>

- emit a typed `thread/realtime/transcriptUpdated` notification from

live realtime transcript deltas

- expose that notification as flat `threadId`, `role`, and `text` fields

instead of a nested transcript array

- continue forwarding raw `handoff_request` items on

`thread/realtime/itemAdded`, including the accumulated

`active_transcript`

- update app-server docs, tests, and generated protocol schema artifacts

to match the delta-based payloads

---------

Co-authored-by: Codex <noreply@openai.com>

This PR adds a URI-based system for referencing agents within a tree. It comes out of a sync between research and engineering.

The main agent (the one manually spawned by a user) is always called `/root`. Any sub-agent it spawns gets a path such as `/root/agent_1`, where `agent_1` is chosen by the model.

Any agent can contact any other agent using its path.

Paths can be given either as absolute paths or relative to the calling agent.

Resume is not yet supported on this new path.
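As a sketch of how absolute and relative agent paths might resolve, consider the function below. `resolve_agent_path` is a hypothetical name, and the rule that a relative reference is appended to the caller's own path (so it names a descendant of the caller) is an assumption; the description above does not specify the exact relative-path semantics.

```rust
/// Resolves `reference` as seen from the agent at `caller_path`.
/// Absolute references (starting with `/`) are returned unchanged.
/// Relative references are assumed to be children of the caller.
fn resolve_agent_path(caller_path: &str, reference: &str) -> String {
    if reference.starts_with('/') {
        reference.to_string()
    } else {
        format!("{}/{}", caller_path.trim_end_matches('/'), reference)
    }
}
```

Under that assumption, `/root` addressing `agent_1` and any agent addressing `/root/agent_1` reach the same sub-agent.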

- Split the feature system into a new `codex-features` crate.

- Cut `codex-core` and workspace consumers over to the new config and

warning APIs.

Co-authored-by: Ahmed Ibrahim <219906144+aibrahim-oai@users.noreply.github.com>

Co-authored-by: Codex <noreply@openai.com>

## Summary

Persist Stop-hook continuation prompts as `user` messages instead of hidden `developer` messages, plus some requested integration tests.

This is a follow-up to @pakrym's comment in https://github.com/openai/codex/pull/14532 to make sure stop-block continuation prompts match training for turn loops.

- Stop continuation now writes `<hook_prompt hook_run_id="...">stop hook's user prompt</hook_prompt>`

- Introduces quick-xml dependency, though we already indirectly depended

on it anyway via syntect

- This PR only has about 500 lines of actual logic changes, the rest is

tests/schema

## Testing

Example run (with a SessionStart hook and 3 Stop hooks). This shows context added by session start, then two stop hooks sending their own additional prompts in a new turn. The model responds with a single message addressing both. When that turn ends, the hooks detect via `stop_hook_active` that they just ran and decide not to loop forever.

Test files for this (unzip, move codex -> .codex): [codex.zip](https://github.com/user-attachments/files/26075806/codex.zip)

```
› cats

• Running SessionStart hook: lighting the observatory
SessionStart hook (completed)
warning: Hi, I'm a session start hook for wizard-tower (startup).
hook context: A wimboltine stonpet is an exotic cuisine from hyperspace

• Cats are tiny zen wizards, my friend: equal parts nap, mystery, and chaos. If you want, we can talk
cat facts, cat breeds, cat names, or build something cat-themed in this repo.

• Running Stop hook: checking the tower wards
• Running Stop hook: sacking the guards
• Running Stop hook: hiring the guards
Stop hook (completed)
warning: Wizard Tower Stop hook reviewed the completed reply (177 chars).
Stop hook (blocked)
warning: Wizard Tower Stop hook continuing conversation
feedback: cook the stonpet
Stop hook (blocked)
warning: Wizard Tower Stop hook continuing conversation
feedback: eat the cooked stonpet

• Stonpet’s cooked, aloha style: flash-seared over a blue quasiflame, glazed with nebula salt, and
rested until the hyperspace juices settle.
Now we eat with gratitude, my friend. One mindful bite in, and the flavor is pure cosmic surf:
smoky, bright, and totally out of this dimension.

• Running Stop hook: checking the tower wards
• Running Stop hook: sacking the guards
• Running Stop hook: hiring the guards
Stop hook (completed)
warning: Wizard Tower Stop hook reviewed the completed reply (285 chars).
Stop hook (completed)
warning: Wizard Tower Stop hook saw a second pass and stayed calm to avoid a loop.
Stop hook (completed)
warning: Wizard Tower Stop hook saw a second pass and stayed calm to avoid a loop.
```

1. Added SessionSource::Custom(String) and --session-source.

2. Enforced plugin and skill products by session_source.

3. Applied the same filtering to curated background refresh.

This PR adds a new `thread/shellCommand` app server API so clients can

implement `!` shell commands. These commands are executed within the

sandbox, and the command text and output are visible to the model.

The internal implementation mirrors the current TUI `!` behavior.

- persist shell command execution as `CommandExecution` thread items,

including source and formatted output metadata

- bridge live and replayed app-server command execution events back into

the existing `tui_app_server` exec rendering path

This PR also wires `tui_app_server` to submit `!` commands through the

new API.

Resubmit https://github.com/openai/codex/pull/15020 with correct

content.

1. Use requirement-resolved config.features as the plugin gate.

2. Guard plugin/list, plugin/read, and related flows behind that gate.

3. Skip bad marketplace.json files instead of failing the whole list.

4. Simplify plugin state and caching.


- Add shared Product support to marketplace plugin policy and skill policy (not enforced yet).

- Move marketplace installation/authentication under policy and model it

as MarketplacePluginPolicy.

- Rename plugin/marketplace local manifest types to separate raw serde

shapes from resolved in-memory models.

- route realtime startup, input, and transport failures through a single

shutdown path

- emit one realtime error/closed lifecycle while clearing session state

once

---------

Co-authored-by: Codex <noreply@openai.com>

Co-authored-by: Ahmed Ibrahim <219906144+aibrahim-oai@users.noreply.github.com>

- thread the realtime version into conversation start and app-server

notifications

- keep playback-aware mic gating and playback interruption behavior on

v2 only, leaving v1 on the legacy path

## Stack Position

2/4. Built on top of #14828.

## Base

- #14828

## Unblocks

- #14829

- #14827

## Scope

- Port the realtime v2 wire parsing, session, app-server, and

conversation runtime behavior onto the split websocket-method base.

- Branch runtime behavior directly on the current realtime session kind

instead of parser-derived flow flags.

- Keep regression coverage in the existing e2e suites.

---------

Co-authored-by: Codex <noreply@openai.com>

- Added `forceRemoteSync` to `plugin/install` and `plugin/uninstall`.
- With `forceRemoteSync=true`, we update the remote plugin status first, then apply the local change only if the backend call succeeds.
- Kept `plugin/list(forceRemoteSync=true)` as the main reconciliation path; for now it treats remote `enabled=false` as uninstall. We will eventually migrate to `plugin/installed` for more precise state handling.
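The remote-first ordering for `forceRemoteSync` can be sketched as below. `sync_then_apply` and its closure parameters are hypothetical stand-ins for the backend call and the local install or uninstall step; the error type is simplified to `String` for illustration.

```rust
/// Applies a plugin change, optionally gating it on a successful remote update.
/// `update_remote` and `apply_local` are placeholders for the real operations.
fn sync_then_apply<R, L>(
    force_remote_sync: bool,
    update_remote: R,
    apply_local: L,
) -> Result<(), String>
where
    R: FnOnce() -> Result<(), String>,
    L: FnOnce() -> Result<(), String>,
{
    if force_remote_sync {
        // A remote failure returns early, so the local state is left untouched.
        update_remote()?;
    }
    apply_local()
}
```

The design choice this models is that local state never gets ahead of the backend when the caller asks for a forced sync.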

This extends dynamic_tool_calls so that a tool can be hidden from the model context while remaining usable through the general tool-calling runtime (for example, from js_repl/code_mode).

Make plugins' `defaultPrompt` an array, while keeping backward compatibility for strings.

App-server limits the array to 3 entries of up to 128 chars each. It drops extra entries and sets over-long ones to `None`, without erroring when those invariants are violated.

Added tests; tested locally.
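The `defaultPrompt` limits can be illustrated with a short sketch. `normalize_default_prompts` and the two constants are hypothetical names, and counting the 128-char limit in Unicode scalar values rather than bytes is an assumption.

```rust
const MAX_PROMPTS: usize = 3;
const MAX_PROMPT_CHARS: usize = 128;

/// Keeps at most `MAX_PROMPTS` entries and replaces over-long entries with
/// `None` instead of returning an error.
fn normalize_default_prompts(prompts: Vec<String>) -> Vec<Option<String>> {
    prompts
        .into_iter()
        .take(MAX_PROMPTS) // extra entries are silently dropped
        .map(|prompt| {
            if prompt.chars().count() <= MAX_PROMPT_CHARS {
                Some(prompt)
            } else {
                None // too long: keep the slot, drop the text
            }
        })
        .collect()
}
```

Keeping a `None` in place of an over-long entry preserves the positions of the remaining prompts.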

We regularly get bug reports from users who mistakenly have the

`OPENAI_BASE_URL` environment variable set. This PR deprecates this

environment variable in favor of a top-level config key

`openai_base_url` that is used for the same purpose. By making it a

config key, it will be more visible to users. It will also participate

in all of the infrastructure we've added for layered and managed

configs.

Summary

- introduce the `openai_base_url` top-level config key, update schema/tests, and route the built-in openai provider through it
- fall back to the deprecated `OPENAI_BASE_URL` env var, but warn the user of the deprecation, when no `openai_base_url` config key is present
- update CLI, SDK, and TUI code to prefer the new config path (with a deprecated env-var fallback) and document the SDK behavior change
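The precedence between the config key and the deprecated env var can be sketched as a pure function. `resolve_openai_base_url`, `ResolvedBaseUrl`, the warning text, and the default URL constant are all assumptions for illustration; the env value is passed in as a parameter rather than read from the process environment so the logic stays easy to test.

```rust
/// Assumed default for the built-in provider; illustrative only.
const DEFAULT_OPENAI_BASE_URL: &str = "https://api.openai.com/v1";

#[derive(Debug, PartialEq, Eq)]
struct ResolvedBaseUrl {
    url: String,
    /// Set only when the deprecated env var supplied the value.
    deprecation_warning: Option<String>,
}

/// Prefers the `openai_base_url` config key, then the deprecated
/// `OPENAI_BASE_URL` env var (with a warning), then the default.
fn resolve_openai_base_url(config_value: Option<&str>, env_value: Option<&str>) -> ResolvedBaseUrl {
    match (config_value, env_value) {
        // The config key wins and silences the env var entirely.
        (Some(url), _) => ResolvedBaseUrl { url: url.to_string(), deprecation_warning: None },
        (None, Some(url)) => ResolvedBaseUrl {
            url: url.to_string(),
            deprecation_warning: Some(
                "OPENAI_BASE_URL is deprecated; set openai_base_url in config instead".to_string(),
            ),
        },
        (None, None) => ResolvedBaseUrl {
            url: DEFAULT_OPENAI_BASE_URL.to_string(),
            deprecation_warning: None,
        },
    }
}
```

In this sketch, a user with a stray `OPENAI_BASE_URL` still gets the old behavior but also sees a warning pointing at the config key.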