## Summary

- reduce public module visibility across Rust crates, preferring private or crate-private modules with explicit crate-root public exports

- update external call sites and tests to use the intended public crate APIs instead of reaching through module trees

- add the module visibility guideline to AGENTS.md
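
As a rough illustration of the guideline above, here is a minimal sketch of a private module with an explicit crate-root export. The module and function names (`parser`, `parse_flag`, `is_flag`, `count_flags`) are hypothetical and do not come from the codebase.

```rust
// Hypothetical crate layout, collapsed into one file for illustration.

// Private module: code outside this crate cannot name `parser::...`.
mod parser {
    /// Intended public API; re-exported from the crate root below.
    pub fn parse_flag(arg: &str) -> Option<&str> {
        arg.strip_prefix("--")
    }

    /// Crate-private helper: usable inside the crate, never exported.
    pub(crate) fn is_flag(arg: &str) -> bool {
        arg.starts_with("--")
    }
}

// Explicit crate-root export: external call sites write `my_crate::parse_flag`
// instead of reaching through `my_crate::parser::parse_flag`.
pub use parser::parse_flag;

/// Crate-internal code can still use the crate-private helper.
pub fn count_flags(args: &[&str]) -> usize {
    args.iter().filter(|a| parser::is_flag(a)).count()
}
```

With this shape, moving or renaming `parser` later does not break external callers, because they only ever depended on the crate-root path.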

## Validation

- `cargo check --workspace --all-targets --message-format=short` passed before the final fix/format pass

- `just fix` completed successfully

- `just fmt` completed successfully

- `git diff --check` passed

# Why this PR exists

This PR is trying to fix a coverage gap in the Windows Bazel Rust test lane.

Before this change, the Windows `bazel test //...` job was nominally part of PR CI, but a non-trivial set of `//codex-rs/...` Rust test targets did not actually contribute test signal on Windows. In particular, targets such as `//codex-rs/core:core-unit-tests`, `//codex-rs/core:core-all-test`, and `//codex-rs/login:login-unit-tests` were incompatible during Bazel analysis on the Windows gnullvm platform, so they never reached test execution there. That is why the Cargo-powered Windows CI job could surface Windows-only failures that the Bazel-powered job did not report: Cargo was executing those tests, while Bazel was silently dropping them from the runnable target set.

The main goal of this PR is to make the Windows Bazel test lane execute those Rust test targets instead of skipping them during analysis, while still preserving `windows-gnullvm` as the target configuration for the code under test. In other words: use an MSVC host/exec toolchain where Bazel helper binaries and build scripts need it, but continue compiling the actual crate targets with the Windows gnullvm cfgs that our current Bazel matrix is supposed to exercise.

# Important scope note

This branch intentionally removes the non-resource-loading `.rs` test and production-code changes from the earlier `codex/windows-bazel-rust-test-coverage` branch. The only Rust source changes kept here are runfiles/resource-loading fixes in TUI tests:

- `codex-rs/tui/src/chatwidget/tests.rs`

- `codex-rs/tui/tests/manager_dependency_regression.rs`

That is deliberate. Since the corresponding tests already pass under Cargo, this PR is meant to test whether Bazel infrastructure/toolchain fixes alone are enough to get a healthy Windows Bazel test signal, without changing test behavior for Windows timing, shell output, or SQLite file-locking.

# How this PR changes the Windows Bazel setup

## 1. Split Windows host/exec and target concerns in the Bazel test lane

The core change is that the Windows Bazel test job now opts into an MSVC host platform for Bazel execution-time tools, but only for `bazel test`, not for the Bazel clippy build.

Files:

- `.github/workflows/bazel.yml`

- `.github/scripts/run-bazel-ci.sh`

- `MODULE.bazel`

What changed:

- `run-bazel-ci.sh` now accepts `--windows-msvc-host-platform`.

- When that flag is present on Windows, the wrapper appends `--host_platform=//:local_windows_msvc` unless the caller already provided an explicit `--host_platform`.

- `bazel.yml` passes that wrapper flag only for the Windows `bazel test //...` job.

- The Bazel clippy job intentionally does **not** pass that flag, so clippy stays on the default Windows gnullvm host/exec path and continues linting against the target cfgs we care about.

- `run-bazel-ci.sh` also now forwards `CODEX_JS_REPL_NODE_PATH` on Windows and normalizes the `node` executable path with `cygpath -w`, so tests that need Node resolve the runner's Node installation correctly under the Windows Bazel test environment.
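
The opt-in host-platform rule from the list above can be sketched as a small pure function. The real logic lives in the `run-bazel-ci.sh` shell wrapper; this Rust version only models the decision, and the function and parameter names are assumptions made for illustration.

```rust
/// Host-platform argument named in the PR description.
const MSVC_HOST_PLATFORM: &str = "--host_platform=//:local_windows_msvc";

/// Returns the Bazel arguments after applying the opt-in host-platform rule.
/// `is_windows` and `msvc_host_requested` stand in for the wrapper's OS check
/// and its `--windows-msvc-host-platform` flag (both names are hypothetical).
pub fn with_host_platform(
    mut bazel_args: Vec<String>,
    is_windows: bool,
    msvc_host_requested: bool,
) -> Vec<String> {
    // An explicit `--host_platform=...` or `--host_platform ...` from the caller wins.
    let caller_set_it = bazel_args
        .iter()
        .any(|a| a == "--host_platform" || a.starts_with("--host_platform="));
    if is_windows && msvc_host_requested && !caller_set_it {
        bazel_args.push(MSVC_HOST_PLATFORM.to_string());
    }
    bazel_args
}
```

Under this model the clippy job simply never sets `msvc_host_requested`, so its arguments pass through unchanged.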

Why this helps:

- The original incompatibility chain was mostly on the **exec/tool** side of the graph, not in the Rust test code itself. Moving host tools to MSVC lets Bazel resolve helper binaries and generators that were not viable on the gnullvm exec platform.

- Keeping the target platform on gnullvm preserves cfg coverage for the crates under test, which is important because some Windows behavior differs between `msvc` and `gnullvm`.

## 2. Teach the repo's Bazel Rust macro about Windows link flags and integration-test knobs

Files:

- `defs.bzl`

- `codex-rs/core/BUILD.bazel`

- `codex-rs/otel/BUILD.bazel`

- `codex-rs/tui/BUILD.bazel`

What changed:

- Replaced the old gnullvm-only linker flag block with `WINDOWS_RUSTC_LINK_FLAGS`, which now handles both Windows ABIs:
  - gnullvm gets `-C link-arg=-Wl,--stack,8388608`
  - MSVC gets `-C link-arg=/STACK:8388608`, `-C link-arg=/NODEFAULTLIB:libucrt.lib`, and `-C link-arg=ucrt.lib`

- Threaded those Windows link flags into generated `rust_binary`, unit-test binaries, and integration-test binaries.

- Extended `codex_rust_crate(...)` with:
  - `integration_test_args`
  - `integration_test_timeout`

- Used those new knobs to:
  - mark `//codex-rs/core:core-all-test` as a long-running integration test
  - serialize `//codex-rs/otel:otel-all-test` with `--test-threads=1`

- Added `src/**/*.rs` to `codex-rs/tui` test runfiles, because one regression test scans source files at runtime and Bazel does not expose source-tree directories unless they are declared as data.
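
To make the per-ABI flag selection concrete, here is a small sketch of the mapping described above. The real definition is Starlark in `defs.bzl`; this Rust rendering is only a model, the type and function names are hypothetical, and the flag strings are copied as written in this description without modeling how they are tokenized.

```rust
/// The two Windows ABIs discussed above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum WindowsAbi {
    Gnullvm,
    Msvc,
}

/// The stack size requested by both flag sets: 8 MiB expressed in bytes.
pub const STACK_BYTES: u64 = 8 * 1024 * 1024; // 8388608

/// Returns the link flags for one ABI, as listed in the PR description.
pub fn windows_rustc_link_flags(abi: WindowsAbi) -> Vec<&'static str> {
    match abi {
        // GNU-style driver: the stack size goes through `-Wl,`.
        WindowsAbi::Gnullvm => vec!["-C link-arg=-Wl,--stack,8388608"],
        // MSVC-style linker: `/STACK`, plus swapping `libucrt.lib` for `ucrt.lib`.
        WindowsAbi::Msvc => vec![
            "-C link-arg=/STACK:8388608",
            "-C link-arg=/NODEFAULTLIB:libucrt.lib",
            "-C link-arg=ucrt.lib",
        ],
    }
}
```

Both branches request the same 8 MiB stack; only the linker dialect differs.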

Why this helps:

- Once host-side MSVC tools are available, we still need the generated Rust test binaries to link correctly on Windows. The MSVC-side stack/UCRT flags make those binaries behave more like their Cargo-built equivalents.

- The integration-test macro knobs avoid hardcoding one-off test behavior in ad hoc BUILD rules and make the generated test targets more expressive where Bazel and Cargo have different runtime defaults.

## 3. Patch `rules_rs` / `rules_rust` so Windows MSVC exec-side Rust and build scripts are actually usable

Files:

- `MODULE.bazel`

- `patches/rules_rs_windows_exec_linker.patch`

- `patches/rules_rust_windows_bootstrap_process_wrapper_linker.patch`

- `patches/rules_rust_windows_build_script_runner_paths.patch`

- `patches/rules_rust_windows_exec_msvc_build_script_env.patch`

- `patches/rules_rust_windows_msvc_direct_link_args.patch`

- `patches/rules_rust_windows_process_wrapper_skip_temp_outputs.patch`

- `patches/BUILD.bazel`

What these patches do:

- `rules_rs_windows_exec_linker.patch`
  - Adds a `rust-lld` filegroup for Windows Rust toolchain repos, symlinked to `lld-link.exe` from `PATH`.
  - Marks Windows toolchains as using a direct linker driver.
  - Supplies Windows stdlib link flags for both gnullvm and MSVC.

- `rules_rust_windows_bootstrap_process_wrapper_linker.patch`
  - For Windows MSVC Rust targets, prefers the Rust toolchain linker over an inherited C++ linker path like `clang++`.
  - This specifically avoids the broken mixed-mode command line where rustc emits MSVC-style `/NOLOGO` / `/LIBPATH:` / `/OUT:` arguments but Bazel still invokes `clang++.exe`.

- `rules_rust_windows_build_script_runner_paths.patch`
  - Normalizes forward-slash execroot-relative paths into Windows path separators before joining them on Windows.
  - Uses short Windows paths for `RUSTC`, `OUT_DIR`, and the build-script working directory to avoid path-length and quoting issues in third-party build scripts.
  - Exposes `RULES_RUST_BAZEL_BUILD_SCRIPT_RUNNER=1` to build scripts so crate-local patches can detect "this is running under Bazel's build-script runner".
  - Fixes the Windows runfiles cleanup filter so generated files with retained suffixes are actually retained.

- `rules_rust_windows_exec_msvc_build_script_env.patch`
  - For exec-side Windows MSVC build scripts, stops force-injecting Bazel's `CC`, `CXX`, `LD`, `CFLAGS`, and `CXXFLAGS` when that would send GNU-flavored tool paths/flags into MSVC-oriented Cargo build scripts.
  - Rewrites or strips GNU-only `--sysroot`, MinGW include/library paths, stack-protector, and `_FORTIFY_SOURCE` flags on the MSVC exec path.
  - The practical effect is that build scripts can fall back to the Visual Studio toolchain environment already exported by CI instead of crashing inside Bazel's hermetic `clang.exe` setup.

- `rules_rust_windows_msvc_direct_link_args.patch`
  - When using a direct linker on Windows, stops forwarding GNU driver flags such as `-L...` and `--sysroot=...` that `lld-link.exe` does not understand.
  - Passes non-`.lib` native artifacts as explicit `-Clink-arg=<path>` entries when needed.
  - Filters C++ runtime libraries to `.lib` artifacts on the Windows direct-driver path.

- `rules_rust_windows_process_wrapper_skip_temp_outputs.patch`
  - Excludes transient `*.tmp*` and `*.rcgu.o` files from process-wrapper dependency search-path consolidation, so unstable compiler outputs do not get treated as real link search-path inputs.
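
Two of the behaviors listed above, separator normalization before joining and skipping transient compiler outputs, are simple enough to sketch. This is not the patched `rules_rust` code; the helper names are hypothetical and the matching rules are a straightforward reading of the description (`*.tmp*` and `*.rcgu.o`).

```rust
/// Rewrites a forward-slash, execroot-relative path to use Windows separators.
pub fn to_windows_separators(rel: &str) -> String {
    rel.replace('/', "\\")
}

/// Joins an execroot and a relative path, normalizing the relative part first
/// so the result does not mix `/` and `\`.
pub fn join_windows(execroot: &str, rel: &str) -> String {
    format!(
        "{}\\{}",
        execroot.trim_end_matches('\\'),
        to_windows_separators(rel)
    )
}

/// True for transient compiler outputs that should not contribute to
/// dependency search paths: any `*.tmp*` name or a `*.rcgu.o` object file.
pub fn is_transient_output(file_name: &str) -> bool {
    file_name.contains(".tmp") || file_name.ends_with(".rcgu.o")
}
```

The short-path conversion mentioned in the same patch is not modeled here, since it depends on Windows APIs rather than string handling.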

Why this helps:

- The host-platform split alone was not enough. Once Bazel started analyzing/running previously incompatible Rust tests on Windows, the next failures were in toolchain plumbing:
  - MSVC-targeted Rust tests were being linked through `clang++` with MSVC-style arguments.
  - Cargo build scripts running under Bazel's Windows MSVC exec platform were handed Unix/GNU-flavored path and flag shapes.
  - Some generated paths were too long or had path-separator forms that third-party Windows build scripts did not tolerate.

- These patches make that mixed Bazel/Cargo/Rust/MSVC path workable enough for the test lane to actually build and run the affected crates.
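
One piece of that plumbing, dropping GNU driver flags before they reach a direct MSVC-style linker, might look roughly like the sketch below. The function name is hypothetical and the prefix list covers only the two flag shapes named in this description (`-L...` and `--sysroot=...`); the actual patch may filter more.

```rust
/// Drops GNU driver flags that a direct MSVC-style linker such as
/// `lld-link.exe` does not understand, keeping every other argument in order.
pub fn filter_direct_link_args(args: &[&str]) -> Vec<String> {
    args.iter()
        .filter(|a| !(a.starts_with("-L") || a.starts_with("--sysroot=")))
        .map(|a| a.to_string())
        .collect()
}
```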

## 4. Patch third-party crate build scripts that were not robust under Bazel's Windows MSVC build-script path

Files:

- `MODULE.bazel`

- `patches/aws-lc-sys_windows_msvc_prebuilt_nasm.patch`

- `patches/ring_windows_msvc_include_dirs.patch`

- `patches/zstd-sys_windows_msvc_include_dirs.patch`

What changed:

- `aws-lc-sys`
  - Detects Bazel's Windows MSVC build-script runner via `RULES_RUST_BAZEL_BUILD_SCRIPT_RUNNER` or a `bazel-out` manifest-dir path.
  - Uses `clang-cl` for Bazel Windows MSVC builds when no explicit `CC`/`CXX` is set.
  - Allows prebuilt NASM on the Bazel Windows MSVC path even when `nasm` is not available directly in the runner environment.
  - Avoids canonicalizing `CARGO_MANIFEST_DIR` in the Bazel Windows MSVC case, because that path may point into Bazel output/runfiles state where preserving the given path is more reliable than forcing a local filesystem canonicalization.

- `ring`
  - Under the Bazel Windows MSVC build-script runner, copies the pregenerated source tree into `OUT_DIR` and uses that as the generated-source root.
  - Adds include paths needed by MSVC compilation for Fiat/curve25519/P-256 generated headers.
  - Rewrites a few relative includes in C sources so the added include directories are sufficient.

- `zstd-sys`
  - Adds MSVC-only include directories for `compress`, `decompress`, and feature-gated dictionary/legacy/seekable sources.
  - Skips `-fvisibility=hidden` on MSVC targets, where that GCC/Clang-style flag is not the right mechanism.
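
The runner detection described for `aws-lc-sys` can be sketched as a pure function over the two signals. This is an illustration, not the patch itself: the function name is hypothetical, and treating any set value of the environment variable as a match (rather than requiring exactly `1`) is an assumption.

```rust
/// Returns true when either signal named above is present.
/// `runner_env` is the value of `RULES_RUST_BAZEL_BUILD_SCRIPT_RUNNER`, if set;
/// `manifest_dir` is the value of `CARGO_MANIFEST_DIR`.
pub fn is_bazel_build_script_runner(runner_env: Option<&str>, manifest_dir: &str) -> bool {
    // Assumption: any set value counts; the runner is described as exporting `=1`.
    let env_says_bazel = runner_env.is_some();
    // Look for a `bazel-out` path component with either separator style.
    let path_says_bazel = manifest_dir
        .split(|c: char| c == '/' || c == '\\')
        .any(|component| component == "bazel-out");
    env_says_bazel || path_says_bazel
}
```

In a build script, the inputs would come from `std::env::var("RULES_RUST_BAZEL_BUILD_SCRIPT_RUNNER").ok()` and `std::env::var("CARGO_MANIFEST_DIR")`; keeping the check pure makes it easy to test without touching the process environment.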

Why this helps:

- After the `rules_rust` plumbing started running build scripts on the Windows MSVC exec path, some third-party crates still failed for crate-local reasons: wrong compiler choice, missing include directories, build-script assumptions about manifest paths, or Unix-only C compiler flags.

- These crate patches address those crate-local assumptions so the larger toolchain change can actually reach first-party Rust test execution.

## 5. Keep the only `.rs` test changes to Bazel/Cargo runfiles parity

Files:

- `codex-rs/tui/src/chatwidget/tests.rs`

- `codex-rs/tui/tests/manager_dependency_regression.rs`

What changed:

- Instead of asking `find_resource!` for a directory runfile like `src/chatwidget/snapshots` or `src`, these tests now resolve one known file runfile first and then walk to its parent directory.

Why this helps:

- Bazel runfiles are more reliable for explicitly declared files than for source-tree directories that happen to exist in a Cargo checkout.

- This keeps the tests working under both Cargo and Bazel without changing their actual assertions.
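
The "resolve a file, then walk to its parent" approach can be sketched with the standard library alone. In the real tests the file path comes from `find_resource!`; here it is passed in as a plain path, and the helper name and example file names are hypothetical.

```rust
use std::path::{Path, PathBuf};

/// Given the resolved path of one known, explicitly declared file, returns the
/// directory that contains it. Returns `None` if the path has no parent.
pub fn dir_of_known_file(resolved_file: &Path) -> Option<PathBuf> {
    resolved_file.parent().map(Path::to_path_buf)
}
```

For example, resolving a known snapshot file under `src/chatwidget/snapshots` and taking its parent yields the snapshots directory under both Cargo and Bazel, without ever asking the runfiles layer for a directory.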

# What we tried before landing on this shape, and why those attempts did not work

## Attempt 1: Force `--host_platform=//:local_windows_msvc` for all Windows Bazel jobs

This did make the previously incompatible test targets show up during analysis, but it also pushed the Bazel clippy job and some unrelated build actions onto the MSVC exec path.

Why that was bad:

- Windows clippy started running third-party Cargo build scripts with Bazel's MSVC exec settings and crashed in crates such as `tree-sitter` and `libsqlite3-sys`.

- That was a regression in a job that was previously giving useful gnullvm-targeted lint signal.

What this PR does instead:

- The wrapper flag is opt-in, and `bazel.yml` uses it only for the Windows `bazel test` lane.

- The clippy lane stays on the default Windows gnullvm host/exec configuration.

## Attempt 2: Broaden the `rules_rust` linker override to all Windows Rust actions

This fixed the MSVC test-lane failure where normal `rust_test` targets were linked through `clang++` with MSVC-style arguments, but it broke the default gnullvm path.

Why that was bad:

- `@@rules_rs++rules_rust+rules_rust//util/process_wrapper:process_wrapper` on the gnullvm exec platform started linking with `lld-link.exe` and then failed to resolve MinGW-style libraries such as `-lkernel32`, `-luser32`, and `-lmingw32`.

What this PR does instead:

- The linker override is restricted to Windows MSVC targets only.

- The gnullvm path keeps its original linker behavior, while MSVC uses the direct Windows linker.

## Attempt 3: Keep everything on pure Windows gnullvm and patch the V8 / Python incompatibility chain instead

This would have preserved a single Windows ABI everywhere, but it is a much larger project than this PR.

Why that was not the practical first step:

- The original incompatibility chain ran through exec-side generators and helper tools, not only through crate code.

- `third_party/v8` is already special-cased on Windows gnullvm because `rusty_v8` only publishes Windows prebuilts under MSVC names.

- Fixing that path likely means deeper changes in V8/rules_python/rules_rust toolchain resolution and generator execution, not just one local CI flag.

What this PR does instead:

- Keep gnullvm for the target cfgs we want to exercise.

- Move only the Windows test lane's host/exec platform to MSVC, then patch the build-script/linker boundary enough for that split configuration to work.

## Attempt 4: Validate compatibility with `bazel test --nobuild ...`

This turned out to be a misleading local validation command.

Why:

- `bazel test --nobuild ...` can successfully analyze targets and then still exit 1 with "Couldn't start the build. Unable to run tests" because there are no runnable test actions after `--nobuild`.

Better local check:

```powershell

bazel build --nobuild --keep_going --host_platform=//:local_windows_msvc //codex-rs/login:login-unit-tests //codex-rs/core:core-unit-tests //codex-rs/core:core-all-test

```

# Which patches probably deserve upstream follow-up

My rough take is that the `rules_rs` / `rules_rust` patches are the highest-value upstream candidates, because they are fixing generic Windows host/exec + MSVC direct-linker behavior rather than Codex-specific test logic.

Strong upstream candidates:

- `patches/rules_rs_windows_exec_linker.patch`

- `patches/rules_rust_windows_bootstrap_process_wrapper_linker.patch`

- `patches/rules_rust_windows_build_script_runner_paths.patch`

- `patches/rules_rust_windows_exec_msvc_build_script_env.patch`

- `patches/rules_rust_windows_msvc_direct_link_args.patch`

- `patches/rules_rust_windows_process_wrapper_skip_temp_outputs.patch`

Why these seem upstreamable:

- They address general-purpose problems in the Windows MSVC exec path:
  - missing direct-linker exposure for Rust toolchains
  - wrong linker selection when rustc emits MSVC-style args
  - Windows path normalization/short-path issues in the build-script runner
  - forwarding GNU-flavored CC/link flags into MSVC Cargo build scripts
  - unstable temp outputs polluting process-wrapper search-path state

Potentially upstreamable crate patches, but likely with more care:

- `patches/zstd-sys_windows_msvc_include_dirs.patch`

- `patches/ring_windows_msvc_include_dirs.patch`

- `patches/aws-lc-sys_windows_msvc_prebuilt_nasm.patch`

Notes on those:

- The `zstd-sys` and `ring` include-path fixes look fairly generic for MSVC/Bazel build-script environments and may be straightforward to propose upstream after we confirm CI stability.

- The `aws-lc-sys` patch is useful, but it includes a Bazel-specific environment probe and CI-specific compiler fallback behavior. That probably needs a cleaner upstream-facing shape before sending it out, so upstream maintainers are not forced to adopt Codex's exact CI assumptions.

Probably not worth upstreaming as-is:

- The repo-local Starlark/test target changes in `defs.bzl`, `codex-rs/*/BUILD.bazel`, and `.github/scripts/run-bazel-ci.sh` are mostly Codex-specific policy and CI wiring, not generic rules changes.

# Validation notes for reviewers

On this branch, I ran the following local checks after dropping the non-resource-loading Rust edits:

```powershell

cargo test -p codex-tui

just --shell 'C:\Program Files\Git\bin\bash.exe' --shell-arg -lc -- fix -p codex-tui

python .\tools\argument-comment-lint\run-prebuilt-linter.py -p codex-tui

just --shell 'C:\Program Files\Git\bin\bash.exe' --shell-arg -lc fmt

```

One local caveat:

- `just argument-comment-lint` still fails on this Windows machine for an unrelated Bazel toolchain-resolution issue in `//codex-rs/exec:exec-all-test`, so I used the direct prebuilt linter for `codex-tui` as the local fallback.

# Expected reviewer takeaway

If this PR goes green, the important conclusion is that the Windows Bazel test coverage gap was primarily a Bazel host/exec toolchain problem, not a need to make the Rust tests themselves Windows-specific. That would be a strong signal that the deleted non-resource-loading Rust test edits from the earlier branch should stay out, and that future work should focus on upstreaming the generic `rules_rs` / `rules_rust` Windows fixes and reducing the crate-local patch surface.

The `OPENAI_BASE_URL` environment variable has been a significant support issue, so we decided to deprecate it in favor of an `openai_base_url` config key. We've had the deprecation warning in place for about a month, so users have had time to migrate to the new mechanism. This PR removes support for `OPENAI_BASE_URL` entirely.

Stacked on #16508.

This removes the temporary `codex-core` / `codex-login` re-export shims from the ownership split and rewrites callsites to import directly from `codex-model-provider-info`, `codex-models-manager`, `codex-api`, `codex-protocol`, `codex-feedback`, and `codex-response-debug-context`.

No behavior change intended; this is the mechanical import cleanup layer split out from the ownership move.

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

- split `models-manager` out of `core` and add `ModelsManagerConfig` plus `Config::to_models_manager_config()` so model metadata paths stop depending on `core::Config`

- move login-owned/auth-owned code out of `core` into `codex-login`, move model provider config into `codex-model-provider-info`, move API bridge mapping into `codex-api`, move protocol-owned types/impls into `codex-protocol`, and move response debug helpers into a dedicated `response-debug-context` crate

- move feedback tag emission into `codex-feedback`, relocate tests to the crates that now own the code, and keep broad temporary re-exports so this PR avoids a giant import-only rewrite
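
The `Config::to_models_manager_config()` idea, projecting a large config onto the narrow slice one subsystem needs, can be sketched as follows. Only the two type names and the method name come from this description; every field shown is hypothetical.

```rust
use std::path::PathBuf;

/// Stand-in for the large `core::Config`; all fields here are hypothetical.
pub struct Config {
    pub codex_home: PathBuf,
    pub model: String,
    pub sandbox_mode: String, // unrelated to model metadata
}

/// Narrow view consumed by the models manager; the field set is assumed.
#[derive(Debug, Clone, PartialEq)]
pub struct ModelsManagerConfig {
    pub codex_home: PathBuf,
    pub model: String,
}

impl Config {
    /// Copies only what the models manager needs, so that crate does not
    /// have to depend on the full `Config` type.
    pub fn to_models_manager_config(&self) -> ModelsManagerConfig {
        ModelsManagerConfig {
            codex_home: self.codex_home.clone(),
            model: self.model.clone(),
        }
    }
}
```

The dependency arrow then points from `core` to the models-manager crate rather than the other way around, which is what allows the split.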

## Major moves and decisions

- created `codex-models-manager` as the owner for model cache/catalog/config/model info logic, including the new `ModelsManagerConfig` struct

- created `codex-model-provider-info` as the owner for provider config parsing/defaults and kept temporary `codex-login`/`codex-core` re-exports for old import paths

- moved `api_bridge` error mapping + `CoreAuthProvider` into `codex-api`, while `codex-login::api_bridge` temporarily re-exports those symbols and keeps the `auth_provider_from_auth` wrapper

- moved `auth_env_telemetry` and `provider_auth` ownership to `codex-login`

- moved `CodexErr` ownership to `codex-protocol::error`, plus `StreamOutput`, `bytes_to_string_smart`, and network policy helpers to protocol-owned modules

- created `codex-response-debug-context` for `extract_response_debug_context`, `telemetry_transport_error_message`, and related response-debug plumbing instead of leaving that behavior in `core`

- moved `FeedbackRequestTags`, `emit_feedback_request_tags`, and `emit_feedback_request_tags_with_auth_env` to `codex-feedback`

- deferred removal of temporary re-exports and the mechanical import rewrites to a stacked follow-up PR so this PR stays reviewable

## Test moves

- moved auth refresh coverage from `core/tests/suite/auth_refresh.rs` to `login/tests/suite/auth_refresh.rs`

- moved text encoding coverage from `core/tests/suite/text_encoding_fix.rs` to `protocol/src/exec_output_tests.rs`

- moved model info override coverage from `core/tests/suite/model_info_overrides.rs` to `models-manager/src/model_info_overrides_tests.rs`

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

The remaining `vt100-tests` and `debug-logs` features in `codex-tui` were only gating test-only and debug-only behavior. Those feature toggles add Cargo and Bazel permutations without buying anything, and they make it easier for more crate features to linger in the workspace.

## What changed

- delete `vt100-tests` and `debug-logs` from `codex-tui`

- always compile the VT100 integration tests in the TUI test target instead of hiding them behind a Cargo feature

- remove the unused textarea debug logging branch instead of replacing it with another gate

- add the required argument-comment annotations in the VT100 tests now that Bazel sees those callsites during linting

- shrink the manifest verifier allowlist again so only the remaining real feature exceptions stay permitted

## How tested

- `cargo test -p codex-tui`

- `just argument-comment-lint -p codex-tui`

This is a follow-up to https://github.com/openai/codex/pull/15922. That previous PR deleted the old `tui` directory and left the new `tui_app_server` directory in place. This PR renames `tui_app_server` to `tui` and fixes up all references.

This is part 1 of 2 PRs that will delete the `tui` / `tui_app_server` split. This part simply deletes the existing `tui` directory and marks the `tui_app_server` feature flag as removed. I left the `tui_app_server` feature flag in place for now so its presence doesn't result in an error. It is simply ignored.

Part 2 will rename the `tui_app_server` directory to `tui`. I did this as two parts to reduce visible code churn.

CHAINED PR: note that the base is `eternal/hooks-pretooluse-bash`, not `main`, so the PR linked below should land first.

This adds a post-tool hook that matches the pre-tool functionality in https://github.com/openai/codex/pull/15211.

`PreToolUse` fires for plain shell calls and allows blocking them before they run. This `PostToolUse` hook runs after the command has executed.

Example run:

```

› as a test, run in parallel the following commands:

- echo 'one'

- echo '[block-pre-tool-use]'

- echo '[block-post-tool-use]'

⚠ MCP startup incomplete (failed: notion, linear)

• Cruising through those three commands in parallel now, and I’ll share the exact outputs right after

they land.

• Running PreToolUse hook: checking the observatory runes

• Running PreToolUse hook: checking the observatory runes

• Running PreToolUse hook: checking the observatory runes

PreToolUse hook (blocked)

warning: wizard-tower PreToolUse demo blocked a Bash command on purpose.

feedback: PreToolUse demo blocked the command. Remove [block-pre-tool-use] to continue.

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo 'one'

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo '[block-post-tool-use]'

• Ran echo '[block-post-tool-use]'

└ [block-post-tool-use]

• Ran echo 'one'

└ one

• Running PostToolUse hook: reading the observatory aftermath

• Running PostToolUse hook: reading the observatory aftermath

PostToolUse hook (blocked)

warning: wizard-tower PostToolUse demo blocked the Bash follow-through on purpose.

feedback: PostToolUse demo blocked the result after execution. Remove [block-post-tool-use] to

continue.

PostToolUse hook (completed)

warning: wizard-tower PostToolUse demo inspected Bash: echo 'one'

hook context: PostToolUse demo saw the Bash result. Response preview: one

──────────────────────────────────────────────────────────────────────────────────────────────────────

• Ran them in parallel. Little wave report:

- echo 'one' completed and returned one.

- echo '[block-pre-tool-use]' was blocked before execution by the PreToolUse hook.

- echo '[block-post-tool-use]' ran, but its result was blocked afterward by the PostToolUse hook.

The hook messages were:

- PreToolUse demo blocked the command. Remove [block-pre-tool-use] to continue.

- PostToolUse demo blocked the result after execution. Remove [block-post-tool-use] to continue.

› for the blocked messages (correctly blocked in this test) did the tool output land back into

context?

• Yeah, from this run:

- PreToolUse block: the command itself did not execute, so no command output landed in context. Only

the block message did.

- PostToolUse block: the command did execute, but the actual stdout did not come back into context.

Only the post-tool block message did.

So the blocked notifications landed in context, but the blocked command payloads themselves did not.

Nice clean guardrail, aloha.

```
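
The ordering visible in the example run (a pre-tool block prevents execution, a post-tool block withholds the output after execution) can be sketched as below. This is a simplified model rather than the hook implementation; the `Outcome` type, the `run_with_hooks` function, and the closure-based hook signatures are all hypothetical.

```rust
/// What the model ends up seeing for one shell command (hypothetical type).
#[derive(Debug, PartialEq)]
pub enum Outcome {
    /// PreToolUse blocked: the command never ran, only feedback is returned.
    BlockedBeforeRun { feedback: String },
    /// PostToolUse blocked: the command ran, but its output is withheld.
    BlockedAfterRun { feedback: String },
    /// No hook objected: the command output is returned.
    Completed { output: String },
}

/// Runs `command` with a pre hook and a post hook around it.
/// A hook returns `Some(feedback)` to block and `None` to allow.
pub fn run_with_hooks(
    command: &str,
    pre: impl Fn(&str) -> Option<String>,
    run: impl Fn(&str) -> String,
    post: impl Fn(&str, &str) -> Option<String>,
) -> Outcome {
    if let Some(feedback) = pre(command) {
        return Outcome::BlockedBeforeRun { feedback };
    }
    let output = run(command);
    match post(command, &output) {
        Some(feedback) => Outcome::BlockedAfterRun { feedback },
        None => Outcome::Completed { output },
    }
}
```

In both blocked cases only the feedback string is returned, which mirrors the transcript's observation that blocked command payloads did not land in context.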

This PR completes the conversion of non-interactive `codex exec` to use app server rather than directly using core events and methods.

### Summary

- move `codex-exec` off exec-owned `AuthManager` and `ThreadManager` state

- route exec bootstrap, resume, and auth refresh through existing app-server paths

- replace legacy `codex/event/*` decoding in exec with typed app-server notification handling

- update human and JSONL exec output adapters to translate existing app-server notifications only

- clean up "app server client" layer by eliminating support for legacy notifications; this is no longer needed

- remove exposure of `authManager` and `threadManager` from "app server client" layer

### Testing

- `exec` has pretty extensive unit and integration tests already, and these all pass

- In addition, I asked Codex to put together a comprehensive manual set of tests to cover all of the `codex exec` functionality (including command-line options), and it successfully generated and ran these tests

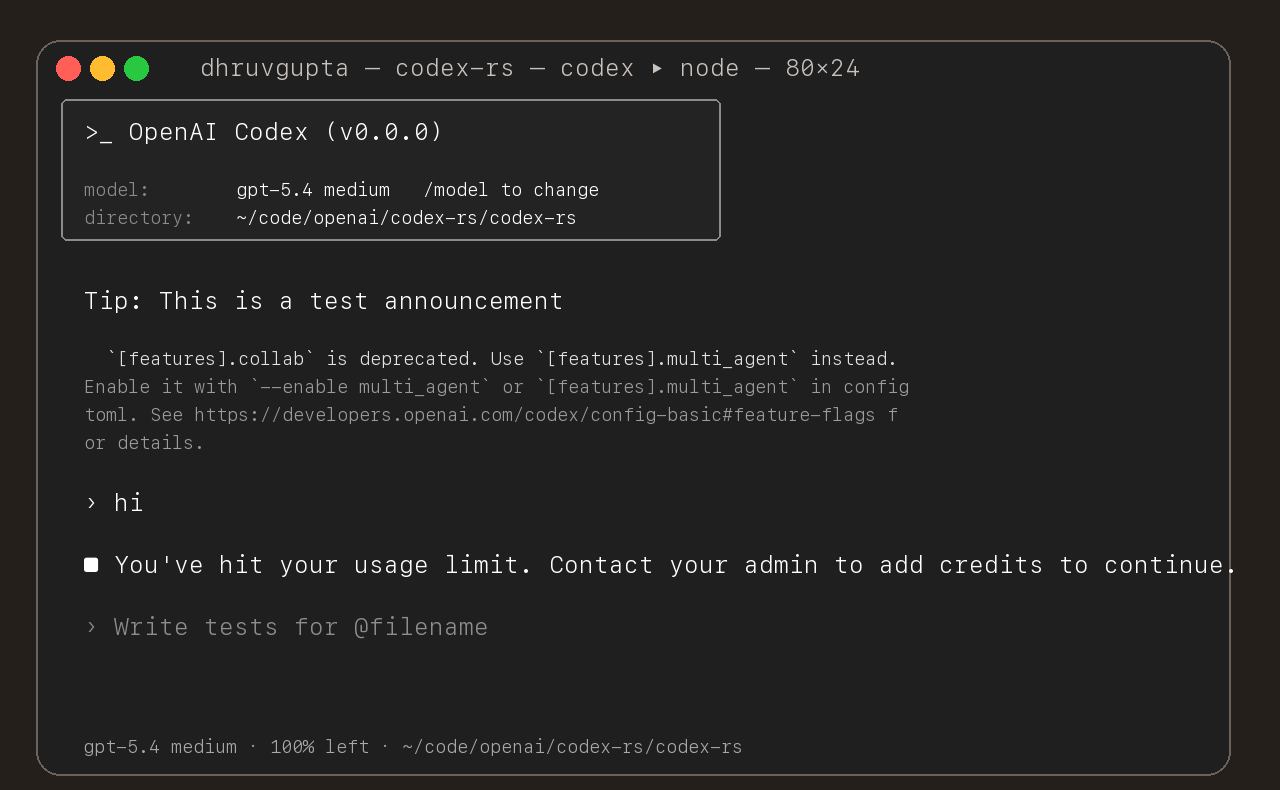

## Summary

- update the self-serve business usage-based limit message to direct users to their admin for additional credits

- add a focused unit test for the `self_serve_business_usage_based` plan branch

Also added:

If you hit a rate limit but still had credits, Codex CLI would tell you to switch models. It should not do that when you have credits, so this is now fixed.

## Test

- launched the source-built CLI and verified the updated message is shown for the self-serve business usage-based plan

Enhance pty utils:

* Support closing stdin

* Separate stderr and stdout streams to allow consumers to differentiate them

* Provide a compatibility helper to merge both streams back into a combined one

* Support specifying the terminal size for a pty, including on-demand resizes while the process is already running

* Support terminating the process while still consuming its outputs
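
The "merge both streams back into a combined one" compatibility helper might look roughly like this sketch. It uses `std::sync::mpsc` channels and threads purely for illustration; the actual pty utilities may use a different channel type or an async runtime, and the function name is hypothetical.

```rust
use std::sync::mpsc::{channel, Receiver};
use std::thread;

/// Merges separate stdout and stderr chunk streams into one combined stream.
/// Ordering between the two sources is not guaranteed; ordering within each
/// source is preserved. The merged stream ends once both sources have closed.
pub fn merge_streams(
    stdout: Receiver<Vec<u8>>,
    stderr: Receiver<Vec<u8>>,
) -> Receiver<Vec<u8>> {
    let (tx, rx) = channel();
    for source in [stdout, stderr] {
        let tx = tx.clone();
        thread::spawn(move || {
            for chunk in source {
                // Stop forwarding if the consumer has gone away.
                if tx.send(chunk).is_err() {
                    break;
                }
            }
        });
    }
    // The original `tx` is dropped here, so only the forwarding threads keep
    // the merged channel open.
    rx
}
```

Consumers that need to tell the streams apart keep the two receivers separate; callers written against the older combined output can wrap them with a helper like this.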

- override startup tooltips with model availability NUX and persist per-model show counts in config

- stop showing each model after four exposures and fall back to normal tooltips
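
A minimal sketch of the exposure-count rule described above, assuming the per-model counts are kept in a string-keyed map. The function name, the map representation, and the candidate-list parameter are hypothetical; only the limit of four exposures comes from this description.

```rust
use std::collections::HashMap;

/// Each model's availability NUX is shown at most this many times.
pub const MAX_EXPOSURES: u32 = 4;

/// Picks the first candidate model that has not yet reached the exposure
/// limit and records the new exposure. Returns `None` when every candidate is
/// exhausted, in which case the caller falls back to the normal tooltips.
pub fn next_nux_model<'a>(
    candidates: &[&'a str],
    show_counts: &mut HashMap<String, u32>,
) -> Option<&'a str> {
    for model in candidates {
        let count = show_counts.entry(model.to_string()).or_insert(0);
        if *count < MAX_EXPOSURES {
            *count += 1;
            return Some(*model);
        }
    }
    None
}
```

Persisting `show_counts` in config between runs is what makes the limit hold across sessions rather than per launch.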

## Problem

Codex’s TUI quit behavior has historically been easy to trigger accidentally and hard to reason about.

- `Ctrl+C`/`Ctrl+D` could terminate the UI immediately, even though these are common keys to press while trying to dismiss a modal, cancel a command, or recover from a stuck state.

- “Quit” and “shutdown” were not consistently separated, so some exit paths could bypass the shutdown/cleanup work that should run before the process terminates.

This PR makes quitting both safer (harder to do by accident) and more uniform across quit gestures, while keeping the shutdown-first semantics explicit.

## Mental model

After this change, the system treats quitting as a UI request that is coordinated by the app layer.

- The UI requests exit via `AppEvent::Exit(ExitMode)`.

- `ExitMode::ShutdownFirst` is the normal user path: the app triggers `Op::Shutdown`, continues rendering while shutdown runs, and only ends the UI loop once shutdown has completed.

- `ExitMode::Immediate` exists as an escape hatch (and as the post-shutdown “now actually exit” signal); it bypasses cleanup and should not be the default for user-triggered quits.
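
The two exit modes can be sketched as a tiny state holder. `ExitMode` and its variants are named in this description; the `App` struct shown here, its fields, and its method names are simplified stand-ins rather than the real types.

```rust
/// Exit modes named in the description above.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ExitMode {
    ShutdownFirst,
    Immediate,
}

/// Simplified stand-in for the app layer (fields are hypothetical).
pub struct App {
    /// True once a shutdown operation has been submitted.
    pub shutdown_requested: bool,
    /// True while the UI loop should keep rendering.
    pub running: bool,
}

impl App {
    pub fn new() -> Self {
        App { shutdown_requested: false, running: true }
    }

    /// Handles an exit request from the UI.
    pub fn on_exit(&mut self, mode: ExitMode) {
        match mode {
            // Normal path: start shutdown but keep rendering until it completes.
            ExitMode::ShutdownFirst => self.shutdown_requested = true,
            // Escape hatch and post-shutdown signal: end the UI loop now.
            ExitMode::Immediate => self.running = false,
        }
    }

    /// Called when shutdown has completed; only now does the loop end.
    pub fn on_shutdown_complete(&mut self) {
        self.on_exit(ExitMode::Immediate);
    }
}
```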

User-facing quit gestures are intentionally “two-step” for safety:

- `Ctrl+C` and `Ctrl+D` no longer exit immediately.

- The first press arms a 1-second window and shows a footer hint (“ctrl + <key> again to quit”).

- Pressing the same key again within the window requests a shutdown-first quit; otherwise the hint expires and the next press starts a fresh window.
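
The two-step gesture above amounts to a small time-bounded state machine, sketched here under stated assumptions. The type and method names are hypothetical, the clock is injected as an `Instant` so the logic is testable, and treating a press of the *other* key inside the window as a fresh arm is an assumption rather than something this description specifies.

```rust
use std::time::{Duration, Instant};

/// Length of the armed window described above.
pub const QUIT_WINDOW: Duration = Duration::from_secs(1);

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum QuitKey {
    CtrlC,
    CtrlD,
}

#[derive(Debug, PartialEq, Eq)]
pub enum QuitDecision {
    /// First press (or expired window): arm the shortcut and show the hint.
    ShowHint,
    /// Same key pressed again inside the window: request a shutdown-first quit.
    RequestShutdownFirstQuit,
}

#[derive(Default)]
pub struct QuitShortcut {
    armed: Option<(QuitKey, Instant)>,
}

impl QuitShortcut {
    pub fn on_key(&mut self, key: QuitKey, now: Instant) -> QuitDecision {
        match self.armed.take() {
            Some((armed_key, expires_at)) if armed_key == key && now < expires_at => {
                QuitDecision::RequestShutdownFirstQuit
            }
            // Not armed, expired, or a different key: start a fresh window.
            _ => {
                self.armed = Some((key, now + QUIT_WINDOW));
                QuitDecision::ShowHint
            }
        }
    }

    /// Called when a modal consumes `Ctrl+C`, so that dismissing a modal
    /// cannot prime a later press to quit.
    pub fn clear(&mut self) {
        self.armed = None;
    }
}
```

Passing `now` in explicitly keeps the timing-dependent behavior deterministic in unit tests, which matters given the tradeoff about timing-dependent state noted elsewhere in this description.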

Key routing remains modal-first:

- A modal/popup gets first chance to consume `Ctrl+C`.

- If a modal handles `Ctrl+C`, any armed quit shortcut is cleared so dismissing a modal cannot prime a subsequent `Ctrl+C` to quit.

- `Ctrl+D` only participates in quitting when the composer is empty and no modal/popup is active.

The design doc `docs/exit-confirmation-prompt-design.md` captures the intended routing and the invariants the UI should maintain.

## Non-goals

- This does not attempt to redesign modal UX or make modals uniformly dismissible via `Ctrl+C`. It only ensures modals get priority and that quit arming does not leak across modal handling.

- This does not introduce a persistent confirmation prompt/menu for quitting; the goal is to keep the exit gesture lightweight and consistent.

- This does not change the semantics of core shutdown itself; it changes how the UI requests and sequences it.

## Tradeoffs

- Quitting via `Ctrl+C`/`Ctrl+D` now requires a deliberate second keypress, which adds friction for users who relied on the old “instant quit” behavior.

- The UI now maintains a small time-bounded state machine for the armed shortcut, which increases complexity and introduces timing-dependent behavior.

This design was chosen over alternatives (a modal confirmation prompt or a long-lived “are you sure” state) because it provides an explicit safety barrier while keeping the flow fast and keyboard-native.

## Architecture

- `ChatWidget` owns the quit-shortcut state machine and decides when a quit gesture is allowed (idle vs cancellable work, composer state, etc.).

- `BottomPane` owns rendering and local input routing for modals/popups. It is responsible for consuming cancellation keys when a view is active and for showing/expiring the footer hint.

- `App` owns shutdown sequencing: translating `AppEvent::Exit(ShutdownFirst)` into `Op::Shutdown` and only terminating the UI loop when exit is safe.

This keeps “what should happen” decisions (quit vs interrupt vs ignore) in the chat/widget layer, while keeping “how it looks and which view gets the key” in the bottom-pane layer.

## Observability

You can tell this is working by running the TUIs and exercising the quit gestures:

- While idle: pressing `Ctrl+C` (or `Ctrl+D` with an empty composer and no modal) shows a footer hint for ~1 second; pressing again within that window exits via shutdown-first.

- While streaming/tools/review are active: `Ctrl+C` interrupts work rather than quitting.

- With a modal/popup open: `Ctrl+C` dismisses/handles the modal (if it chooses to) and does not arm a quit shortcut; a subsequent quick `Ctrl+C` should not quit unless the user re-arms it.

Failure modes are visible as:

- Quits that happen immediately (no hint window) from `Ctrl+C`/`Ctrl+D`.

- Quits that occur while a modal is open and consuming `Ctrl+C`.

- UI termination before shutdown completes (cleanup skipped).
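
The double-press behavior described above can be sketched as a small time-bounded state machine. This is only an illustration, not the code in `ChatWidget`: the names `QuitShortcut`, `QuitAction`, and `on_quit_key` are hypothetical, the window length is passed in rather than fixed, and clearing the armed state when work is interrupted is an assumption (the description only states it for modals).

```rust
use std::time::{Duration, Instant};

/// What the UI should do in response to a quit key press (hypothetical type).
#[derive(Debug, PartialEq, Eq)]
pub enum QuitAction {
    /// First press while idle: show the footer hint and start the window.
    ShowHint,
    /// Second press inside the window: request a shutdown-first exit.
    RequestShutdown,
    /// Work is running: the key interrupts it instead of quitting.
    Interrupt,
    /// A modal/popup is open and gets the key first.
    ConsumedByModal,
}

/// Hypothetical stand-in for the armed-shortcut state.
pub struct QuitShortcut {
    window: Duration,
    armed_until: Option<Instant>,
}

impl QuitShortcut {
    pub fn new(window: Duration) -> Self {
        Self { window, armed_until: None }
    }

    /// Handle a quit key at time `now`. `modal_open` and `work_active` are
    /// assumed inputs summarizing the rest of the UI state.
    pub fn on_quit_key(&mut self, now: Instant, modal_open: bool, work_active: bool) -> QuitAction {
        if modal_open {
            // Modal-first routing: never leave a stale armed state behind.
            self.armed_until = None;
            return QuitAction::ConsumedByModal;
        }
        if work_active {
            // Assumption: interrupting work also disarms the shortcut.
            self.armed_until = None;
            return QuitAction::Interrupt;
        }
        match self.armed_until {
            Some(deadline) if now <= deadline => {
                self.armed_until = None;
                QuitAction::RequestShutdown
            }
            _ => {
                // Not armed, or the window expired: arm again and show the hint.
                self.armed_until = Some(now + self.window);
                QuitAction::ShowHint
            }
        }
    }
}
```

Passing `now` in explicitly keeps the timing logic deterministic in unit tests, which fits the stated goal of testing UI-level invariants rather than real key-repeat timing.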

## Tests

- Updated/added unit and snapshot coverage in `codex-tui` and `codex-tui2` to validate:
  - The quit hint appears on the expected key and expires after its window.
  - Double-press within the window triggers a shutdown-first quit request.
  - Modal-first routing prevents quit bypass and clears any armed shortcut when a modal consumes `Ctrl+C`.

These tests focus on the UI-level invariants and rendered output; they

do not attempt to validate

real terminal key-repeat timing or end-to-end process shutdown behavior.

---

Screenshot:

<img width="912" height="740" alt="Screenshot 2026-01-13 at 1 05 28 PM"

src="https://github.com/user-attachments/assets/18f3d22e-2557-47f2-a369-ae7a9531f29f"

/>

When an invalid config.toml key or value is detected, the CLI currently

just quits. This leaves the VSCE in a dead state.

This PR changes the behavior to not quit and bubble up the config error

to users to make it actionable. It also surfaces errors related to

"rules" parsing.

This allows us to surface these errors to users in the VSCE, like this:

<img width="342" height="129" alt="Screenshot 2026-01-13 at 4 29 22 PM"

src="https://github.com/user-attachments/assets/a79ffbe7-7604-400c-a304-c5165b6eebc4"

/>

<img width="346" height="244" alt="Screenshot 2026-01-13 at 4 45 06 PM"

src="https://github.com/user-attachments/assets/de874f7c-16a2-4a95-8c6d-15f10482e67b"

/>

Adds an integration test for the new behavior introduced in

https://github.com/openai/codex/pull/9011. The work to create the test

setup was substantial enough that I thought it merited a separate PR.

This integration test spawns `codex` in TUI mode, which requires

spawning a PTY to run successfully, so I had to introduce quite a bit of

scaffolding in `run_codex_cli()`. I was surprised to discover that we

have not done this in our codebase before, so perhaps this should get

moved to a common location so it can be reused.

The test itself verifies that a malformed `rules` in `$CODEX_HOME`

prints a human-readable error message and exits nonzero.

We're running into quite a bit of drag maintaining this test, since

every time we add fields to an EventMsg that happened to be dumped into

the `binary-size-log.jsonl` fixture, this test starts to fail. The fix

is usually to either manually update the `binary-size-log.jsonl` fixture

file, or update the `upgrade_event_payload_for_tests` function to map

the data in that file into something workable.

Eason says it's fine to delete this test, so let's just delete it.

## What?

Fixed error handling in `insert_history_lines_to_writer` where all

terminal operations were silently ignoring errors via `.ok()`.

## Why?

Silent I/O failures could leave the terminal in an inconsistent state

(e.g., scroll region not reset) with no way to debug. This violates Rust

error handling best practices.

## How?

- Changed function signature to return `io::Result<()>`

- Replaced all `.ok()` calls with `?` operator to propagate errors

- Added `tracing::warn!` in wrapper function for backward compatibility

- Updated 15 test call sites to handle Result with `.expect()`
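
A minimal sketch of the pattern, not the actual `insert_history_lines_to_writer` code: the function names below are hypothetical, the writer is a plain `std::io::Write`, and the compatibility wrapper logs with `eprintln!` only to keep the example free of external crates (the PR itself uses `tracing::warn!`).

```rust
use std::io::{self, Write};

/// Before: every operation discards its error with `.ok()`, so a failed
/// write or flush goes unnoticed.
fn write_lines_lossy<W: Write>(writer: &mut W, lines: &[&str]) {
    for line in lines {
        writeln!(writer, "{line}").ok();
    }
    writer.flush().ok();
}

/// After: the function returns `io::Result<()>` and `?` propagates the
/// first failure to the caller.
fn write_lines<W: Write>(writer: &mut W, lines: &[&str]) -> io::Result<()> {
    for line in lines {
        writeln!(writer, "{line}")?;
    }
    writer.flush()
}

/// Wrapper that keeps the old infallible signature for existing callers
/// but reports the error instead of silently dropping it.
fn write_lines_compat<W: Write>(writer: &mut W, lines: &[&str]) {
    if let Err(err) = write_lines(writer, lines) {
        eprintln!("failed to write history lines: {err}");
    }
}
```

Test call sites can then use `write_lines(&mut buf, &lines).expect("write should succeed")`, matching the `.expect()` updates listed above.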

## Testing

- ✅ Pass all tests

## Type of Change

- [x] Bug fix (non-breaking change)

---------

Signed-off-by: Huaiwu Li <lhwzds@gmail.com>

Co-authored-by: Eric Traut <etraut@openai.com>

This adds `parsed_cmd: Vec<ParsedCommand>` to `ExecApprovalRequestEvent`

in the core protocol (`protocol/src/protocol.rs`), which is also what

this field is named on `ExecCommandBeginEvent`. Honestly, I don't love

the name (it sounds like a single command, but it is actually a list of

them), but I don't want to get distracted by a naming discussion right

now.

This also adds `parsed_cmd` to `ExecCommandApprovalParams` in

`codex-rs/app-server-protocol/src/protocol.rs`, so it will be available

via `codex app-server`, as well.

For consistency, I also updated `ExecApprovalElicitRequestParams` in

`codex-rs/mcp-server/src/exec_approval.rs` to include this field under

the name `codex_parsed_cmd`, as that struct already has a number of

special `codex_*` fields. Note this is the code for when Codex is used

as an MCP _server_ and therefore has to conform to the official spec for

an MCP elicitation type.

`ClientRequest::NewConversation` picks up the reasoning level from the user's defaults in `config.toml`, so it should be reported in `NewConversationResponse`.

Adding the `rollout_path` to the `NewConversationResponse` makes it so a

client can perform subsequent operations on a `(ConversationId,

PathBuf)` pair. #3353 will introduce support for `ArchiveConversation`.

---

[//]: # (BEGIN SAPLING FOOTER)

Stack created with [Sapling](https://sapling-scm.com). Best reviewed

with [ReviewStack](https://reviewstack.dev/openai/codex/pull/3352).

* #3353

* __->__ #3352

this dramatically improves time to run `cargo test -p codex-core` (~25x

speedup).

before:

```

cargo test -p codex-core 35.96s user 68.63s system 19% cpu 8:49.80 total

```

after:

```

cargo test -p codex-core 5.51s user 8.16s system 63% cpu 21.407 total

```

both tests measured "hot", i.e. on a 2nd run with no filesystem changes,

to exclude compile times.

The approach is inspired by [Delete Cargo Integration Tests](https://matklad.github.io/2021/02/27/delete-cargo-integration-tests.html): we move all test cases in `tests/` into a single suite so that they build into a single binary. Each test binary executed carries significant overhead, and test execution is only parallelized within a single binary.
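
As a rough sketch of the resulting layout (file and module names here are hypothetical, and the modules are written inline only so the example is self-contained), a single top-level test file pulls every former integration test in as a submodule:

```rust
// tests/all.rs (hypothetical name): the only file directly under `tests/`,
// so Cargo builds exactly one integration-test binary.
mod suite {
    // In a real crate each module below would live in its own file under
    // `tests/suite/` and be declared here with `mod name;`.
    pub mod support {
        /// Shared helper that every test module can reuse.
        pub fn normalize(output: &str) -> String {
            output.trim_end().replace("\r\n", "\n")
        }
    }

    mod exec {
        use super::support::normalize;

        #[test]
        fn trims_trailing_newline() {
            assert_eq!(normalize("ok\n"), "ok");
        }
    }

    mod config {
        use super::support::normalize;

        #[test]
        fn normalizes_crlf() {
            assert_eq!(normalize("a\r\nb\r\n"), "a\nb");
        }
    }
}
```

Because every `#[test]` function ends up in the same binary, the test harness can schedule them all across threads in one process instead of running many small binaries one after another.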

We want to send a single aggregated stream of stdout and stderr so that consumers don't have to merge the two themselves, which sometimes loses the original ordering.

---------

Co-authored-by: Gabriel Peal <gpeal@users.noreply.github.com>

Codex created this PR from the following prompt:

> upgrade this entire repo to Rust 1.89. Note that this requires

updating codex-rs/rust-toolchain.toml as well as the workflows in

.github/. Make sure that things are "clippy clean" as this change will

likely uncover new Clippy errors. `just fmt` and `cargo clippy --tests`

are sufficient to check for correctness

Note this modifies a lot of lines because it folds nested `if`

statements using `&&`.
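
To illustrate the kind of rewrite involved, here is a hedged sketch with plain boolean conditions. The function names are made up for the example and are not taken from the diff, which is not shown here.

```rust
/// Before: a nested `if` whose outer body contains nothing but the inner `if`.
fn collect_nested(values: &[i32], limit: i32) -> Vec<i32> {
    let mut out = Vec::new();
    for &v in values {
        if v > 0 {
            if v < limit {
                out.push(v);
            }
        }
    }
    out
}

/// After: the two conditions are folded into one with `&&`.
/// Behavior is unchanged because `&&` short-circuits just like the nesting did.
fn collect_folded(values: &[i32], limit: i32) -> Vec<i32> {
    let mut out = Vec::new();
    for &v in values {
        if v > 0 && v < limit {
            out.push(v);
        }
    }
    out
}
```

Each fold removes one level of indentation from the inner body, which is why a purely mechanical change like this touches so many lines.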

---

[//]: # (BEGIN SAPLING FOOTER)

Stack created with [Sapling](https://sapling-scm.com). Best reviewed

with [ReviewStack](https://reviewstack.dev/openai/codex/pull/2465).

* #2467

* __->__ #2465

Wait for newlines, then render markdown on a line by line basis. Word wrap it for the current terminal size and then spit it out line by line into the UI. Also adds tests and fixes some UI regressions.

We wait until we have a complete line (terminated by a newline), then format it as markdown and stream it into the UI. This hurts time to first token, but is the right thing to do with our current rendering model IMO. It also lets us add word wrapping!
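
The newline gating and wrapping steps can be sketched roughly as follows. This is an assumption-laden illustration rather than the TUI's code: `LineGate` and `wrap_words` are hypothetical names, markdown rendering is left out entirely, and width is measured in bytes rather than terminal display columns.

```rust
/// Buffers streamed text and only releases complete lines.
#[derive(Default)]
pub struct LineGate {
    pending: String,
}

impl LineGate {
    /// Append a streamed chunk and return every line completed by it,
    /// without the trailing newline. A partial line stays buffered.
    pub fn push(&mut self, delta: &str) -> Vec<String> {
        self.pending.push_str(delta);
        let mut complete = Vec::new();
        while let Some(pos) = self.pending.find('\n') {
            // Remove everything up to and including the newline.
            let line: String = self.pending.drain(..=pos).collect();
            complete.push(line.trim_end_matches('\n').to_string());
        }
        complete
    }
}

/// Greedy word wrap: pack whitespace-separated words into rows of at most
/// `width` bytes. A single word longer than `width` gets its own row.
pub fn wrap_words(line: &str, width: usize) -> Vec<String> {
    let mut rows = Vec::new();
    let mut current = String::new();
    for word in line.split_whitespace() {
        let needed = if current.is_empty() {
            word.len()
        } else {
            current.len() + 1 + word.len()
        };
        if needed > width && !current.is_empty() {
            rows.push(std::mem::take(&mut current));
        }
        if !current.is_empty() {
            current.push(' ');
        }
        current.push_str(word);
    }
    if !current.is_empty() {
        rows.push(current);
    }
    rows
}
```

Gating on whole lines means each emitted line can be formatted and wrapped once for the current terminal width, at the cost of holding back partial lines until their newline arrives.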

Stream the model's thoughts and responses instead of waiting for the whole thing to come through. Very rough right now, but I'm making the risk call to push through.

As stated in `codex-rs/README.md`:

Today, Codex CLI is written in TypeScript and requires Node.js 22+ to

run it. For a number of users, this runtime requirement inhibits

adoption: they would be better served by a standalone executable. As

maintainers, we want Codex to run efficiently in a wide range of

environments with minimal overhead. We also want to take advantage of

operating system-specific APIs to provide better sandboxing, where

possible.

To that end, we are moving forward with a Rust implementation of Codex

CLI contained in this folder, which has the following benefits:

- The CLI compiles to small, standalone, platform-specific binaries.

- Can make direct, native calls to

[seccomp](https://man7.org/linux/man-pages/man2/seccomp.2.html) and

[landlock](https://man7.org/linux/man-pages/man7/landlock.7.html) in

order to support sandboxing on Linux.

- No runtime garbage collection, resulting in lower memory consumption

and better, more predictable performance.

Currently, the Rust implementation is materially behind the TypeScript

implementation in functionality, so continue to use the TypeScript

implementation for the time being. We will publish native executables via

GitHub Releases as soon as we feel the Rust version is usable.