This is a follow-up to https://github.com/openai/codex/pull/15922. That
previous PR deleted the old `tui` directory and left the new
`tui_app_server` directory in place. This PR renames `tui_app_server` to
`tui` and fixes up all references.

This is part 1 of 2 PRs that will remove the `tui` /
`tui_app_server` split. This part simply deletes the existing `tui`
directory and marks the `tui_app_server` feature flag as removed. I left
the `tui_app_server` feature flag in place for now so that its presence
doesn't result in an error. It is simply ignored.

Part 2 will rename the `tui_app_server` directory to `tui`. I did this in
two parts to reduce visible code churn.

CHAINED PR: note that the base is `eternal/hooks-pretooluse-bash`, not `main`,
so the following PR should land first.

This adds the post-tool hook that matches the pre-tool functionality here:
https://github.com/openai/codex/pull/15211

So, PreToolUse fires for plain shell calls and allows blocking them before
they execute. This PostToolUse hook runs after the command has executed and
can block its result.

Example run:

```

› as a test, run in parallel the following commands:

- echo 'one'

- echo '[block-pre-tool-use]'

- echo '[block-post-tool-use]'

⚠ MCP startup incomplete (failed: notion, linear)

• Cruising through those three commands in parallel now, and I’ll share the exact outputs right after

they land.

• Running PreToolUse hook: checking the observatory runes

• Running PreToolUse hook: checking the observatory runes

• Running PreToolUse hook: checking the observatory runes

PreToolUse hook (blocked)

warning: wizard-tower PreToolUse demo blocked a Bash command on purpose.

feedback: PreToolUse demo blocked the command. Remove [block-pre-tool-use] to continue.

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo 'one'

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo '[block-post-tool-use]'

• Ran echo '[block-post-tool-use]'

└ [block-post-tool-use]

• Ran echo 'one'

└ one

• Running PostToolUse hook: reading the observatory aftermath

• Running PostToolUse hook: reading the observatory aftermath

PostToolUse hook (blocked)

warning: wizard-tower PostToolUse demo blocked the Bash follow-through on purpose.

feedback: PostToolUse demo blocked the result after execution. Remove [block-post-tool-use] to

continue.

PostToolUse hook (completed)

warning: wizard-tower PostToolUse demo inspected Bash: echo 'one'

hook context: PostToolUse demo saw the Bash result. Response preview: one

──────────────────────────────────────────────────────────────────────────────────────────────────────

• Ran them in parallel. Little wave report:

- echo 'one' completed and returned one.

- echo '[block-pre-tool-use]' was blocked before execution by the PreToolUse hook.

- echo '[block-post-tool-use]' ran, but its result was blocked afterward by the PostToolUse hook.

The hook messages were:

- PreToolUse demo blocked the command. Remove [block-pre-tool-use] to continue.

- PostToolUse demo blocked the result after execution. Remove [block-post-tool-use] to continue.

› for the blocked messages (correctly blocked in this test) did the tool output land back into

context?

• Yeah, from this run:

- PreToolUse block: the command itself did not execute, so no command output landed in context. Only

the block message did.

- PostToolUse block: the command did execute, but the actual stdout did not come back into context.

Only the post-tool block message did.

So the blocked notifications landed in context, but the blocked command payloads themselves did not.

Nice clean guardrail, aloha.

```
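
For reviewers, the ordering seen in the run above can be sketched roughly as follows. This is only an illustration of the observed behavior: `HookDecision`, `ToolResult`, and `run_with_hooks` are hypothetical names and are not the types used in this PR.

```rust
/// Outcome of a single hook invocation (hypothetical type, for illustration).
#[derive(Debug, Clone, PartialEq)]
enum HookDecision {
    Allow,
    Block { feedback: String },
}

/// What lands back in the model's context for one shell call (hypothetical type).
#[derive(Debug, PartialEq)]
enum ToolResult {
    Output(String),
    Blocked(String),
}

/// Runs one shell command with a pre-tool and a post-tool hook around it.
fn run_with_hooks(
    command: &str,
    pre_hook: impl Fn(&str) -> HookDecision,
    execute: impl FnOnce(&str) -> String,
    post_hook: impl Fn(&str, &str) -> HookDecision,
) -> ToolResult {
    // PreToolUse: a block here means the command never executes.
    if let HookDecision::Block { feedback } = pre_hook(command) {
        return ToolResult::Blocked(feedback);
    }

    let output = execute(command);

    // PostToolUse: the command has already run, but a block withholds its
    // output so that only the hook feedback is returned.
    match post_hook(command, &output) {
        HookDecision::Block { feedback } => ToolResult::Blocked(feedback),
        HookDecision::Allow => ToolResult::Output(output),
    }
}
```

In this sketch a pre-tool block short-circuits before `execute` is called, while a post-tool block drops the captured output and returns only the feedback, which matches what the transcript reports for the two blocked commands.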

This PR completes the conversion of non-interactive `codex exec` to use
app server rather than directly using core events and methods.

### Summary

- move `codex-exec` off exec-owned `AuthManager` and `ThreadManager`
  state
- route exec bootstrap, resume, and auth refresh through existing
  app-server paths
- replace legacy `codex/event/*` decoding in exec with typed app-server
  notification handling
- update human and JSONL exec output adapters to translate existing
  app-server notifications only
- clean up the "app server client" layer by eliminating support for legacy
  notifications, which is no longer needed
- remove exposure of `authManager` and `threadManager` from the "app server
  client" layer

### Testing

- `exec` already has fairly extensive unit and integration tests, and
  these all pass
- In addition, I asked Codex to put together a comprehensive set of manual
  tests covering all of the `codex exec` functionality (including
  command-line options), and it successfully generated and ran these tests

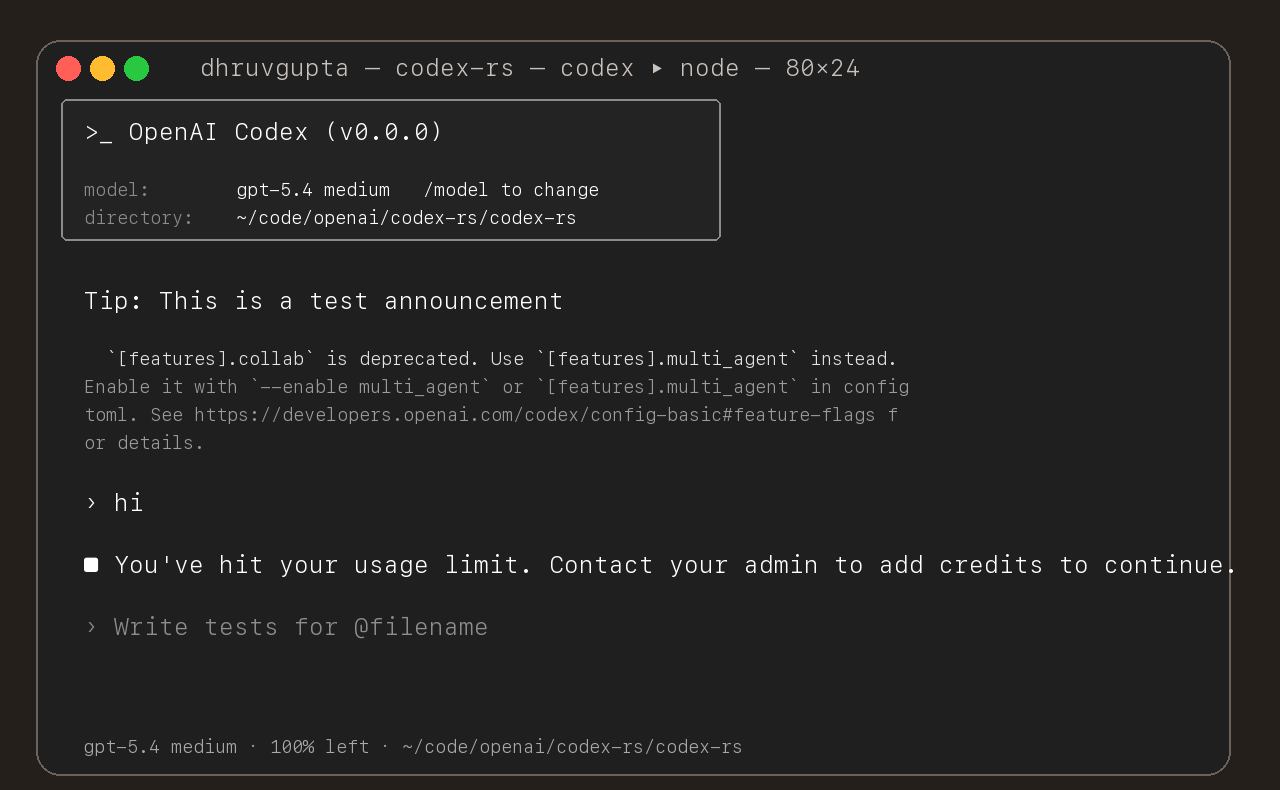

## Summary

- update the self-serve business usage-based limit message to direct
  users to their admin for additional credits
- add a focused unit test for the `self_serve_business_usage_based` plan
  branch

Also added:

If you hit a rate limit but still have credits, Codex CLI would
tell you to switch models. We shouldn't do this when you have credits, so
this is now fixed.

## Test

- launched the source-built CLI and verified the updated message is
  shown for the self-serve business usage-based plan

Enhance pty utils:

* Support closing stdin
* Separate the stderr and stdout streams so that consumers can differentiate them
* Provide a compatibility helper that merges both streams back into a combined one
* Support specifying the terminal size for the pty, including on-demand resizes while the process is already running
* Support terminating the process while still consuming its output
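
To make the "compatibility helper" idea concrete, here is a minimal sketch of merging two separate output streams back into one. It uses `std::sync::mpsc` and threads as a stand-in and is not the code in this PR; `combine_output` is a hypothetical name, and the real utilities may well use async channels instead.

```rust
use std::sync::mpsc::{self, Receiver};
use std::thread;

/// Forwards chunks from separate stdout and stderr receivers into a single
/// combined receiver (hypothetical helper, for illustration only).
fn combine_output(stdout: Receiver<Vec<u8>>, stderr: Receiver<Vec<u8>>) -> Receiver<Vec<u8>> {
    let (tx, rx) = mpsc::channel();
    for source in [stdout, stderr] {
        let tx = tx.clone();
        thread::spawn(move || {
            // Ends when the source sender is dropped (the stream is closed).
            for chunk in source {
                if tx.send(chunk).is_err() {
                    break; // The combined receiver was dropped.
                }
            }
        });
    }
    // The original `tx` is dropped here, so `rx` closes once both forwarding
    // threads have finished.
    rx
}
```

Chunks from each stream stay in order relative to that stream, but the interleaving between stdout and stderr is not deterministic in this sketch. That is the usual trade-off of a merged view, and a reason for consumers that care about the distinction to read the separate streams.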

- override startup tooltips with model availability NUX and persist
  per-model show counts in config
- stop showing each model after four exposures and fall back to normal
  tooltips
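
The exposure cap can be sketched like this. It is a simplified illustration, assuming the per-model show counts are held in a map: `next_model_nux`, `MAX_EXPOSURES`, and the `HashMap` representation are hypothetical, and persistence to config is left out.

```rust
use std::collections::HashMap;

/// Number of times a model announcement is shown before it is retired.
const MAX_EXPOSURES: u32 = 4;

/// Returns the model to announce at startup, if any, and records the exposure.
/// `None` means the caller should fall back to the normal tooltips.
fn next_model_nux<'a>(
    candidates: &[&'a str],
    show_counts: &mut HashMap<String, u32>,
) -> Option<&'a str> {
    for &model in candidates {
        let count = show_counts.entry(model.to_string()).or_insert(0);
        if *count < MAX_EXPOSURES {
            *count += 1;
            return Some(model);
        }
    }
    None
}
```

With a single candidate, the first four calls return that model and the fifth returns `None`, at which point the regular tooltip is shown instead.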

When an invalid `config.toml` key or value is detected, the CLI currently
just quits. This leaves the VSCE in a dead state.

This PR changes the behavior so that the CLI does not quit and instead
bubbles the config error up to users, which makes it actionable. It also
surfaces errors related to "rules" parsing.

This allows us to surface these errors to users in the VSCE, like this:

<img width="342" height="129" alt="Screenshot 2026-01-13 at 4 29 22 PM" src="https://github.com/user-attachments/assets/a79ffbe7-7604-400c-a304-c5165b6eebc4" />

<img width="346" height="244" alt="Screenshot 2026-01-13 at 4 45 06 PM" src="https://github.com/user-attachments/assets/de874f7c-16a2-4a95-8c6d-15f10482e67b" />

Adds an integration test for the new behavior introduced in
https://github.com/openai/codex/pull/9011. The work to create the test
setup was substantial enough that I thought it merited a separate PR.

This integration test spawns `codex` in TUI mode, which requires
spawning a PTY to run successfully, so I had to introduce quite a bit of
scaffolding in `run_codex_cli()`. I was surprised to discover that we
have not done this in our codebase before, so perhaps this should get
moved to a common location so it can be reused.

The test itself verifies that a malformed `rules` in `$CODEX_HOME`
prints a human-readable error message and exits nonzero.

## What?

Fixed error handling in `insert_history_lines_to_writer` where all
terminal operations were silently ignoring errors via `.ok()`.

## Why?

Silent I/O failures could leave the terminal in an inconsistent state
(e.g., scroll region not reset) with no way to debug. This violates Rust
error handling best practices.

## How?

- Changed function signature to return `io::Result<()>`

- Replaced all `.ok()` calls with `?` operator to propagate errors

- Added `tracing::warn!` in wrapper function for backward compatibility

- Updated 15 test call sites to handle Result with `.expect()`
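
The shape of the change, sketched on a stand-in function rather than the real `insert_history_lines_to_writer`: the function names below are hypothetical, and `eprintln!` stands in for `tracing::warn!` so that the example has no external dependencies.

```rust
use std::io::{self, Write};

/// Before: every failure is discarded with `.ok()`, so a broken writer goes
/// unnoticed.
fn write_lines_lossy(writer: &mut impl Write, lines: &[&str]) {
    for line in lines {
        writeln!(writer, "{}", line).ok();
    }
    writer.flush().ok();
}

/// After: the first I/O error is propagated to the caller with `?`.
fn write_lines(writer: &mut impl Write, lines: &[&str]) -> io::Result<()> {
    for line in lines {
        writeln!(writer, "{}", line)?;
    }
    writer.flush()
}

/// Wrapper that keeps the old `()` signature for existing callers but logs
/// the error instead of silently dropping it.
fn write_lines_logged(writer: &mut impl Write, lines: &[&str]) {
    if let Err(err) = write_lines(writer, lines) {
        // The PR uses `tracing::warn!` at this point.
        eprintln!("failed to write history lines: {}", err);
    }
}
```

Tests can then call the fallible version directly and use `.expect()` on the returned `Result`, as the updated call sites do.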

## Testing

- ✅ Pass all tests

## Type of Change

- [x] Bug fix (non-breaking change)

---------

Signed-off-by: Huaiwu Li <lhwzds@gmail.com>

Co-authored-by: Eric Traut <etraut@openai.com>

This dramatically improves the time to run `cargo test -p codex-core` (~25x
speedup).

before:

```

cargo test -p codex-core 35.96s user 68.63s system 19% cpu 8:49.80 total

```

after:

```

cargo test -p codex-core 5.51s user 8.16s system 63% cpu 21.407 total

```

Both runs were measured "hot", i.e. on a second run with no filesystem changes,
to exclude compile times.

The approach is inspired by [Delete Cargo Integration Tests](https://matklad.github.io/2021/02/27/delete-cargo-integration-tests.html):
we move all test cases in `tests/` into a single suite so that they build into a
single binary. There is significant overhead for each test binary
executed, and tests run in parallel only within a single binary.