## Why

`token_data` is owned by `codex-login`, but `codex-core` was still

re-exporting it. That let callers pull auth token types through

`codex-core`, which keeps otherwise unrelated crates coupled to

`codex-core` and makes `codex-core` more of a build-graph bottleneck.

## What changed

- remove the `codex-core` re-export of `codex_login::token_data`

- update the remaining `codex-core` internals that used

`crate::token_data` to import `codex_login::token_data` directly

- update downstream callers in `codex-rs/chatgpt`,

`codex-rs/tui_app_server`, `codex-rs/app-server/tests/common`, and

`codex-rs/core/tests` to import `codex_login::token_data` directly

- add explicit `codex-login` workspace dependencies and refresh lock

metadata for crates that now depend on it directly

## Validation

- `cargo test -p codex-chatgpt --locked`

- `just argument-comment-lint`

- `just bazel-lock-update`

- `just bazel-lock-check`

## Notes

- attempted `cargo test -p codex-core --locked` and `cargo test -p codex-core auth_refresh --locked`, but both ran out of disk while linking `codex-core` test binaries in the local environment

## Why

Skill metadata accepted a `permissions` block and stored the result on

`SkillMetadata`, but that data was never consumed by runtime behavior.

Leaving the dead parsing path in place makes it look like skills can

widen or otherwise influence execution permissions when, in practice,

declared skill permissions are ignored.

This change removes that misleading surface area so the skill metadata

model matches what the system actually uses.

## What changed

- removed `permission_profile` and `managed_network_override` from

`core-skills::SkillMetadata`

- stopped parsing `permissions` from skill metadata in

`core-skills/src/loader.rs`

- deleted the loader tests that only exercised the removed permissions

parsing path

- cleaned up dependent `SkillMetadata` constructors in tests and TUI

code that were only carrying `None` for those fields

## Testing

- `cargo test -p codex-core-skills`

- `cargo test -p codex-tui submission_prefers_selected_duplicate_skill_path`

- `just argument-comment-lint`

## TL;DR

Replicates the `/title` command from `tui` to `tui_app_server`.

## Problem

The classic `tui` crate supports customizing the terminal window/tab

title via `/title`, but the `tui_app_server` crate does not. Users on

the app-server path have no way to configure what their terminal title

shows (project name, status, spinner, thread, etc.), making it harder to

identify Codex sessions across tabs or windows.

## Mental model

The terminal title is a *status surface* -- conceptually parallel to the

footer status line. Both surfaces are configurable lists of items, both

share expensive inputs (git branch lookup, project root discovery), and

both must be refreshed at the same lifecycle points. This change ports

the classic `tui`'s design verbatim:

1. **`terminal_title.rs`** owns the low-level OSC write path and input

sanitization. It strips control characters and bidi/invisible codepoints

before placing untrusted text (model output, thread names, project

paths) inside an escape sequence.

2. **`title_setup.rs`** defines `TerminalTitleItem` (the 8 configurable

items) and `TerminalTitleSetupView` (the interactive picker that wraps

`MultiSelectPicker`).

3. **`status_surfaces.rs`** is the shared refresh pipeline. It parses

both surface configs once per refresh, warns about invalid items once

per session, synchronizes the git-branch cache, then renders each

surface from the same `StatusSurfaceSelections` snapshot.

4. **`chatwidget.rs`** sets `TerminalTitleStatusKind` at each state

transition (Working, Thinking, Undoing, WaitingForBackgroundTerminal)

and calls `refresh_terminal_title()` whenever relevant state changes.

5. **`app.rs`** handles the three setup events (confirm/preview/cancel),

persists config via `ConfigEditsBuilder`, and clears the managed title

on `Drop`.
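The sanitize-then-write step in item 1 can be sketched as follows. This is a minimal illustration, not the code in `terminal_title.rs`: the function names, the `max_chars` parameter, and the exact set of filtered codepoints are assumptions chosen for the example.

```rust
/// Returns true for codepoints that should not reach the terminal title:
/// control characters plus an illustrative (non-exhaustive) set of
/// bidi/invisible codepoints. The exact set is an assumption.
fn is_disallowed(c: char) -> bool {
    c.is_control()
        || matches!(
            c,
            '\u{200B}'..='\u{200F}' // zero-width characters, LRM/RLM
            | '\u{202A}'..='\u{202E}' // bidi embeddings and overrides
            | '\u{2066}'..='\u{2069}' // bidi isolates
            | '\u{FEFF}' // zero-width no-break space
        )
}

/// Strips disallowed codepoints, then caps the result at `max_chars`
/// characters (hypothetical parameter).
fn sanitize_title(raw: &str, max_chars: usize) -> String {
    raw.chars()
        .filter(|c| !is_disallowed(*c))
        .take(max_chars)
        .collect()
}

/// Builds an OSC 0 sequence (ESC ] 0 ; text BEL) around sanitized text.
fn osc_title_sequence(sanitized: &str) -> String {
    format!("\x1b]0;{}\x07", sanitized)
}
```

Because ESC and BEL are themselves control characters, untrusted text cannot terminate the sequence early or start a new one once it has passed through the filter.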

## Non-goals

- **Restoring the previous terminal title on exit.** There is no

portable way to read the terminal's current title, so `Drop` clears the

managed title rather than restoring it.

- **Sharing code between `tui` and `tui_app_server`.** The

implementation is a parallel copy, matching the existing pattern for the

status-line feature. Extracting a shared crate is future work.

## Tradeoffs

- **Duplicate code across crates.** The three core files

(`terminal_title.rs`, `title_setup.rs`, `status_surfaces.rs`) are

byte-for-byte copies from the classic `tui`. This was chosen for

consistency with the existing status-line port and to avoid coupling the

two crates at the dependency level. Future changes must be applied in

both places.

- **`status_surfaces.rs` is large (~660 lines).** It absorbs logic that

previously lived inline in `chatwidget.rs` (status-line refresh, git

branch management, project root discovery) plus all new terminal-title

logic. This consolidation trades file size for a single place where both

surfaces are coordinated.

- **Spinner scheduling on every refresh.** The terminal title spinner

(when active) schedules a frame every 100ms. This is the same pattern

the status-indicator spinner already uses; the overhead is a timer

registration, not a redraw.

## Architecture

```

/title command

-> SlashCommand::Title

-> open_terminal_title_setup()

-> TerminalTitleSetupView (MultiSelectPicker)

-> on_change: AppEvent::TerminalTitleSetupPreview -> preview_terminal_title()

-> on_confirm: AppEvent::TerminalTitleSetup -> ConfigEditsBuilder + setup_terminal_title()

-> on_cancel: AppEvent::TerminalTitleSetupCancelled -> cancel_terminal_title_setup()

Runtime title refresh:

state change (turn start, reasoning, undo, plan update, thread rename, ...)

-> set terminal_title_status_kind

-> refresh_terminal_title()

-> status_surface_selections() (parse configs, collect invalids)

-> refresh_terminal_title_from_selections()

-> terminal_title_value_for_item() for each configured item

-> assemble title string with separators

-> skip if identical to last_terminal_title (dedup OSC writes)

-> set_terminal_title() (sanitize + OSC 0 write)

-> schedule spinner frame if animating

Widget replacement:

replace_chat_widget_with_app_server_thread()

-> transfer last_terminal_title from old widget to new

-> avoids redundant OSC clear+rewrite on session switch

```
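The dedup and widget-replacement steps in the flow above could look roughly like this. `TitleWriter` and its methods are hypothetical stand-ins for the widget state; only the `last_terminal_title` field name comes from the description.

```rust
/// Hypothetical stand-in for the widget state that remembers the last title.
struct TitleWriter {
    last_terminal_title: Option<String>,
    writes: usize, // counts simulated OSC writes, for illustration only
}

impl TitleWriter {
    fn new() -> Self {
        Self { last_terminal_title: None, writes: 0 }
    }

    /// Returns true if a write was issued, false if it was skipped because
    /// the title is identical to the last one written.
    fn refresh(&mut self, title: &str) -> bool {
        if self.last_terminal_title.as_deref() == Some(title) {
            return false;
        }
        self.writes += 1; // real code would emit the OSC sequence here
        self.last_terminal_title = Some(title.to_string());
        true
    }

    /// Carries the cached title over from the widget being replaced, so the
    /// first refresh on the new widget does not rewrite an unchanged title.
    fn take_over_from(&mut self, old: &TitleWriter) {
        self.last_terminal_title = old.last_terminal_title.clone();
    }
}
```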

## Observability

- Invalid terminal-title item IDs in config emit a one-per-session

warning via `on_warning()` (gated by

`terminal_title_invalid_items_warned` `AtomicBool`).

- OSC write failures are logged at `tracing::debug` level.

- Config persistence failures are logged at `tracing::error` and

surfaced to the user via `add_error_message()`.
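The once-per-session warning gate can be illustrated with an `AtomicBool` swap. This is a sketch under assumptions: `InvalidItemWarner`, `warn_once`, and the message text are hypothetical, and the real code routes the warning through `on_warning()` rather than returning it.

```rust
use std::sync::atomic::{AtomicBool, Ordering};

/// Hypothetical holder for the "already warned" flag.
struct InvalidItemWarner {
    warned: AtomicBool,
}

impl InvalidItemWarner {
    fn new() -> Self {
        Self { warned: AtomicBool::new(false) }
    }

    /// Returns the warning text the first time invalid items are seen and
    /// `None` on every later call (or when there is nothing to report).
    fn warn_once(&self, invalid: &[&str]) -> Option<String> {
        if invalid.is_empty() {
            return None;
        }
        // `swap` returns the previous value, so only the first caller sees `false`.
        if self.warned.swap(true, Ordering::Relaxed) {
            return None;
        }
        Some(format!(
            "Ignored invalid terminal title items: {}",
            invalid.join(", ")
        ))
    }
}
```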

## Tests

- `terminal_title.rs`: 4 unit tests covering sanitization (control

chars, bidi codepoints, truncation) and OSC output format.

- `title_setup.rs`: 3 tests covering setup view snapshot rendering,

parse order preservation, and invalid-ID rejection.

- `chatwidget/tests.rs`: Updated test helpers with new fields; existing

tests continue to pass.

---------

Co-authored-by: Eric Traut <etraut@openai.com>

## Symptoms

When `/review` ran through `tui_app_server`, the TUI could show

duplicate review content:

- the `>> Code review started: ... <<` banner appeared twice

- the final review body could also appear twice

## Problem

`tui_app_server` was treating review lifecycle items as renderable

content on more than one delivery path.

Specifically:

- `EnteredReviewMode` was rendered both when the item started and again

when it completed

- `ExitedReviewMode` rendered the review text itself, even though the

same review text was also delivered later as the assistant message item

That meant the same logical review event was committed into history

multiple times.

## Solution

Make review lifecycle items control state transitions only once, and

keep the final review body sourced from the assistant message item:

- render the review-start banner from the live `ItemStarted` path, while

still allowing replay to restore it once

- treat `ExitedReviewMode` as a mode-exit/finish-banner event instead of

rendering the review body from it

- preserve the existing assistant-message rendering path as the single

source of final review text

Fixes #15283.

## Summary

Older system bubblewrap builds reject `--argv0`, which makes our Linux

sandbox fail before the helper can re-exec. This PR keeps using system

`/usr/bin/bwrap` whenever it exists and only falls back to vendored

bwrap when the system binary is missing. That matters on stricter

AppArmor hosts, where the distro bwrap package also provides the policy

setup needed for user namespaces.

For old system bwrap, we avoid `--argv0` instead of switching binaries:

- pass the sandbox helper a full-path `argv0`,

- keep the existing `current_exe() + --argv0` path when the selected

launcher supports it,

- otherwise omit `--argv0` and re-exec through the helper's own

`argv[0]` path, whose basename still dispatches as

`codex-linux-sandbox`.

Also updates the launcher/warning tests and docs so they match the new

behavior: present-but-old system bwrap uses the compatibility path, and

only absent system bwrap falls back to vendored.
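The selection rules described above reduce to a small decision function. The sketch below is illustrative only: the type and function names are hypothetical, and it assumes the vendored bwrap always supports `--argv0`.

```rust
#[derive(Debug, PartialEq)]
enum Launcher {
    System,   // /usr/bin/bwrap
    Vendored, // bundled fallback
}

#[derive(Debug, PartialEq)]
enum ReexecStrategy {
    /// Existing path: re-exec `current_exe()` and pass `--argv0`.
    CurrentExeWithArgv0,
    /// Compatibility path: omit `--argv0` and re-exec via the helper's own
    /// full-path `argv[0]`.
    HelperArgv0Path,
}

/// Picks the launcher and re-exec strategy from two probed facts.
fn plan_sandbox_launch(
    system_bwrap_exists: bool,
    system_supports_argv0: bool,
) -> (Launcher, ReexecStrategy) {
    let launcher = if system_bwrap_exists {
        Launcher::System
    } else {
        Launcher::Vendored
    };
    // Assumption: the vendored build is new enough to accept --argv0.
    let supports_argv0 = match launcher {
        Launcher::System => system_supports_argv0,
        Launcher::Vendored => true,
    };
    let strategy = if supports_argv0 {
        ReexecStrategy::CurrentExeWithArgv0
    } else {
        ReexecStrategy::HelperArgv0Path
    };
    (launcher, strategy)
}
```

The key property is that an old system bwrap changes the strategy, never the launcher.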

### Validation

1. Install Ubuntu 20.04 in a VM

2. Compile codex and run without bubblewrap installed - see a warning

about falling back to the vendored bwrap

3. Install bwrap and verify version is 0.4.0 without `argv0` support

4. run codex and use apply_patch tool without errors

<img width="802" height="631" alt="Screenshot 2026-03-25 at 11 48 36 PM"

src="https://github.com/user-attachments/assets/77248a29-aa38-4d7c-9833-496ec6a458b8"

/>

<img width="807" height="634" alt="Screenshot 2026-03-25 at 11 47 32 PM"

src="https://github.com/user-attachments/assets/5af8b850-a466-489b-95a6-455b76b5050f"

/>

<img width="812" height="635" alt="Screenshot 2026-03-25 at 11 45 45 PM"

src="https://github.com/user-attachments/assets/438074f0-8435-4274-a667-332efdd5cb57"

/>

<img width="801" height="623" alt="Screenshot 2026-03-25 at 11 43 56 PM"

src="https://github.com/user-attachments/assets/0dc8d3f5-e8cf-4218-b4b4-a4f7d9bf02e3"

/>

---------

Co-authored-by: Michael Bolin <mbolin@openai.com>

For app-server websocket auth, support the two server-side mechanisms from PR #14847:

- `--ws-auth capability-token --ws-token-file /abs/path`

- `--ws-auth signed-bearer-token --ws-shared-secret-file /abs/path`

with optional `--ws-issuer`, `--ws-audience`, and

`--ws-max-clock-skew-seconds`

On the client side, add interactive remote support via:

- `--remote ws://host:port` or `--remote wss://host:port`

- `--remote-auth-token-env <ENV_VAR>`

Codex reads the bearer token from the named environment variable and

sends it

as `Authorization: Bearer <token>` during the websocket handshake.

Remote auth

tokens are only allowed for `wss://` URLs or loopback `ws://` URLs.
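The URL restriction can be sketched as a standalone check. This is a conservative illustration using only the standard library, not the actual validation code: the function name is hypothetical, and URLs with userinfo are simply rejected here.

```rust
use std::net::IpAddr;

/// Returns true when a bearer token may be sent to `url`: any `wss://` URL,
/// or a `ws://` URL whose host is `localhost` or a loopback IP literal.
fn remote_auth_token_allowed(url: &str) -> bool {
    if url.starts_with("wss://") {
        return true;
    }
    let rest = match url.strip_prefix("ws://") {
        Some(rest) => rest,
        None => return false,
    };
    // The authority ends at the first path, query, or fragment delimiter.
    let authority = rest
        .split(|c: char| c == '/' || c == '?' || c == '#')
        .next()
        .unwrap_or("");
    let host = if let Some(stripped) = authority.strip_prefix('[') {
        // Bracketed IPv6 literal such as [::1]:4500.
        stripped.split(']').next().unwrap_or("")
    } else {
        // Drop a trailing :port if present.
        authority.rsplit_once(':').map_or(authority, |(h, _)| h)
    };
    host.eq_ignore_ascii_case("localhost")
        || host.parse::<IpAddr>().map_or(false, |ip| ip.is_loopback())
}
```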

Testing:

- tested both auth methods manually to confirm connection success and

rejection for both auth types

When `tui_app_server` is enabled, shell commands in the transcript

render as fully quoted invocations like `/bin/zsh -lc "..."`. The

non-app-server TUI correctly shows the parsed command body.

Root cause:

The app-server stores `ThreadItem::CommandExecution.command` as a

shell-quoted string. When `tui_app_server` bridges that item back into

the exec renderer, it was passing `vec![command]` unchanged instead of

splitting the string back into argv. That prevented

`strip_bash_lc_and_escape()` from recognizing the shell wrapper, so the

renderer displayed the wrapper literally.

Solution:

Add a shared command-string splitter that round-trips shell-quoted

commands back into argv when it is safe to do so, while preserving

non-roundtrippable inputs as a single string. Use that helper everywhere

`tui_app_server` reconstructs exec commands from app-server payloads,

including live command-execution items, replayed thread items, and exec

approval requests. This restores the same command display behavior as

the direct TUI path without breaking Windows-style commands that cannot

be safely round-tripped.
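The round-trip idea can be sketched with a simplified splitter and quoter. Everything here is an assumption for illustration: the helper names are hypothetical, and the quoting rules are a minimal POSIX-like subset rather than the rules the real helper uses.

```rust
/// Simplified POSIX-like splitter: whitespace separates words, single quotes
/// are literal, double quotes allow backslash escapes, and a backslash
/// outside quotes escapes the next character. `None` on unterminated input.
fn split_words(input: &str) -> Option<Vec<String>> {
    let mut words = Vec::new();
    let mut current = String::new();
    let mut in_word = false;
    let mut chars = input.chars();
    while let Some(c) = chars.next() {
        match c {
            '\'' => {
                in_word = true;
                loop {
                    match chars.next()? {
                        '\'' => break,
                        other => current.push(other),
                    }
                }
            }
            '"' => {
                in_word = true;
                loop {
                    match chars.next()? {
                        '"' => break,
                        '\\' => current.push(chars.next()?),
                        other => current.push(other),
                    }
                }
            }
            '\\' => {
                in_word = true;
                current.push(chars.next()?);
            }
            c if c.is_whitespace() => {
                if in_word {
                    words.push(std::mem::take(&mut current));
                    in_word = false;
                }
            }
            other => {
                in_word = true;
                current.push(other);
            }
        }
    }
    if in_word {
        words.push(current);
    }
    Some(words)
}

/// Quotes one word: bare if it only uses safe characters, else single-quoted.
fn quote_word(word: &str) -> String {
    let safe = !word.is_empty()
        && word
            .chars()
            .all(|c| c.is_ascii_alphanumeric() || "_@%+=:,./-".contains(c));
    if safe {
        word.to_string()
    } else {
        format!("'{}'", word.replace('\'', "'\\''"))
    }
}

/// Splits `command` into argv only if re-quoting reproduces the input
/// exactly; otherwise keeps the whole string as a single element.
fn split_command_string(command: &str) -> Vec<String> {
    if let Some(words) = split_words(command) {
        let rejoined = words.iter().map(|w| quote_word(w)).collect::<Vec<_>>().join(" ");
        if !words.is_empty() && rejoined == command {
            return words;
        }
    }
    vec![command.to_string()]
}
```

A Windows-style path such as `C:\Program Files\app.exe` loses its backslashes when split, so the rejoined string differs from the input and the command is preserved as a single string.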

CHAINED PR: the base branch is `eternal/hooks-pretooluse-bash`, not `main`, so the PR linked below should land first.

Adds a post-tool hook that matches the pre-tool functionality introduced in https://github.com/openai/codex/pull/15211.

`PreToolUse` runs before plain shell calls and can block them. The `PostToolUse` hook added here runs after the command has executed.

example run:

```

› as a test, run in parallel the following commands:

- echo 'one'

- echo '[block-pre-tool-use]'

- echo '[block-post-tool-use]'

⚠ MCP startup incomplete (failed: notion, linear)

• Cruising through those three commands in parallel now, and I’ll share the exact outputs right after

they land.

• Running PreToolUse hook: checking the observatory runes

• Running PreToolUse hook: checking the observatory runes

• Running PreToolUse hook: checking the observatory runes

PreToolUse hook (blocked)

warning: wizard-tower PreToolUse demo blocked a Bash command on purpose.

feedback: PreToolUse demo blocked the command. Remove [block-pre-tool-use] to continue.

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo 'one'

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo '[block-post-tool-use]'

• Ran echo '[block-post-tool-use]'

└ [block-post-tool-use]

• Ran echo 'one'

└ one

• Running PostToolUse hook: reading the observatory aftermath

• Running PostToolUse hook: reading the observatory aftermath

PostToolUse hook (blocked)

warning: wizard-tower PostToolUse demo blocked the Bash follow-through on purpose.

feedback: PostToolUse demo blocked the result after execution. Remove [block-post-tool-use] to

continue.

PostToolUse hook (completed)

warning: wizard-tower PostToolUse demo inspected Bash: echo 'one'

hook context: PostToolUse demo saw the Bash result. Response preview: one

──────────────────────────────────────────────────────────────────────────────────────────────────────

• Ran them in parallel. Little wave report:

- echo 'one' completed and returned one.

- echo '[block-pre-tool-use]' was blocked before execution by the PreToolUse hook.

- echo '[block-post-tool-use]' ran, but its result was blocked afterward by the PostToolUse hook.

The hook messages were:

- PreToolUse demo blocked the command. Remove [block-pre-tool-use] to continue.

- PostToolUse demo blocked the result after execution. Remove [block-post-tool-use] to continue.

› for the blocked messages (correctly blocked in this test) did the tool output land back into

context?

• Yeah, from this run:

- PreToolUse block: the command itself did not execute, so no command output landed in context. Only

the block message did.

- PostToolUse block: the command did execute, but the actual stdout did not come back into context.

Only the post-tool block message did.

So the blocked notifications landed in context, but the blocked command payloads themselves did not.

Nice clean guardrail, aloha.

```

## TL;DR

This PR changes the `tui_app_server` _path_ in the following ways:

- add missing feature to show agent names (shows only UUIDs today)

- add `Cmd/Alt+Arrows` navigation between agent conversations

## Problem

When the TUI connects to a remote app server, collab agent tool-call

items (spawn, wait, delegate, etc.) render thread UUIDs instead of

human-readable agent names because the `ChatWidget` never receives

nickname/role metadata for receiver threads. Separately, keyboard

next/previous agent navigation silently does nothing when the local

`AgentNavigationState` cache has not yet been populated with subagent

threads that the remote server already knows about.

Both issues share a root cause: in the remote (app-server) code path the

TUI never proactively fetches thread metadata. In the local code path

this metadata arrives naturally via spawn events the TUI itself

orchestrates, but in the remote path those events were processed by a

different client and the TUI only sees the resulting collab tool-call

notifications.

## Mental model

Collab agent tool-call notifications reference receiver threads by id,

but carry no nickname or role. The TUI needs that metadata in two

places:

1. **Rendering** -- `ChatWidget` converts `CollabAgentToolCall` items

into history cells. Without metadata, agent status lines show raw UUIDs.

2. **Navigation** -- `AgentNavigationState` tracks known threads for the

`/agent` picker and keyboard cycling. Without entries for remote

subagents, next/previous has nowhere to go.

This change closes the gap with two complementary strategies:

- **Eager hydration**: when any notification carries

`receiver_thread_ids`, the TUI fetches metadata (`thread/read`) for

threads it has not yet cached before the notification is rendered.

- **Backfill on thread switch**: when the user resumes, forks, or starts

a new app-server thread, the TUI fetches the full `thread/loaded/list`,

walks the parent-child spawn tree, and registers every descendant

subagent in both the navigation cache and the `ChatWidget` metadata map.

A new `collab_agent_metadata` side-table in `ChatWidget` stores

nickname/role keyed by `ThreadId`, kept in sync by `App` whenever it

calls `upsert_agent_picker_thread`. The `replace_chat_widget` helper

re-seeds this map from `AgentNavigationState` so that thread switches

(which reconstruct the widget) do not lose previously discovered

metadata.

## Non-goals

- This change does not alter the local (non-app-server) collab code

path. That path already receives metadata via spawn events and is

unaffected.

- No new protocol messages are introduced. The change uses existing

`thread/read` and `thread/loaded/list` RPCs.

- No changes to how `AgentNavigationState` orders or cycles through

threads. The traversal logic is unchanged; only the population of

entries is extended.

## Tradeoffs

- **Extra RPCs on notification path**:

`hydrate_collab_agent_metadata_for_notification` issues a `thread/read`

for each unknown receiver thread before the notification is forwarded to

rendering. This adds latency on the notification path but only fires

once per thread (the result is cached). The alternative -- rendering

first and backfilling names later -- would cause visible flicker as

UUIDs are replaced with names.

- **Backfill fetches all loaded threads**:

`backfill_loaded_subagent_threads` fetches the full loaded-thread list

and walks the spawn tree even when the user may only care about one

subagent. This is simple and correct but O(loaded_threads) per thread

switch. For typical session sizes this is negligible; it could become a

concern for sessions with hundreds of subagents.

- **Metadata duplication**: agent nickname/role is now stored in both

`AgentNavigationState` (for picker/label) and

`ChatWidget::collab_agent_metadata` (for rendering). The two are kept in

sync through `upsert_agent_picker_thread` and `replace_chat_widget`, but

there is no compile-time enforcement of this coupling.

## Architecture

### New module: `app::loaded_threads`

Pure function `find_loaded_subagent_threads_for_primary` that takes a

flat list of `Thread` objects and a primary thread id, then walks the

`SessionSource::SubAgent` parent-child edges to collect all transitive

descendants. Returns a sorted vec of `LoadedSubagentThread` (thread_id +

nickname + role). No async, no side effects -- designed for unit

testing.
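A spawn-tree walk of this shape can be sketched as a breadth-first traversal. The types below are simplified stand-ins (a `u32` id and an optional parent id instead of `ThreadId` and `SessionSource::SubAgent`), and the function name is hypothetical.

```rust
use std::collections::{HashMap, HashSet, VecDeque};

/// Simplified stand-in for a loaded thread.
#[derive(Debug, Clone, PartialEq)]
struct ThreadInfo {
    id: u32,
    parent: Option<u32>, // Some(parent) when the thread was spawned as a subagent
    nickname: Option<String>,
    role: Option<String>,
}

/// Collects every transitive descendant of `primary`, sorted by id.
fn find_descendants(threads: &[ThreadInfo], primary: u32) -> Vec<ThreadInfo> {
    // Index children by parent id so each edge is visited once.
    let mut children: HashMap<u32, Vec<&ThreadInfo>> = HashMap::new();
    for thread in threads {
        if let Some(parent) = thread.parent {
            children.entry(parent).or_default().push(thread);
        }
    }
    let mut seen = HashSet::new();
    seen.insert(primary);
    let mut queue = VecDeque::new();
    queue.push_back(primary);
    let mut found = Vec::new();
    while let Some(id) = queue.pop_front() {
        for child in children.get(&id).into_iter().flatten() {
            // `seen` guards against cycles in malformed input.
            if seen.insert(child.id) {
                found.push((**child).clone());
                queue.push_back(child.id);
            }
        }
    }
    found.sort_by_key(|t| t.id);
    found
}
```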

### New methods on `App`

| Method | Purpose |
|--------|---------|
| `collab_receiver_thread_ids` | Extracts `receiver_thread_ids` from `ItemStarted` / `ItemCompleted` collab notifications |
| `hydrate_collab_agent_metadata_for_notification` | Fetches and caches metadata for unknown receiver threads before a notification is rendered |
| `backfill_loaded_subagent_threads` | Bulk-fetches all loaded threads and registers descendants of the primary thread |
| `adjacent_thread_id_with_backfill` | Attempts navigation, falls back to backfill if the cache has no adjacent entry |
| `replace_chat_widget` | Replaces the widget and re-seeds its metadata map from `AgentNavigationState` |

### New state in `ChatWidget`

`collab_agent_metadata: HashMap<ThreadId, CollabAgentMetadata>` -- a

lookup table that rendering functions consult to attach human-readable

names to collab tool-call items. Populated externally by `App` via

`set_collab_agent_metadata`.

### New method on `AppServerSession`

`thread_loaded_list` -- thin wrapper around

`ClientRequest::ThreadLoadedList`.

## Observability

- `tracing::warn` on invalid thread ids during hydration and backfill.

- `tracing::warn` on failed `thread/read` or `thread/loaded/list` RPCs

(with thread id and error).

- No new metrics or feature flags.

## Tests

- **`loaded_threads::tests::finds_loaded_subagent_tree_for_primary_thread`** -- unit test for the spawn-tree walk: verifies child and grandchild are included, unrelated threads are excluded, and metadata is carried through.

- **`app::tests::replace_chat_widget_reseeds_collab_agent_metadata_for_replay`** -- integration test that creates a `ChatWidget`, replaces it via `replace_chat_widget`, replays a collab wait notification, and asserts the rendered history cell contains the agent name rather than a UUID.

- **Updated snapshot** `app_server_collab_wait_items_render_history` --

the existing collab wait rendering test now sets metadata before sending

notifications, so the snapshot shows `Robie [explorer]` / `Ada

[reviewer]` instead of raw thread ids.

---------

Co-authored-by: Eric Traut <etraut@openai.com>

## TL;DR

Fix duplicated reasoning summaries in `tui_app_server`.

<img width="1716" height="912" alt="image"

src="https://github.com/user-attachments/assets/6362f25a-ab1c-4a01-bf10-b5616c9428c2"

/>

During live turns, reasoning text is already rendered incrementally from

`ReasoningSummaryTextDelta`. When the same reasoning item later arrives

via `ItemCompleted`, we should only finalize the reasoning block, not

render the same summary again.

## What changed

- render reasoning summaries from completed `ThreadItem::Reasoning` items only during replay
- keep live completed reasoning items finalize-only
- add a regression test covering the live streaming + completion path

## Why

Without this, the first reasoning summary often appears twice in the

transcript when `model_reasoning_summary = "detailed"` and

`features.tui_app_server = true`.

Migrate `cwd` and related session/config state to `AbsolutePathBuf` so

downstream consumers consistently see absolute working directories.

Add test-only `.abs()` helpers for `Path`, `PathBuf`, and `TempDir`, and

update branch-local tests to use them instead of

`AbsolutePathBuf::try_from(...)`.

For the remaining TUI/app-server snapshot coverage that renders absolute

cwd values, keep the snapshots unchanged and skip the Windows-only cases

where the platform-specific absolute path layout differs.

This PR adds code to recover from a narrow app-server timing race where

a follow-up can be sent after the previous turn has already ended but

before the TUI has observed that completion.

Instead of surfacing `turn/steer failed: no active turn to steer`, the

client now treats that as a stale active-turn cache and falls back to

starting a fresh turn, matching the intended submit behavior more

closely. This is similar to the strategy employed by other app server

clients (notably, the IDE extension and desktop app).

This race exists because the current app-server API makes the client

choose between two separate RPCs, `turn/steer` and `turn/start`, based on

its local view of whether a turn is still active. That view is

replicated from asynchronous notifications, so it can be stale for a

brief window. The server may already have ended the turn while the

client still believes it is in progress. Since the choice is made

client-side rather than atomically on the server, tui_app_server can

occasionally send turn/steer for a turn that no longer exists.
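The fallback can be sketched against a fake server. This is an illustration under assumptions: `TurnApi`, `SteerError`, `Submitted`, `FakeServer`, and `submit` are hypothetical names, and the real client matches on the RPC error rather than a dedicated enum variant.

```rust
#[derive(Debug, PartialEq)]
enum SteerError {
    NoActiveTurn,
    Other(String),
}

#[derive(Debug, PartialEq)]
enum Submitted {
    Steered,
    StartedFreshTurn,
}

/// Hypothetical abstraction over the two RPCs.
trait TurnApi {
    fn turn_steer(&mut self, input: &str) -> Result<(), SteerError>;
    fn turn_start(&mut self, input: &str) -> Result<(), String>;
}

/// Submits input, treating "no active turn" as a stale local cache and
/// falling back to starting a fresh turn.
fn submit<A: TurnApi>(
    api: &mut A,
    believes_turn_active: &mut bool,
    input: &str,
) -> Result<Submitted, String> {
    if *believes_turn_active {
        match api.turn_steer(input) {
            Ok(()) => return Ok(Submitted::Steered),
            Err(SteerError::NoActiveTurn) => *believes_turn_active = false,
            Err(SteerError::Other(msg)) => return Err(msg),
        }
    }
    api.turn_start(input)?;
    *believes_turn_active = true;
    Ok(Submitted::StartedFreshTurn)
}

/// Fake server whose view of the turn can differ from the client's cache.
struct FakeServer {
    turn_active: bool,
}

impl TurnApi for FakeServer {
    fn turn_steer(&mut self, _input: &str) -> Result<(), SteerError> {
        if self.turn_active {
            Ok(())
        } else {
            Err(SteerError::NoActiveTurn)
        }
    }

    fn turn_start(&mut self, _input: &str) -> Result<(), String> {
        self.turn_active = true;
        Ok(())
    }
}
```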

- Removes provenance filtering in the mentions feature for apps and

skills that were installed as part of a plugin.

- All skills and apps for a plugin are mentionable with this change.

### Summary

Add the v2 app-server filesystem watch RPCs and notifications, wire them

through the message processor, and implement connection-scoped watches

with notify-backed change delivery. This also updates the schema

fixtures, app-server documentation, and the v2 integration coverage for

watch and unwatch behavior.

This allows clients to efficiently watch for filesystem updates, e.g. to react to branch changes.

### Testing

- exercise watch lifecycles for directory changes, atomic file

replacement, missing-file targets, and unwatch cleanup

## Summary

Fixes slow `Ctrl+C` exit from the ChatGPT browser-login screen in

`tui_app_server`.

## Root cause

Onboarding-level `Ctrl+C` quit bypassed the auth widget's cancel path.

That let the active ChatGPT login keep running, and in-process

app-server shutdown then waited on the stale login attempt before

finishing.

## Changes

- Extract a shared `cancel_active_attempt()` path in the auth widget

- Use that path from onboarding-level `Ctrl+C` before exiting the TUI

- Add focused tests for canceling browser-login and device-code attempts

- Add app-server shutdown cleanup that explicitly drops any active login

before draining background work

## Summary

Fixes ChatGPT login in `tui_app_server` so the local browser opens again

during in-process login flows.

## Root cause

The app-server backend intentionally starts ChatGPT login with browser

auto-open disabled, expecting the TUI client to open the returned

`auth_url`. The app-server TUI was not doing that, so the login URL was

shown in the UI but no browser window opened.

## Changes

- Add a helper that opens the returned ChatGPT login URL locally

- Call it from the main ChatGPT login flow

- Call it from the device-code fallback-to-browser path as well

- Limit auto-open to in-process app-server handles so remote sessions do

not try to open a browser against a remote localhost callback

## Summary

Fixes early TUI exit paths that could leave the terminal in a dirty

state and cause a stray `%` prompt marker after the app quit.

## Root cause

Both `tui` and `tui_app_server` had early returns after `tui::init()`

that did not guarantee terminal restore. When that happened, shells like

`zsh` inherited the altered terminal state.

## Changes

- Add a restore guard around `run_ratatui_app()` in both `tui` and

`tui_app_server`

- Route early exits through the guard instead of relying on scattered

manual restore calls

- Ensure terminal restore still happens on normal shutdown
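A restore guard of this kind is typically an RAII value whose `Drop` performs the cleanup. The sketch below is a generic illustration, not the guard added in this change: `TerminalRestoreGuard` and `run_with_restore` are hypothetical, and a shared flag stands in for the real terminal restore call.

```rust
use std::cell::Cell;
use std::rc::Rc;

/// Runs the stored restore action exactly once when dropped.
struct TerminalRestoreGuard {
    restore: Option<Box<dyn FnOnce()>>,
}

impl TerminalRestoreGuard {
    fn new(restore: impl FnOnce() + 'static) -> Self {
        Self { restore: Some(Box::new(restore)) }
    }
}

impl Drop for TerminalRestoreGuard {
    fn drop(&mut self) {
        if let Some(restore) = self.restore.take() {
            restore();
        }
    }
}

/// Stand-in for the app entry point: the guard covers both the early return
/// and the normal exit because it is dropped on every path out of the scope.
fn run_with_restore(restored: Rc<Cell<bool>>, fail_early: bool) -> Result<(), String> {
    let _guard = TerminalRestoreGuard::new(move || restored.set(true));
    if fail_early {
        return Err("early exit".to_string());
    }
    Ok(())
}
```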

- Remove marketplace from left column.

- Change `Can be installed` to `Available`

- Align right-column marketplace + selected-row hint text across states.

- Changes applied to both `tui` and `tui_app_server`.

- Update related snapshots/tests.

<img width="2142" height="590" alt="image"

src="https://github.com/user-attachments/assets/6e60b783-2bea-46d4-b353-f2fd328ac4d0"

/>

- create `codex-git-utils` and move the shared git helpers into it with

file moves preserved for diff readability

- move the `GitInfo` helpers out of `core` so stacked rollout work can

depend on the shared crate without carrying its own git info module

---------

Co-authored-by: Ahmed Ibrahim <219906144+aibrahim-oai@users.noreply.github.com>

Co-authored-by: Codex <noreply@openai.com>

## Summary

Fixes a `tui_app_server` bootstrap failure when launching the CLI while

logged out.

## Root cause

During TUI bootstrap, `tui_app_server` fetched `account/rateLimits/read`

unconditionally and treated failures as fatal. When the user was logged

out, there was no ChatGPT account available, so that RPC failed and

aborted startup with:

```

Error: account/rateLimits/read failed during TUI bootstrap

```

## Changes

- Only fetch bootstrap rate limits when OpenAI auth is required and a

ChatGPT account is present

- Treat bootstrap rate-limit fetch failures as non-fatal and fall back

to empty snapshots

- Log the fetch failure at debug level instead of aborting startup
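The gating and fallback behavior can be sketched as follows. The function name, the `Vec<String>` snapshot type, and the closure-based fetch are assumptions for illustration; the real code logs through `tracing` at debug level rather than `eprintln!`.

```rust
/// Returns bootstrap rate-limit snapshots, or an empty list when the fetch
/// is skipped or fails. Never aborts startup.
fn bootstrap_rate_limits(
    requires_openai_auth: bool,
    has_chatgpt_account: bool,
    fetch: impl FnOnce() -> Result<Vec<String>, String>,
) -> Vec<String> {
    // Skip the RPC entirely when there is no account to query.
    if !(requires_openai_auth && has_chatgpt_account) {
        return Vec::new();
    }
    match fetch() {
        Ok(snapshots) => snapshots,
        Err(err) => {
            // Stand-in for a debug-level log line.
            eprintln!("rate-limit bootstrap fetch failed: {}", err);
            Vec::new()
        }
    }
}
```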

- Remove numeric prefixes for disabled rows in shared list rendering. These numbers are keyboard shortcuts (e.g., pressing "2" selects option `#2`). Disabled items cannot be selected, so keeping numbers on them is misleading.

- Apply the same behavior in both tui and tui_app_server.

- Update affected snapshots for apps/plugins loading and plugin detail

rows.

_**This is a global change.**_

Before:

<img width="1680" height="488" alt="image"

src="https://github.com/user-attachments/assets/4bcf94ad-285f-48d3-a235-a85b58ee58e2"

/>

After:

<img width="1706" height="484" alt="image"

src="https://github.com/user-attachments/assets/76bb6107-a562-42fe-ae94-29440447ca77"

/>

Updates plugin ordering so installed plugins are listed first, with

alphabetical sorting applied within the installed and uninstalled

groups. The behavior is now consistent across both `tui` and

`tui_app_server`, and related tests/snapshots were updated.
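The ordering rule amounts to a two-key sort. In this sketch, `PluginEntry` and `sort_plugins` are hypothetical, and the case-insensitive name comparison is an assumption not stated in the description.

```rust
#[derive(Debug, Clone, PartialEq)]
struct PluginEntry {
    name: String,
    installed: bool,
}

/// Installed plugins first, then alphabetical by name within each group.
fn sort_plugins(plugins: &mut [PluginEntry]) {
    plugins.sort_by(|a, b| {
        // `true` sorts after `false`, so compare in reverse to put installed first.
        b.installed
            .cmp(&a.installed)
            .then_with(|| a.name.to_lowercase().cmp(&b.name.to_lowercase()))
    });
}
```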

This PR completes the conversion of non-interactive `codex exec` to use

app server rather than directly using core events and methods.

### Summary

- move `codex-exec` off exec-owned `AuthManager` and `ThreadManager`

state

- route exec bootstrap, resume, and auth refresh through existing

app-server paths

- replace legacy `codex/event/*` decoding in exec with typed app-server

notification handling

- update human and JSONL exec output adapters to translate existing

app-server notifications only

- clean up "app server client" layer by eliminating support for legacy

notifications; this is no longer needed

- remove exposure of `authManager` and `threadManager` from "app server

client" layer

### Testing

- `exec` has pretty extensive unit and integration tests already, and

these all pass

- In addition, I asked Codex to put together a comprehensive manual set

of tests to cover all of the `codex exec` functionality (including

command-line options), and it successfully generated and ran these tests

Show all plugin marketplaces in the /plugins popup by removing the

`openai-curated` marketplace filter, and update plugin popup

copy/tests/snapshots to match the new behavior in both TUI codepaths.

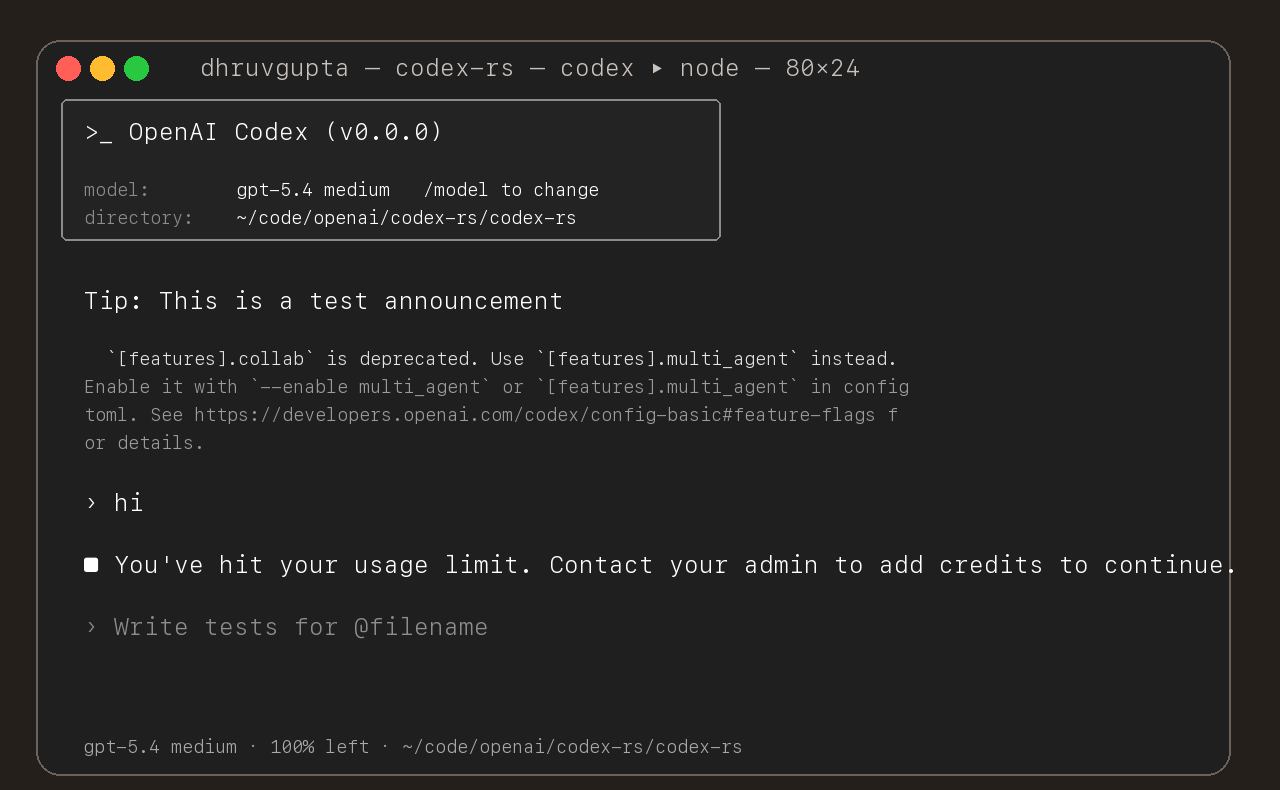

## Summary

- update the self-serve business usage-based limit message to direct

users to their admin for additional credits

- add a focused unit test for the self_serve_business_usage_based plan

branch

Also fixed: when a user hit a rate limit but still had credits, Codex CLI told them to switch models. It no longer suggests switching when credits remain.

## Test

- launched the source-built CLI and verified the updated message is

shown for the self-serve business usage-based plan

## Summary

Adds support for `approvals_reviewer` to `Op::UserTurn` so we can migrate `[CodexMessageProcessor::turn_start]` to use `Op::UserTurn`.

## Testing

- [x] Adds quick test for the new field

Co-authored-by: Codex <noreply@openai.com>

- add a `PreToolUse` hook, initially for bash-like tool execution only
- block shell execution before dispatch with deny-only hook behavior
- introduce a matcher framework in `common.rs` that determines when hooks run

example run:

```

› run three parallel echo commands, and the second one should echo "[block-pre-tool-use]" as a test

• Running the three echo commands in parallel now and I’ll report the output directly.

• Running PreToolUse hook: name for demo pre tool use hook

• Running PreToolUse hook: name for demo pre tool use hook

• Running PreToolUse hook: name for demo pre tool use hook

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo "first parallel echo"

PreToolUse hook (blocked)

warning: wizard-tower PreToolUse demo blocked a Bash command on purpose.

feedback: PreToolUse demo blocked the command. Remove [block-pre-tool-use] to continue.

PreToolUse hook (completed)

warning: wizard-tower PreToolUse demo inspected Bash: echo "third parallel echo"

• Ran echo "first parallel echo"

└ first parallel echo

• Ran echo "third parallel echo"

└ third parallel echo

• Three little waves went out in parallel.

1. printed first parallel echo

2. was blocked before execution because it contained the exact test string [block-pre-tool-use]

3. printed third parallel echo

There was also an unrelated macOS defaults warning around the successful commands, but the echoes

themselves worked fine. If you want, I can rerun the second one with a slightly modified string so

it passes cleanly.

```
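The deny-only behavior in the run above can be sketched roughly as follows. This is a minimal illustration, not the code from the PR: `ToolMatcher`, `HookOutcome`, and `run_pre_tool_use` are hypothetical names, and the matching rule (`*` or an exact tool name) is an assumption about what a matcher in `common.rs` might do.

```rust
/// Result of running a hook for one tool call (hypothetical type).
#[derive(Debug, PartialEq, Eq)]
enum HookOutcome {
    /// The hook ran and let the command through.
    Completed,
    /// The hook ran and denied the command before dispatch.
    Blocked { feedback: String },
}

/// Hypothetical matcher: "*" matches every tool, otherwise the tool name
/// must match exactly.
struct ToolMatcher {
    pattern: String,
}

impl ToolMatcher {
    fn new(pattern: &str) -> Self {
        Self { pattern: pattern.to_string() }
    }

    fn matches(&self, tool_name: &str) -> bool {
        self.pattern == "*" || self.pattern == tool_name
    }
}

/// Runs a demo deny-only hook. Returns `None` when the matcher does not
/// select this tool, so the hook never runs for it.
fn run_pre_tool_use(
    matcher: &ToolMatcher,
    tool_name: &str,
    command: &str,
    deny_marker: &str,
) -> Option<HookOutcome> {
    if !matcher.matches(tool_name) {
        return None;
    }
    if command.contains(deny_marker) {
        // Deny-only: the hook can block, but it never rewrites the command.
        return Some(HookOutcome::Blocked {
            feedback: format!(
                "PreToolUse demo blocked the command. Remove {deny_marker} to continue."
            ),
        });
    }
    Some(HookOutcome::Completed)
}
```

Because each parallel call is checked independently, blocking the second command leaves the first and third free to run, which matches the transcript.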

## Summary

- route /realtime, Ctrl+C, and deleted realtime meters through the same

realtime stop path

- keep generic transcription placeholder cleanup free of realtime

shutdown side effects

## Testing

- Relied on CI for verification; did not run local tests

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

- queue input after the user submits `/compact` until that manual

compact turn ends

- mirror the same behavior in the app-server TUI

- add regressions for input queued before compact starts and while it is

running
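The queueing behavior described above could look roughly like the sketch below. It is an assumption-laden illustration: `Composer`, its fields, and `on_compact_turn_ended` are hypothetical names, and the real TUI may release queued input differently (for example, one message per turn).

```rust
use std::collections::VecDeque;

/// Hypothetical stand-in for the TUI input state.
#[derive(Default)]
struct Composer {
    /// True from the moment `/compact` is submitted until that turn ends.
    compact_pending: bool,
    /// Input held back while the manual compact turn is in flight.
    queued: VecDeque<String>,
    /// Input actually sent on, in order.
    submitted: Vec<String>,
}

impl Composer {
    fn submit(&mut self, input: &str) {
        if self.compact_pending {
            // Hold input instead of racing the compact turn.
            self.queued.push_back(input.to_string());
            return;
        }
        if input.trim() == "/compact" {
            self.compact_pending = true;
        }
        self.submitted.push(input.to_string());
    }

    fn on_compact_turn_ended(&mut self) {
        self.compact_pending = false;
        // Release queued input in order; stop if a queued `/compact`
        // starts another compact turn.
        while let Some(next) = self.queued.pop_front() {
            self.submit(&next);
            if self.compact_pending {
                break;
            }
        }
    }
}
```

Setting the flag at submit time, rather than when the compact turn starts, is what covers the "queued before compact starts" case mentioned in the regressions.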

Co-authored-by: Codex <noreply@openai.com>

- Duplicate app mentions are now suppressed when they’re plugin-backed

with the same display name.

- Remaining connector mentions are now labeled with the category `[Plugin]` when plugin metadata is present, and `[App]` otherwise.

- Mention result lists are now capped to 8 rows after filtering.

- Updates both tui and tui_app_server with the same changes.
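One way to read the rules above is sketched below. The `Mention` type, the `plugin_backed` flag, and the exact dedup rule (an app entry is dropped when a plugin-backed entry shares its display name) are assumptions made for illustration; only the `[Plugin]`/`[App]` labels and the cap of 8 rows come from the description.

```rust
use std::collections::HashSet;

/// Cap on rows shown after filtering (from the description above).
const MAX_MENTION_ROWS: usize = 8;

/// Hypothetical mention entry.
#[derive(Debug, Clone, PartialEq, Eq)]
struct Mention {
    display_name: String,
    plugin_backed: bool,
}

/// Category label shown next to a mention.
fn category_label(mention: &Mention) -> &'static str {
    if mention.plugin_backed { "[Plugin]" } else { "[App]" }
}

/// Drops app entries shadowed by a plugin-backed entry with the same
/// display name, collapses repeated plugin entries, then caps the list.
fn visible_mentions(candidates: &[Mention]) -> Vec<Mention> {
    let plugin_names: HashSet<&str> = candidates
        .iter()
        .filter(|m| m.plugin_backed)
        .map(|m| m.display_name.as_str())
        .collect();
    let mut seen_plugin_names: HashSet<&str> = HashSet::new();
    candidates
        .iter()
        .filter(|m| {
            if m.plugin_backed {
                // `insert` returns false for a name that was already kept.
                seen_plugin_names.insert(m.display_name.as_str())
            } else {
                !plugin_names.contains(m.display_name.as_str())
            }
        })
        .take(MAX_MENTION_ROWS)
        .cloned()
        .collect()
}
```

Applying the cap after filtering means suppressed duplicates never consume one of the 8 visible rows.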

## Why

Fixes [#15283](https://github.com/openai/codex/issues/15283), where

sandboxed tool calls fail on older distro `bubblewrap` builds because

`/usr/bin/bwrap` does not understand `--argv0`. The upstream [bubblewrap

v0.9.0 release

notes](https://github.com/containers/bubblewrap/releases/tag/v0.9.0)

explicitly call out `Add --argv0`. Flipping `use_legacy_landlock`

globally works around that compatibility bug, but it also weakens the

default Linux sandbox and breaks proxy-routed and split-policy cases

called out in review.

The follow-up Linux CI failure was in the new launcher test rather than

the launcher logic: the fake `bwrap` helper stayed open for writing, so

Linux would not exec it. This update also closes the user-visibility gap

from review by surfacing the same startup warning when `/usr/bin/bwrap`

is present but too old for `--argv0`, not only when it is missing.

## What Changed

- keep `use_legacy_landlock` default-disabled

- teach `codex-rs/linux-sandbox/src/launcher.rs` to fall back to the

vendored bubblewrap build when `/usr/bin/bwrap` does not advertise

`--argv0` support

- add launcher tests for supported, unsupported, and missing system

`bwrap`

- write the fake `bwrap` test helper to a closed temp path so the

supported-path launcher test works on Linux too

- extend the startup warning path so Codex warns when `/usr/bin/bwrap`

is missing or too old to support `--argv0`

- mirror the warning/fallback wording across

`codex-rs/linux-sandbox/README.md` and `codex-rs/core/README.md`,

including that the fallback is the vendored bubblewrap compiled into the

binary

- cite the upstream `bubblewrap` release that introduced `--argv0`
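The fallback decision can be sketched as below. This is a hedged illustration rather than the contents of `launcher.rs`: `BwrapChoice`, `help_lists_argv0`, `choose_bwrap`, and `probe_help` are hypothetical names, and probing via `--help` output is an assumption inferred from the test name `prefers_system_bwrap_when_help_lists_argv0`.

```rust
use std::path::Path;
use std::process::Command;

/// Which bubblewrap the launcher should use (hypothetical type).
#[derive(Debug, PartialEq, Eq)]
enum BwrapChoice {
    System,
    Vendored,
}

/// True when the help text lists `--argv0` as its own token.
fn help_lists_argv0(help_text: &str) -> bool {
    help_text.split_whitespace().any(|token| token == "--argv0")
}

/// `system_help` is `None` when the system binary is missing or could not
/// be run; both that case and a too-old binary fall back to the vendored build.
fn choose_bwrap(system_help: Option<&str>) -> BwrapChoice {
    match system_help {
        Some(help) if help_lists_argv0(help) => BwrapChoice::System,
        _ => BwrapChoice::Vendored,
    }
}

/// Runs `<path> --help` and returns stdout plus stderr, or `None` if the
/// binary cannot be spawned.
fn probe_help(path: &Path) -> Option<String> {
    let output = Command::new(path).arg("--help").output().ok()?;
    let mut text = String::from_utf8_lossy(&output.stdout).into_owned();
    text.push_str(&String::from_utf8_lossy(&output.stderr));
    Some(text)
}
```

A caller would combine the two, for example `choose_bwrap(probe_help(Path::new("/usr/bin/bwrap")).as_deref())`, and emit the startup warning whenever the result is `BwrapChoice::Vendored`.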

## Verification

- `bazel test --config=remote --platforms=//:rbe

//codex-rs/linux-sandbox:linux-sandbox-unit-tests

--test_filter=launcher::tests::prefers_system_bwrap_when_help_lists_argv0

--test_output=errors`

- `cargo test -p codex-core system_bwrap_warning`

- `cargo check -p codex-exec -p codex-tui -p codex-tui-app-server -p

codex-app-server`

- `just argument-comment-lint`

## Summary

- use Shift+Left to edit the most recent queued message when running

under tmux

- mirror the same binding change in the app-server TUI

- add tmux-specific tests and snapshot coverage for the rendered

queued-message hint

## Testing

- just fmt

- cargo test -p codex-tui

- cargo test -p codex-tui-app-server

- just argument-comment-lint -p codex-tui -p codex-tui-app-server

Co-authored-by: Codex <noreply@openai.com>

## Summary

- remove `tui_app_server` handling for legacy app-server notifications

- drop the local ChatGPT auth refresh request path from `tui_app_server`

- remove the now-unused refresh response helper from local auth loading

Split out of #15106 so the `tui_app_server` cleanup can land separately

from the larger `codex-exec` app-server migration.

As part of moving the TUI onto the app server, we added temporary handling for some legacy events. We've confirmed that these do not need to be supported, so this PR removes that support from `tui_app_server`, allowing for additional simplifications in follow-on PRs. These events are needed only for very old rollouts, and none of the other app server clients (IDE extension or app) support them either.

## Summary

- stop translating legacy `codex/event/*` notifications inside

`tui_app_server`

- remove the TUI-side legacy warning and rollback buffering/replay paths

that were only fed by those notifications

- keep the lower-level app-server and app-server-client legacy event

plumbing intact so PR #15106 can rebase on top and handle the remaining

exec/lower-layer migration separately

- emit a typed `thread/realtime/transcriptUpdated` notification from

live realtime transcript deltas

- expose that notification as flat `threadId`, `role`, and `text` fields

instead of a nested transcript array

- continue forwarding raw `handoff_request` items on

`thread/realtime/itemAdded`, including the accumulated

`active_transcript`

- update app-server docs, tests, and generated protocol schema artifacts

to match the delta-based payloads

---------

Co-authored-by: Codex <noreply@openai.com>