## Summary

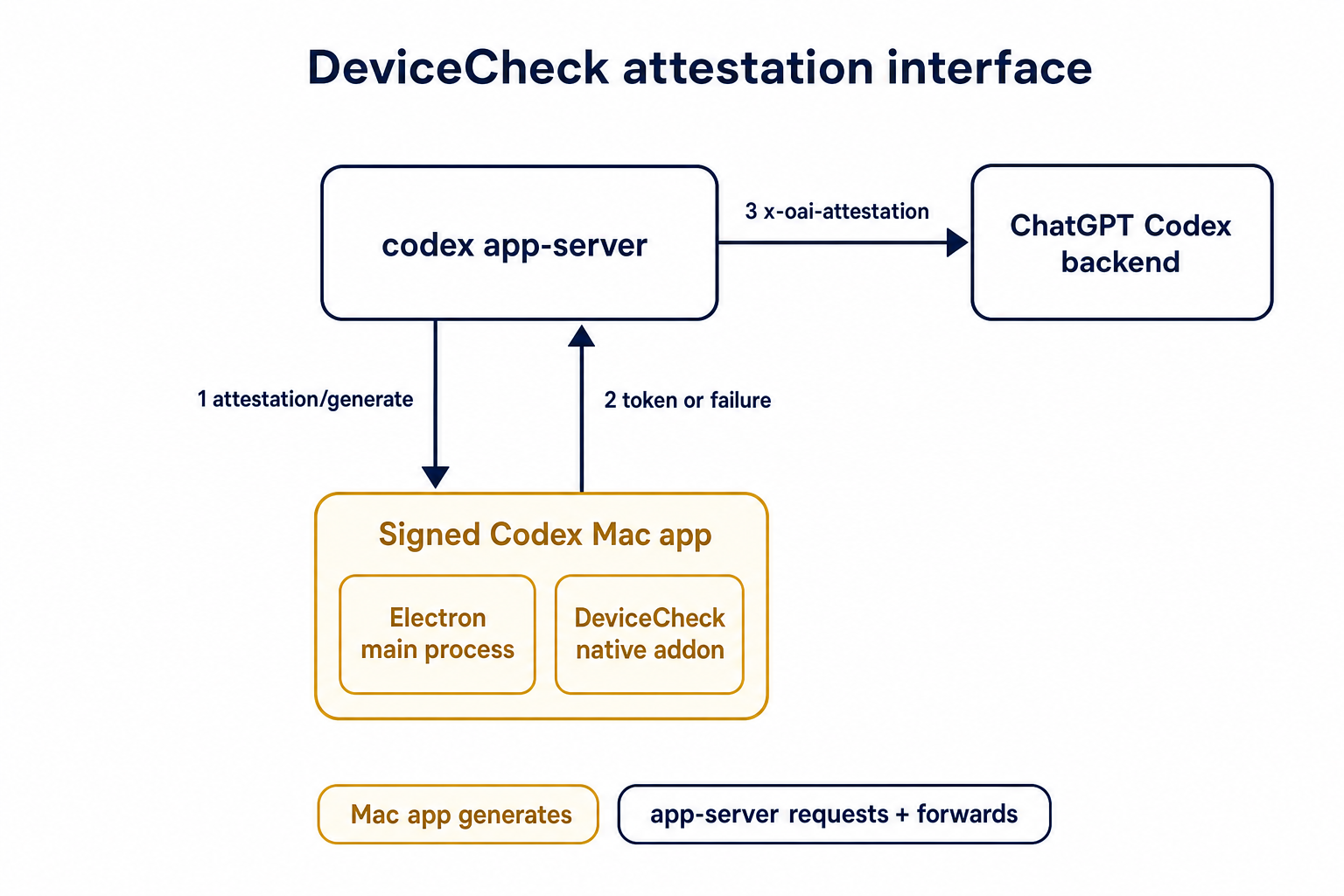

TL;DR: teaches `codex-rs` / app-server to request a desktop-provided attestation token and attach it as `x-oai-attestation` on the scoped ChatGPT Codex request paths.

## Details

This PR teaches the Codex app-server runtime how to request and attach an attestation token. It does not generate DeviceCheck tokens directly; instead, it relies on the connected desktop app to advertise that it can generate attestation and then asks that app for a fresh header value when needed.

The flow is:

1. The Codex desktop app connects to app-server.
2. During `initialize`, the app can advertise that it supports `requestAttestation`.
3. Before app-server calls selected ChatGPT Codex endpoints, it sends the internal server request `attestation/generate` to the app.
4. app-server receives a pre-encoded header value back.
5. app-server forwards that value as `x-oai-attestation` on the scoped outbound requests.

The code in this repo is mostly protocol and runtime plumbing: it adds the app-server request/response shape, introduces an attestation provider in core, wires that provider into Responses / compaction / realtime setup paths, and covers the intended scoping with tests. The signed macOS DeviceCheck generation remains owned by the desktop app PR.
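A minimal sketch of the scoping rule described above. It assumes a hypothetical `AttestationSource` trait standing in for the `attestation/generate` round trip and a hypothetical `SCOPED_PATHS` list; the real provider lives in core and its API is not shown in this PR.

```rust
use std::collections::BTreeMap;

/// Hypothetical stand-in for the desktop app answering `attestation/generate`.
trait AttestationSource {
    /// Returns a pre-encoded header value, or `None` if none is available
    /// (for example, the client never advertised `requestAttestation`).
    fn generate(&mut self) -> Option<String>;
}

/// Hypothetical scoping list; the real scoped paths are defined in core.
const SCOPED_PATHS: &[&str] = &["/backend-api/codex/responses"];
const ATTESTATION_HEADER: &str = "x-oai-attestation";

/// Requests a fresh value and attaches it only when the outbound path is scoped.
fn attach_attestation(
    path: &str,
    headers: &mut BTreeMap<String, String>,
    source: &mut dyn AttestationSource,
) {
    if !SCOPED_PATHS.contains(&path) {
        return; // Unscoped requests never trigger `attestation/generate`.
    }
    if let Some(value) = source.generate() {
        // The value is forwarded as-is; app-server does not decode it.
        headers.insert(ATTESTATION_HEADER.to_string(), value);
    }
}

/// Test double that hands out a new token on every call.
struct CountingSource {
    calls: usize,
}

impl AttestationSource for CountingSource {
    fn generate(&mut self) -> Option<String> {
        self.calls += 1;
        Some(format!("v1.token-{}", self.calls))
    }
}
```

The scope check runs before the source is called, so unscoped traffic never costs a round trip to the desktop app.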

## Related PR

- Codex desktop app implementation: https://github.com/openai/openai/pull/878649

## Validation

<details>

<summary>Tests run</summary>

```sh
cargo test -p codex-app-server-protocol
cargo test -p codex-core attestation --lib
cargo test -p codex-app-server --lib attestation
```

Also ran:

```sh
just fix -p codex-core
just fix -p codex-app-server
just fix -p codex-app-server-protocol
just fmt
just write-app-server-schema
```

</details>

<details>

<summary>E2E DeviceCheck validation</summary>

First validated the signed desktop app boundary directly: launched a packaged signed `Codex.app`, sent `attestation/generate`, decoded the returned `v1.` attestation header, and validated the extracted DeviceCheck token with `personal/jm/verify_devicecheck_token.py` using bundle ID `com.openai.codex`. Apple returned `status_code: 200` and `is_ok: true`.

Then ran the fuller app + app-server flow. The packaged `Codex.app` launched a current-branch app-server via `CODEX_CLI_PATH`, and a local MITM proxy intercepted outbound `chatgpt.com` traffic. The app-server requested `attestation/generate` from the real Electron app process, and the intercepted `/backend-api/codex/responses` traffic included `x-oai-attestation` on both routes:

```text
GET /backend-api/codex/responses Upgrade: websocket x-oai-attestation: present
POST /backend-api/codex/responses Upgrade: none x-oai-attestation: present
```

The captured header decoded to a DeviceCheck token that also validated with Apple for `com.openai.codex` (`status_code: 200`, `is_ok: true`, team `2DC432GLL2`).

</details>

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

`ToolName::display()` made it too easy to flatten tool identity and accidentally compare rendered strings. Tool identity should stay structural until a legacy string boundary actually requires the flattened spelling.

## What

- Removes `ToolName::display()` and relies on the existing `Display` impl for messages and errors.
- Adds structural ordering for `ToolName` and uses it for sorting/deduping deferred tools.
- Carries `ToolName` through tool/sandbox plumbing, flattening only at legacy boundaries such as hook payloads, telemetry tags, and Responses tool names.
- Updates MCP normalization tests to assert `ToolName` structure instead of rendered strings.
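A minimal sketch of why structural ordering is safer than comparing rendered strings. It assumes a hypothetical `ToolName` made of an optional namespace plus a name; the real type's fields and its flattened separator may differ (the `__` separator below is a placeholder).

```rust
use std::fmt;

/// Hypothetical shape: an optional namespace (e.g. an MCP server) plus a tool name.
/// Deriving `Ord` compares fields in declaration order, so sorting is structural.
#[derive(Clone, Debug, PartialEq, Eq, PartialOrd, Ord)]
struct ToolName {
    namespace: Option<String>,
    name: String,
}

impl fmt::Display for ToolName {
    /// Flattened spelling for messages and legacy string boundaries only.
    fn fmt(&self, f: &mut fmt::Formatter<'_>) -> fmt::Result {
        match &self.namespace {
            Some(ns) => write!(f, "{ns}__{}", self.name),
            None => write!(f, "{}", self.name),
        }
    }
}

/// Sorts and dedupes deferred tools by structure, never by rendered strings.
fn sort_dedup(mut tools: Vec<ToolName>) -> Vec<ToolName> {
    tools.sort();
    tools.dedup();
    tools
}
```

Two distinct identities can flatten to the same string, for example namespace `a` with tool `b` versus an un-namespaced tool literally named `a__b`. Comparing `Display` output would merge them, while the derived ordering keeps them apart.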

## Testing

- `cargo test -p codex-mcp test_normalize_tools`

- `cargo test -p codex-core unavailable_tool`

- `just fix -p codex-protocol`

- `just fix -p codex-mcp`

- `just fix -p codex-core`

## Why

Codex assisted-code attribution needs a client-side accepted-code source that does not upload raw code. This adds a hash-only analytics event derived from the turn diff so downstream attribution can compare accepted Codex lines against commit or PR diffs.

## What Changed

- Parse accepted/effective added lines from the final turn diff and emit `codex_accepted_line_fingerprints` analytics.
- Hash repo, path, and normalized line content before upload; raw code and raw diffs are not included in the event.
- Chunk large fingerprint payloads and send accepted-line fingerprint events in isolated requests while preserving normal batching for other analytics events.
- Canonicalize Git remote URLs before repo hashing so SSH/HTTPS GitHub remotes join to the same repo hash.
- Add parser coverage for unified diff hunk lines that look like `+++` or `---` file headers.
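A minimal sketch of hunk-aware added-line parsing, assuming git-style unified diffs. It tracks the remaining line counts from each `@@` header so that an added line whose content begins with `++` (shown in the diff as `+++...`) is not mistaken for a file header. The function names are hypothetical, and normalization and hashing are omitted.

```rust
/// Parses old/new line counts from a hunk header body such as
/// "-1,2 +1,3 @@ fn main()". A missing count defaults to 1, per unified diff format.
fn hunk_counts(header: &str) -> (usize, usize) {
    let mut parts = header.split_whitespace();
    let count = |range: Option<&str>, sign: char| -> usize {
        match range.and_then(|r| r.strip_prefix(sign)) {
            Some(r) => match r.split_once(',') {
                Some((_, n)) => n.parse::<usize>().unwrap_or(0),
                None => 1,
            },
            None => 0,
        }
    };
    let old = count(parts.next(), '-');
    let new = count(parts.next(), '+');
    (old, new)
}

/// Returns `(path, added_line)` pairs from a git-style unified diff.
fn added_lines(diff: &str) -> Vec<(String, String)> {
    let mut out = Vec::new();
    let mut path = String::new();
    let (mut old_left, mut new_left) = (0usize, 0usize);
    for line in diff.lines() {
        if old_left > 0 || new_left > 0 {
            // Inside a hunk body: `+++`/`---` prefixes here are content, not headers.
            if let Some(added) = line.strip_prefix('+') {
                out.push((path.clone(), added.to_string()));
                new_left = new_left.saturating_sub(1);
            } else if line.starts_with('-') {
                old_left = old_left.saturating_sub(1);
            } else if !line.starts_with('\\') {
                // Context lines count against both sides; "\ No newline" counts against neither.
                old_left = old_left.saturating_sub(1);
                new_left = new_left.saturating_sub(1);
            }
        } else if let Some(target) = line.strip_prefix("+++ ") {
            // File header: strip git's `b/` prefix to get the post-image path.
            path = target.strip_prefix("b/").unwrap_or(target).to_string();
        } else if let Some(header) = line.strip_prefix("@@ ") {
            (old_left, new_left) = hunk_counts(header);
        }
    }
    out
}
```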

## Verification

- `cargo test -p codex-analytics`

- `cargo test -p codex-git-utils canonicalize_git_remote_url`

- `just fix -p codex-analytics`

- `just bazel-lock-check`

- `git diff --check`

## Why

The earlier PRs add stdio transport support and the config-backed environment provider, but the feature remains inert until normal Codex entrypoints construct `EnvironmentManager` with enough context to discover `CODEX_HOME/environments.toml`. This final stack PR activates the provider while preserving the legacy `CODEX_EXEC_SERVER_URL` fallback when no environments file exists.

**Stack position:** this is PR 5 of 5. It is the product wiring PR that activates the configured environment provider added in PR 4.

## What Changed

- Thread `codex_home` into `EnvironmentManagerArgs`.
- Change `EnvironmentManager::new(...)` to load the provider from `CODEX_HOME`.
- Preserve legacy behavior by falling back to `DefaultEnvironmentProvider::from_env()` when `environments.toml` is absent.
- Make `environments.toml`-backed managers start new threads with all configured environments, default first, while keeping the legacy env-var path single-default.
- Update the app-server, TUI, exec, MCP server, connector, prompt-debug, and thread-manager-sample callsites to pass `codex_home` and handle provider-loading errors.
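A minimal sketch of the selection and startup rules listed above, using hypothetical names (`ProviderSource`, `select_provider`, `startup_environment_ids`). The real logic lives in `EnvironmentManager`, which also parses the file and surfaces provider-loading errors.

```rust
use std::path::Path;

/// Hypothetical marker for which provider backs the manager.
#[derive(Debug, PartialEq)]
enum ProviderSource {
    /// `CODEX_HOME/environments.toml` exists.
    EnvironmentsToml,
    /// Legacy fallback, analogous to `DefaultEnvironmentProvider::from_env()`.
    LegacyEnv,
}

fn select_provider(codex_home: &Path) -> ProviderSource {
    if codex_home.join("environments.toml").is_file() {
        ProviderSource::EnvironmentsToml
    } else {
        ProviderSource::LegacyEnv
    }
}

/// TOML-backed managers start threads with every configured environment, default
/// first; the legacy path stays single-default even if `local` is also addressable.
fn startup_environment_ids(
    source: &ProviderSource,
    default_id: &str,
    configured: &[&str],
) -> Vec<String> {
    match source {
        ProviderSource::LegacyEnv => vec![default_id.to_string()],
        ProviderSource::EnvironmentsToml => std::iter::once(default_id)
            .chain(configured.iter().copied().filter(|id| *id != default_id))
            .map(str::to_string)
            .collect(),
    }
}
```

Keying the multi-environment behavior on the provider kind, not on how many environments exist, matches the self-review note below about not expanding the legacy `CODEX_EXEC_SERVER_URL` path.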

## Self-Review Notes

- The multi-environment startup path is intentionally tied to the `environments.toml` provider. Using `>1` configured environment as the only signal would also expand the legacy `CODEX_EXEC_SERVER_URL` provider because it keeps `local` addressable alongside `remote`.
- The startup environment list is still derived inside `EnvironmentManager`; the provider only says whether its snapshot should start new threads with all configured environments.
- The thread-manager sample was updated to pass the current `ThreadManager::new(...)` installation id argument so the stack compiles under Bazel.

## Stack

- 1. https://github.com/openai/codex/pull/20663 - Add stdio exec-server listener
- 2. https://github.com/openai/codex/pull/20664 - Add stdio exec-server client transport
- 3. https://github.com/openai/codex/pull/20665 - Make environment providers own default selection
- 4. https://github.com/openai/codex/pull/20666 - Add CODEX_HOME environments TOML provider
- **5. This PR:** https://github.com/openai/codex/pull/20667 - Load configured environments from CODEX_HOME

Split from original draft: https://github.com/openai/codex/pull/20508

## Validation

- `just fmt`
- `git diff --check`
- `bazel build --config=remote --strategy=remote --remote_download_toplevel //codex-rs/thread-manager-sample:codex-thread-manager-sample`
- `bazel test --config=remote --strategy=remote --remote_download_toplevel //codex-rs/exec-server:exec-server-unit-tests`
- `bazel test --config=remote --strategy=remote --remote_download_toplevel --test_sharding_strategy=disabled --test_arg=default_thread_environment_selections_use_manager_default_id //codex-rs/core:core-unit-tests`
- `bazel test --config=remote --strategy=remote --remote_download_toplevel --test_sharding_strategy=disabled --test_arg=start_thread_uses_all_default_environments_from_codex_home //codex-rs/core:core-unit-tests`

## Documentation

This activates `CODEX_HOME/environments.toml`; user-facing documentation should be added before this stack is treated as a documented public workflow.

---------

Co-authored-by: Codex <noreply@openai.com>

* Pass the installation ID to the enrollments server for storage, for deduping/grouping multiple app-servers per installation.
* Pass the installation ID in `remoteControl/status/changed` events.

## Why

`/fast` was wired as a one-off slash command even though model metadata now exposes service tiers as catalog data. That meant adding another tier, such as a slower/cheaper tier, would require more hardcoded TUI plumbing instead of letting the model catalog drive the available commands.

This change makes service-tier commands data-driven: each advertised `service_tiers` entry becomes a `/name` command using the catalog description, while the request path sends the tier `id` only when the selected model supports it.

## What Changed

- Removed the hardcoded `/fast` slash-command variant and introduced dynamic service-tier command items in the composer and command popup.
- Added toggle behavior for service-tier commands: invoking `/name` selects that tier, and invoking it again clears the selection.
- Preserved the existing Fast-mode keybinding/status affordances by resolving the current model tier whose name is `fast`, while still sending the tier request value such as `priority`.
- Persisted service-tier selections as raw request strings so non-fast tiers can round-trip through config.
- Updated the Bedrock catalog entry to advertise fast support through `service_tiers` with `id: "priority"` and `name: "fast"`.
- Added defensive filtering in core so unsupported selected service tiers are omitted from `/responses` requests.
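A minimal sketch of the data-driven command table, the toggle, and the defensive request filter. It assumes a hypothetical `ServiceTier` struct mirroring a catalog entry's `id`, `name`, and `description`; the TUI and core types are not shown in this PR.

```rust
/// Hypothetical mirror of one `service_tiers` catalog entry.
#[derive(Clone, Debug)]
struct ServiceTier {
    id: String,          // request value, e.g. "priority"
    name: String,        // command name, e.g. "fast"
    description: String, // shown in the command popup
}

/// Each advertised tier becomes a `/name` command with the catalog description.
fn tier_commands(tiers: &[ServiceTier]) -> Vec<(String, String)> {
    tiers
        .iter()
        .map(|t| (format!("/{}", t.name), t.description.clone()))
        .collect()
}

/// Invoking `/name` selects that tier's `id`; invoking it again clears the selection.
fn toggle_tier(current: Option<String>, invoked: &ServiceTier) -> Option<String> {
    match current {
        Some(id) if id == invoked.id => None,
        _ => Some(invoked.id.clone()),
    }
}

/// Defensive filter: only send a selected tier that the current model advertises.
fn request_tier(selected: Option<&str>, model_tiers: &[ServiceTier]) -> Option<String> {
    selected
        .filter(|id| model_tiers.iter().any(|t| t.id == *id))
        .map(str::to_string)
}
```

The selection stores the request `id`, not the command `name`, which is what lets `/fast` keep sending `priority`.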

## Validation

- Added/updated coverage for dynamic service-tier slash command lookup, popup descriptions, composer dispatch, TUI fast toggling, and unsupported-tier omission in core request construction.
- Local tests were not run, per request.

---------

Co-authored-by: Codex <noreply@openai.com>

Since https://github.com/openai/codex/pull/21255, `rust-ci-full` has been failing due to a missing `bwrap`.

```
thread 'main' panicked at linux-sandbox/src/launcher.rs:43:13:
bubblewrap is unavailable: no system bwrap was found on PATH and no bundled codex-resources/bwrap binary was found next to the Codex executable
```

Since the happy path is now to use the system binary, let's ensure that's installed.

Commit 8d51826631 was necessary for the `bwrap` executable to be discoverable when the working directory is `/`.

I ran `rust-ci-full` at https://github.com/openai/codex/actions/runs/25528074506

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

1. Removes the broad `DARWIN_USER_CACHE_DIR` write rule from the macOS Seatbelt network policy.
2. Removes the now unused policy parameter plumbing for that cache path.
3. Adds sandboxing coverage that keeps `com.apple.trustd.agent` for TLS while rejecting the cache write rule.

## Why

This closes the exact cache poisoning boundary. The earlier `gh` TLS issue is now covered by trustd access, so the cache write is no longer needed.

## Validation

1. Rust formatting passed.
2. The sandboxing crate tests passed.
3. Local macOS Seatbelt repro with patched policy passed. `gh api` returned `21442` without the cache write rule.

Provider initialization installs process-global OTEL state, so invalid trace metadata needs to fail before setup begins.

Use the same span attribute validator as config loading when traces are exported so provider startup enforces the config contract without duplicating validation logic.

## Why

Some consumers expect conventional hyphenated HTTP headers. Codex already sends the session and thread IDs on outbound Responses requests, but it only uses the underscore spellings today, which makes those IDs harder to consume in systems that normalize or reject underscore header names.

Full context here: https://openai.slack.com/archives/C08KCGLSPSQ/p1778248578422369

## What changed

- `build_session_headers` now emits both `session_id` and `session-id` when a session ID is present.
- It does the same for `thread_id` and `thread-id`.
- Added regression coverage in `codex-api/tests/clients.rs` and `core/tests/suite/client.rs` so both the lower-level client tests and the end-to-end request tests assert the two header spellings are present.
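A minimal sketch of the dual spelling. It assumes a simplified signature that returns name/value pairs; the real `build_session_headers` signature is not shown in this PR and likely builds an HTTP header map instead.

```rust
/// Emits each ID under both its legacy underscore name and its hyphenated name.
/// Hypothetical signature; the real function builds a different header type.
fn build_session_headers(
    session_id: Option<&str>,
    thread_id: Option<&str>,
) -> Vec<(&'static str, String)> {
    let mut headers = Vec::new();
    if let Some(id) = session_id {
        headers.push(("session_id", id.to_string()));
        headers.push(("session-id", id.to_string()));
    }
    if let Some(id) = thread_id {
        headers.push(("thread_id", id.to_string()));
        headers.push(("thread-id", id.to_string()));
    }
    headers
}
```

Keeping the underscore spelling alongside the new one means existing consumers see no change, while systems that drop underscore headers still receive the hyphenated copy.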

## Test plan

- Added header assertions in `codex-api/tests/clients.rs`.
- Added request-header assertions in `core/tests/suite/client.rs` for both the `/v1/responses` and `/api/codex/responses` request paths.

Fixes #21665.

## Why

The TUI status line is the right place for compact, glanceable session state. The original request was motivated by the need to see the active permission posture without opening `/permissions` or `/status`, especially when switching between safer and more permissive modes during a session.

This PR intentionally separates `permissions` from `approval-mode` instead of combining them into one status-line item. They answer related but different questions: `permissions` describes the active sandbox/profile shape, while `approval-mode` describes how command approvals are handled. Keeping them separate makes each item independently configurable and avoids long combined labels in an already space-constrained status line.

The tradeoff is that users who want the full permission posture in the status line need to opt into both items. In exchange, users can show only the sandbox/profile label, only the approval behavior, or both, and named user-defined profiles remain concise. Non-standard permission shapes are rendered as `Custom permissions` rather than trying to squeeze detailed profile contents into the status line; `/status` remains the fuller explanatory surface.

## What changed

- Added a configurable `permissions` status-line item.
- Added a separate `approval-mode` status-line item, with `approval` as an alias.
- Render standard permission states compactly as `Read Only`, `Workspace`, or `Full Access`.
- Preserve user-defined permission profile names directly in the status line.
- Render unnamed non-standard permission shapes as `Custom permissions`.
- Refresh status surfaces when `/permissions` updates the permission profile, approval policy, or approval reviewer.
- Updated status-line preview snapshot coverage for the new items.

## Verification

- `cargo test -p codex-tui status_permissions_non_default_workspace_write_uses_workspace_label`
- `cargo test -p codex-tui permissions_selection_emits_history_cell_when_selection_changes`
- `cargo insta pending-snapshots --manifest-path tui/Cargo.toml`

## Why

The configurable `/statusline` and terminal title can display session token usage. That display was using the raw total token count, which includes cached input tokens, so it significantly overstated the token usage compared with the blended token count shown elsewhere (in `/status` and tracked in goals). This inconsistency resulted in user confusion. We don't want to report cached tokens because we don't charge for them and they are somewhat of an implementation detail that users shouldn't care about.

## What changed

- Use `TokenUsage::blended_total()` for the `used-tokens` status surface item so cached input is excluded.
- Add a brief comment to `tokens_in_context_window()` clarifying that it returns raw `total_tokens`, whose meaning depends on whether the caller has last-turn or accumulated usage.

## Summary

- enable `apply_patch_freeform` by default in the feature registry

## Why

- make the freeform `apply_patch` tool available by default when model metadata does not explicitly opt into another mode

## Validation

- `just fmt`

- did not run tests

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

`service_tier` in `config.toml` and profile config was still modeled as an enum, which blocked newer or experimental service tier IDs even though the runtime paths already carry string IDs.

This change makes the TOML-facing config accept string service tier IDs directly while keeping the legacy `fast` alias behavior by normalizing it to the request value `priority`.

## What Changed

- change the TOML-facing `service_tier` fields in global and profile config to `Option<String>`
- keep config-load normalization so legacy `fast` still resolves to `priority`
- persist resolved service tier strings directly in config locks so arbitrary IDs round-trip cleanly
- regenerate the config schema and add config coverage for arbitrary string IDs plus legacy `fast` normalization
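A minimal sketch of the config-load normalization, assuming a hypothetical `normalize_service_tier` helper. Only the legacy `fast` alias is rewritten; any other string passes through unchanged, which is what lets new tier IDs round-trip.

```rust
/// Maps the legacy `fast` alias to its request value and passes other IDs through.
/// Hypothetical helper; the real normalization lives in config loading.
fn normalize_service_tier(raw: Option<String>) -> Option<String> {
    raw.map(|tier| if tier == "fast" { "priority".to_string() } else { tier })
}
```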

## Verification

- added config tests for arbitrary string service tiers and legacy `fast` normalization

- ran `just write-config-schema`

- CI

---------

Co-authored-by: Codex <noreply@openai.com>

## What changed

- rewrote `shutdown_flushes_pending_metadata_irrelevant_updated_at` to seed an existing pending `updated_at` touch directly in `RolloutWriterState`
- kept the shutdown test focused on draining a pending touch, leaving the separate coalescing test to cover timing-based deferral

## Why

The old test had to complete several async operations inside the 50 ms test-only coalescing window. When that sequence took longer, the second flush updated `threads.updated_at` immediately and the pre-shutdown equality assertion failed, even though shutdown behavior was correct.

## Validation

- `cargo test -p codex-rollout shutdown_flushes_pending_metadata_irrelevant_updated_at`
- `cargo test -p codex-rollout`

Co-authored-by: Codex <noreply@openai.com>

## Summary

API-key-auth remote compaction requests should not inherit `service_tier` from normal `/responses` turns. This path needs to match API auth expectations, while ChatGPT-auth remote compaction should keep reusing the shared request fields that still apply there.

This change keeps the decision inline in `codex-rs/core/src/compact_remote.rs` only. Under API key auth, the classic remote `/responses/compact` path now omits `service_tier`; under ChatGPT auth, it keeps reusing the configured tier.

`codex-rs/core/src/compact_remote_v2.rs` is unchanged. The remote compaction parity coverage and snapshots were updated to assert the API-key omission and preserve the ChatGPT-auth behavior.
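A minimal sketch of the inline decision for the classic `/responses/compact` path. It assumes a hypothetical `AuthMode` enum and helper; the real check sits inside `codex-rs/core/src/compact_remote.rs` and reads the session's actual auth state.

```rust
/// Hypothetical auth modes relevant to the decision.
#[derive(Clone, Copy, Debug, PartialEq)]
enum AuthMode {
    ApiKey,
    ChatGpt,
}

/// API-key auth drops the tier; ChatGPT auth keeps reusing the configured one.
fn compaction_service_tier(auth: AuthMode, configured: Option<&str>) -> Option<&str> {
    match auth {
        AuthMode::ApiKey => None,
        AuthMode::ChatGpt => configured,
    }
}
```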

## Testing

- Updated remote compaction parity coverage in `codex-rs/core/tests/suite/compact_remote.rs` and the corresponding snapshots.

## Why

`codex exec` still included the stale `research preview` label in its human-readable startup banner, which makes the CLI look older and less current than it is.

Fixes #21444.

## What Changed

Removed the hard-coded ` (research preview)` suffix from the `OpenAI Codex v<version>` startup banner in `codex-rs/exec/src/event_processor_with_human_output.rs`.

## Validation

Local validation was not required for this one-line startup banner text cleanup.

Requires discoverability on `plugin/share/updateTargets` so the server can manage workspace link access consistently, including auto-adding the workspace principal for `UNLISTED`.

Also rejects `LISTED` on share creation and blocks client-supplied workspace principals while preserving response parsing for `LISTED`.

## Summary

Codex's Amazon Bedrock provider signs Mantle requests with SigV4 using credentials resolved by the AWS SDK. That worked for standard AWS profiles and environment credentials, but AWS CLI console-login profiles created by `aws login` require the SDK's `credentials-login` feature to resolve `login_session` credentials.

This change enables that credential provider so Bedrock can use AWS console-login credentials through the existing provider-owned AWS auth path.

While testing the console-login path, we also hit a Mantle-specific SigV4 regression from the new split between `session_id` and `thread_id`. Mantle does not preserve legacy OpenAI compatibility headers that use `snake_case` before SigV4 verification, so signing those headers can make the server reconstruct a different canonical request. The Bedrock auth path now removes that header class before signing, keeping preserved hyphenated Codex/AWS headers such as `x-codex-turn-metadata` signed normally.

## Changes

- Enable `aws-config`'s `credentials-login` feature in `codex-rs/aws-auth`.
- Add a compile-time regression test for `aws_config::login::LoginCredentialsProvider`.
- Strip `snake_case` compatibility headers from Bedrock Mantle SigV4 requests before signing.
- Expand the Bedrock auth regression test to cover `session_id`, `thread_id`, and future headers of the same shape.
- Refresh Cargo and Bazel lockfiles for the added `aws-sdk-signin` dependency.
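A minimal sketch of the stripping step. It assumes headers are held as name/value pairs before signing and that a header belongs to the stripped class when its name contains an underscore; the real Bedrock auth path operates on its own request type, and `strip_compat_headers` is a hypothetical name.

```rust
/// `snake_case` compatibility headers (e.g. `session_id`) are not preserved by
/// Mantle before SigV4 verification, so they must not reach the signer.
fn is_snake_case_compat_header(name: &str) -> bool {
    name.contains('_')
}

/// Returns the headers that stay on the request and are signed normally.
fn strip_compat_headers(headers: Vec<(String, String)>) -> Vec<(String, String)> {
    headers
        .into_iter()
        .filter(|(name, _)| !is_snake_case_compat_header(name))
        .collect()
}
```

Matching on the header's shape instead of a fixed list is what lets the regression test cover future headers of the same shape.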

## Tests

- tested with `aws login` locally and verified that it works as intended.

## Summary

- Remove `perCwdExtraUserRoots` / `SkillsListExtraRootsForCwd` from the `skills/list` app-server API.
- Drop Rust app-server and `codex-core-skills` extra-root plumbing so skill scans are keyed by the normal cwd/user/plugin roots only.
- Regenerate app-server schemas and update docs/tests that only existed for the removed extra-roots behavior.

## Validation

- `just write-app-server-schema`

- `just fmt`

- `cargo test -p codex-app-server-protocol`

- `cargo test -p codex-core-skills`

- `just fix -p codex-app-server-protocol`

- `just fix -p codex-core-skills`

- `just fix -p codex-app-server`

- `just fix -p codex-tui`

## Notes

- `cargo test -p codex-app-server --test all skills_list` ran the edited skills-list cases, but the full filtered run ended on the existing `skills_changed_notification_is_emitted_after_skill_change` timeout after a websocket `401`.
- `cargo test -p codex-tui --lib` compiled the changed TUI callers, then failed two unrelated status permission tests because the local `/etc/codex/requirements.toml` forbids `DangerFullAccess`.
- A source-truth check found that the OpenAI monorepo still has generated/app-server-kit mirror references to the removed field; those should be cleaned up when generated app-server types are synced or in a companion OpenAI cleanup.

## Why

We want terminal tool review analytics, but the reducer should not stamp review timing from its own wall clock.

This PR plumbs review timing through the real protocol and app-server seams so downstream analytics can consume the emitter's timestamps directly. Guardian reviews keep their enriched `started_at` / `completed_at` analytics fields by deriving those legacy second-based values from the same protocol-native millisecond lifecycle timestamps, rather than sampling a separate analytics clock.

## What changed

- add `started_at_ms` to user approval request payloads
- add `started_at_ms` / `completed_at_ms` to guardian review notifications
- preserve Guardian review `started_at` / `completed_at` enrichment from the protocol-native timing source
- stamp typed `ServerResponse` analytics facts with app-server-observed `completed_at_ms`
- thread the new timing fields through core, protocol, app-server, TUI, and analytics fixtures

## Verification

- `cargo test -p codex-app-server outgoing_message --manifest-path codex-rs/Cargo.toml`
- `cargo test -p codex-app-server-protocol guardian --manifest-path codex-rs/Cargo.toml`
- `cargo test -p codex-tui guardian --manifest-path codex-rs/Cargo.toml`
- `cargo test -p codex-analytics analytics_client_tests --manifest-path codex-rs/Cargo.toml`

---

[//]: # (BEGIN SAPLING FOOTER)

Stack created with [Sapling](https://sapling-scm.com). Best reviewed with [ReviewStack](https://reviewstack.dev/openai/codex/pull/21434).

* #18748

* __->__ #21434

* #18747

* #17090

* #17089

* #20514

## Why

Remote compaction v2 consumes a normal Responses stream, but that compaction-specific stream consumer dropped the `response.completed` id. As a result, the `responses_websocket_response_processed` lifecycle notification was emitted for normal turn sampling but not after a v2 remote compaction response was fully processed.

## What changed

- Return the completed response id alongside the v2 `context_compaction` output item.
- After v2 compacted history is installed, send `response.processed` through the same websocket session when the feature is enabled.
- Add websocket regression coverage for a remote compaction v2 request followed by `response.processed`.

## Verification

- `cargo test -p codex-core --test all responses_websocket_sends_response_processed_after_remote_compaction_v2 -- --nocapture`
- `cargo test -p codex-core collect_context_compaction_output_accepts_additional_output_items -- --nocapture`

## Why

After stdio transports and provider-owned defaults exist, Codex needs a config-backed provider that can describe more than the single legacy `CODEX_EXEC_SERVER_URL` remote. This PR adds that provider without activating it in product entrypoints yet, keeping parser/validation review separate from runtime wiring.

**Stack position:** this is PR 4 of 5. It builds on PR 3's provider/default model and adds the `environments.toml` provider used by PR 5.

## What Changed

- Add `environment_toml.rs` as the TOML-specific home for parsing, validation, and provider construction.
- Keep the TOML schema/provider structs private; the public constructor added here is `EnvironmentManager::from_codex_home(...)`.
- Add `TomlEnvironmentProvider`, including validation for:
  - reserved ids such as `local` and `none`
  - duplicate ids
  - unknown explicit defaults
  - empty programs or URLs
  - exactly one of `url` or `program` per configured environment
- Support websocket environments with `url = "ws://..."` / `wss://...`.
- Support stdio-command environments with `program = "..."`.
- Add helpers to load `environments.toml` from `CODEX_HOME`, but do not wire entrypoints to call them yet.
- Add the `toml` dependency for parsing.
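A minimal sketch of the validation rules listed above, applied to already-parsed entries. `EnvironmentEntry`, `ValidationError`, and `validate` are hypothetical names, TOML parsing is omitted, the reserved list holds only the two ids named above, and the real private structs in `environment_toml.rs` may be shaped differently.

```rust
use std::collections::HashSet;

/// Hypothetical parsed form of one configured environment.
struct EnvironmentEntry {
    id: String,
    url: Option<String>,
    program: Option<String>,
}

#[derive(Debug, PartialEq)]
enum ValidationError {
    ReservedId(String),
    DuplicateId(String),
    UnknownDefault(String),
    Empty(String),
    TransportNotExactlyOne(String),
}

const RESERVED_IDS: &[&str] = &["local", "none"];

fn validate(entries: &[EnvironmentEntry], default: Option<&str>) -> Result<(), ValidationError> {
    let mut seen: HashSet<&str> = HashSet::new();
    for entry in entries {
        if RESERVED_IDS.contains(&entry.id.as_str()) {
            return Err(ValidationError::ReservedId(entry.id.clone()));
        }
        if !seen.insert(entry.id.as_str()) {
            return Err(ValidationError::DuplicateId(entry.id.clone()));
        }
        match (&entry.url, &entry.program) {
            (Some(value), None) | (None, Some(value)) => {
                if value.trim().is_empty() {
                    return Err(ValidationError::Empty(entry.id.clone()));
                }
            }
            // Both or neither: each environment needs exactly one transport.
            _ => return Err(ValidationError::TransportNotExactlyOne(entry.id.clone())),
        }
    }
    // Whether a built-in id such as `local` may be named as the default is not modeled here.
    if let Some(id) = default {
        if !seen.contains(id) {
            return Err(ValidationError::UnknownDefault(id.to_string()));
        }
    }
    Ok(())
}
```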

## Stack

- 1. https://github.com/openai/codex/pull/20663 - Add stdio exec-server listener
- 2. https://github.com/openai/codex/pull/20664 - Add stdio exec-server client transport
- 3. https://github.com/openai/codex/pull/20665 - Make environment providers own default selection
- **4. This PR:** https://github.com/openai/codex/pull/20666 - Add CODEX_HOME environments TOML provider
- 5. https://github.com/openai/codex/pull/20667 - Load configured environments from CODEX_HOME

Split from original draft: https://github.com/openai/codex/pull/20508

## Validation

Not run locally; this was split out of the original draft stack.

## Documentation

This introduces the config shape for `environments.toml`; user-facing documentation should be added before this stack is treated as a documented public workflow.

---------

Co-authored-by: Codex <noreply@openai.com>

Route `view_image` through selected environments so image reads use the selected turn environment and cwd, with schema exposure limited to multi-environment toolsets.

Co-authored-by: Codex <noreply@openai.com>

## Why

The next PR in this stack introduces configured environments, where the provider knows both which environments exist and which one should be selected by default. The existing manager derived the default internally by checking for the legacy `remote` and `local` ids, and it treated "remote" as equivalent to "has a websocket URL." That does not work cleanly for stdio-command remotes because they are remote environments without an `exec_server_url`.

**Stack position:** this is PR 3 of 5. It is the environment-model bridge between PR 2's transport enum and PR 4's TOML provider.

## What Changed

- Add `DefaultEnvironmentSelection` to the `EnvironmentProvider` contract:
  - `Derived` preserves the old `remote`-then-`local` fallback behavior.
  - `Environment(id)` lets a provider explicitly select a configured default.
  - `Disabled` lets a provider intentionally expose no default environment.
- Move the legacy `CODEX_EXEC_SERVER_URL=none` default-disabling behavior into `DefaultEnvironmentProvider`.
- Make `EnvironmentManager` validate explicit provider defaults and return an error if the selected id is missing.
- Track `remote_transport` separately from `exec_server_url` so stdio-command environments are still recognized as remote.
- Add `Environment::remote_stdio_shell_command(...)` for the TOML provider added in the next PR.
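A minimal sketch of how a manager might resolve the three variants, assuming providers expose a plain list of environment ids. `resolve_default` and its `String` error are hypothetical; the real `EnvironmentManager` works with full `Environment` values and its own error type.

```rust
/// Mirrors the three variants described above.
#[derive(Debug, Clone, PartialEq)]
enum DefaultEnvironmentSelection {
    Derived,
    Environment(String),
    Disabled,
}

/// Returns the default id, `None` when disabled or when the derived fallback finds
/// nothing, or an error when an explicit default is not configured.
fn resolve_default(
    selection: &DefaultEnvironmentSelection,
    ids: &[&str],
) -> Result<Option<String>, String> {
    match selection {
        // Legacy behavior: prefer `remote`, then fall back to `local`.
        DefaultEnvironmentSelection::Derived => Ok(["remote", "local"]
            .iter()
            .copied()
            .find(|candidate| ids.contains(candidate))
            .map(str::to_string)),
        DefaultEnvironmentSelection::Environment(id) => {
            if ids.contains(&id.as_str()) {
                Ok(Some(id.clone()))
            } else {
                Err(format!("default environment `{id}` is not configured"))
            }
        }
        DefaultEnvironmentSelection::Disabled => Ok(None),
    }
}
```

Only the explicit variant can fail; `Derived` and `Disabled` always resolve, which keeps the legacy path's behavior unchanged.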

## Stack

- 1. https://github.com/openai/codex/pull/20663 - Add stdio exec-server listener
- 2. https://github.com/openai/codex/pull/20664 - Add stdio exec-server client transport
- **3. This PR:** https://github.com/openai/codex/pull/20665 - Make environment providers own default selection
- 4. https://github.com/openai/codex/pull/20666 - Add CODEX_HOME environments TOML provider
- 5. https://github.com/openai/codex/pull/20667 - Load configured environments from CODEX_HOME

Split from original draft: https://github.com/openai/codex/pull/20508

## Validation

Not run locally; this was split out of the original draft stack.

---------

Co-authored-by: Codex <noreply@openai.com>

Remove the remote thread-store backend and checked-in protobuf artifacts. We've moved these into another crate that links against this one.

Also remove the config settings for thread store backend selection, since we'll instead pass an instantiated thread store into the core-api crate's main entrypoint.

## Why

Configured environments need to connect to exec-server instances that are not necessarily already listening on a websocket URL. A command-backed stdio transport lets Codex start an exec-server process, speak JSON-RPC over its stdio streams, and clean up that child process with the client lifetime.

**Stack position:** this is PR 2 of 5. It builds on the server-side stdio listener from PR 1 and provides the client transport used by later environment/config PRs.

## What Changed

- Add `ExecServerTransport` variants for websocket URLs and stdio shell commands.
- Add stdio command connection support for `ExecServerClient`.
- Move websocket/stdio transport setup into `client_transport.rs` so `client.rs` stays focused on shared JSON-RPC client, session, HTTP, and notification behavior.
- Tie stdio child process cleanup to the JSON-RPC connection lifetime with an RAII lifetime guard.
- Keep existing websocket environment behavior by adapting URL-backed remotes to `ExecServerTransport::WebSocketUrl`.

## Stack

- 1. https://github.com/openai/codex/pull/20663 - Add stdio exec-server listener
- **2. This PR:** https://github.com/openai/codex/pull/20664 - Add stdio exec-server client transport
- 3. https://github.com/openai/codex/pull/20665 - Make environment providers own default selection
- 4. https://github.com/openai/codex/pull/20666 - Add CODEX_HOME environments TOML provider
- 5. https://github.com/openai/codex/pull/20667 - Load configured environments from CODEX_HOME

Split from original draft: https://github.com/openai/codex/pull/20508

## Validation

Not run locally; this was split out of the original draft stack and then refactored to separate transport setup from the base client.

---------

Co-authored-by: Codex <noreply@openai.com>

Add Codex config for static trace span attributes and structured W3C tracestate field upserts. The config flows through `OtelSettings` so callers can attach trace metadata without touching every span call site.

Apply span attributes with an SDK span processor so every exported trace span carries the configured metadata. Model tracestate as nested member fields so configured keys can be upserted while unrelated propagated state in the same member is preserved.

Validate configured tracestate before installing provider-global state, including header-unsafe values the SDK does not reject by itself. This keeps Codex from propagating malformed trace context from config.
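A minimal sketch of a structured field upsert inside one tracestate member, plus a value check. It assumes the member value uses the `key:value;key:value` sub-field layout that OpenTelemetry uses for its own `ot` member. `upsert_member_field` and `is_valid_member_value` are hypothetical helpers, and the check covers only the W3C member-value character rules, not key syntax or list-size limits.

```rust
/// Upserts `field` in a `key:value;key:value` member value, keeping other fields
/// verbatim and in their original order.
fn upsert_member_field(member_value: &str, field: &str, new_value: &str) -> String {
    let replacement = format!("{field}:{new_value}");
    let mut parts: Vec<String> = member_value
        .split(';')
        .filter(|part| !part.is_empty())
        .map(str::to_string)
        .collect();
    // Match on the key before the first `:`; unrelated fields are left untouched.
    match parts.iter().position(|part| part.split(':').next() == Some(field)) {
        Some(index) => parts[index] = replacement,
        None => parts.push(replacement),
    }
    parts.join(";")
}

/// W3C member value rule: 1 to 256 printable ASCII characters (0x20..=0x7E),
/// excluding `,` and `=`, and not ending in a space. Control characters such as
/// CR/LF, which are unsafe in a header, fail the range check.
fn is_valid_member_value(value: &str) -> bool {
    let bytes = value.as_bytes();
    !bytes.is_empty()
        && bytes.len() <= 256
        && bytes
            .iter()
            .all(|&b| (0x20..=0x7e).contains(&b) && b != b',' && b != b'=')
        && bytes.last() != Some(&b' ')
}
```

Running the check on the upserted result, before any provider-global state is installed, is one way to honor the ordering described in the paragraph above.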

Update the config schema, public docs, and OTLP loopback coverage for

config parsing, span export, propagation, and invalid-header rejection.
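
A standard-library sketch of the tracestate validation and field upsert described above. The key and value rules follow the W3C Trace Context grammar (multi-tenant `tenant@system` keys omitted), and a modified member moves to the front as that spec requires. The `field:value;...` encoding inside a member and the name `upsert_member_field` are hypothetical assumptions for illustration; this is not the OpenTelemetry SDK API or Codex's actual config types.

```rust
/// Simple W3C tracestate key: a lowercase letter followed by up to 255 chars
/// from [a-z0-9_-*/]. Multi-tenant keys are omitted in this sketch.
fn is_valid_key(key: &str) -> bool {
    let bytes = key.as_bytes();
    !bytes.is_empty()
        && bytes.len() <= 256
        && bytes[0].is_ascii_lowercase()
        && bytes.iter().all(|&b| {
            b.is_ascii_lowercase() || b.is_ascii_digit() || matches!(b, b'_' | b'-' | b'*' | b'/')
        })
}

/// W3C tracestate value: 1 to 256 printable ASCII chars other than ',' and
/// '=', not ending in a space. Header-unsafe bytes such as '\n' fail this check.
fn is_valid_value(value: &str) -> bool {
    let bytes = value.as_bytes();
    !bytes.is_empty()
        && bytes.len() <= 256
        && bytes.iter().all(|&b| (b' '..=b'~').contains(&b) && b != b',' && b != b'=')
        && bytes[bytes.len() - 1] != b' '
}

/// Upserts `field:value` inside `member`, keeping the member's other fields
/// and all other members. HYPOTHETICAL nested encoding: `field:value` pairs joined by ';'.
fn upsert_member_field(tracestate: &str, member: &str, field: &str, value: &str) -> Result<String, String> {
    let is_delim = |c: char| c == ':' || c == ';';
    if !is_valid_key(member) {
        return Err(format!("invalid tracestate key: {member:?}"));
    }
    if field.is_empty() || field.contains(is_delim) || value.contains(is_delim) {
        return Err(format!("field {field:?} or value {value:?} breaks the nested encoding"));
    }
    // Parse list-members in order, skipping empty entries.
    let mut members: Vec<(String, String)> = Vec::new();
    for entry in tracestate.split(',').map(str::trim).filter(|e| !e.is_empty()) {
        let (k, v) = entry
            .split_once('=')
            .ok_or_else(|| format!("malformed list-member: {entry:?}"))?;
        members.push((k.to_string(), v.to_string()));
    }
    // Take the target member out; it is re-inserted at the front below.
    let index = members.iter().position(|(k, _)| k == member);
    let existing = index.map(|i| members.remove(i).1).unwrap_or_default();
    let mut fields: Vec<String> = existing
        .split(';')
        .filter(|f| !f.is_empty())
        .map(str::to_string)
        .collect();
    let updated = format!("{field}:{value}");
    match fields.iter_mut().find(|f| f.split_once(':').map(|(name, _)| name) == Some(field)) {
        Some(slot) => *slot = updated,
        None => fields.push(updated),
    }
    let new_value = fields.join(";");
    // Validate the final value so header-unsafe input never reaches propagation.
    if !is_valid_value(&new_value) {
        return Err(format!("header-unsafe tracestate value: {new_value:?}"));
    }
    // W3C Trace Context: a modified list-member moves to the leftmost position.
    members.insert(0, (member.to_string(), new_value));
    Ok(members
        .iter()
        .map(|(k, v)| format!("{k}={v}"))
        .collect::<Vec<_>>()
        .join(","))
}
```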

## Why

The goal of this PR is to align on app-server and `ThreadStore` API updates for paginating through large threads.

#### app-server

##### `thread/turns/list`

- Updates `thread/turns/list` to support `itemsView?: "notLoaded" | "summary" | "full" | null`, defaulting to `summary`.
- Implements the current `thread/turns/list` behavior over the existing persisted rollout-history fallback:
  - `notLoaded` returns turn envelopes with empty `items`.
  - `summary` returns the first user message and final assistant message when available.
  - `full` preserves the existing full item behavior.

Note that this method still uses the naive approach of loading the entire rollout file, and returns just the filtered slice of the data. Real pagination will come later by leveraging SQLite.
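
The three `itemsView` projections can be sketched as a pure function over one turn's items. `ItemsView` mirrors the protocol values and their `summary` default, but `TurnItem`, its variants, and `project_items` are hypothetical simplifications of the real item types.

```rust
/// Mirrors the protocol's `itemsView` values; `None` on the wire maps to the default.
#[derive(Debug, Clone, Copy, PartialEq, Eq, Default)]
pub enum ItemsView {
    NotLoaded,
    #[default]
    Summary,
    Full,
}

/// Hypothetical, simplified turn item.
#[derive(Debug, Clone, PartialEq, Eq)]
pub enum TurnItem {
    UserMessage(String),
    AssistantMessage(String),
    ToolCall(String),
}

/// Projects a turn's items according to the requested view.
pub fn project_items(items: &[TurnItem], view: Option<ItemsView>) -> Vec<TurnItem> {
    match view.unwrap_or_default() {
        // Envelope only: no items are returned.
        ItemsView::NotLoaded => Vec::new(),
        // Existing behavior: every item.
        ItemsView::Full => items.to_vec(),
        // First user message plus final assistant message, each only if present.
        ItemsView::Summary => {
            let first_user = items.iter().find(|i| matches!(i, TurnItem::UserMessage(_)));
            let last_assistant = items
                .iter()
                .rev()
                .find(|i| matches!(i, TurnItem::AssistantMessage(_)));
            first_user.into_iter().chain(last_assistant).cloned().collect()
        }
    }
}
```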

##### `thread/turns/items/list`

- Adds the experimental `thread/turns/items/list` protocol, schema, dispatcher, and processor stub. The app-server currently returns JSON-RPC `-32601` with `thread/turns/items/list is not supported yet`.

#### ThreadStore

- Adds `ThreadStore` contract types and stubbed methods for listing thread turns and listing items within a turn.
- Adds a typed `StoredTurnStatus` and `StoredTurnError` to avoid baking app-server API enums or lossy string status values into the store-facing turn contract.

This also sketches the storage abstraction we expect to need once turns are indexed/stored. In particular, `notLoaded` is useful only if ThreadStore can eventually list turn metadata without loading every persisted item for each turn.
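
A sketch of the store-facing contract, assuming a synchronous trait for brevity (the real `ThreadStore` methods may be async and take richer parameters). `StoredTurnStatus`, `StoredTurnError`, and `ThreadStoreError::Unsupported` are named in this PR; their variants and fields shown here, plus `list_turns`, `list_turn_items`, and `UnindexedStore`, are illustrative assumptions.

```rust
/// Store-facing status, independent of app-server API enums (variants assumed).
#[derive(Debug, Clone, PartialEq, Eq)]
pub enum StoredTurnStatus {
    InProgress,
    Completed,
    Interrupted,
    Failed,
}

/// Typed error detail instead of a lossy status string (fields assumed).
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct StoredTurnError {
    pub message: String,
}

/// Turn metadata only: listing these need not load every persisted item,
/// which is what would make `notLoaded` cheap.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct StoredTurn {
    pub turn_id: String,
    pub status: StoredTurnStatus,
    pub error: Option<StoredTurnError>,
}

#[derive(Debug, PartialEq, Eq)]
pub enum ThreadStoreError {
    Unsupported,
}

/// Default methods report `Unsupported`, so existing stores keep compiling
/// until they can actually index turns.
pub trait ThreadStore {
    fn list_turns(&self, _thread_id: &str) -> Result<Vec<StoredTurn>, ThreadStoreError> {
        Err(ThreadStoreError::Unsupported)
    }

    fn list_turn_items(&self, _thread_id: &str, _turn_id: &str) -> Result<Vec<String>, ThreadStoreError> {
        Err(ThreadStoreError::Unsupported)
    }
}

/// Hypothetical store that has not implemented turn listing yet.
pub struct UnindexedStore;

impl ThreadStore for UnindexedStore {}
```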

## Validation

- Added/updated protocol serialization coverage for the new request and response shapes.
- Added app-server integration coverage for `thread/turns/list` default summary behavior and all three `itemsView` modes.
- Added app-server integration coverage that `thread/turns/items/list` returns the expected unsupported JSON-RPC error when experimental APIs are enabled.
- Added thread-store coverage that the default trait methods return `ThreadStoreError::Unsupported`.

No developers.openai.com documentation update is needed for this internal experimental app-server API surface.

## Summary

`cargo test` runs both standard Rust tests and doctests. It turns out that doctest discovery is fairly slow, and it's a cost you pay even for crates that don't include any doctests.

This PR disables doctests with `doctest = false` for crates that lack any doctests.

For the collection of crates below, this speeds up test execution by more than 4x.

E.g., before this PR:

```
Benchmark 1: cargo test -p codex-utils-absolute-path -p codex-utils-cache -p codex-utils-cli -p codex-utils-home-dir -p codex-utils-output-truncation -p codex-utils-path -p codex-utils-string -p codex-utils-template -p codex-utils-elapsed -p codex-utils-json-to-toml
Time (mean ± σ): 1.849 s ± 4.455 s [User: 0.752 s, System: 1.367 s]
Range (min … max): 0.418 s … 14.529 s 10 runs
```

And after:

```
Benchmark 1: cargo test -p codex-utils-absolute-path -p codex-utils-cache -p codex-utils-cli -p codex-utils-home-dir -p codex-utils-output-truncation -p codex-utils-path -p codex-utils-string -p codex-utils-template -p codex-utils-elapsed -p codex-utils-json-to-toml
Time (mean ± σ): 428.6 ms ± 6.9 ms [User: 187.7 ms, System: 219.7 ms]
Range (min … max): 418.0 ms … 436.8 ms 10 runs
```

For a single crate, with >2x speedup, before:

```
Benchmark 1: cargo test -p codex-utils-string
Time (mean ± σ): 491.1 ms ± 9.0 ms [User: 229.8 ms, System: 234.9 ms]
Range (min … max): 480.9 ms … 512.0 ms 10 runs
```

And after:

```
Benchmark 1: cargo test -p codex-utils-string
Time (mean ± σ): 213.9 ms ± 4.3 ms [User: 112.8 ms, System: 84.0 ms]
Range (min … max): 206.8 ms … 221.0 ms 13 runs
```

Co-authored-by: Codex <noreply@openai.com>

A clean release build takes ~18m and an incremental build takes ~12m. This is far too slow to iterate on performance-related changes, and the build time is dominated by LTO.

This pull request adds a `profiling` profile for Cargo which takes ~13m clean and ~6m incremental; the primary change is that LTO is disabled. This matches a profile used in uv and follows the great work at https://github.com/astral-sh/uv/pull/5955; there's a bit of commentary there about the trade-offs this implies.

We've found that this does not inhibit the ability to benchmark accurately: measurements with LTO disabled are generally consistent with the results with LTO enabled, and rebuilding after making a change is much faster (~2x).

This is motivated by my interest in improving Codex TUI performance, which is blocked by the tragically slow builds right now.

I tested incremental build times by making a no-op change to the `codex-cli` crate.

## Why

Codex desktop copies bundled Windows binaries out of `WindowsApps` into a LocalAppData runtime cache before launching `codex.exe`. Sandboxed commands may then need to execute helpers from that cache, but the sandbox user group may not have read/execute access to the runtime bin directory.

This makes the Windows sandbox refresh path repair that access directly so the packaged desktop runtime remains usable from sandboxed sessions.

## What changed

- Added `setup_runtime_bin` to locate `%LOCALAPPDATA%\OpenAI\Codex\bin`, matching the desktop bundled-binaries destination path, with the same `USERPROFILE\AppData\Local` fallback shape.
- During refresh setup, check whether `CodexSandboxUsers` already has read/execute access to the runtime bin directory.
- If access is missing, grant `CodexSandboxUsers` `OI/CI/RX` inheritance on that directory.
- If the runtime bin directory does not exist, no-op cleanly.
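
The directory lookup and the "missing directory means no-op" rule can be sketched with the standard library alone. `runtime_bin_dir` and `existing_runtime_bin_dir` are hypothetical stand-ins for `setup_runtime_bin`, and the environment lookup is passed in as a closure so the logic can be exercised without touching the real process environment. The ACL check and grant are Windows API calls and are not sketched here.

```rust
use std::path::{Path, PathBuf};

/// Hypothetical helper: `%LOCALAPPDATA%\OpenAI\Codex\bin`, falling back to
/// `%USERPROFILE%\AppData\Local` when LOCALAPPDATA is unset or empty.
fn runtime_bin_dir(env: impl Fn(&str) -> Option<String>) -> Option<PathBuf> {
    let local_app_data = env("LOCALAPPDATA")
        .filter(|v| !v.is_empty())
        .map(PathBuf::from)
        .or_else(|| {
            env("USERPROFILE")
                .filter(|v| !v.is_empty())
                .map(|home| Path::new(&home).join("AppData").join("Local"))
        })?;
    Some(local_app_data.join("OpenAI").join("Codex").join("bin"))
}

/// Hypothetical helper: returns the directory only if it already exists, so
/// the caller can no-op without creating anything.
fn existing_runtime_bin_dir(env: impl Fn(&str) -> Option<String>) -> Option<PathBuf> {
    runtime_bin_dir(env).filter(|dir| dir.is_dir())
}
```

In real code the lookup would be `|key| std::env::var(key).ok()`.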

## Verification

- `cargo build -p codex-windows-sandbox --bin codex-windows-sandbox-setup`
- `cargo test -p codex-windows-sandbox --bin codex-windows-sandbox-setup`
- Manual Windows ACL exercise against the installed packaged runtime bin:
  - existing inherited `CodexSandboxUsers:(I)(OI)(CI)(RX)` no-ops without changing SDDL
  - after disabling inheritance and removing the group ACE, setup adds `CodexSandboxUsers:(OI)(CI)(RX)`
  - with `LOCALAPPDATA` pointed at a fake location without `OpenAI\Codex\bin`, setup exits successfully and does not create the directory
  - restored the real runtime bin with inherited ACLs and confirmed the final SDDL matched the baseline exactly

- Route ThreadManager rollout-path resume/fork through ThreadStore history reads.
- Add in-memory store coverage proving path-addressed reads are used.

This isn't strictly necessary for the ThreadStore migration, since these ThreadManager methods _only_ work for path-based lookups, but I'm trying to migrate all the rollout recorder callsites to use the ThreadStore where possible for consistency.

## Summary

Fix `getConversationSummary` so thread-id summaries work for stored threads that do not have a local rollout path, such as remote thread stores.

The root cause was that `summary_from_stored_thread` returned `None` when `StoredThread.rollout_path` was absent, and `get_thread_summary_response_inner` treated that as an internal error. This made conversation-id lookups depend on a local-only field even though the thread store can address the thread by id.
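
One possible shape for such a fix, sketched with hypothetical field names: carry the rollout path through as an `Option` instead of treating it as a precondition. This illustrates that assumption only and is not necessarily how `summary_from_stored_thread` is written in this PR.

```rust
use std::path::PathBuf;

/// Hypothetical, simplified stored thread.
#[derive(Debug, Clone)]
pub struct StoredThread {
    pub thread_id: String,
    pub preview: String,
    /// Only set for local, rollout-backed stores.
    pub rollout_path: Option<PathBuf>,
}

/// Hypothetical, simplified summary shape.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct ConversationSummary {
    pub conversation_id: String,
    pub preview: String,
    pub path: Option<PathBuf>,
}

/// Always produces a summary; the path is passed through when present, so a
/// thread addressed only by id (e.g. in a remote store) no longer becomes an error.
pub fn summary_from_stored_thread(thread: &StoredThread) -> ConversationSummary {
    ConversationSummary {
        conversation_id: thread.thread_id.clone(),
        preview: thread.preview.clone(),
        path: thread.rollout_path.clone(),
    }
}
```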

- Route live thread renames through `ThreadStore` metadata updates.
- Read resumed thread names from store metadata with legacy local fallback preserved in the store.

## Why

This is the next stacked step after deleting the tool-handler kind indirection. Specs should come from the registered handlers themselves so registry construction has a single source of truth for handler behavior and exposed tool definitions.

## What changed

- Added `ToolHandler::spec()` plus handler-provided parallel/code-mode metadata, and made `ToolRegistryBuilder::register_handler` automatically collect specs from registered handlers.
- Moved builtin tool spec construction into the corresponding handlers and their adjacent `_spec` modules, including shell, unified exec, apply patch, view image, request plugin install, tool search, MCP resource, goals, planning, permissions, agent jobs, and multi-agent tools.
- Reworked configurable handlers to receive their tool-building options through constructors, with non-optional handler options where the handler is always spec-backed. Shell fallback handlers keep an explicit no-spec mode because they are also registered as hidden dispatch aliases.
- Kept `CodeModeExecuteHandler` on the explicit configured wrapper so the code-mode exec spec can still be built from the nested registry.
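
The single-source-of-truth registration can be sketched as follows. `ToolHandler::spec()` and `ToolRegistryBuilder::register_handler` are named in this PR; `ToolSpec`'s fields, `supports_parallel_tool_calls`, `ShellHandler::new(expose_spec)`, and `ViewImageHandler` are hypothetical simplifications.

```rust
/// Hypothetical, simplified model-visible tool definition.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct ToolSpec {
    pub name: String,
}

pub trait ToolHandler {
    /// The spec the model sees, or `None` for hidden dispatch aliases.
    fn spec(&self) -> Option<ToolSpec>;

    /// Hypothetical handler-provided metadata; defaults to serial execution.
    fn supports_parallel_tool_calls(&self) -> bool {
        false
    }
}

/// Hypothetical configurable handler that receives its options via the constructor.
pub struct ShellHandler {
    expose_spec: bool,
}

impl ShellHandler {
    pub fn new(expose_spec: bool) -> Self {
        Self { expose_spec }
    }
}

impl ToolHandler for ShellHandler {
    fn spec(&self) -> Option<ToolSpec> {
        // No-spec mode: registered for dispatch only.
        self.expose_spec.then(|| ToolSpec { name: "shell".to_string() })
    }
}

pub struct ViewImageHandler;

impl ToolHandler for ViewImageHandler {
    fn spec(&self) -> Option<ToolSpec> {
        Some(ToolSpec { name: "view_image".to_string() })
    }

    fn supports_parallel_tool_calls(&self) -> bool {
        true
    }
}

#[derive(Default)]
pub struct ToolRegistryBuilder {
    handlers: Vec<(String, Box<dyn ToolHandler>)>,
    specs: Vec<ToolSpec>,
}

impl ToolRegistryBuilder {
    /// Registering a handler also collects its spec, so specs cannot drift
    /// from the handlers that serve them.
    pub fn register_handler(&mut self, name: &str, handler: Box<dyn ToolHandler>) {
        if let Some(spec) = handler.spec() {
            self.specs.push(spec);
        }
        self.handlers.push((name.to_string(), handler));
    }

    pub fn specs(&self) -> &[ToolSpec] {
        &self.specs
    }

    pub fn handler_count(&self) -> usize {
        self.handlers.len()
    }
}
```

The `ShellHandler::new(false)` case models the shell fallback aliases: it is dispatchable but contributes no model-visible spec.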

## Verification

- `cargo check -p codex-core`

- `cargo test -p codex-core tools::spec_plan::tests`

- `cargo test -p codex-core tools::spec::tests`

- `cargo test -p codex-core tools::handlers::multi_agents_spec::tests`

- `RUST_MIN_STACK=16777216 cargo test -p codex-core tools::handlers::multi_agents::tests`

- `cargo test -p codex-core tools::handlers::apply_patch::tests`

- `cargo test -p codex-core tools::handlers::unified_exec::tests`

- `just fix -p codex-core`

- `git diff --check`

## Why

App-server config writes were leaving existing threads partially stale. After a config mutation, the app-server told each live thread to run `Op::ReloadUserConfig`, but that path only re-read the user `config.toml` layer. Settings that came from the app-server's materialized config snapshot did not propagate to existing threads until restart.

This change also prevents a filesystem access from `core` for CCA.

## What changed

- add `CodexThread::refresh_runtime_config()` and `Session::refresh_runtime_config()` so the app-server can push a freshly rebuilt config snapshot into a live thread
- rebuild the latest config with each thread's `cwd` after config mutations, then refresh the thread from that snapshot instead of asking it to reload only `config.toml`
- keep session-static settings unchanged during refresh, while updating runtime-refreshable state such as the config layer stack, `tool_suggest`, and derived hook/plugin/skill state
- keep `reload_user_config_layer()` as the file-backed fallback for legacy local reload flows, but route the shared refresh logic through the new runtime refresh path
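
A sketch of the session-static versus runtime-refreshable split, assuming hypothetical `Config` fields and a `hook:`-prefixed string as a placeholder for derived hook state. It only illustrates the invariant the tests below check: refreshable fields and derived state follow the new snapshot, while `model` and `notify` do not.

```rust
/// Hypothetical, simplified config snapshot.
#[derive(Debug, Clone, PartialEq)]
pub struct Config {
    pub model: String,
    pub notify: Option<Vec<String>>,
    pub tool_suggest: bool,
    pub hook_commands: Vec<String>,
}

pub struct Session {
    config: Config,
    /// Derived state, rebuilt whenever refreshable config changes.
    hooks: Vec<String>,
}

impl Session {
    pub fn new(config: Config) -> Self {
        let hooks = build_hooks(&config);
        Self { config, hooks }
    }

    /// Applies a freshly rebuilt snapshot: runtime-refreshable fields are
    /// copied and derived state is rebuilt; session-static fields such as
    /// `model` and `notify` keep their original values.
    pub fn refresh_runtime_config(&mut self, fresh: &Config) {
        self.config.tool_suggest = fresh.tool_suggest;
        self.config.hook_commands = fresh.hook_commands.clone();
        self.hooks = build_hooks(&self.config);
    }
}

/// Hypothetical stand-in for deriving hook state from config.
fn build_hooks(config: &Config) -> Vec<String> {
    config.hook_commands.iter().map(|cmd| format!("hook:{cmd}")).collect()
}
```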

## Testing

- add a session test that verifies `refresh_runtime_config()` rebuilds hooks from refreshed config
- add a session test that verifies runtime-refreshable fields update while session-static settings like `model` and `notify` stay unchanged

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

`codex --enable remote_control app-server --listen off` is the current way to start a headless, remote-controllable app-server, but it is hard to remember and exposes implementation details.

This adds `codex remote-control` as a friendly top-level wrapper for that flow. The command starts a foreground app-server with local transports disabled and enables `remote_control` only for that invocation.

## Changes

- Add a visible `codex remote-control` CLI subcommand.
- Launch app-server with `AppServerTransport::Off`.
- Append `features.remote_control=true` after root feature toggles so the explicit command wins over `--disable remote_control`.
- Reject root `--remote` / `--remote-auth-token-env`, matching other non-TUI subcommands.
- Add tests for parsing, launch defaults, override ordering, and remote flag rejection.
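
The override ordering can be sketched as last-write-wins resolution over an ordered list. The helper names and the `(key, bool)` representation are hypothetical; the real CLI passes these through its own config-override machinery.

```rust
use std::collections::HashMap;

/// Hypothetical helper: root feature toggles first, then the forced
/// `features.remote_control=true`, so the explicit command wins.
pub fn remote_control_overrides(root_toggles: &[(&str, bool)]) -> Vec<(String, bool)> {
    let mut overrides: Vec<(String, bool)> = root_toggles
        .iter()
        .map(|(key, enabled)| (key.to_string(), *enabled))
        .collect();
    overrides.push(("features.remote_control".to_string(), true));
    overrides
}

/// Last-write-wins: collecting into a HashMap inserts entries in order, so a
/// later value for the same key replaces an earlier one.
pub fn resolve_overrides(overrides: &[(String, bool)]) -> HashMap<String, bool> {
    overrides.iter().cloned().collect()
}

/// Hypothetical check mirroring the rejection of root remote flags.
pub fn reject_remote_flags(remote: Option<&str>, remote_auth_token_env: Option<&str>) -> Result<(), String> {
    if remote.is_some() || remote_auth_token_env.is_some() {
        return Err("`--remote` and `--remote-auth-token-env` are not supported by `codex remote-control`".to_string());
    }
    Ok(())
}
```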

## Verification

- `cargo test -p codex-cli`

- `just fix -p codex-cli`

## Summary

This PR removes the synthetic `HashMap<String, ToolInfo>` keys from MCP tool discovery. `McpConnectionManager::list_all_tools()` now returns normalized `Vec<ToolInfo>`, and downstream code derives identity from `ToolInfo::canonical_tool_name()`.

The motivation is to keep model-visible tool identity on `ToolName`/`ToolInfo` instead of parallel string map keys, so future namespace changes do not have to preserve otherwise-unused lookup keys.

## Changes

- Rename the MCP normalization path from `qualify_tools` to `normalize_tools_for_model` and return tool values directly.
- Flow MCP tool lists through connectors, plugin injection, router/spec building, code mode, and tool search as vectors/slices.
- Keep direct/deferred subtraction local to `mcp_tool_exposure`, using `ToolName` values.
- Update tests to compare `ToolName` instances where MCP identity matters.
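
A sketch of identity derived from `ToolInfo` rather than from map keys. `ToolInfo::canonical_tool_name()` and `ToolName` are named in this PR, but the fields shown (`server_name`, `tool_name`, `namespace`, `name`) and the `deferred_tools` helper are assumptions for illustration.

```rust
use std::collections::HashSet;

/// Hypothetical layout of the model-visible identity.
#[derive(Debug, Clone, PartialEq, Eq, Hash)]
pub struct ToolName {
    pub namespace: String,
    pub name: String,
}

/// Hypothetical, simplified tool info.
#[derive(Debug, Clone)]
pub struct ToolInfo {
    pub server_name: String,
    pub tool_name: String,
}

impl ToolInfo {
    /// Identity comes from the tool itself, not from a parallel map key.
    pub fn canonical_tool_name(&self) -> ToolName {
        ToolName {
            namespace: self.server_name.clone(),
            name: self.tool_name.clone(),
        }
    }
}

/// Hypothetical direct/deferred subtraction on `ToolName` values: every tool
/// not exposed directly is treated as deferred.
pub fn deferred_tools<'a>(all: &'a [ToolInfo], direct: &[ToolName]) -> Vec<&'a ToolInfo> {
    let direct: HashSet<&ToolName> = direct.iter().collect();
    all.iter()
        .filter(|tool| !direct.contains(&tool.canonical_tool_name()))
        .collect()
}
```

Because the comparison uses full `ToolName` values, two `search` tools on different servers stay distinct.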

## Validation

- `cargo test -p codex-mcp test_normalize_tools`

- `cargo test -p codex-core mcp_tool_exposure`

- `cargo test -p codex-core direct_mcp_tools_register_namespaced_handlers`

- `cargo test -p codex-core search_tool_registers_namespaced_mcp_tool_aliases`

- `just fix -p codex-mcp`

- `just fix -p codex-core`

## Why

We want to emit terminal review analytics for tool-related approval flows, but the event contract needs to exist before the reducer can publish anything.

This PR is the schema-only slice for the Codex review event family.

## What changed

- add the `ReviewEvent` analytics envelope in `codex-rs/analytics/src/events.rs`
- define the review subject kind, reviewer, trigger, terminal status, and post-review resolution enums
- define the review event payload with thread, turn, item, lineage, tool, and timing fields that the emitter stack will populate
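
A sketch of the payload shape, using hypothetical variant, type, and field names. The PR defines these enum families and the `ReviewEvent` envelope, but the exact members live in `codex-rs/analytics/src/events.rs` and may differ. The `duration_ms` helper is likewise illustrative.

```rust
// Hypothetical enum families; every variant name is an assumption.
#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ReviewSubjectKind { CommandExecution, FileChange, McpToolCall }

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum Reviewer { User, AutoReviewer }

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ReviewTrigger { ApprovalPolicy, SandboxEscalation }

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum ReviewTerminalStatus { Approved, Denied, Aborted, TimedOut }

#[derive(Debug, Clone, Copy, PartialEq, Eq)]
pub enum PostReviewResolution { Executed, Skipped }

/// Hypothetical payload with thread, turn, item, lineage, tool, and timing fields.
#[derive(Debug, Clone, PartialEq, Eq)]
pub struct ReviewEvent {
    pub thread_id: String,
    pub turn_id: String,
    pub item_id: String,
    /// Lineage: the parent thread for sub-agent threads, if any.
    pub parent_thread_id: Option<String>,
    pub tool_name: String,
    pub subject_kind: ReviewSubjectKind,
    pub reviewer: Reviewer,
    pub trigger: ReviewTrigger,
    pub status: ReviewTerminalStatus,
    /// What happened after the review, if known.
    pub resolution: Option<PostReviewResolution>,
    pub started_at_ms: u64,
    pub completed_at_ms: u64,
}

impl ReviewEvent {
    /// Review latency; saturates to 0 if timestamps are inverted.
    pub fn duration_ms(&self) -> u64 {
        self.completed_at_ms.saturating_sub(self.started_at_ms)
    }
}
```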

## Verification

- stacked verification in dependent PRs: `cargo test -p codex-analytics analytics_client_tests --manifest-path codex-rs/Cargo.toml`

---

[//]: # (BEGIN SAPLING FOOTER)

Stack created with [Sapling](https://sapling-scm.com). Best reviewed with [ReviewStack](https://reviewstack.dev/openai/codex/pull/18747).

* #18748

* #21434

* __->__ #18747

* #17090

* #17089

* #20514