Fetch the published per-target checksum asset alongside the musl archive and binding so Cargo musl jobs keep working without re-expanding the MODULE.bazel checksum manifest contract.

Add the `crdtp_binding.cc` source and CRDTP headers to the 147 Bazel V8 binding target so Windows GNU builds provide the symbols required by the `v8::crdtp` Rust APIs.

Add a regression test that exercises CRDTP JSON conversion and dispatchable message parsing.

Keep non-Windows rusty-v8 consumers on the resolved 147.4.0 source-built graph. Avoid mixing LLVM's default libc++ headers into rusty-v8's custom libc++ build, propagate that configuration to external C++ deps, and export the musl-specific libc++ define through the shared header target.

Also make checksum validation follow the remaining prebuilt asset version while the source-build migration is in progress.

Co-authored-by: Codex <noreply@openai.com>

Produce explicitly named sandbox release pairs alongside the current compatibility artifacts, and validate staged sandbox outputs before publication across the supported artifact targets.

Co-authored-by: Codex <noreply@openai.com>

## Summary

- Populate `plugin/list` interface metadata for installed Git-sourced marketplace plugins from the active cached plugin bundle.

- Preserve marketplace category precedence so list behavior matches `plugin/read`.

- Keep existing fallback behavior when the cache or manifest is missing or invalid.

## Test Plan

- `cd codex-rs && just fmt`

- `cd codex-rs && cargo test -p codex-core-plugins list_marketplaces_installed_git_source_reads_metadata_from_cache_without_cloning`

- `cd codex-rs && cargo test -p codex-app-server plugin_list_returns_installed_git_source_interface_from_cache`

- `cd codex-rs && just fix -p codex-core-plugins`

- `cd codex-rs && just fix -p codex-app-server`

- `git diff --check`

Server-truth check: OpenAI monorepo app-server generated types already

expose `PluginSummary.interface`, and the webview consumes it for plugin

cards. This PR keeps the protocol/schema unchanged and fills the

existing field from the cached installed bundle for Git-backed

cross-repo plugins.

## Why

The TUI currently treats Markdown tables as ordinary wrapped text, which

makes table-heavy responses hard to read and brittle across narrow panes

and terminal resizes.

This change teaches the TUI to render Markdown tables responsively while

preserving the raw Markdown source needed to re-render streamed and

finalized transcript content after width changes. The goal is to keep

tables legible during streaming, after resize, and once a turn has

finished, without corrupting scrollback ordering.

## What Changed

- add table detection and responsive table rendering in the Markdown

renderer

- render standard tables with Unicode box-drawing borders when the pane

is wide enough

- add a vertical readability fallback for constrained or dense tables so

narrow panes still show each row clearly

- keep links and `<br>` content inside table cells instead of leaking

text outside the table

- avoid table normalization inside fenced or indented code blocks

- preserve raw streamed Markdown source and keep the active table as a

mutable tail until finalization

- consolidate finalized streamed content into source-backed transcript

cells so post-resize re-rendering stays correct

- add snapshot and targeted streaming/resize regression coverage for the

new table behavior
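
The choice between the boxed layout and the vertical fallback can be pictured as a width check. The following is only a minimal sketch under stated assumptions: `TableLayout`, `choose_layout`, and the border arithmetic are hypothetical names and rules invented for illustration, and the real renderer likely also considers cell wrapping and density.

```rust
/// Hypothetical layout decision for a Markdown table (illustration only).
#[derive(Debug, PartialEq)]
pub enum TableLayout {
    /// Unicode box-drawing borders around a regular grid.
    Boxed,
    /// One block per row, with each cell on its own line.
    Vertical,
}

/// Picks a layout from the natural width of each column and the pane width.
///
/// Assumption: each column costs one border character plus one space of
/// padding on both sides, and the table needs one closing border.
pub fn choose_layout(column_widths: &[usize], pane_width: usize) -> TableLayout {
    let chrome = column_widths.len() * 3 + 1;
    let needed = column_widths.iter().sum::<usize>() + chrome;
    if needed <= pane_width {
        TableLayout::Boxed
    } else {
        TableLayout::Vertical
    }
}
```

Because the decision depends only on the current pane width, re-running it after a resize against the preserved Markdown source yields the layout for the new width.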

## How to Test

1. Start Codex TUI from this branch.

2. Paste this exact prompt:

`This is a session to test codex, no need to do any thinking, just end different markdown tables, with columns exploring different markdown contents, like links, bold italic, code, etc. Make them different sizes, some 30+ rows, some not and intertwine them with some paragraphs with complex formatting as well.`

3. Confirm the response includes several Markdown tables mixed with

richly formatted paragraphs.

4. Confirm wide-enough tables render with box-drawing borders instead of

plain wrapped pipe text.

5. Resize the terminal narrower while the answer is still streaming and

confirm the in-progress table stays coherent instead of duplicating

headers or leaving broken scrollback behind.

6. Resize again after the turn finishes and confirm the finalized

transcript re-renders cleanly at the new width.

7. In a narrow pane, verify dense tables fall back to the vertical

per-row layout instead of producing unreadable wrapped columns.

8. Also verify pipe-heavy fenced code blocks still render as code, not

as tables.

Targeted tests:

- `cargo test -p codex-tui table_readability_fallback --no-fail-fast`

- `cargo test -p codex-tui markdown_render --no-fail-fast`

- `cargo test -p codex-tui streaming::controller --no-fail-fast`

- `cargo test -p codex-tui table_resize_lifecycle --no-fail-fast`

## Docs

No developer docs update appears necessary.

## Summary

The issue digest uses recent posts, comments, and reactions to decide

which issues deserve attention. A single active user could previously

raise an issue's apparent importance by commenting or reacting multiple

times in the window.

This changes `codex-issue-digest` so `user_interactions` counts unique

human GitHub users per issue across new issue posts, new comments, and

new reactions. Raw reaction/comment counts are still preserved for

detail output, and the skill guidance now describes `Interactions` as a

unique-human-user count.
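
The counting rule can be sketched as a set of distinct logins. This is a minimal illustration, not the skill's actual code (which may not be written in Rust): the `Interaction` struct, the `is_bot` flag, and the case-insensitive login comparison are assumptions.

```rust
use std::collections::HashSet;

/// One recent post, comment, or reaction on an issue (hypothetical shape).
pub struct Interaction<'a> {
    pub login: &'a str,
    pub is_bot: bool,
}

/// Counts distinct human logins, so repeated activity by one user counts once.
pub fn unique_human_users(interactions: &[Interaction<'_>]) -> usize {
    interactions
        .iter()
        .filter(|i| !i.is_bot)
        // Assumption: logins are compared case-insensitively.
        .map(|i| i.login.to_ascii_lowercase())
        .collect::<HashSet<_>>()
        .len()
}
```

Raw comment and reaction totals can still be reported next to this value, since the set only affects the `user_interactions` figure.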

## Why

On native Windows, running `/mcp` can leak `taskkill`'s normal

`SUCCESS:` messages into the Codex TUI while the temporary MCP inventory

process tree is being torn down. That corrupts the screen even though

MCP itself is working correctly.

Fixes #20845.

## What Changed

- Redirect the Windows-only MCP teardown `taskkill` subprocess to null

stdio so its console output cannot reach the TUI.
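
A minimal sketch of the redirection, using only `std::process`. The helper name `quiet_taskkill` and the exact `taskkill` flags (`/PID`, `/T`, `/F`) are assumptions for illustration; the source only states that the subprocess gets null stdio.

```rust
use std::process::{Command, Stdio};

/// Builds a `taskkill` invocation whose stdio is detached from the console.
/// Hypothetical helper: the flags shown are an assumption, not taken from the PR.
pub fn quiet_taskkill(pid: u32) -> Command {
    let pid_arg = pid.to_string();
    let mut cmd = Command::new("taskkill");
    cmd.args(["/PID", pid_arg.as_str(), "/T", "/F"])
        // Null stdio keeps `SUCCESS:` lines away from the TUI's terminal.
        .stdin(Stdio::null())
        .stdout(Stdio::null())
        .stderr(Stdio::null());
    cmd
}
```

Calling `.status()` on the returned command would run it; the sketch stops at construction so it can be inspected on any platform.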

## How to Test

1. On native Windows, configure a stdio MCP server, for example:

```powershell
codex mcp add sequential-thinking -- npx -y @modelcontextprotocol/server-sequential-thinking
```

2. With the latest released Codex CLI, start Codex and run `/mcp`.

3. Confirm the current behavior: `taskkill` `SUCCESS:` lines appear in

the TUI during the MCP refresh.

4. Switch to this branch's build, start Codex again, and run `/mcp`.

5. Confirm the MCP inventory still renders normally and the `taskkill`

lines no longer appear.

6. Repeat `/mcp` once more on this branch to verify the regression does

not recur on repeated inventory requests.

Targeted tests:

- `cargo test -p codex-rmcp-client`

- `cargo test -p codex-rmcp-client --test process_group_cleanup --quiet`

## Why

Inside tmux, `Shift+Enter` can still reach Codex as a plain `Enter` even

when tmux has extended keys enabled. In `csi-u` tmux panes, Codex needs

to request `modifyOtherKeys` mode 2 so tmux moves the pane from `VT10x`

into extended-key mode and preserves the Shift modifier. Without that

extra request, composer `Shift+Enter` submits the draft instead of

inserting a newline.

Fixes #21699.

## What Changed

- Detect tmux sessions and read the active `extended-keys-format`,

preferring the pane-local value before falling back to the global

option.

- Request `modifyOtherKeys` mode 2 for tmux panes using `csi-u` extended

keys, and reset it when restoring keyboard reporting.

- Add unit coverage for tmux detection, the format gate, and the emitted

`modifyOtherKeys` escape sequence.
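
The gate described above can be sketched as follows. The function names are hypothetical, the `TMUX` environment variable check is a common heuristic rather than the confirmed detection logic, and the reset sequence shown (level 0) is an assumption; `CSI > 4 ; 2 m` is the xterm sequence for `modifyOtherKeys` level 2.

```rust
/// xterm control sequence that sets `modifyOtherKeys` to level 2.
pub const ENABLE_MODIFY_OTHER_KEYS_2: &str = "\x1b[>4;2m";
/// Sequence that sets `modifyOtherKeys` back to level 0 (assumed reset form).
pub const RESET_MODIFY_OTHER_KEYS: &str = "\x1b[>4;0m";

/// tmux exports `TMUX` inside its panes; a non-empty value is treated as "in tmux".
pub fn in_tmux(tmux_env: Option<&str>) -> bool {
    tmux_env.map_or(false, |v| !v.is_empty())
}

/// Prefers the pane-local `extended-keys-format`, then the global value.
pub fn effective_format<'a>(pane: Option<&'a str>, global: Option<&'a str>) -> Option<&'a str> {
    pane.map(str::trim)
        .filter(|v| !v.is_empty())
        .or_else(|| global.map(str::trim).filter(|v| !v.is_empty()))
}

/// Returns the sequence to emit at keyboard setup, if any.
pub fn keyboard_setup_sequence(
    tmux_env: Option<&str>,
    pane: Option<&str>,
    global: Option<&str>,
) -> Option<&'static str> {
    if in_tmux(tmux_env) && effective_format(pane, global) == Some("csi-u") {
        Some(ENABLE_MODIFY_OTHER_KEYS_2)
    } else {
        None
    }
}
```

In this sketch a caller would read the option values from tmux itself (for example via `tmux show-options`) and pass them in, which keeps the gate easy to unit test.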

## How to Test

1. In tmux, configure:

```tmux

set-option -g extended-keys on

set-option -g extended-keys-format csi-u

```

2. Start Codex in a fresh tmux pane from this branch.

3. From another pane, confirm the Codex pane reports `mode=Ext 2`:

```bash
tmux list-panes -a -F '#{session_name}:#{window_index}.#{pane_index} mode=#{pane_key_mode} cmd=#{pane_current_command}'
```

4. Type a draft in the composer and press `Shift+Enter`; confirm it

inserts a newline instead of submitting.

5. Also confirm plain `Enter` still submits as before.

Targeted tests:

- `cargo test -p codex-tui`

## Notes

- Manual verification used both real `Shift+Enter` in iTerm2/tmux and

`tmux send-keys ... S-Enter` to confirm the tmux pane changes from

`VT10x` to `Ext 2` and preserves newline behavior.

- On this checkout, the broader `codex-tui` test run currently reaches

unrelated existing failures in `status::tests::*` plus a later stack

overflow in

`tests::fork_last_filters_latest_session_by_cwd_unless_show_all`.

### Motivation

- Normalize persisted service tier so selecting the request value

`priority` (or legacy `fast`) is stored as `fast` while preserving

unknown tier IDs and keeping request-time behavior unchanged.

### Description

- Update persistence logic in `codex-rs/core/src/config/edit.rs` so

`ConfigEdit::SetServiceTier` maps request values: `priority`/`fast` ->

`"fast"`, `flex` -> `"flex"`, and leaves unknown strings unchanged.

- Add unit tests in `codex-rs/core/src/config/edit_tests.rs` that verify

a `priority` selection is written to `config.toml` as `"fast"` and that

unknown tiers are preserved.

- Add a config load test in `codex-rs/core/src/config/config_tests.rs`

to ensure `service_tier = "priority"` still resolves to the `priority`

request value at load time.

- Add the required import `use codex_protocol::config_types::ServiceTier;` to the edited modules.
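
The persistence mapping described above is small enough to sketch directly. The function name `persisted_service_tier` is hypothetical and works on plain strings; the actual change goes through `ConfigEdit::SetServiceTier` and the `ServiceTier` type.

```rust
/// Maps a requested service tier to the string written to `config.toml`.
/// Hypothetical free function for illustration only.
pub fn persisted_service_tier(requested: &str) -> String {
    match requested {
        // The request value `priority` and the legacy `fast` are both stored as `fast`.
        "priority" | "fast" => "fast".to_string(),
        "flex" => "flex".to_string(),
        // Unknown tier IDs are preserved as-is.
        other => other.to_string(),
    }
}
```

Only the stored value is normalized here; loading `service_tier = "priority"` is described as still resolving to the `priority` request value.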

### Testing

- Ran `just fmt` and `just fix -p codex-core` to apply formatting and

lints and they completed successfully.

- Ran `cargo test -p codex-core --lib service_tier` (focused unit tests

for the change) and the tests passed.

- Ran `cargo test -p codex-protocol` and the protocol test suite passed.

- Note: an initial broader `cargo test -p codex-core service_tier`

invocation matched integration tests and produced unrelated

failures/hangs, so that run was interrupted and the focused `--lib`

unit-test invocation was used instead.

------

[Codex Task](https://chatgpt.com/codex/cloud/tasks/task_i_69ffc5a1262c8321af91b69c9845147f)

## Summary

`ChatWidget` has been carrying several independent domains in one large

state bag: transcript bookkeeping, turn lifecycle, queued input, status

surfaces, connectors, review mode, and protocol dispatch. That makes

otherwise-local changes hard to reason about because unrelated fields

and side effects live beside each other in `chatwidget.rs`.

This is the first cleanup PR in a larger decomposition effort. It does

not try to make `chatwidget.rs` small in one sweep; instead, it

establishes focused state boundaries that later handler, popup,

rendering, and effect-synchronization extractions can build on.

This PR keeps `ChatWidget` as the composition layer while moving focused

state into smaller `codex-tui` modules. The widget still owns effects

that touch the bottom pane, app events, command submission, redraw

scheduling, and terminal-title updates.

## Changes

- Add focused state modules under `codex-rs/tui/src/chatwidget/` for

input queues, turn lifecycle, transcript bookkeeping, status state,

connectors, review mode, and app-server protocol dispatch.

- Update `ChatWidget` to hold grouped state structs and route

input/lifecycle/status operations through those focused helpers.

- Move app-server notification dispatch into `chatwidget/protocol.rs`

while leaving feature handlers and side effects on `ChatWidget`.

- Replace the large manual `ChatWidget` test literal with the normal

constructor plus narrow test overrides, so future state moves do not

require every field to be restated in test setup.

- Update existing tests to access the new grouped state or narrower

helpers without changing snapshot behavior.

## Longer-term direction

Follow-up PRs can continue shrinking `chatwidget.rs` by moving behavior,

not just state, into focused modules:

- Extract input/submission flow, turn/stream handling, and tool-cell

lifecycles into domain modules that call the new state reducers.

- Move popup/settings builders and rendering helpers out of the main

widget file so `ChatWidget` stays focused on composition.

- Reduce direct `BottomPane` mutation by applying domain-specific sync

outputs at clearer boundaries.

## Why

Fixes #16688.

The TUI currently hydrates collab receiver metadata by awaiting

`thread/read` before each active-thread notification is rendered. During

large subagent fan-outs, the embedded app-server can be busy starting

agents and processing spawn work, so those synchronous metadata reads

queue behind the fan-out and block the TUI event loop. That makes the UI

appear frozen even though the underlying agent work can continue.

## What Changed

- Replaced eager `thread/read` metadata hydration on the active

notification path with local receiver-thread caching.

- Kept `ThreadStarted` and picker refreshes as the places that fill in

agent nickname/role metadata when it is available.

- Skipped caching receiver threads that are explicitly reported as

`NotFound`, avoiding live-looking ghost entries for failed stale-agent

calls.

- Added TUI tests covering both local receiver caching and `NotFound`

suppression.

## Verification

- `cargo test -p codex-tui collab_receiver_notification`

- `just fix -p codex-tui`

I also ran the full `cargo test -p codex-tui`; the new test passed, but

the full process later aborted with an unrelated stack overflow in

`tests::fork_last_filters_latest_session_by_cwd_unless_show_all`.

# Why

Hooks that need trust review were easy to miss, and the existing TUI

flow made users discover `/hooks` manually before they could decide

whether to inspect or trust them.

# What

- add a startup review prompt for new or changed hooks before normal

composer use

- add a top-level `t` shortcut in `/hooks` to trust every review-needed

hook at once

- make pending-review rows and helper copy use warning styling

## TUI

### Startup review interstitial

```text
Hooks need review
2 hooks are new or changed.
Hooks can run outside the sandbox after you trust them.

› 1. Review hooks
  2. Trust all and continue
  3. Continue without trusting (hooks won't run)
```

### Top-level `/hooks` page when review is needed

```text
Hooks
Lifecycle hooks from config and enabled plugins.

⚠ 1 hook needs review before it can run.

Event       Installed  Active  Review  Description
PreToolUse  1          0       1       Before a tool executes
...

Press t to trust all; enter to review hooks; esc to close
```

## Why

On light terminal backgrounds, selected rows in several TUI pickers were

rendered with the same bright cyan accent used on dark themes. Against

the light menu surface, that made the current selection hard to

distinguish at a glance.

<table><tr>
<td>
<p align="center">Before</p>
<img width="1109" height="864" alt="SCR-20260509-nmtz" src="https://github.com/user-attachments/assets/b31ce0d0-19c2-4bdd-a220-7acc77bd8e8e" />
</td>
<td>
<p align="center">After</p>
<img width="1164" height="844" alt="SCR-20260509-nmox" src="https://github.com/user-attachments/assets/7b3fede0-4739-4a9f-a979-cdbb7451841f" />
</td>
</tr></table>

## What changed

- Added a shared background-aware accent style for active/selected TUI

controls.

- Use a darker cyan-family accent on light backgrounds while preserving

the existing bright cyan accent on dark or unknown backgrounds.

- Reused that accent across shared picker rows and the custom

selection-like surfaces that had drifted separately: picker tabs, hooks

browsing, external-agent migration choices, and /keymap affordances.

- Added focused tests for the light/dark accent rule and rendered

selected-row styling.
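
One way to picture the background-aware rule is a brightness threshold. This is a sketch under assumptions: the `Accent` enum, the Rec. 601 luma weights, and the 0.5 cutoff are all hypothetical choices for illustration, and the PR does not state how the background is classified.

```rust
/// Hypothetical accent choices for selected rows.
#[derive(Debug, PartialEq, Clone, Copy)]
pub enum Accent {
    BrightCyan,
    DarkCyan,
}

/// Approximate brightness in 0.0..=1.0 using Rec. 601 luma weights.
pub fn luma(r: u8, g: u8, b: u8) -> f32 {
    (0.299 * r as f32 + 0.587 * g as f32 + 0.114 * b as f32) / 255.0
}

/// Light backgrounds get the darker accent; dark or unknown backgrounds
/// keep the existing bright cyan.
pub fn accent_for(background: Option<(u8, u8, u8)>) -> Accent {
    match background {
        Some((r, g, b)) if luma(r, g, b) > 0.5 => Accent::DarkCyan,
        _ => Accent::BrightCyan,
    }
}
```

Routing every picker through one function like `accent_for` is what keeps the separately drifted surfaces consistent.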

## How to Test

1. Start Codex in a terminal using a light background theme.

2. Type `/` to open the slash-command picker and move the selection

through a few rows.

3. Confirm that the selected row is visibly colored with strong contrast

instead of blending into the popup surface.

4. Open `/keymap` and confirm the active tab, selected rows, and picker

hint accents use the same light-theme accent treatment.

5. In a dark terminal theme, repeat the slash-picker check and confirm

the existing bright cyan selection styling is preserved.

Targeted tests:

- `cargo test -p codex-tui accent_style_uses_`

- `cargo test -p codex-tui selected_rows_use_the_shared_accent_style`

- `cargo test -p codex-tui selected_event_rows_use_the_shared_accent_style`

Notes:

- A full `cargo test -p codex-tui` run reached the end of the suite but

hit an unrelated existing stack overflow in

`tests::fork_last_filters_latest_session_by_cwd_unless_show_all`.

## Why

Mixed prose lines that contained URLs started taking the URL-preserving

wrapping path, but that path could split ordinary words mid-token. A

follow-up issue remained in scrollback insertion: when already-rendered

indented rows were wrapped again, continuation rows could lose their

margin and fall back to terminal hard wrapping. Together those bugs made

normal Markdown output look broken around links, lists, blockquotes, and

indented content.

Separately, the local argument-comment lint wrappers failed under

environments that set `PYTHONSAFEPATH=1`, because Python no longer adds

the script directory to `sys.path` automatically. That prevented the

lint from reaching Rust callsites at all.

<img width="1778" height="1558" alt="CleanShot 2026-05-09 at 11 51 38" src="https://github.com/user-attachments/assets/9274d150-1757-4f1a-89ac-5bdc9997d8cb" />

## What Changed

- Preserve URL tokens without turning every neighboring prose word into

a character-level split point.

- Add a mixed URL/prose wrapper that keeps ordinary words whole,

preserves leading whitespace, and re-splits long non-URL tokens against

the actual width available on continuation rows.

- Reuse a rendered history row's leading whitespace as the continuation

indent when scrollback insertion has to pre-wrap it again.

- Add regression coverage for markdown wrapping, history-cell rendering,

scrollback continuation margins, leading-indent width accounting, and

continuation-row re-splitting.

- Make both argument-comment lint entrypoints explicitly add their own

directory to `sys.path`, so sibling imports still work when

`PYTHONSAFEPATH=1`.
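
The word-preserving wrap with a continuation indent can be sketched in a few lines. This is a simplified illustration, not the TUI's wrapper: `wrap_keeping_indent` is a hypothetical name, widths are counted in bytes (so it assumes ASCII input), and tokens longer than the width, such as URLs, are left whole on their own row rather than re-split.

```rust
/// Wraps `line` to `width` columns, keeping whitespace-separated tokens whole
/// and repeating the line's leading whitespace on continuation rows.
pub fn wrap_keeping_indent(line: &str, width: usize) -> Vec<String> {
    let indent_len = line.len() - line.trim_start().len();
    let indent = &line[..indent_len];
    let mut rows = Vec::new();
    let mut current = String::from(indent);
    for word in line.split_whitespace() {
        let has_word = current.len() > indent.len();
        // Start a new row when the next token (plus a space) would overflow.
        if has_word && current.len() + 1 + word.len() > width {
            rows.push(std::mem::replace(&mut current, String::from(indent)));
        }
        if current.len() > indent.len() {
            current.push(' ');
        }
        current.push_str(word);
    }
    if current.len() > indent.len() {
        rows.push(current);
    }
    rows
}
```

Because each continuation row starts from the original indent, re-wrapping an already-indented row keeps its left margin instead of falling back to terminal hard wrapping.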

## How to Test

1. Start Codex and render a long Markdown response that mixes prose with

inline links, blockquotes, lists, and indented code-like text.

2. Confirm that ordinary words next to links stay whole instead of

breaking mid-word.

3. Resize or replay the transcript and confirm wrapped continuation rows

keep their expected left margin for blockquotes, lists, and indented

content.

4. Run the source argument-comment lint from a shell with

`PYTHONSAFEPATH=1` and confirm it starts normally instead of failing to

import `wrapper_common`.

Targeted tests:

- `cargo test -p codex-tui mixed_line --lib`

- `cargo test -p codex-tui preserves_prefix_on_wrapped_rows --lib`

- `cargo test -p codex-tui agent_markdown_cell_does_not_split_words_after_inline_markdown --lib`

- `cargo test -p codex-tui mixed_url_markdown_wraps_prose_without_splitting_words_snapshot --lib`

- `python3 tools/argument-comment-lint/test_wrapper_common.py`

- `just argument-comment-lint-from-source -p codex-tui -- --lib`

Notes:

- `cargo test -p codex-tui` currently reaches the new tests

successfully, then still aborts in the pre-existing

`tests::fork_last_filters_latest_session_by_cwd_unless_show_all`

stack-overflow failure.

## Why

[PR #1705](https://github.com/openai/codex/pull/1705) moved

`apply_patch` execution under the configured sandbox and called out the

need for integration coverage. We already covered textual `../` escapes,

but did not have coverage for link aliases that live inside a writable

workspace while pointing at, or aliasing, files visible outside it.

This PR locks in the current sandbox boundary without changing

production write semantics. Symlink escapes into a read-only outside

root should fail and leave the outside file unchanged. Existing hard

links are characterized separately: if a user-created hard link already

exists inside the writable root, sandboxed writes preserve normal

hard-link semantics rather than replacing the link and silently breaking

that relationship.

## What Changed

- Added `apply_patch_cli_does_not_write_through_symlink_escape_outside_workspace` to verify `apply_patch` cannot update a symlink that targets a file outside the writable workspace.

- Added `apply_patch_cli_preserves_existing_hard_link_outside_workspace`

to verify `apply_patch` intentionally writes through an existing hard

link and does not unlink or replace it.

- Added `file_system_sandboxed_write_preserves_existing_hard_link` to

verify sandboxed `fs/writeFile` preserves an existing hard link and

writes the shared inode.
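
The hard-link behavior being characterized can be reproduced with the standard library alone. This sketch is not one of the PR's tests; the helper name and file names are made up, and it runs without any sandbox, so it only illustrates what "writes the shared inode" means.

```rust
use std::fs;
use std::io;
use std::path::Path;

/// Creates a file, hard-links a second name to it, writes through the second
/// name, and returns what is then readable through the first name.
pub fn write_through_hard_link(dir: &Path) -> io::Result<String> {
    let original = dir.join("original.txt");
    let alias = dir.join("alias.txt");
    fs::write(&original, "before")?;
    fs::hard_link(&original, &alias)?;
    // `fs::write` truncates and rewrites the existing file in place; it does
    // not unlink `alias` first, so both names still refer to the same data.
    fs::write(&alias, "after")?;
    fs::read_to_string(&original)
}
```

A writer that instead wrote to a temporary file and renamed it over `alias` would break the link, which is the silent change in relationship the tests guard against.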

## Testing

- `cargo test -p codex-exec-server file_system_sandboxed_write`

- `cargo test -p codex-core apply_patch_cli_does_not_write_through_symlink_escape_outside_workspace`

- `cargo test -p codex-core apply_patch_cli_preserves_existing_hard_link_outside_workspace`

- `just fix -p codex-exec-server -p codex-core`

- `just fix -p codex-core`

---

[//]: # (BEGIN SAPLING FOOTER)

Stack created with [Sapling](https://sapling-scm.com). Best reviewed

with [ReviewStack](https://reviewstack.dev/openai/codex/pull/21819).

* #21845

* __->__ #21819

## Why

Service-tier slash commands are built from model-catalog metadata. If

the catalog returns a name like `Fast`, the TUI currently exposes

`/Fast` and exact dispatch expects that casing, which is inconsistent

with the lowercase command style used elsewhere.

## What

- Lowercase service-tier command names when converting catalog tiers

into `ServiceTierCommand` values.

- Add regression coverage that seeds a catalog tier named `Fast` and

expects the generated command to be `fast`.

## Testing

Not run locally per repo instruction; PR CI should run the new

`service_tier_commands_lowercase_catalog_names` coverage.

## Why

The Python SDK previously protected the stdio transport with a single

active turn-consumer guard. That avoided competing reads from stdout,

but it also meant one `Codex`/`AsyncCodex` client could not stream

multiple active turns at the same time. Notifications could also arrive

before the caller received a `TurnHandle` and registered for streaming,

so the SDK needed an explicit routing layer instead of letting

individual API calls read directly from the shared transport.

## What Changed

- Added a private `MessageRouter` that owns per-request response queues,

per-turn notification queues, pending turn-notification replay, and

global notification delivery behind a single stdout reader thread.

- Generated typed notification routing metadata so turn IDs come from

known payload shapes instead of router-side attribute guessing, with

explicit fallback handling for unknown notification payloads.

- Updated sync and async turn streaming so `TurnHandle.stream()`/`run()`

and `stream_text()` consume only notifications for their own turn ID,

while `AsyncAppServerClient` no longer serializes all transport calls

behind one async lock.

- Cleared pending turn-notification buffers when unregistered turns

complete so never-consumed turn handles do not leave stale queues

behind.

- Removed the internal stream-until helper now that turn completion

waiting can register directly with routed turn notifications.

- Updated Python SDK docs and focused tests for concurrent transport

calls, interleaved turn routing, buffered early notifications, unknown

notification routing, async delegation, and routed turn completion

behavior.
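
The routing idea (per-turn queues plus replay of notifications that arrive before registration) can be sketched independently of the SDK. The sketch below is written in Rust to match the rest of the repository rather than the Python SDK, it is single-threaded, and `TurnRouter` with all of its methods is a hypothetical stand-in for the private `MessageRouter`, not its real interface.

```rust
use std::collections::{HashMap, VecDeque};

/// Hypothetical per-turn notification router (illustration only).
#[derive(Default)]
pub struct TurnRouter {
    /// Queues for turns that already have a registered consumer.
    registered: HashMap<String, VecDeque<String>>,
    /// Notifications that arrived before their turn was registered.
    pending: HashMap<String, Vec<String>>,
}

impl TurnRouter {
    /// Delivers a payload to its turn, buffering it if nobody is registered yet.
    pub fn route(&mut self, turn_id: &str, payload: &str) {
        match self.registered.get_mut(turn_id) {
            Some(queue) => queue.push_back(payload.to_string()),
            None => self
                .pending
                .entry(turn_id.to_string())
                .or_default()
                .push(payload.to_string()),
        }
    }

    /// Registers a consumer and replays buffered notifications in arrival order.
    pub fn register(&mut self, turn_id: &str) {
        let replay = self.pending.remove(turn_id).unwrap_or_default();
        self.registered
            .entry(turn_id.to_string())
            .or_default()
            .extend(replay);
    }

    /// Pops the next notification for a registered turn, if any.
    pub fn pop(&mut self, turn_id: &str) -> Option<String> {
        self.registered.get_mut(turn_id)?.pop_front()
    }

    /// Drops buffered notifications for a turn that finished without a consumer.
    pub fn complete_unregistered(&mut self, turn_id: &str) {
        self.pending.remove(turn_id);
    }
}
```

In the SDK a single reader thread would feed `route`, while each turn handle consumes only its own queue; the sketch leaves out locking and global notifications.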

## Validation

- `uv run --extra dev ruff format scripts/update_sdk_artifacts.py src/codex_app_server/_message_router.py src/codex_app_server/client.py src/codex_app_server/generated/notification_registry.py tests/test_client_rpc_methods.py tests/test_public_api_runtime_behavior.py tests/test_async_client_behavior.py`

- `uv run --extra dev ruff check scripts/update_sdk_artifacts.py src/codex_app_server/_message_router.py src/codex_app_server/client.py src/codex_app_server/generated/notification_registry.py tests/test_client_rpc_methods.py tests/test_public_api_runtime_behavior.py tests/test_async_client_behavior.py`

- `uv run --extra dev pytest tests/test_client_rpc_methods.py tests/test_public_api_runtime_behavior.py tests/test_async_client_behavior.py`

- `git diff --check`

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

The model-visible `<network>` context currently repeats indentation and

a pair of XML tags for every allowed or denied domain. Large domain sets

spend a surprising amount of prompt budget on that scaffolding instead

of the actual policy values.

## What changed

- Render allowed domains as one comma-separated `<allowed>` value

instead of one element per domain.

- Render denied domains the same way.

- Keep the full allow/deny domain sets model-visible while updating the

serialization and settings-update coverage for the denser shape.
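
A minimal sketch of the denser serialization, assuming a hypothetical `render_network` helper. Omitting an element when its domain list is empty and skipping XML escaping are assumptions of this sketch, not behavior confirmed by the PR.

```rust
/// Renders the `<network>` context with one comma-separated value per list.
/// Hypothetical helper; it does no XML escaping and assumes plain domain names.
pub fn render_network(enabled: bool, allowed: &[&str], denied: &[&str]) -> String {
    let mut out = format!("<network enabled=\"{enabled}\">");
    if !allowed.is_empty() {
        out.push_str(&format!("<allowed>{}</allowed>", allowed.join(",")));
    }
    if !denied.is_empty() {
        out.push_str(&format!("<denied>{}</denied>", denied.join(",")));
    }
    out.push_str("</network>");
    out
}
```

With this shape the per-domain cost is the domain plus one comma, instead of a tag pair, indentation, and a newline.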

## Example

Before:

```xml
<network enabled="true">
  <allowed>api.example.test</allowed>
  <allowed>cdn.example.test</allowed>
  <denied>blocked.example.test</denied>
</network>
```

After:

```xml

<network enabled="true"><allowed>api.example.test,cdn.example.test</allowed><denied>blocked.example.test</denied></network>

```

## Validation

- `cargo test -p codex-core environment_context`

- `cargo test -p codex-core build_settings_update_items_emits_environment_item_for_network_changes`

- Ran a local `codex` session with a real network context containing 121

allowed domains and 42 denied domains, then inspected the raw prompt

with `raw_token_viewer_cli.py`. With the same domain set, the rendered

`<network>` section shrank from 7,175 characters across 161 lines to

3,666 characters on one line, and the containing environment-context

block fell from 6,428 tokens to 5,379 tokens.

Expose discoverability and full share principals in share context, carry

roles through save/updateTargets, hydrate local shared plugin reads, and

keep share URLs only under plugin.shareContext.

## Why

The app-server watcher relocation leaves the generic filesystem watcher

as the last watcher-specific implementation still living inside

`codex-core`. Moving that code to a small crate keeps `codex-core`

focused on thread execution and lets app-server depend on the watcher

without reaching back into core for filesystem watching primitives.

This PR is stacked on #21287.

## What changed

- Added a new `codex-file-watcher` crate containing the existing watcher

implementation and its unit tests.

- Updated app-server `fs_watch`, `skills_watcher`, and listener state to

import watcher types from `codex-file-watcher`.

- Removed the `file_watcher` module and `notify` dependency from

`codex-core`.

- Updated Cargo workspace metadata and `Cargo.lock` for the new internal

crate.

## Validation

- `cargo check -p codex-file-watcher -p codex-core -p codex-app-server`

- `cargo test -p codex-file-watcher`

- `cargo test -p codex-app-server skills_changed_notification_is_emitted_after_skill_change`

- `just bazel-lock-update`

- `just bazel-lock-check`

- `just fix -p codex-file-watcher`

- `just fix -p codex-core`

- `just fix -p codex-app-server`

## Why

PR #21460 reverted the earlier move of skills change watching from

`codex-core` into app-server. This reapplies that boundary change so

app-server owns client-facing `skills/changed` notifications and core no

longer carries the watcher.

## What

- Restore the app-server `SkillsWatcher` and register it from thread

listener setup.

- Remove the core-owned skills watcher and its core live-reload

integration surface.

- Restore app-server coverage for `skills/changed` notifications after a

watched skill file changes.

## Validation

- `cargo test -p codex-app-server --test all suite::v2::skills_list::skills_changed_notification_is_emitted_after_skill_change -- --exact --nocapture`

- `cargo test -p codex-core --lib --no-run`

## Why

We'd like SQLite state to become required and load-bearing. As a first

step, let's remove the mechanism that allows us to blow away the SQLite

DB on a version bump, and instead rely on graceful migrations.

The original motivation

([PR](https://github.com/openai/codex/pull/10623)) behind this mechanism

was to care less about backwards compatibility while SQLite was being

landed, but I'd say it's quite important now to keep the data in it.

## What changed

- Make `STATE_DB_FILENAME` and `LOGS_DB_FILENAME` the full canonical

filenames: `state_5.sqlite` and `logs_2.sqlite`.

- Remove `STATE_DB_VERSION` / `LOGS_DB_VERSION` and the helper that

constructed filenames from versions.

- Stop `StateRuntime::init` from scanning for or deleting older SQLite

DB filenames at startup.

- Delete the tests that encoded legacy state/logs DB deletion behavior.

## Verification

- `cargo test -p codex-state`

## Why

Amazon Bedrock Mantle needs a stable client-agent header so that the safety stack can identify requests from the built-in Bedrock provider as coming from Codex.

## What changed

- Added `x-amzn-mantle-client-agent: codex` to the built-in Amazon

Bedrock provider default HTTP headers.

## Why

Desktop and mobile Codex clients need a machine-readable way to

bootstrap and manage `codex app-server` on remote machines reached over

SSH. The same flow is also useful for bringing up app-server with

`remote_control` enabled on a fresh developer machine and keeping that

managed install current without requiring a human session.

## What changed

- add the new experimental `codex-app-server-daemon` crate and wire it

into `codex app-server daemon` lifecycle commands: `start`, `restart`,

`stop`, `version`, and `bootstrap`

- add explicit `enable-remote-control` and `disable-remote-control`

commands that persist the launch setting and restart a running managed

daemon so the change takes effect immediately

- emit JSON success responses for daemon commands so remote callers can

consume them directly

- support a Unix-only pidfile-backed detached backend for lifecycle

management

- assume the standalone `install.sh` layout for daemon-managed binaries

and always launch `CODEX_HOME/packages/standalone/current/codex`

- add bootstrap support for the standalone managed install plus a

detached hourly updater loop

- harden lifecycle management around concurrent operations, pidfile

ownership, stale state cleanup, updater ownership, managed-binary

preflight, Unix-only rejection, forced shutdown after the graceful

window, and updater process-group tracking/cleanup

- document the experimental Unix-only support boundary plus the

standalone bootstrap/update flow in

`codex-rs/app-server-daemon/README.md`

## Verification

- `cargo test -p codex-app-server-daemon -p codex-cli`

- live pid validation on `cb4`: `bootstrap --remote-control`, `restart`,

`version`, `stop`

## Follow-up

- Add updater self-refresh so the long-lived `pid-update-loop` can

replace its own executable image after installing a newer managed Codex

binary.

## Why

The environment-backed exec-server transport currently hardcodes 5-second connect and initialize timeouts in `client_transport.rs`. That is short for SSH-backed stdio environments and remote websocket environments, and there is currently no way to raise those values from `CODEX_HOME/environments.toml`.

This stacked follow-up raises the default environment transport timeouts

and lets each configured environment override them in

`environments.toml`.

## What Changed

- raise the default environment transport connect and initialize
  timeouts from 5s to 10s
- store concrete timeout values on `ExecServerTransportParams` instead
  of hardcoding them in `connect_for_transport(...)`
- add `connect_timeout_sec` and `initialize_timeout_sec` to
  `[[environments]]` entries in `environments.toml`
- apply parse-time defaults so runtime transport code receives fully
  resolved timeout values
- reject `connect_timeout_sec` on stdio environments because it only
  applies to websocket transports
- extend parser tests to cover the new fields and defaults
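
The parse-time defaulting and the stdio rejection rule can be sketched as follows. This is a minimal model under stated assumptions: `RawEnvironment`, `Transport`, `ResolvedTimeouts`, and `resolve_timeouts` are hypothetical names invented for the example, and the error message is illustrative. Only the two field names, the 10s default, and the rejection rule come from the list above.

```rust
use std::time::Duration;

/// Default from this PR: 10s for both connect and initialize.
const DEFAULT_TIMEOUT: Duration = Duration::from_secs(10);

/// Hypothetical transport kinds for an `[[environments]]` entry.
#[derive(Debug, Clone, Copy, PartialEq)]
enum Transport {
    Stdio,
    Websocket,
}

/// Hypothetical shape of an entry right after TOML parsing.
#[derive(Debug)]
struct RawEnvironment {
    transport: Transport,
    connect_timeout_sec: Option<u64>,
    initialize_timeout_sec: Option<u64>,
}

/// Fully resolved values, so runtime code never has to apply defaults.
#[derive(Debug, PartialEq)]
struct ResolvedTimeouts {
    connect: Duration,
    initialize: Duration,
}

fn resolve_timeouts(raw: &RawEnvironment) -> Result<ResolvedTimeouts, String> {
    // `connect_timeout_sec` only applies to websocket transports.
    if raw.transport == Transport::Stdio && raw.connect_timeout_sec.is_some() {
        return Err("connect_timeout_sec is not valid for stdio environments".to_string());
    }
    Ok(ResolvedTimeouts {
        connect: raw
            .connect_timeout_sec
            .map(Duration::from_secs)
            .unwrap_or(DEFAULT_TIMEOUT),
        initialize: raw
            .initialize_timeout_sec
            .map(Duration::from_secs)
            .unwrap_or(DEFAULT_TIMEOUT),
    })
}

fn main() {
    let websocket = RawEnvironment {
        transport: Transport::Websocket,
        connect_timeout_sec: Some(20),
        initialize_timeout_sec: None,
    };
    let stdio = RawEnvironment {
        transport: Transport::Stdio,
        connect_timeout_sec: Some(20),
        initialize_timeout_sec: None,
    };
    println!("{:?}", resolve_timeouts(&websocket));
    println!("{:?}", resolve_timeouts(&stdio));
}
```

Resolving at parse time keeps the validation error next to the configuration that caused it, rather than surfacing it when a connection is attempted.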

## Stack

- base: https://github.com/openai/codex/pull/21794

- this PR: configurable environment transport timeouts

## Validation

- `cd /Users/starr/code/codex-worktrees/exec-env-timeouts-config-20260508/codex-rs && just fmt`
- not run: tests

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

Support registry-backed remote executors end to end so downstream
services can resolve an executor id into an exec-server URL and make
that environment available to Codex without relying on the legacy cloud
environments flow.

## What changed

- switch remote executor registration to the executor registry bootstrap
  contract
- allow named remote environments to be inserted into
  `EnvironmentManager` at runtime
- add the experimental app-server RPC `environment/add` so initialized
  experimental clients can register those remote environments for later
  `thread/start` and `turn/start` selection

## Validation

Ran focused validation locally:

- `cargo test -p codex-exec-server environment_manager_`
- `cargo test -p codex-exec-server register_executor_posts_with_bearer_token_header`
- `cargo test -p codex-app-server-protocol`

## Why

The app-server daemon work needs two app-server behaviors to be safe
when lifecycle management is driven by a helper process:

- a readiness probe must not become the process-wide client identity
  just because it connects first
- a graceful reload signal needs to keep draining active turns even if
  it is delivered more than once

## What changed

- Treat `codex_app_server_daemon` initialization as a probe-only client
  for process-global originator and user-agent suffix state.
- Distinguish forceable shutdown signals from graceful-only ones, and
  treat Unix `SIGHUP` as graceful-only while leaving `SIGTERM` and Ctrl-C
  forceable.
- Add regression coverage for daemon probe initialization and repeated
  `SIGHUP` delivery while a turn is still running.
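
The forceable versus graceful-only split can be modeled as a small state machine. The sketch below is an assumption-heavy illustration, not the app-server's code: the type names are hypothetical, and the rule that a repeated forceable signal escalates to a forced shutdown is an assumption. The PR text only confirms that repeated `SIGHUP` keeps draining.

```rust
/// Hypothetical model of the shutdown signals named in this PR.
#[derive(Debug, Clone, Copy, PartialEq)]
enum ShutdownSignal {
    Sighup,
    Sigterm,
    CtrlC,
}

impl ShutdownSignal {
    /// `SIGHUP` is graceful-only; `SIGTERM` and Ctrl-C stay forceable.
    fn is_forceable(self) -> bool {
        !matches!(self, ShutdownSignal::Sighup)
    }
}

#[derive(Debug, Clone, Copy, PartialEq)]
enum ShutdownState {
    Running,
    Draining,
    Forced,
}

/// Assumption: the first signal of any kind starts draining, and only a
/// forceable signal received while draining escalates to a forced stop.
fn on_signal(state: ShutdownState, signal: ShutdownSignal) -> ShutdownState {
    match (state, signal.is_forceable()) {
        (ShutdownState::Running, _) => ShutdownState::Draining,
        (ShutdownState::Draining, true) => ShutdownState::Forced,
        (ShutdownState::Draining, false) => ShutdownState::Draining,
        (ShutdownState::Forced, _) => ShutdownState::Forced,
    }
}

fn main() {
    let sequence = [
        ShutdownSignal::Sighup,
        ShutdownSignal::Sighup,
        ShutdownSignal::Sigterm,
        ShutdownSignal::CtrlC,
    ];
    let mut state = ShutdownState::Running;
    for signal in sequence {
        state = on_signal(state, signal);
        println!("{signal:?} -> {state:?}");
    }
}
```

Under this model a helper process can resend `SIGHUP` freely without cutting off a running turn.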

## Testing

- `cargo test -p codex-app-server`
  - The new daemon-probe and repeated-`SIGHUP` coverage passed.
  - The run still failed in the existing
    `suite::conversation_summary::get_conversation_summary_by_relative_rollout_path_resolves_from_codex_home`
    and
    `suite::conversation_summary::get_conversation_summary_by_thread_id_reads_rollout`
    tests because their initialize handshake timed out.
- `cargo test -p codex-app-server --test all suite::conversation_summary::`
  - Reproduced the same two existing initialize-timeout failures in
    isolation.

## Summary

- make `EnvironmentProvider::snapshot` path-free and keep providers
  focused on provider-owned remote environments
- let provider snapshots request local inclusion via `include_local`,
  with `environments.toml` including local and `CODEX_EXEC_SERVER_URL`
  excluding local
- move reserved local environment construction into `EnvironmentManager`
  using `ExecServerRuntimePaths`
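
A minimal sketch of the division of responsibility described above, assuming a much simpler shape than the real types. `ProviderSnapshot`, `LOCAL_ID`, and `environment_ids` are hypothetical stand-ins; only the `include_local` flag and the two provider behaviors come from the summary.

```rust
/// Hypothetical stand-in for a provider snapshot: remote environments only,
/// plus a flag asking the manager to add the reserved local environment.
struct ProviderSnapshot {
    remote_ids: Vec<String>,
    include_local: bool,
}

/// Hypothetical id for the reserved local environment.
const LOCAL_ID: &str = "local";

/// Stand-in for the manager side: the manager, not the provider,
/// constructs the local entry when the snapshot asks for it.
fn environment_ids(snapshot: &ProviderSnapshot) -> Vec<String> {
    let mut ids = Vec::new();
    if snapshot.include_local {
        ids.push(LOCAL_ID.to_string());
    }
    ids.extend(snapshot.remote_ids.iter().cloned());
    ids
}

fn main() {
    // `environments.toml`-style snapshot: local is included.
    let from_toml = ProviderSnapshot {
        remote_ids: vec!["devbox".to_string()],
        include_local: true,
    };
    // `CODEX_EXEC_SERVER_URL`-style snapshot: local is excluded.
    let from_url = ProviderSnapshot {
        remote_ids: vec!["remote".to_string()],
        include_local: false,
    };
    println!("{:?}", environment_ids(&from_toml));
    println!("{:?}", environment_ids(&from_url));
}
```

Because the provider only sets a flag, it needs no knowledge of local runtime paths.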

Follow-up to https://github.com/openai/codex/pull/20667

## Testing

- `just fmt`
- `git diff --check`
- devbox: `bazel build --bes_backend= --bes_results_url= //codex-rs/exec-server:exec-server`
- devbox: `bazel test --bes_backend= --bes_results_url= //codex-rs/exec-server:exec-server-unit-tests`

Co-authored-by: Codex <noreply@openai.com>

## Why

PR CI should test the exact commit that was pushed to the PR branch. By
default, GitHub's `pull_request` event checks out a synthetic merge
commit from `refs/pull/<number>/merge`, so the tested tree can include
an implicit merge with the current base branch instead of matching the
pushed head SHA.

Using the PR head SHA makes each check result correspond to a concrete
commit the author submitted. This also behaves better for stacked PR
workflows, including Sapling stacks and other Git stack tooling: a
middle PR's head commit already contains the lower stack changes in its
tree, without pulling in commits above it or GitHub's temporary merge
ref.

## What Changed

- Set every `actions/checkout` in `pull_request` workflows under
  `.github/workflows` to use `github.event.pull_request.head.sha` on PR
  events and `github.sha` otherwise.
- Updated `blob-size-policy` to compare
  `github.event.pull_request.base.sha` and
  `github.event.pull_request.head.sha`, since it no longer checks out
  GitHub's merge commit where `HEAD^1`/`HEAD^2` represented the PR range.

## Verification

- Parsed the edited workflow YAML files with Ruby.
- Checked that every checkout block in the `pull_request` workflows has
  the PR-head `ref`.

## Summary

Startup tool construction currently depends on connector directory
metadata for `tool_suggest` discoverables. On a cold directory cache,
that can put slow connector-directory requests on the blocking path even
though the tools array only needs directory data for install
suggestions, not for the live connector MCP tools themselves.

This PR keeps the discoverables path off that cold network fetch:

- read connector directory metadata from cache only when building
  discoverable tools
- persist connector directory metadata to
  `~/.codex/cache/codex_app_directory/<hash>.json` and use it to hydrate
  the in-memory cache on later runs before the normal refresh path updates
  it
- use connector-directory-specific cache naming to distinguish this
  metadata cache from the separate Codex Apps tools-spec cache
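
The cache-only read path can be sketched roughly as below. This is an illustration under assumptions: `DirectoryCache` and its methods are hypothetical, the metadata is treated as an opaque JSON string, the cache key stands in for the unspecified `<hash>`, and a temp directory replaces `~/.codex/cache/codex_app_directory`.

```rust
use std::collections::HashMap;
use std::fs;
use std::path::{Path, PathBuf};

/// Hypothetical two-level cache: in-memory map backed by JSON files on disk.
struct DirectoryCache {
    dir: PathBuf,
    memory: HashMap<String, String>,
}

impl DirectoryCache {
    fn new(dir: &Path) -> Self {
        Self { dir: dir.to_path_buf(), memory: HashMap::new() }
    }

    /// `<key>.json` inside the cache directory; `key` stands in for `<hash>`.
    fn file_for(&self, key: &str) -> PathBuf {
        self.dir.join(format!("{key}.json"))
    }

    /// What the normal refresh path would call after a successful fetch.
    fn store(&mut self, key: &str, json: &str) -> std::io::Result<()> {
        fs::create_dir_all(&self.dir)?;
        fs::write(self.file_for(key), json)?;
        self.memory.insert(key.to_string(), json.to_string());
        Ok(())
    }

    /// Cache-only read: memory first, then disk, never the network.
    /// A disk hit hydrates the in-memory cache for later lookups.
    fn get_cached(&mut self, key: &str) -> Option<String> {
        if let Some(hit) = self.memory.get(key) {
            return Some(hit.clone());
        }
        let json = fs::read_to_string(self.file_for(key)).ok()?;
        self.memory.insert(key.to_string(), json.clone());
        Some(json)
    }
}

fn main() -> std::io::Result<()> {
    let dir = std::env::temp_dir().join("codex_app_directory_sketch");

    // First run: the refresh path persists metadata.
    let mut first_run = DirectoryCache::new(&dir);
    first_run.store("example-key", "{\"connectors\":[]}")?;

    // Later run: a fresh process hydrates from disk without fetching.
    let mut later_run = DirectoryCache::new(&dir);
    println!("{:?}", later_run.get_cached("example-key"));
    println!("{:?}", later_run.get_cached("missing-key"));
    Ok(())
}
```

A miss simply returns `None`, which lets a caller fall back to something cheaper than a blocking directory request.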

This reduces first-turn startup work without changing how live connector
MCP tools are sourced. Longer term, directory-backed install suggestions
should move to a search-based flow so they no longer need to be inlined
into the tools prompt at all.

## Testing

- `cargo test -p codex-connectors`
- `cargo test -p codex-chatgpt`
- `cargo test -p codex-core request_plugin_install_is_available_without_search_tool_after_discovery_attempts`
- `cargo test -p codex-core tool_suggest_uses_connector_id_fallback_when_directory_cache_is_empty`

## Summary

In https://github.com/openai/codex/pull/21584, we disabled doctests for
crates that lack any doctests. We can enforce that property via
`cargo shear --deny-warnings`: crates that lack doctests will be flagged
if doctests are enabled, and crates with doctests will be flagged if
doctests are disabled.

A few additional notes:

- By adding `--deny-warnings`, `cargo shear` also flagged a number of
  modules that were not reachable at all. Some of those have been removed.
- This PR removes a usage of `windows_modules!` (since `cargo shear` and
  `rustfmt` couldn't see through it) in favor of simple
  `#[cfg(target_os = "windows")]` attributes. As a consequence, many of
  these files exhibit churn in this PR, since they weren't being formatted
  by `rustfmt` at all on main.
- Again, to make the code more analyzable, this PR also removes some
  usages of `#[path = "cwd_junction.rs"]` in favor of a more standard
  module structure. The bin sidecar structure is still retained, but,
  e.g., `windows-sandbox-rs/src/bin/command_runner.rs` was moved to
  `windows-sandbox-rs/src/bin/command_runner/main.rs`, and so on.
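
For readers unfamiliar with the pattern, here is a minimal sketch of a directly declared, `cfg`-gated module. The module and function names are hypothetical, and the non-Windows fallback exists only so the example builds on every target. The definition of `windows_modules!` is not shown in this description, so nothing here is meant to reproduce it.

```rust
// Compiled only on Windows. Because the module is declared directly
// rather than generated by a macro, tools that read the source without
// expanding macros can still find it.
#[cfg(target_os = "windows")]
mod platform {
    pub fn sandbox_backend() -> &'static str {
        "windows"
    }
}

// Hypothetical fallback so the sketch compiles on other targets.
#[cfg(not(target_os = "windows"))]
mod platform {
    pub fn sandbox_backend() -> &'static str {
        "unsupported"
    }
}

fn main() {
    println!("sandbox backend: {}", platform::sandbox_backend());
}
```

The same idea applies to file-backed modules: a plain `mod name;` under a `cfg` attribute follows the standard module layout, which is what the move away from `#[path = ...]` aims for.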

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

The legacy `AfterToolUse` hook path was still wired through core tool
dispatch even though the hooks registry never populated any handlers for
it. The supported hook surface is `PostToolUse`, so the old
infrastructure was dead code on the hot path.

## What changed

- Removed the legacy `AfterToolUse` dispatch from `codex-core` tool
  execution.
- Removed the unused legacy hook payload types and exports from
  `codex-hooks`.
- Simplified legacy notify handling now that `HookEvent` only carries
  `AfterAgent`.

## Validation

- `cargo test -p codex-hooks`

- `cargo test -p codex-core registry`

## Why

`apply_patch` is now a freeform/custom tool. Keeping the old
JSON/function-style registration and parsing path left another way for
models and tests to invoke `apply_patch`, which made the tool surface
harder to reason about.

## What changed

- Removed the `ApplyPatchToolType::Function` variant, JSON `apply_patch`
  spec, and handler support for function payloads.
- Kept `apply_patch_tool_type = freeform` as the supported model
  metadata path, including Bedrock catalog metadata.
- Migrated `apply_patch` tests and SSE fixtures to custom/freeform tool
  calls.

## Verification

- `cargo test -p codex-tools -p codex-protocol -p codex-model-provider`
- `cargo test -p codex-core tools::handlers::apply_patch --lib`
- `cargo test -p codex-core --test all apply_patch_tool_executes_and_emits_patch_events`
- `cargo test -p codex-core --test all apply_patch_reports_parse_diagnostics`
- `cargo test -p codex-exec test_apply_patch_tool`
- `just fix -p codex-core`
- `just fix -p codex-tools -p codex-protocol -p codex-model-provider -p codex-exec`

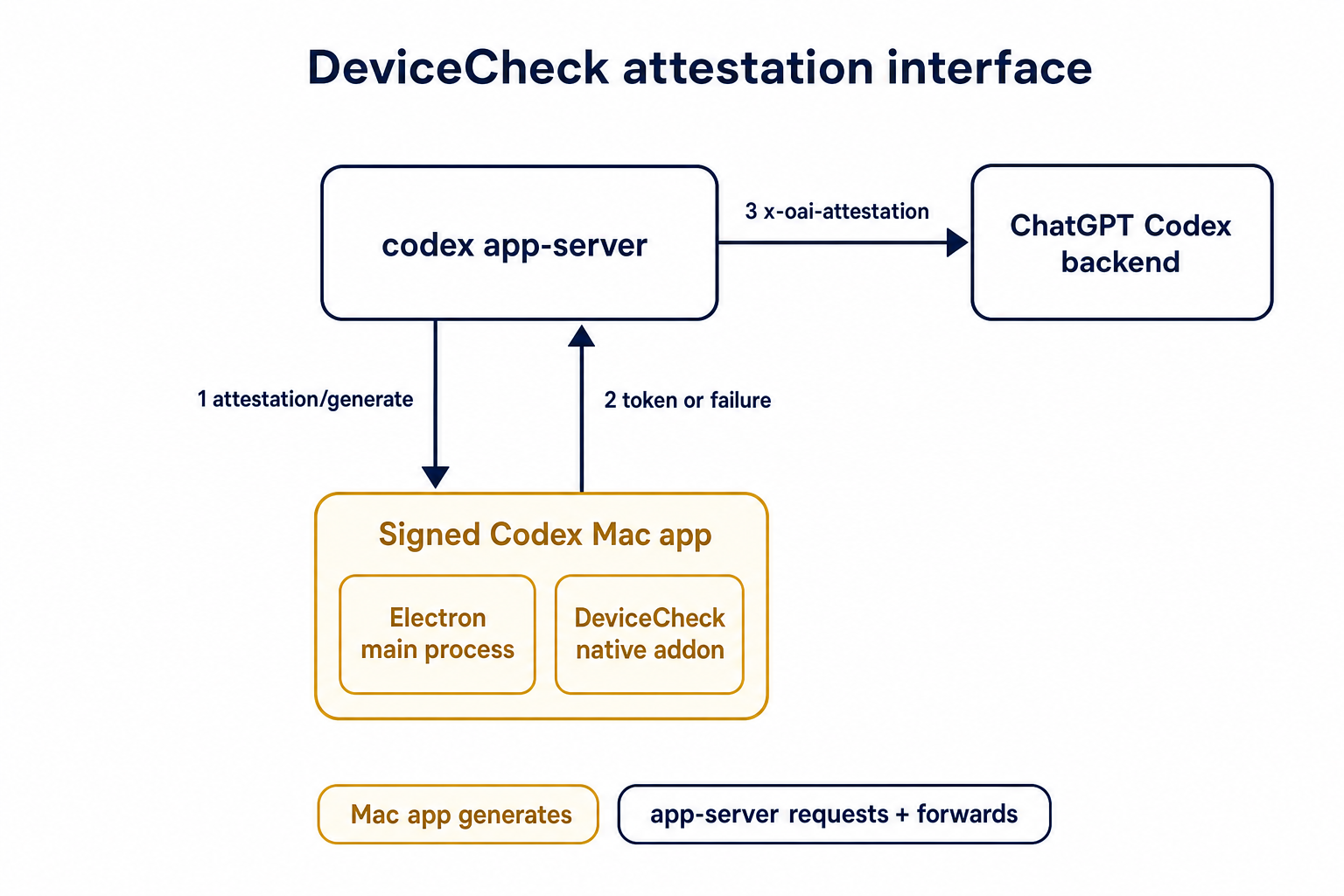

## Summary

TL;DR: teaches `codex-rs` / app-server to request a desktop-provided
attestation token and attach it as `x-oai-attestation` on the scoped
ChatGPT Codex request paths.

## Details

This PR teaches the Codex app-server runtime how to request and attach
an attestation token. It does not generate DeviceCheck tokens directly;
instead, it relies on the connected desktop app to advertise that it can
generate attestation and then asks that app for a fresh header value
when needed.

The flow is:

1. The Codex desktop app connects to app-server.
2. During `initialize`, the app can advertise that it supports
   `requestAttestation`.
3. Before app-server calls selected ChatGPT Codex endpoints, it sends
   the internal server request `attestation/generate` to the app.
4. app-server receives a pre-encoded header value back.
5. app-server forwards that value as `x-oai-attestation` on the scoped
   outbound requests.
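
The flow above can be sketched with a provider trait and a scoped header builder. Everything named here is hypothetical (`AttestationProvider`, `DesktopClient`, `is_scoped_path`, `build_headers`), the returned value is a placeholder rather than a real token, and the scope list contains only the one path this description confirms. The actual request/response shape lives in the app-server protocol and is not reproduced.

```rust
use std::collections::HashMap;

/// Header name from the PR description.
const ATTESTATION_HEADER: &str = "x-oai-attestation";

/// Hypothetical provider: stands in for sending `attestation/generate`
/// to the connected app and getting a pre-encoded header value back.
trait AttestationProvider {
    fn generate(&self) -> Option<String>;
}

/// Hypothetical client that may or may not have advertised
/// `requestAttestation` during `initialize`.
struct DesktopClient {
    supports_request_attestation: bool,
}

impl AttestationProvider for DesktopClient {
    fn generate(&self) -> Option<String> {
        // Placeholder value; a real token would come from the desktop app.
        self.supports_request_attestation
            .then(|| "v1.placeholder".to_string())
    }
}

/// Assumption: an exact-match scope list. Only this one path is taken
/// from the validation notes; the real scoping rules are not shown here.
fn is_scoped_path(path: &str) -> bool {
    ["/backend-api/codex/responses"].contains(&path)
}

/// Attaches the header only on scoped paths and only when the provider
/// can supply a value.
fn build_headers(path: &str, provider: &dyn AttestationProvider) -> HashMap<String, String> {
    let mut headers = HashMap::new();
    if is_scoped_path(path) {
        if let Some(value) = provider.generate() {
            headers.insert(ATTESTATION_HEADER.to_string(), value);
        }
    }
    headers
}

fn main() {
    let app = DesktopClient { supports_request_attestation: true };
    println!("{:?}", build_headers("/backend-api/codex/responses", &app));
    println!("{:?}", build_headers("/some/other/path", &app));
}
```

Requesting the value per call, rather than caching it in the provider, matches the description's point that app-server asks for a fresh header value when needed.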

The code in this repo is mostly protocol and runtime plumbing: it adds
the app-server request/response shape, introduces an attestation
provider in core, wires that provider into Responses / compaction /
realtime setup paths, and covers the intended scoping with tests. The
signed macOS DeviceCheck generation remains owned by the desktop app PR.

## Related PR

- Codex desktop app implementation:
  https://github.com/openai/openai/pull/878649

## Validation

<details>

<summary>Tests run</summary>

```sh
cargo test -p codex-app-server-protocol
cargo test -p codex-core attestation --lib
cargo test -p codex-app-server --lib attestation
```

Also ran:

```sh
just fix -p codex-core
just fix -p codex-app-server
just fix -p codex-app-server-protocol
just fmt
just write-app-server-schema
```

</details>

<details>

<summary>E2E DeviceCheck validation</summary>

First validated the signed desktop app boundary directly: launched a
packaged signed `Codex.app`, sent `attestation/generate`, decoded the
returned `v1.` attestation header, and validated the extracted
DeviceCheck token with `personal/jm/verify_devicecheck_token.py` using
bundle ID `com.openai.codex`. Apple returned `status_code: 200` and
`is_ok: true`.

Then ran the fuller app + app-server flow. The packaged `Codex.app`
launched a current-branch app-server via `CODEX_CLI_PATH`, and a local
MITM proxy intercepted outbound `chatgpt.com` traffic. The app-server
requested `attestation/generate` from the real Electron app process, and
the intercepted `/backend-api/codex/responses` traffic included
`x-oai-attestation` on both routes:

```text
GET  /backend-api/codex/responses  Upgrade: websocket  x-oai-attestation: present
POST /backend-api/codex/responses  Upgrade: none       x-oai-attestation: present
```

The captured header decoded to a DeviceCheck token that also validated
with Apple for `com.openai.codex` (`status_code: 200`, `is_ok: true`,
team `2DC432GLL2`).

</details>

---------

Co-authored-by: Codex <noreply@openai.com>