## Why

Service-tier slash commands are built from model-catalog metadata. If

the catalog returns a name like `Fast`, the TUI currently exposes

`/Fast` and exact dispatch expects that casing, which is inconsistent

with the lowercase command style used elsewhere.

## What

- Lowercase service-tier command names when converting catalog tiers

into `ServiceTierCommand` values.

- Add regression coverage that seeds a catalog tier named `Fast` and

expects the generated command to be `fast`.
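A minimal sketch of the conversion described above, assuming a simplified catalog entry. `CatalogTier`, the field layout of `ServiceTierCommand`, and `service_tier_command` are hypothetical stand-ins for illustration, not the actual TUI types.

```rust
/// Hypothetical stand-in for a service-tier entry returned by the model catalog.
struct CatalogTier {
    id: String,
    name: String,
    description: String,
}

/// Hypothetical field layout; the real `ServiceTierCommand` may differ.
#[derive(Debug, PartialEq)]
struct ServiceTierCommand {
    id: String,
    name: String,
    description: String,
}

/// Converts a catalog tier into a slash command, lowercasing only the name.
fn service_tier_command(tier: &CatalogTier) -> ServiceTierCommand {
    ServiceTierCommand {
        // The request id is passed through untouched.
        id: tier.id.clone(),
        // `Fast` from the catalog becomes the `/fast` command.
        name: tier.name.to_lowercase(),
        description: tier.description.clone(),
    }
}
```

Lowercasing at conversion time means exact dispatch and the popup both see one spelling, so no case-insensitive matching is needed later.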

## Testing

Not run locally per repo instruction; PR CI should run the new

`service_tier_commands_lowercase_catalog_names` coverage.

## Why

The model-visible `<network>` context currently repeats indentation and

a pair of XML tags for every allowed or denied domain. Large domain sets

spend a surprising amount of prompt budget on that scaffolding instead

of the actual policy values.

## What changed

- Render allowed domains as one comma-separated `<allowed>` value

instead of one element per domain.

- Render denied domains the same way.

- Keep the full allow/deny domain sets model-visible while updating the

serialization and settings-update coverage for the denser shape.

## Example

Before:

```xml
<network enabled="true">
<allowed>api.example.test</allowed>
<allowed>cdn.example.test</allowed>
<denied>blocked.example.test</denied>
</network>
```

After:

```xml
<network enabled="true"><allowed>api.example.test,cdn.example.test</allowed><denied>blocked.example.test</denied></network>
```
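The dense shape can be sketched roughly as follows. `render_network_context` is a hypothetical helper, and two behaviors here are assumptions rather than something this PR states: empty lists are omitted entirely, and domain names are assumed not to need XML escaping.

```rust
/// Renders the single-line `<network>` shape from the "After" example.
/// Assumptions: empty lists are skipped and domains need no XML escaping.
fn render_network_context(enabled: bool, allowed: &[&str], denied: &[&str]) -> String {
    let mut out = format!("<network enabled=\"{enabled}\">");
    if !allowed.is_empty() {
        // One element holding every allowed domain, comma-separated.
        out.push_str(&format!("<allowed>{}</allowed>", allowed.join(",")));
    }
    if !denied.is_empty() {
        // Denied domains use the same dense layout.
        out.push_str(&format!("<denied>{}</denied>", denied.join(",")));
    }
    out.push_str("</network>");
    out
}
```

The per-domain cost drops from a pair of tags plus indentation to a single comma, which is where the savings for large domain sets come from.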

## Validation

- `cargo test -p codex-core environment_context`

- `cargo test -p codex-core build_settings_update_items_emits_environment_item_for_network_changes`

- Ran a local `codex` session with a real network context containing 121

allowed domains and 42 denied domains, then inspected the raw prompt

with `raw_token_viewer_cli.py`. With the same domain set, the rendered

`<network>` section shrank from 7,175 characters across 161 lines to

3,666 characters on one line, and the containing environment-context

block fell from 6,428 tokens to 5,379 tokens.

Expose discoverability and full share principals in share context, carry

roles through save/updateTargets, hydrate local shared plugin reads, and

keep share URLs only under plugin.shareContext.

## Why

The app-server watcher relocation leaves the generic filesystem watcher

as the last watcher-specific implementation still living inside

`codex-core`. Moving that code to a small crate keeps `codex-core`

focused on thread execution and lets app-server depend on the watcher

without reaching back into core for filesystem watching primitives.

This PR is stacked on #21287.

## What changed

- Added a new `codex-file-watcher` crate containing the existing watcher

implementation and its unit tests.

- Updated app-server `fs_watch`, `skills_watcher`, and listener state to

import watcher types from `codex-file-watcher`.

- Removed the `file_watcher` module and `notify` dependency from

`codex-core`.

- Updated Cargo workspace metadata and `Cargo.lock` for the new internal

crate.

## Validation

- `cargo check -p codex-file-watcher -p codex-core -p codex-app-server`

- `cargo test -p codex-file-watcher`

- `cargo test -p codex-app-server skills_changed_notification_is_emitted_after_skill_change`

- `just bazel-lock-update`

- `just bazel-lock-check`

- `just fix -p codex-file-watcher`

- `just fix -p codex-core`

- `just fix -p codex-app-server`

## Why

PR #21460 reverted the earlier move of skills change watching from

`codex-core` into app-server. This reapplies that boundary change so

app-server owns client-facing `skills/changed` notifications and core no

longer carries the watcher.

## What

- Restore the app-server `SkillsWatcher` and register it from thread

listener setup.

- Remove the core-owned skills watcher and its core live-reload

integration surface.

- Restore app-server coverage for `skills/changed` notifications after a

watched skill file changes.

## Validation

- `cargo test -p codex-app-server --test all suite::v2::skills_list::skills_changed_notification_is_emitted_after_skill_change -- --exact --nocapture`

- `cargo test -p codex-core --lib --no-run`

## Why

We'd like SQLite state to become required and load-bearing. As a first

step, let's remove the mechanism that allows us to blow away the SQLite

DB on a version bump, and instead rely on graceful migrations.

The original motivation

([PR](https://github.com/openai/codex/pull/10623)) behind this mechanism

was to care less about backwards compatibility while SQLite was being

landed, but I'd say it's quite important now to keep the data in it.

## What changed

- Make `STATE_DB_FILENAME` and `LOGS_DB_FILENAME` the full canonical

filenames: `state_5.sqlite` and `logs_2.sqlite`.

- Remove `STATE_DB_VERSION` / `LOGS_DB_VERSION` and the helper that

constructed filenames from versions.

- Stop `StateRuntime::init` from scanning for or deleting older SQLite

DB filenames at startup.

- Delete the tests that encoded legacy state/logs DB deletion behavior.

## Verification

- `cargo test -p codex-state`

## Why

Amazon Bedrock Mantle needs a stable client-agent header so requests

from the built-in Bedrock provider can be identified as coming from

Codex for the safety stack.

## What changed

- Added `x-amzn-mantle-client-agent: codex` to the built-in Amazon

Bedrock provider default HTTP headers.

## Why

Desktop and mobile Codex clients need a machine-readable way to

bootstrap and manage `codex app-server` on remote machines reached over

SSH. The same flow is also useful for bringing up app-server with

`remote_control` enabled on a fresh developer machine and keeping that

managed install current without requiring a human session.

## What changed

- add the new experimental `codex-app-server-daemon` crate and wire it

into `codex app-server daemon` lifecycle commands: `start`, `restart`,

`stop`, `version`, and `bootstrap`

- add explicit `enable-remote-control` and `disable-remote-control`

commands that persist the launch setting and restart a running managed

daemon so the change takes effect immediately

- emit JSON success responses for daemon commands so remote callers can

consume them directly

- support a Unix-only pidfile-backed detached backend for lifecycle

management

- assume the standalone `install.sh` layout for daemon-managed binaries

and always launch `CODEX_HOME/packages/standalone/current/codex`

- add bootstrap support for the standalone managed install plus a

detached hourly updater loop

- harden lifecycle management around concurrent operations, pidfile

ownership, stale state cleanup, updater ownership, managed-binary

preflight, Unix-only rejection, forced shutdown after the graceful

window, and updater process-group tracking/cleanup

- document the experimental Unix-only support boundary plus the

standalone bootstrap/update flow in

`codex-rs/app-server-daemon/README.md`

## Verification

- `cargo test -p codex-app-server-daemon -p codex-cli`

- live pid validation on `cb4`: `bootstrap --remote-control`, `restart`,

`version`, `stop`

## Follow-up

- Add updater self-refresh so the long-lived `pid-update-loop` can

replace its own executable image after installing a newer managed Codex

binary.

## Why

The environment-backed exec-server transport currently hardcodes 5

second connect and initialize timeouts in `client_transport.rs`. That is

short for SSH-backed stdio environments and remote websocket

environments, and there is currently no way to raise those values from

`CODEX_HOME/environments.toml`.

This stacked follow-up raises the default environment transport timeouts

and lets each configured environment override them in

`environments.toml`.

## What Changed

- raise the default environment transport connect and initialize

timeouts from 5s to 10s

- store concrete timeout values on `ExecServerTransportParams` instead

of hardcoding them in `connect_for_transport(...)`

- add `connect_timeout_sec` and `initialize_timeout_sec` to

`[[environments]]` entries in `environments.toml`

- apply parse-time defaults so runtime transport code receives fully

resolved timeout values

- reject `connect_timeout_sec` on stdio environments because it only

applies to websocket transports

- extend parser tests to cover the new fields and defaults
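A rough sketch of the parse-time resolution described above. The field names `connect_timeout_sec` and `initialize_timeout_sec` and the 10s default come from this PR; `RawEnvironment`, `TransportKind`, `ResolvedTimeouts`, and `resolve_timeouts` are hypothetical names, and the error text is illustrative only.

```rust
use std::time::Duration;

/// Default for both timeouts after this change (previously 5s).
const DEFAULT_TIMEOUT_SECS: u64 = 10;

#[derive(Debug, Clone, Copy, PartialEq)]
enum TransportKind {
    Stdio,
    Websocket,
}

/// Hypothetical view of one `[[environments]]` entry after TOML deserialization.
struct RawEnvironment {
    kind: TransportKind,
    connect_timeout_sec: Option<u64>,
    initialize_timeout_sec: Option<u64>,
}

/// Fully resolved values handed to runtime transport code.
#[derive(Debug, PartialEq)]
struct ResolvedTimeouts {
    connect: Duration,
    initialize: Duration,
}

fn resolve_timeouts(raw: &RawEnvironment) -> Result<ResolvedTimeouts, String> {
    // The connect timeout is only meaningful for websocket transports.
    if raw.kind == TransportKind::Stdio && raw.connect_timeout_sec.is_some() {
        return Err("connect_timeout_sec only applies to websocket environments".to_string());
    }
    Ok(ResolvedTimeouts {
        connect: Duration::from_secs(raw.connect_timeout_sec.unwrap_or(DEFAULT_TIMEOUT_SECS)),
        initialize: Duration::from_secs(raw.initialize_timeout_sec.unwrap_or(DEFAULT_TIMEOUT_SECS)),
    })
}
```

Resolving defaults once at parse time keeps the transport layer free of `Option` handling and fallback constants.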

## Stack

- base: https://github.com/openai/codex/pull/21794

- this PR: configurable environment transport timeouts

## Validation

- `cd /Users/starr/code/codex-worktrees/exec-env-timeouts-config-20260508/codex-rs && just fmt`

- not run: tests

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

Support registry-backed remote executors end to end so downstream

services can resolve an executor id into an exec-server URL and make

that environment available to Codex without relying on the legacy cloud

environments flow.

## What changed

- switch remote executor registration to the executor registry bootstrap

contract

- allow named remote environments to be inserted into

`EnvironmentManager` at runtime

- add the experimental app-server RPC `environment/add` so initialized

experimental clients can register those remote environments for later

`thread/start` and `turn/start` selection

## Validation

Ran focused validation locally:

- `cargo test -p codex-exec-server environment_manager_`

- `cargo test -p codex-exec-server register_executor_posts_with_bearer_token_header`

- `cargo test -p codex-app-server-protocol`

## Why

The app-server daemon work needs two app-server behaviors to be safe

when lifecycle management is driven by a helper process:

- a readiness probe must not become the process-wide client identity

just because it connects first

- a graceful reload signal needs to keep draining active turns even if

it is delivered more than once

## What changed

- Treat `codex_app_server_daemon` initialization as a probe-only client

for process-global originator and user-agent suffix state.

- Distinguish forceable shutdown signals from graceful-only ones, and

treat Unix `SIGHUP` as graceful-only while leaving `SIGTERM` and Ctrl-C

forceable.

- Add regression coverage for daemon probe initialization and repeated

`SIGHUP` delivery while a turn is still running.
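The signal split can be pictured with a small state sketch. The enum and function names below are hypothetical, and one behavior is an assumption not confirmed by this description: that a forceable signal delivered while a drain is already in progress escalates to an immediate exit.

```rust
#[derive(Debug, Clone, Copy, PartialEq)]
enum ShutdownSignal {
    Sighup,
    Sigterm,
    CtrlC,
}

impl ShutdownSignal {
    /// `SIGHUP` is graceful-only; `SIGTERM` and Ctrl-C stay forceable.
    fn is_forceable(self) -> bool {
        !matches!(self, ShutdownSignal::Sighup)
    }
}

#[derive(Debug, PartialEq)]
enum ShutdownAction {
    StartDraining,
    KeepDraining,
    ForceExit,
}

/// Decides what to do with a signal given whether a drain is already running.
/// Assumption: a repeated forceable signal forces exit.
fn next_action(already_draining: bool, signal: ShutdownSignal) -> ShutdownAction {
    match (already_draining, signal.is_forceable()) {
        // The first signal of either kind begins a graceful drain.
        (false, _) => ShutdownAction::StartDraining,
        // A forceable signal during a drain escalates.
        (true, true) => ShutdownAction::ForceExit,
        // Repeated `SIGHUP` never interrupts active turns.
        (true, false) => ShutdownAction::KeepDraining,
    }
}
```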

## Testing

- `cargo test -p codex-app-server`

  - The new daemon-probe and repeated-`SIGHUP` coverage passed.

  - The run still failed in the existing `suite::conversation_summary::get_conversation_summary_by_relative_rollout_path_resolves_from_codex_home` and `suite::conversation_summary::get_conversation_summary_by_thread_id_reads_rollout` tests because their initialize handshake timed out.

- `cargo test -p codex-app-server --test all suite::conversation_summary::`

  - Reproduced the same two existing initialize-timeout failures in isolation.

## Summary

- make EnvironmentProvider::snapshot path-free and keep providers

focused on provider-owned remote environments

- let provider snapshots request local inclusion via include_local, with

environments.toml including local and CODEX_EXEC_SERVER_URL excluding

local

- move reserved local environment construction into EnvironmentManager

using ExecServerRuntimePaths

Follow-up to https://github.com/openai/codex/pull/20667

## Testing

- just fmt

- git diff --check

- devbox: bazel build --bes_backend= --bes_results_url= //codex-rs/exec-server:exec-server

- devbox: bazel test --bes_backend= --bes_results_url= //codex-rs/exec-server:exec-server-unit-tests

Co-authored-by: Codex <noreply@openai.com>

## Summary

Startup tool construction currently depends on connector directory

metadata for `tool_suggest` discoverables. On a cold directory cache,

that can put slow connector-directory requests on the blocking path even

though the tools array only needs directory data for install

suggestions, not for the live connector MCP tools themselves.

This PR keeps the discoverables path off that cold network fetch:

- read connector directory metadata from cache only when building

discoverable tools

- persist connector directory metadata to

`~/.codex/cache/codex_app_directory/<hash>.json` and use it to hydrate

the in-memory cache on later runs before the normal refresh path updates

it

- use connector-directory-specific cache naming to distinguish this

metadata cache from the separate Codex Apps tools-spec cache

This reduces first-turn startup work without changing how live connector

MCP tools are sourced. Longer term, directory-backed install suggestions

should move to a search-based flow so they no longer need to be inlined

into the tools prompt at all.

## Testing

- `cargo test -p codex-connectors`

- `cargo test -p codex-chatgpt`

- `cargo test -p codex-core request_plugin_install_is_available_without_search_tool_after_discovery_attempts`

- `cargo test -p codex-core tool_suggest_uses_connector_id_fallback_when_directory_cache_is_empty`

## Summary

In https://github.com/openai/codex/pull/21584, we disabled doctests for

crates that lack any doctests. We can enforce that property via

`cargo shear --deny-warnings`: crates that lack doctests will be flagged if

doctests are enabled, and crates with doctests will be flagged if

doctests are disabled.

A few additional notes:

- By adding `--deny-warnings`, `cargo shear` also flagged a number of

modules that were not reachable at all. Some of those have been removed.

- This PR removes a usage of `windows_modules!` (since `cargo shear` and

`rustfmt` couldn't see through it) in favor of simple

`#[cfg(target_os = "windows")]` attributes. As a consequence, many of these files exhibit churn

in this PR, since they weren't being formatted by `rustfmt` at all on

main.

- Again, to make the code more analyzable, this PR also removes some

usages of `#[path = "cwd_junction.rs"]` in favor of a more standard

module structure. The bin sidecar structure is still retained, but,

e.g., `windows-sandbox-rs/src/bin/command_runner.rs` was moved to

`windows-sandbox-rs/src/bin/command_runner/main.rs`, and so on.

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

The legacy `AfterToolUse` hook path was still wired through core tool

dispatch even though the hooks registry never populated any handlers for

it. The supported hook surface is `PostToolUse`, so the old

infrastructure was dead code on the hot path.

## What changed

- Removed the legacy `AfterToolUse` dispatch from `codex-core` tool

execution.

- Removed the unused legacy hook payload types and exports from

`codex-hooks`.

- Simplified legacy notify handling now that `HookEvent` only carries

`AfterAgent`.

## Validation

- `cargo test -p codex-hooks`

- `cargo test -p codex-core registry`

## Why

`apply_patch` is now a freeform/custom tool. Keeping the old

JSON/function-style registration and parsing path left another way for

models and tests to invoke `apply_patch`, which made the tool surface

harder to reason about.

## What changed

- Removed the `ApplyPatchToolType::Function` variant, JSON `apply_patch`

spec, and handler support for function payloads.

- Kept `apply_patch_tool_type = freeform` as the supported model

metadata path, including Bedrock catalog metadata.

- Migrated `apply_patch` tests and SSE fixtures to custom/freeform tool

calls.

## Verification

- `cargo test -p codex-tools -p codex-protocol -p codex-model-provider`

- `cargo test -p codex-core tools::handlers::apply_patch --lib`

- `cargo test -p codex-core --test all apply_patch_tool_executes_and_emits_patch_events`

- `cargo test -p codex-core --test all apply_patch_reports_parse_diagnostics`

- `cargo test -p codex-exec test_apply_patch_tool`

- `just fix -p codex-core`

- `just fix -p codex-tools -p codex-protocol -p codex-model-provider -p codex-exec`

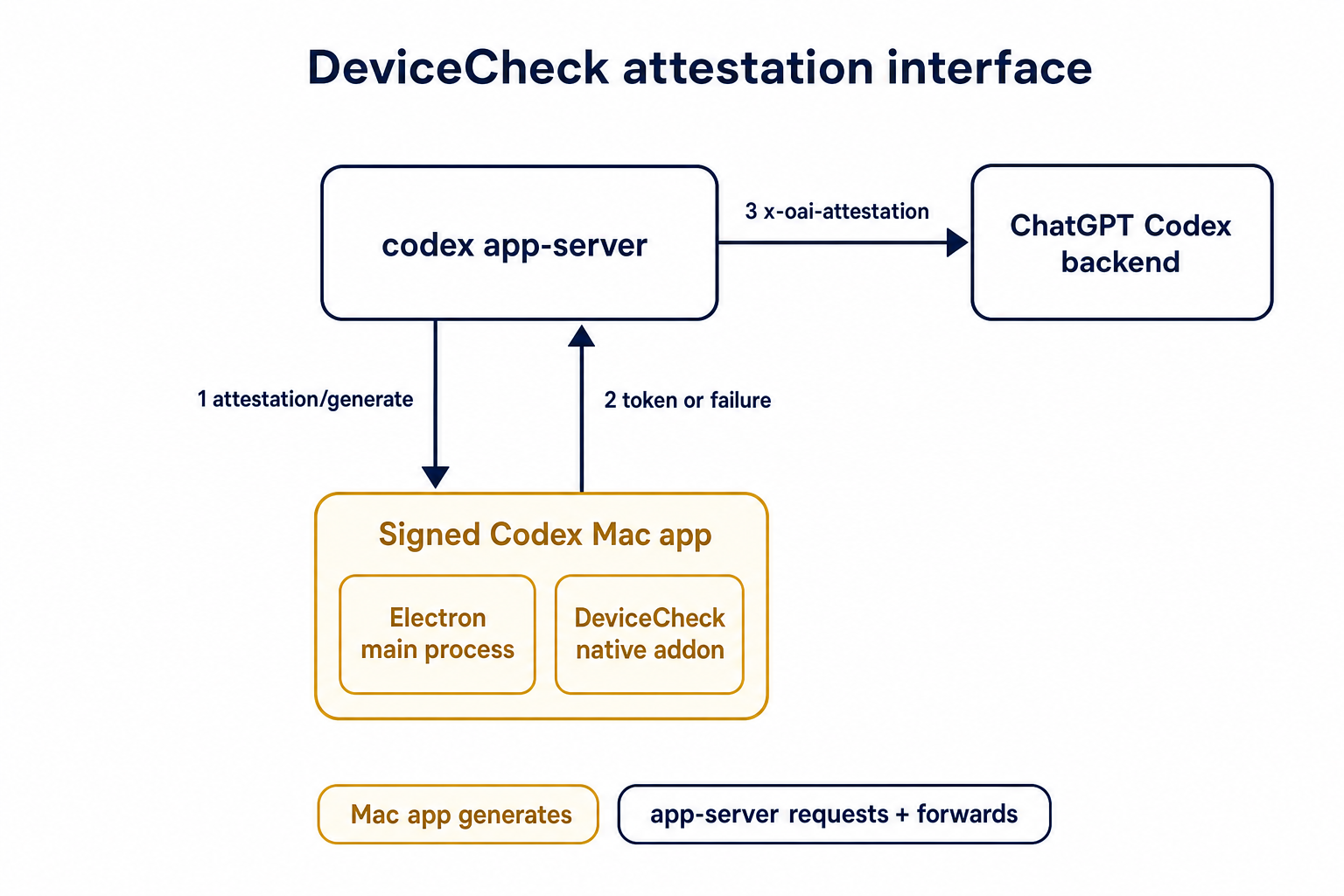

## Summary

TL;DR: teaches `codex-rs` / app-server to request a desktop-provided

attestation token and attach it as `x-oai-attestation` on the scoped

ChatGPT Codex request paths.

## Details

This PR teaches the Codex app-server runtime how to request and attach

an attestation token. It does not generate DeviceCheck tokens directly;

instead, it relies on the connected desktop app to advertise that it can

generate attestation and then asks that app for a fresh header value

when needed.

The flow is:

1. The Codex desktop app connects to app-server.

2. During `initialize`, the app can advertise that it supports

`requestAttestation`.

3. Before app-server calls selected ChatGPT Codex endpoints, it sends

the internal server request `attestation/generate` to the app.

4. app-server receives a pre-encoded header value back.

5. app-server forwards that value as `x-oai-attestation` on the scoped

outbound requests.

The code in this repo is mostly protocol and runtime plumbing: it adds

the app-server request/response shape, introduces an attestation

provider in core, wires that provider into Responses / compaction /

realtime setup paths, and covers the intended scoping with tests. The

signed macOS DeviceCheck generation remains owned by the desktop app PR.

## Related PR

- Codex desktop app implementation:

https://github.com/openai/openai/pull/878649

## Validation

<details>

<summary>Tests run</summary>

```sh
cargo test -p codex-app-server-protocol
cargo test -p codex-core attestation --lib
cargo test -p codex-app-server --lib attestation
```

Also ran:

```sh
just fix -p codex-core
just fix -p codex-app-server
just fix -p codex-app-server-protocol
just fmt
just write-app-server-schema
```

</details>

<details>

<summary>E2E DeviceCheck validation</summary>

First validated the signed desktop app boundary directly: launched a

packaged signed `Codex.app`, sent `attestation/generate`, decoded the

returned `v1.` attestation header, and validated the extracted

DeviceCheck token with `personal/jm/verify_devicecheck_token.py` using

bundle ID `com.openai.codex`. Apple returned `status_code: 200` and

`is_ok: true`.

Then ran the fuller app + app-server flow. The packaged `Codex.app`

launched a current-branch app-server via `CODEX_CLI_PATH`, and a local

MITM proxy intercepted outbound `chatgpt.com` traffic. The app-server

requested `attestation/generate` from the real Electron app process, and

the intercepted `/backend-api/codex/responses` traffic included

`x-oai-attestation` on both routes:

```text
GET /backend-api/codex/responses Upgrade: websocket x-oai-attestation: present
POST /backend-api/codex/responses Upgrade: none x-oai-attestation: present
```

The captured header decoded to a DeviceCheck token that also validated

with Apple for `com.openai.codex` (`status_code: 200`, `is_ok: true`,

team `2DC432GLL2`).

</details>

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

`ToolName::display()` made it too easy to flatten tool identity and

accidentally compare rendered strings. Tool identity should stay

structural until a legacy string boundary actually requires the

flattened spelling.

## What

- Removes `ToolName::display()` and relies on the existing `Display`

impl for messages and errors.

- Adds structural ordering for `ToolName` and uses it for

sorting/deduping deferred tools.

- Carries `ToolName` through tool/sandbox plumbing, flattening only at

legacy boundaries such as hook payloads, telemetry tags, and Responses

tool names.

- Updates MCP normalization tests to assert `ToolName` structure instead

of rendered strings.

## Testing

- `cargo test -p codex-mcp test_normalize_tools`

- `cargo test -p codex-core unavailable_tool`

- `just fix -p codex-protocol`

- `just fix -p codex-mcp`

- `just fix -p codex-core`

## Why

Codex assisted-code attribution needs a client-side accepted-code source

that does not upload raw code. This adds a hash-only analytics event

derived from the turn diff so downstream attribution can compare

accepted Codex lines against commit or PR diffs.

## What Changed

- Parse accepted/effective added lines from the final turn diff and emit

`codex_accepted_line_fingerprints` analytics.

- Hash repo, path, and normalized line content before upload; raw code

and raw diffs are not included in the event.

- Chunk large fingerprint payloads and send accepted-line fingerprint

events in isolated requests while preserving normal batching for other

analytics events.

- Canonicalize Git remote URLs before repo hashing so SSH/HTTPS GitHub

remotes join to the same repo hash.

- Add parser coverage for unified diff hunk lines that look like `+++`

or `---` file headers.
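To illustrate the `+++`/`---` parser case, here is a minimal sketch that tracks hunk line counts so that content lines resembling file headers are still classified correctly. It is an assumption about one workable approach, not the parser in `codex-analytics`; `parse_hunk_header` and `added_lines` are hypothetical names, and hashing is left out.

```rust
/// Parses "@@ -a,b +c,d @@" into (old_len, new_len); an omitted length means 1.
fn parse_hunk_header(line: &str) -> Option<(usize, usize)> {
    let rest = line.strip_prefix("@@ -")?;
    let (ranges, _) = rest.split_once(" @@")?;
    let (old, new) = ranges.split_once(" +")?;
    let len = |range: &str| -> Option<usize> {
        match range.split_once(',') {
            Some((_, n)) => n.parse().ok(),
            None => Some(1),
        }
    };
    Some((len(old)?, len(new)?))
}

/// Collects added line contents from a unified diff.
fn added_lines(diff: &str) -> Vec<String> {
    let mut added = Vec::new();
    let (mut old_left, mut new_left) = (0usize, 0usize);
    for line in diff.lines() {
        if old_left == 0 && new_left == 0 {
            // Outside a hunk: file headers are skipped, hunk headers set the counters.
            if let Some((old_len, new_len)) = parse_hunk_header(line) {
                old_left = old_len;
                new_left = new_len;
            }
            continue;
        }
        // Inside a hunk: the first character alone decides the line kind,
        // so "+++ text" is an added line whose content is "++ text".
        if let Some(text) = line.strip_prefix('+') {
            added.push(text.to_string());
            new_left = new_left.saturating_sub(1);
        } else if line.starts_with('-') {
            old_left = old_left.saturating_sub(1);
        } else if line.starts_with('\\') {
            // "\ No newline at end of file" counts toward neither side.
        } else {
            // Context line: present in both old and new files.
            old_left = old_left.saturating_sub(1);
            new_left = new_left.saturating_sub(1);
        }
    }
    added
}
```

Counting down the lengths from the hunk header is what lets the parser tell a real file header (seen outside a hunk) from an added or removed line that merely starts with the same characters.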

## Verification

- `cargo test -p codex-analytics`

- `cargo test -p codex-git-utils canonicalize_git_remote_url`

- `just fix -p codex-analytics`

- `just bazel-lock-check`

- `git diff --check`

## Why

The earlier PRs add stdio transport support and the config-backed

environment provider, but the feature remains inert until normal Codex

entrypoints construct `EnvironmentManager` with enough context to

discover `CODEX_HOME/environments.toml`. This final stack PR activates

the provider while preserving the legacy `CODEX_EXEC_SERVER_URL`

fallback when no environments file exists.

**Stack position:** this is PR 5 of 5. It is the product wiring PR that

activates the configured environment provider added in PR 4.

## What Changed

- Thread `codex_home` into `EnvironmentManagerArgs`.

- Change `EnvironmentManager::new(...)` to load the provider from

`CODEX_HOME`.

- Preserve legacy behavior by falling back to

`DefaultEnvironmentProvider::from_env()` when `environments.toml` is

absent.

- Make `environments.toml`-backed managers start new threads with all

configured environments, default first, while keeping the legacy env-var

path single-default.

- Update the app-server, TUI, exec, MCP server, connector, prompt-debug,

and thread-manager-sample callsites to pass `codex_home` and handle

provider-loading errors.

## Self-Review Notes

- The multi-environment startup path is intentionally tied to the

`environments.toml` provider. Using `>1` configured environment as the

only signal would also expand the legacy `CODEX_EXEC_SERVER_URL`

provider because it keeps `local` addressable alongside `remote`.

- The startup environment list is still derived inside

`EnvironmentManager`; the provider only says whether its snapshot should

start new threads with all configured environments.

- The thread-manager sample was updated to pass the current

`ThreadManager::new(...)` installation id argument so the stack compiles

under Bazel.

## Stack

- 1. https://github.com/openai/codex/pull/20663 - Add stdio exec-server

listener

- 2. https://github.com/openai/codex/pull/20664 - Add stdio exec-server

client transport

- 3. https://github.com/openai/codex/pull/20665 - Make environment

providers own default selection

- 4. https://github.com/openai/codex/pull/20666 - Add CODEX_HOME

environments TOML provider

- **5. This PR:** https://github.com/openai/codex/pull/20667 - Load

configured environments from CODEX_HOME

Split from original draft: https://github.com/openai/codex/pull/20508

## Validation

- `just fmt`

- `git diff --check`

- `bazel build --config=remote --strategy=remote --remote_download_toplevel //codex-rs/thread-manager-sample:codex-thread-manager-sample`

- `bazel test --config=remote --strategy=remote --remote_download_toplevel //codex-rs/exec-server:exec-server-unit-tests`

- `bazel test --config=remote --strategy=remote --remote_download_toplevel --test_sharding_strategy=disabled --test_arg=default_thread_environment_selections_use_manager_default_id //codex-rs/core:core-unit-tests`

- `bazel test --config=remote --strategy=remote --remote_download_toplevel --test_sharding_strategy=disabled --test_arg=start_thread_uses_all_default_environments_from_codex_home //codex-rs/core:core-unit-tests`

## Documentation

This activates `CODEX_HOME/environments.toml`; user-facing documentation

should be added before this stack is treated as a documented public

workflow.

---------

Co-authored-by: Codex <noreply@openai.com>

* Pass installation ID for storage on enrollments server for

deduping/grouping multiple appservers per installation

* Pass installation ID in remoteControl/status/changed events

## Why

`/fast` was wired as a one-off slash command even though model metadata

now exposes service tiers as catalog data. That meant adding another

tier, such as a slower/cheaper tier, would require more hardcoded TUI

plumbing instead of letting the model catalog drive the available

commands.

This change makes service-tier commands data-driven: each advertised

`service_tiers` entry becomes a `/name` command using the catalog

description, while the request path sends the tier `id` only when the

selected model supports it.

## What Changed

- Removed the hardcoded `/fast` slash-command variant and introduced

dynamic service-tier command items in the composer and command popup.

- Added toggle behavior for service-tier commands: invoking `/name`

selects that tier, and invoking it again clears the selection.

- Preserved the existing Fast-mode keybinding/status affordances by

resolving the current model tier whose name is `fast`, while still

sending the tier request value such as `priority`.

- Persisted service-tier selections as raw request strings so non-fast

tiers can round-trip through config.

- Updated the Bedrock catalog entry to advertise fast support through

`service_tiers` with `id: "priority"` and `name: "fast"`.

- Added defensive filtering in core so unsupported selected service

tiers are omitted from `/responses` requests.
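The toggle and the defensive filter can be sketched as two small pure functions. Both names are hypothetical, and representing the selection as an optional request string is a simplification of the real TUI and core state.

```rust
/// Invoking `/name` selects that tier; invoking it again clears the selection.
/// `invoked` is the tier's request id (for example `priority`), not its command name.
fn toggle_service_tier(current: Option<&str>, invoked: &str) -> Option<String> {
    if current == Some(invoked) {
        None
    } else {
        Some(invoked.to_string())
    }
}

/// Drops the selected tier when the current model does not advertise it,
/// so unsupported tiers never reach the request.
fn tier_for_request<'a>(selected: Option<&'a str>, supported: &[&str]) -> Option<&'a str> {
    selected.filter(|tier| supported.iter().any(|s| *s == *tier))
}
```

Keeping the filter on the request path means a persisted selection survives a switch to a model without that tier, and simply takes effect again when a supporting model is chosen.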

## Validation

- Added/updated coverage for dynamic service-tier slash command lookup,

popup descriptions, composer dispatch, TUI fast toggling, and

unsupported-tier omission in core request construction.

- Local tests were not run per request.

---------

Co-authored-by: Codex <noreply@openai.com>

Since https://github.com/openai/codex/pull/21255, `rust-ci-full` has

been failing due to a missing `bwrap`.

```
thread 'main' panicked at linux-sandbox/src/launcher.rs:43:13:
bubblewrap is unavailable: no system bwrap was found on PATH and no bundled codex-resources/bwrap binary was found next to the Codex executable
```

Since the happy path is now to use the system binary, let's ensure

that's installed.

8d51826631

was necessary for the `bwrap` executable to be discoverable when the

working directory is `/`.

I ran `rust-ci-full` at

https://github.com/openai/codex/actions/runs/25528074506

---------

Co-authored-by: Codex <noreply@openai.com>

## Summary

1. Removes the broad `DARWIN_USER_CACHE_DIR` write rule from the macOS

Seatbelt network policy.

2. Removes the now unused policy parameter plumbing for that cache path.

3. Adds sandboxing coverage that keeps `com.apple.trustd.agent` for TLS

while rejecting the cache write rule.

## Why

This closes the exact cache poisoning boundary. The earlier `gh` TLS

issue is now covered by trustd access, so the cache write is no longer

needed.

## Validation

1. Rust formatting passed.

2. The sandboxing crate tests passed.

3. Local macOS Seatbelt repro with patched policy passed. `gh api`

returned `21442` without the cache write rule.

Provider initialization installs process-global OTEL state, so invalid

trace metadata needs to fail before setup begins.

Use the same span attribute validator as config loading when traces are

exported so provider startup enforces the config contract without

duplicating validation logic.

## Why

Some consumers expect conventional hyphenated HTTP headers. Codex

already sends the session and thread IDs on outbound Responses requests,

but it only uses the underscore spellings today, which makes those IDs

harder to consume in systems that normalize or reject underscore header

names.

Full context here:

https://openai.slack.com/archives/C08KCGLSPSQ/p1778248578422369

## What changed

- `build_session_headers` now emits both `session_id` and `session-id`

when a session ID is present.

- It does the same for `thread_id` and `thread-id`.

- Added regression coverage in `codex-api/tests/clients.rs` and

`core/tests/suite/client.rs` so both the lower-level client tests and

the end-to-end request tests assert the two header spellings are

present.
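A simplified sketch of the dual-spelling behavior. The function name matches the one mentioned above, but the signature and return type here are assumptions chosen to keep the example dependency-free; the real function likely builds an HTTP header map.

```rust
/// Emits each ID under both the underscore and the hyphen spelling.
/// Hypothetical signature: returns plain (name, value) pairs.
fn build_session_headers(
    session_id: Option<&str>,
    thread_id: Option<&str>,
) -> Vec<(&'static str, String)> {
    let mut headers = Vec::new();
    if let Some(id) = session_id {
        // Legacy underscore spelling, kept for existing consumers.
        headers.push(("session_id", id.to_string()));
        // Conventional hyphenated spelling.
        headers.push(("session-id", id.to_string()));
    }
    if let Some(id) = thread_id {
        headers.push(("thread_id", id.to_string()));
        headers.push(("thread-id", id.to_string()));
    }
    headers
}
```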

## Test plan

- Added header assertions in `codex-api/tests/clients.rs`.

- Added request-header assertions in `core/tests/suite/client.rs` for

both the `/v1/responses` and `/api/codex/responses` request paths.

Fixes #21665.

## Why

The TUI status line is the right place for compact, glanceable session

state. The original request was motivated by the need to see the active

permission posture without opening `/permissions` or `/status`,

especially when switching between safer and more permissive modes during

a session.

This PR intentionally separates `permissions` from `approval-mode`

instead of combining them into one status-line item. They answer related

but different questions: `permissions` describes the active

sandbox/profile shape, while `approval-mode` describes how command

approvals are handled. Keeping them separate makes each item

independently configurable and avoids long combined labels in an already

space-constrained status line.

The tradeoff is that users who want the full permission posture in the

status line need to opt into both items. In exchange, users can show

only the sandbox/profile label, only the approval behavior, or both, and

named user-defined profiles remain concise. Non-standard permission

shapes are rendered as `Custom permissions` rather than trying to

squeeze detailed profile contents into the status line; `/status`

remains the fuller explanatory surface.

## What changed

- Added a configurable `permissions` status-line item.

- Added a separate `approval-mode` status-line item, with `approval` as

an alias.

- Render standard permission states compactly as `Read Only`,

`Workspace`, or `Full Access`.

- Preserve user-defined permission profile names directly in the status

line.

- Render unnamed non-standard permission shapes as `Custom permissions`.

- Refresh status surfaces when `/permissions` updates the permission

profile, approval policy, or approval reviewer.

- Updated status-line preview snapshot coverage for the new items.

## Verification

- `cargo test -p codex-tui status_permissions_non_default_workspace_write_uses_workspace_label`

- `cargo test -p codex-tui permissions_selection_emits_history_cell_when_selection_changes`

- `cargo insta pending-snapshots --manifest-path tui/Cargo.toml`

## Why

The configurable `/statusline` and terminal title can display session

token usage. That display was using the raw total token count, which

includes cached input tokens, so it significantly overstated the token

usage compared with the blended token count shown elsewhere (in

`/status` and tracked in goals). This inconsistency resulted in user

confusion. We don't want to report cached tokens because we don't charge

for them and they are somewhat of an implementation detail that users

shouldn't care about.

## What changed

- Use `TokenUsage::blended_total()` for the `used-tokens` status surface

item so cached input is excluded.

- Add a brief comment to `tokens_in_context_window()` clarifying that it

returns raw `total_tokens`, whose meaning depends on whether the caller

has last-turn or accumulated usage.
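For readers unfamiliar with the two counts, a small sketch of the difference. The struct below is a hypothetical mirror of `TokenUsage` with assumed field names, and the formula (non-cached input plus output) is an assumption about how the blended total excludes cached input.

```rust
/// Hypothetical mirror of the usage record; field names are assumptions.
#[derive(Debug, Default, Clone, Copy)]
struct TokenUsage {
    input_tokens: i64,
    cached_input_tokens: i64,
    output_tokens: i64,
    total_tokens: i64,
}

impl TokenUsage {
    /// Input that was not served from cache, plus output.
    fn blended_total(&self) -> i64 {
        let non_cached_input = (self.input_tokens - self.cached_input_tokens).max(0);
        non_cached_input + self.output_tokens.max(0)
    }

    /// Raw total; its meaning depends on whether the caller holds
    /// last-turn or accumulated usage.
    fn tokens_in_context_window(&self) -> i64 {
        self.total_tokens
    }
}
```

With 1,000 input tokens of which 800 were cached and 50 output tokens, the raw total is 1,050 while the blended total is 250, which shows how far the two status surfaces could drift apart.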

## Summary

- enable `apply_patch_freeform` by default in the feature registry

## Why

- make the freeform `apply_patch` tool available by default when model

metadata does not explicitly opt into another mode

## Validation

- `just fmt`

- did not run tests

---------

Co-authored-by: Codex <noreply@openai.com>

## Why

`service_tier` in `config.toml` and profile config was still modeled as

an enum, which blocked newer or experimental service tier IDs even

though the runtime paths already carry string IDs.

This change makes the TOML-facing config accept string service tier IDs

directly while keeping the legacy `fast` alias behavior by normalizing

it to the request value `priority`.

## What Changed

- change the TOML-facing `service_tier` fields in global and profile

config to `Option<String>`

- keep config-load normalization so legacy `fast` still resolves to

`priority`

- persist resolved service tier strings directly in config locks so

arbitrary IDs round-trip cleanly

- regenerate the config schema and add config coverage for arbitrary

string IDs plus legacy `fast` normalization
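The config-load normalization amounts to a one-line mapping. This is a sketch with a hypothetical function name; only the `fast` to `priority` alias is taken from this PR.

```rust
/// Normalizes the TOML-facing `service_tier` value at config load.
/// The legacy alias `fast` resolves to the request value `priority`;
/// every other string ID passes through unchanged.
fn normalize_service_tier(raw: Option<String>) -> Option<String> {
    raw.map(|tier| {
        if tier == "fast" {
            "priority".to_string()
        } else {
            tier
        }
    })
}
```

Because unknown IDs pass through as-is, newer or experimental tiers work without a config schema change.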

## Verification

- added config tests for arbitrary string service tiers and legacy

`fast` normalization

- ran `just write-config-schema`

- CI

---------

Co-authored-by: Codex <noreply@openai.com>